Overview

BI charts play a pivotal role in data visualization, transforming complex datasets into clear visuals. This transformation enables organizations to swiftly analyze trends and make informed decisions. How effectively are you leveraging these tools? The significance of BI charts is underscored by their ability to enhance comprehension and drive strategic initiatives. Particularly in Power BI, tailored solutions can profoundly improve data representation and operational efficiency. By adopting these practices, organizations can elevate their data-driven strategies and foster a culture of informed decision-making.

Introduction

In the realm of business intelligence, effective data visualization is paramount for making informed decisions. Business Intelligence (BI) charts emerge as essential instruments that transform complex datasets into accessible visuals. These charts simplify information for stakeholders and highlight trends and insights that can drive strategic actions.

As organizations increasingly rely on data to navigate market complexities, understanding the different types of charts available—such as waterfall, bar, and line charts—becomes crucial.

This article delves into the significance of BI charts, explores their various types, and provides practical guidance on creating and customizing these visualizations to enhance clarity and impact. With the right approach, businesses can leverage these tools to unlock actionable insights and foster growth in an ever-evolving data landscape.

What Are BI Charts and Their Importance in Data Visualization?

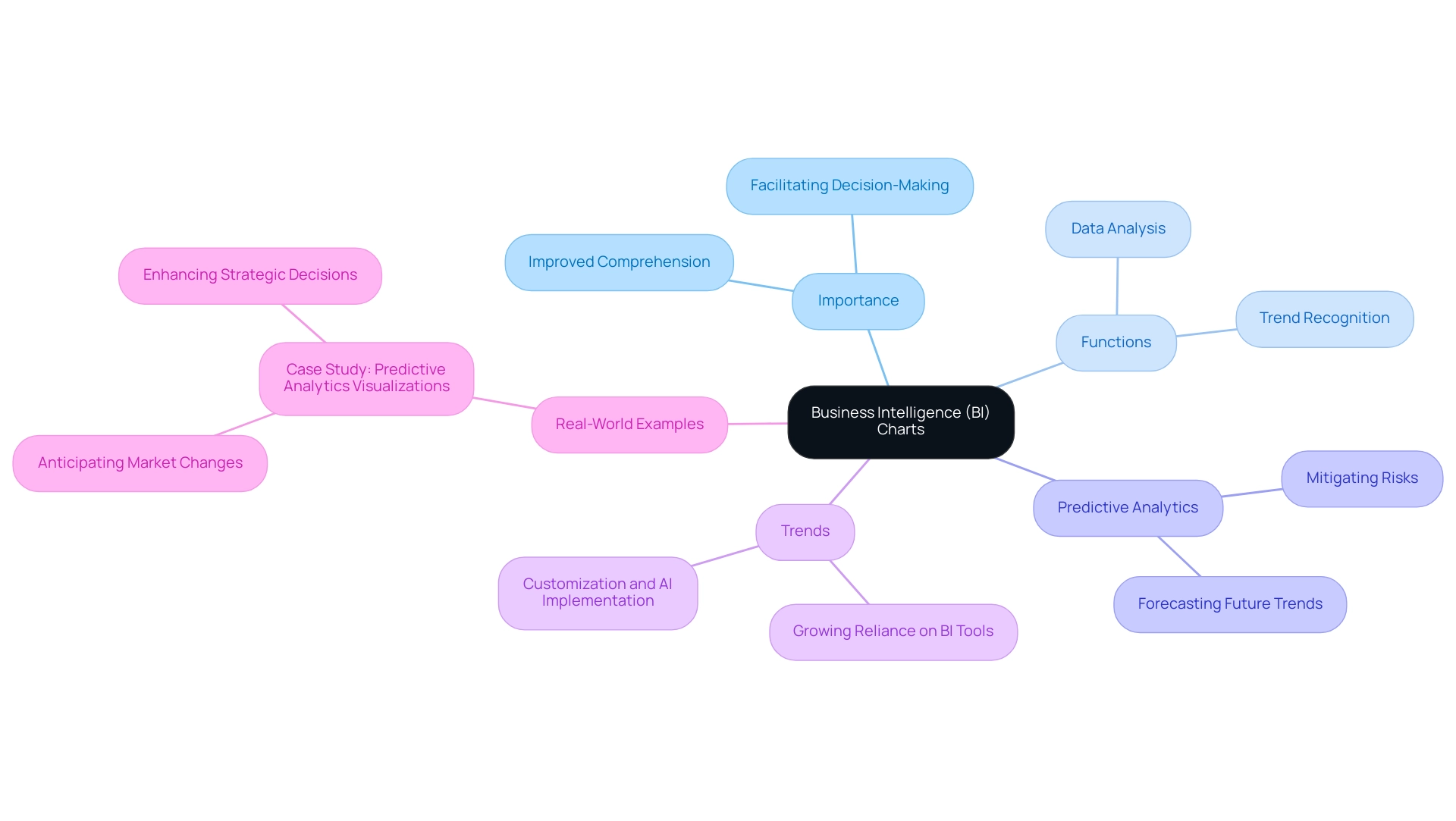

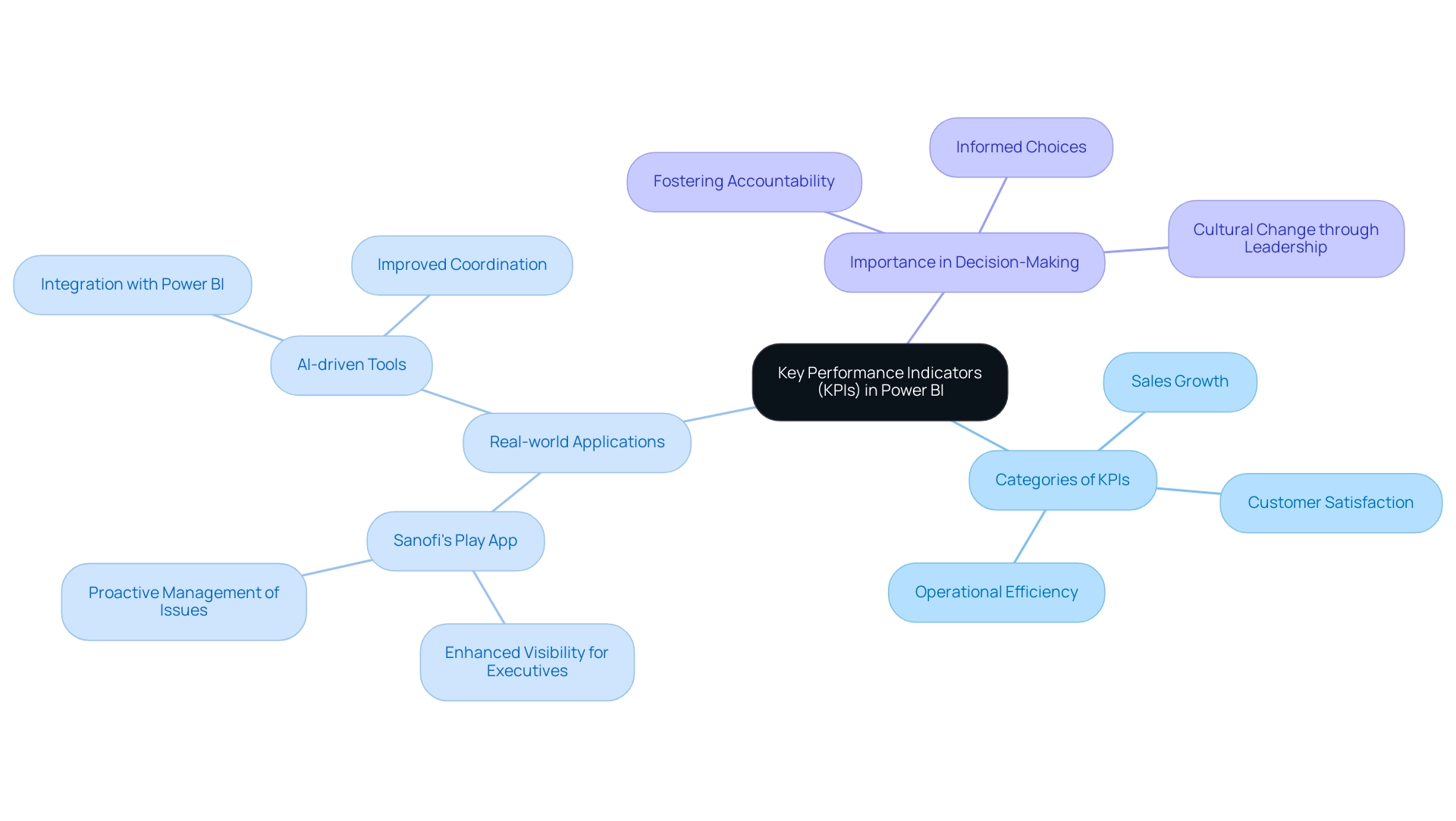

Business Intelligence (BI) visuals are essential tools that empower organizations to analyze and interpret complex datasets with precision. By transforming unprocessed information into clear visuals, these representations streamline details, enabling stakeholders to recognize insights and trends swiftly. In the context of Power BI, BI visuals not only enhance the communication of insights but also facilitate rapid, informed decision-making.

The significance of BI graphs in information representation lies in their ability to improve comprehension and promote strategic initiatives within organizations. For instance, predictive analytics representations allow companies to forecast future trends based on historical data, enabling them to anticipate market shifts and mitigate risks. This capability is vital in today’s data-rich environment, where organizations must navigate complexities to maintain a competitive edge.

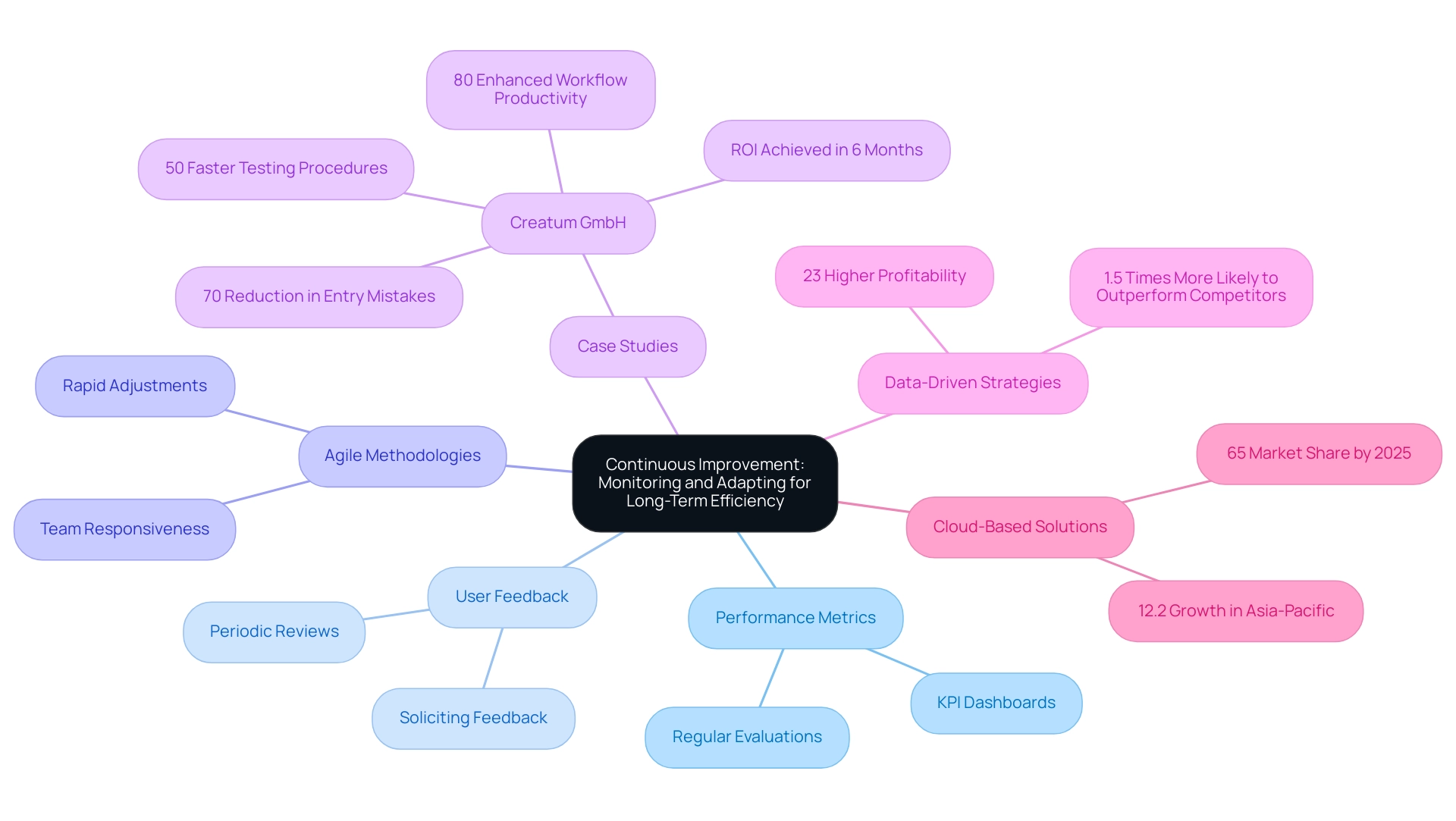

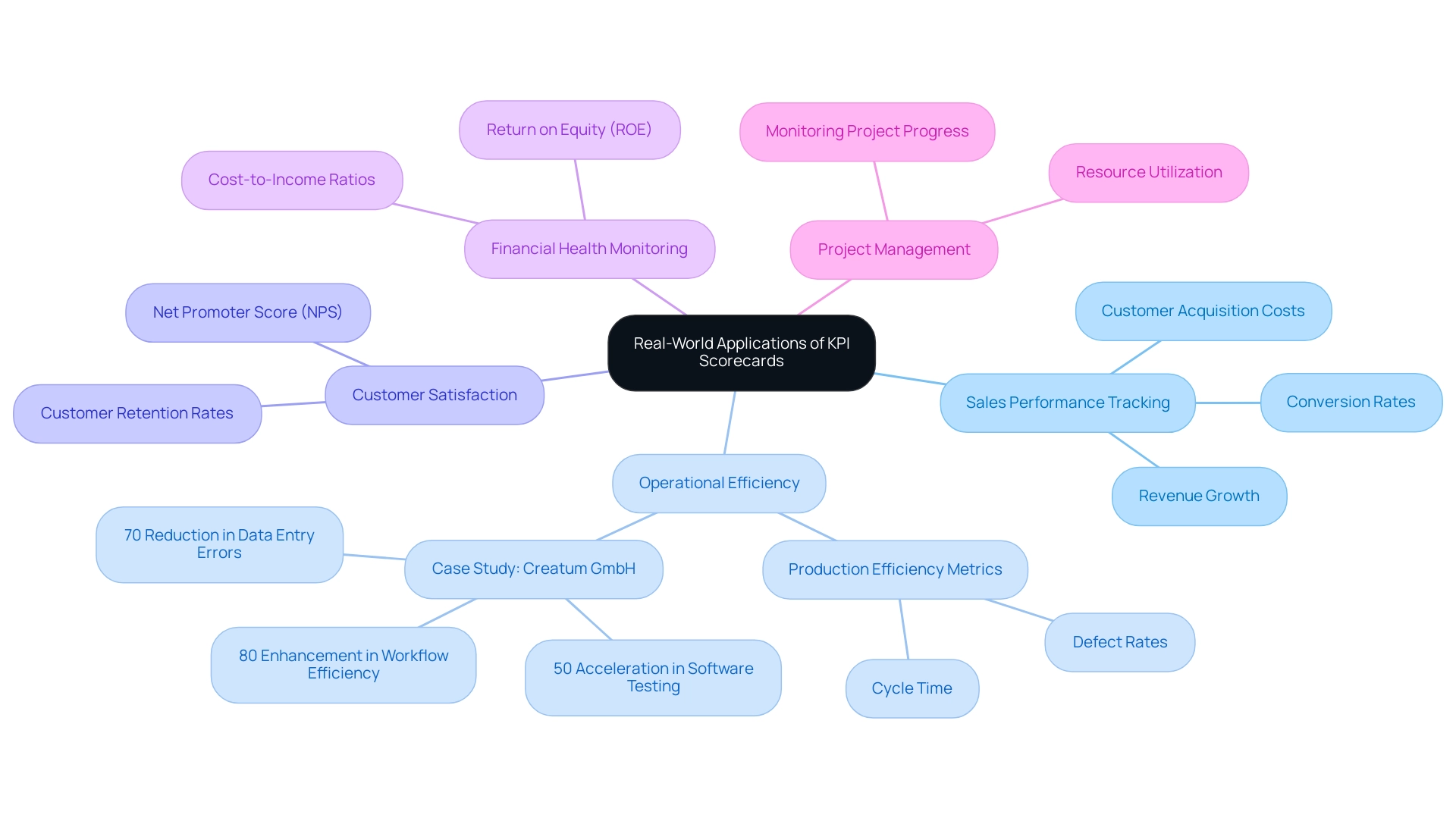

Recent trends indicate a growing reliance on BI charts, with 2025 poised to be a pivotal year for the adoption of these tools. As organizations increasingly recognize the value of visualization, the demand for tailored solutions that enhance quality and simplify AI implementation has surged. This aligns with Creatum GmbH’s unique value proposition, which emphasizes customized AI solutions that enable businesses to leverage information effectively.

Moreover, continuous learning is crucial in the realm of business intelligence. Engaging in initiatives like MDS@Rice equips individuals with the latest skills and knowledge, ensuring they stay ahead in the data-driven landscape.

Real-world examples underscore the impact of BI charts on decision-making. Organizations utilizing these graphical tools, as highlighted in the case study on predictive analytics representations, have reported enhanced clarity in their analyses, leading to more strategic and informed decisions. As Mussarat Nosheen aptly states, “Information representation is an essential element of contemporary business intelligence, allowing organizations to convert intricate information into actionable insights.”

This sentiment reflects a growing consensus on the vital role BI visuals play in shaping effective business strategies.

Exploring Different Types of Charts in Power BI

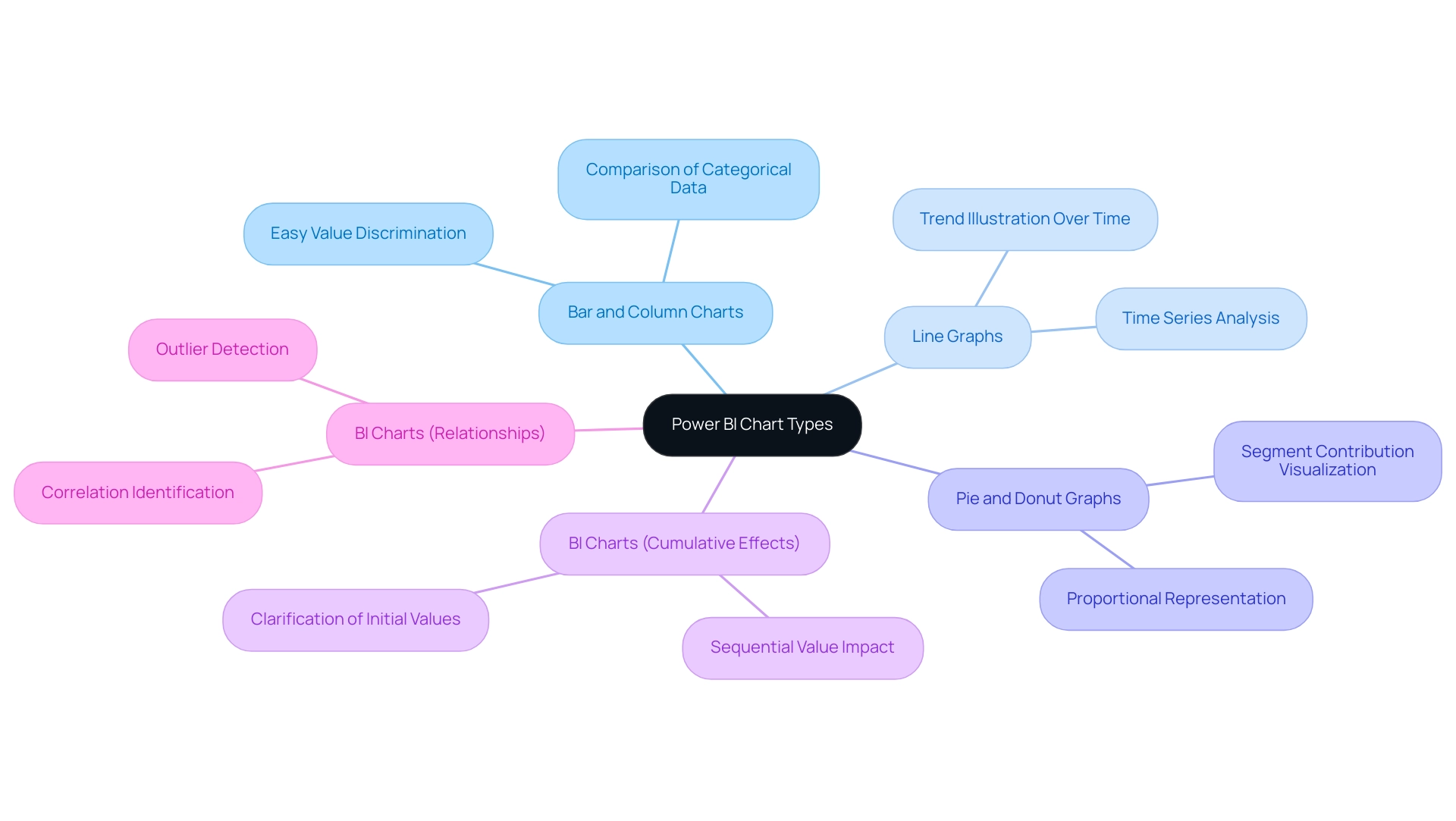

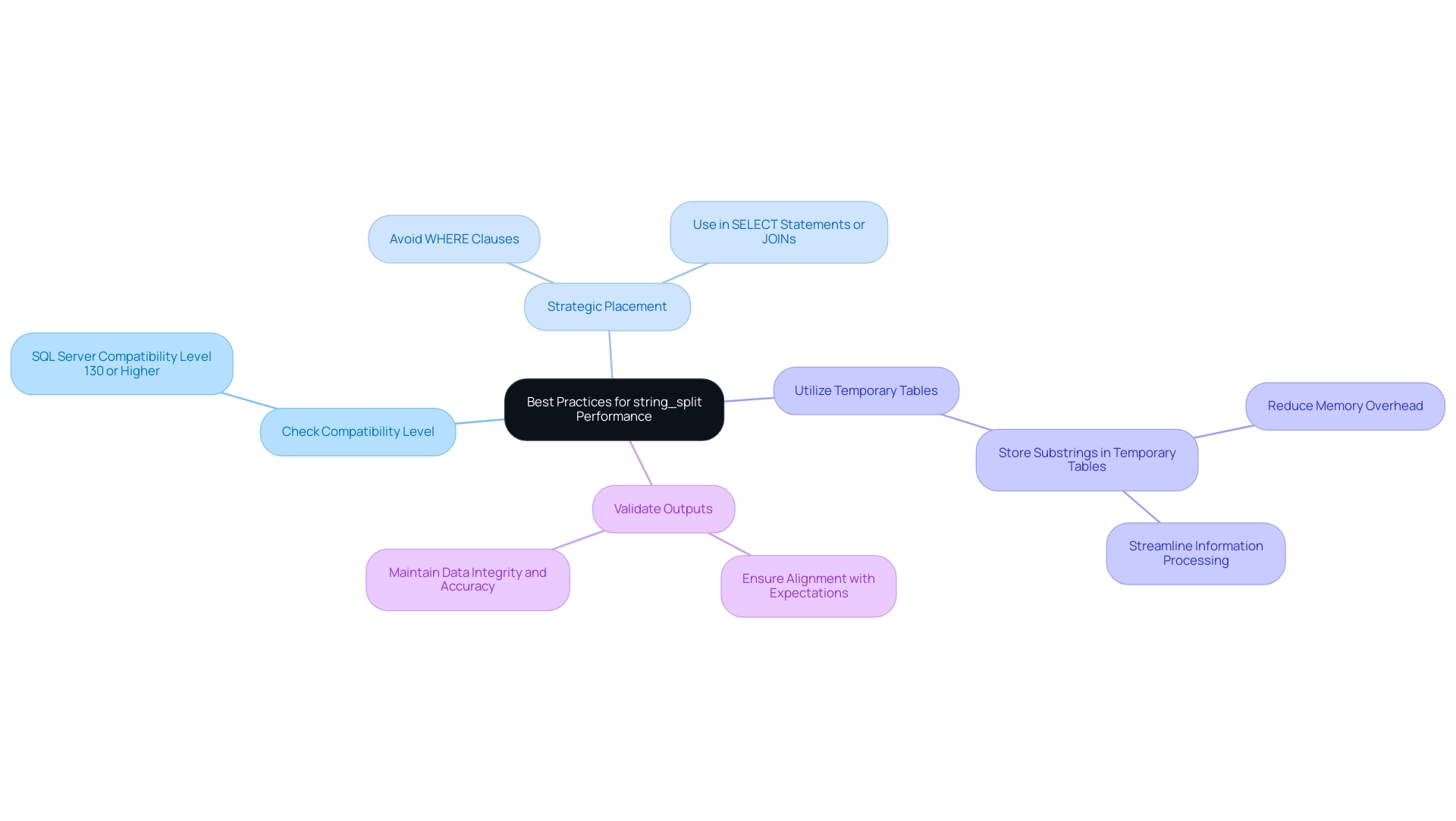

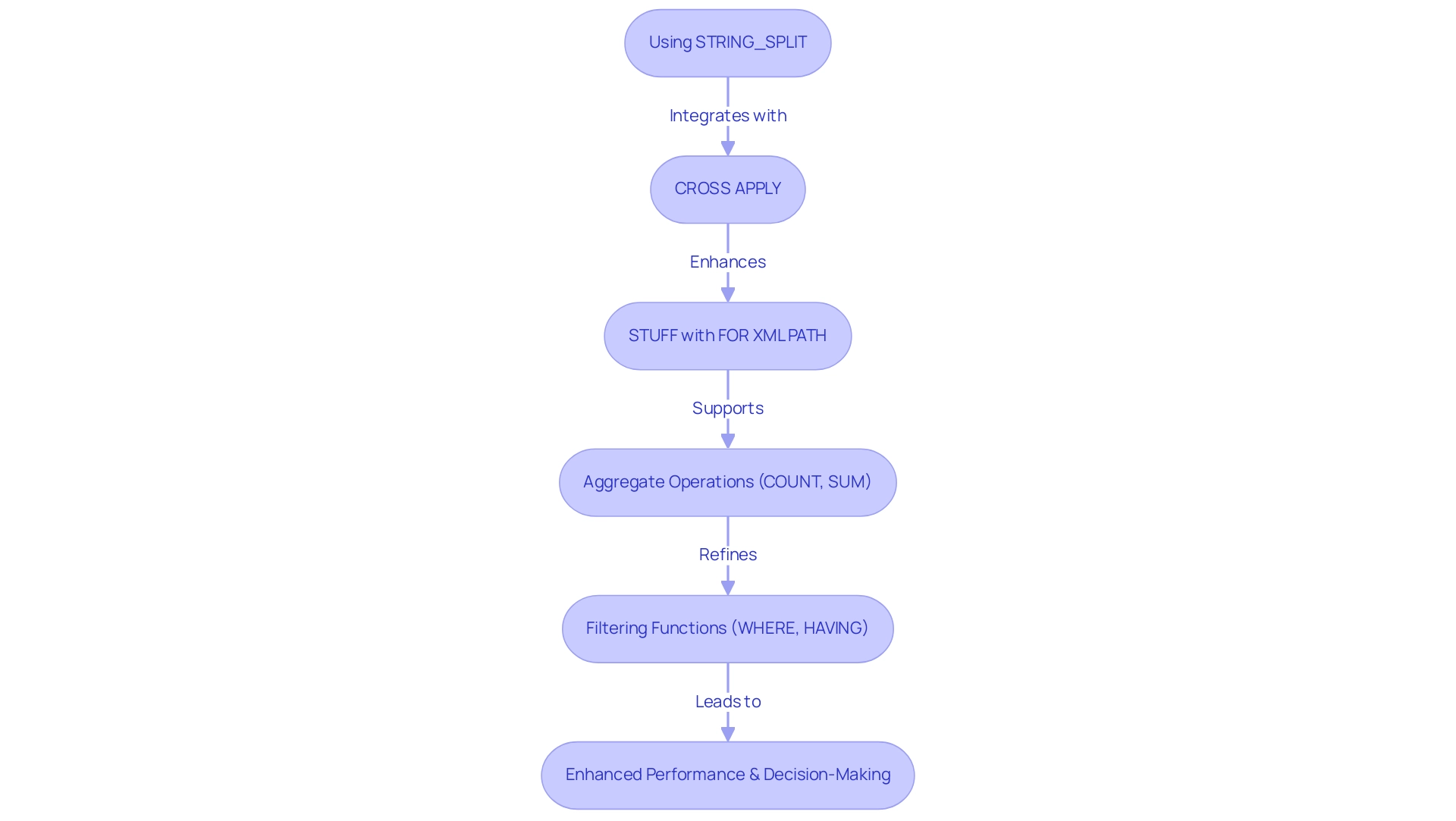

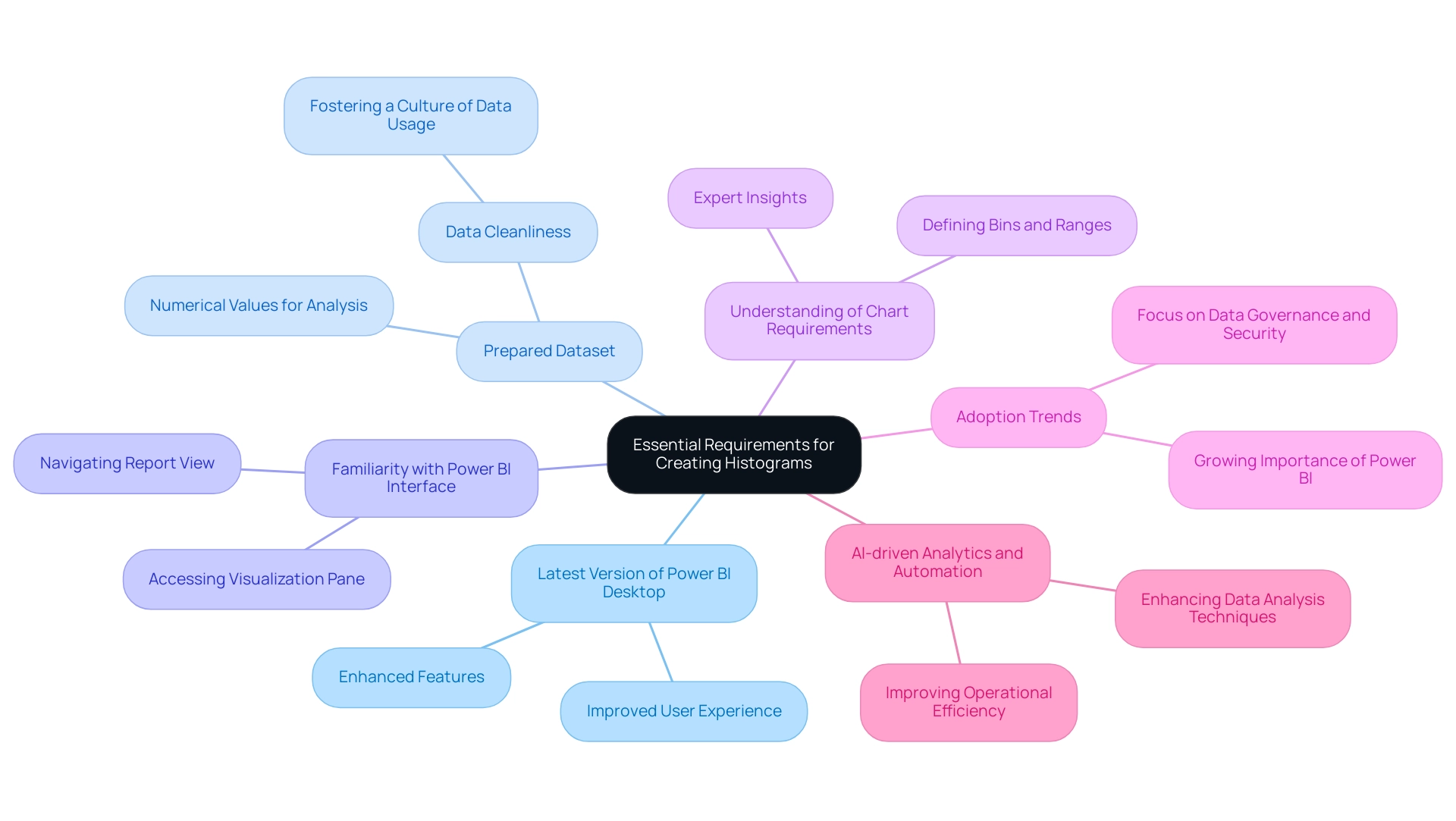

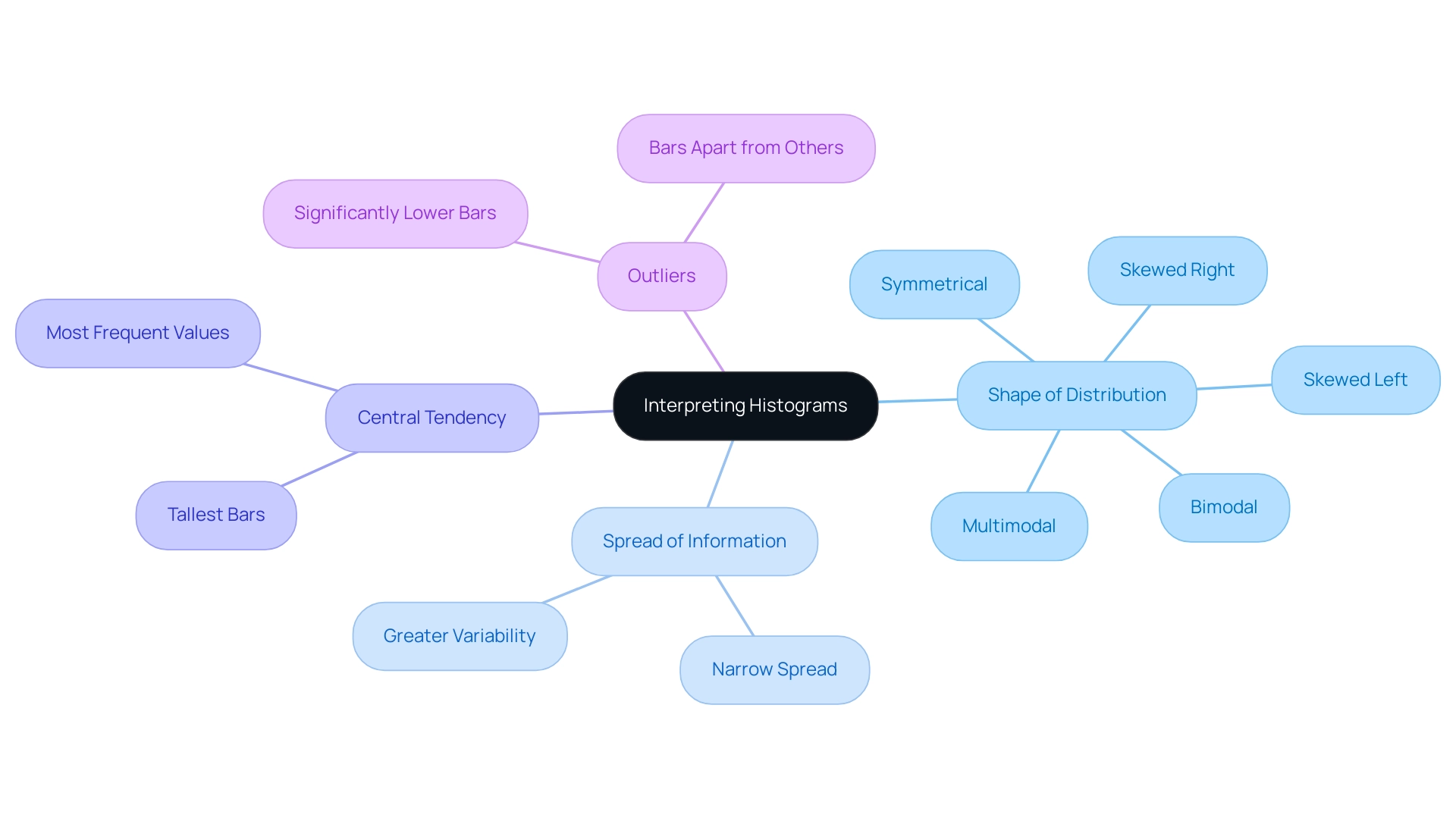

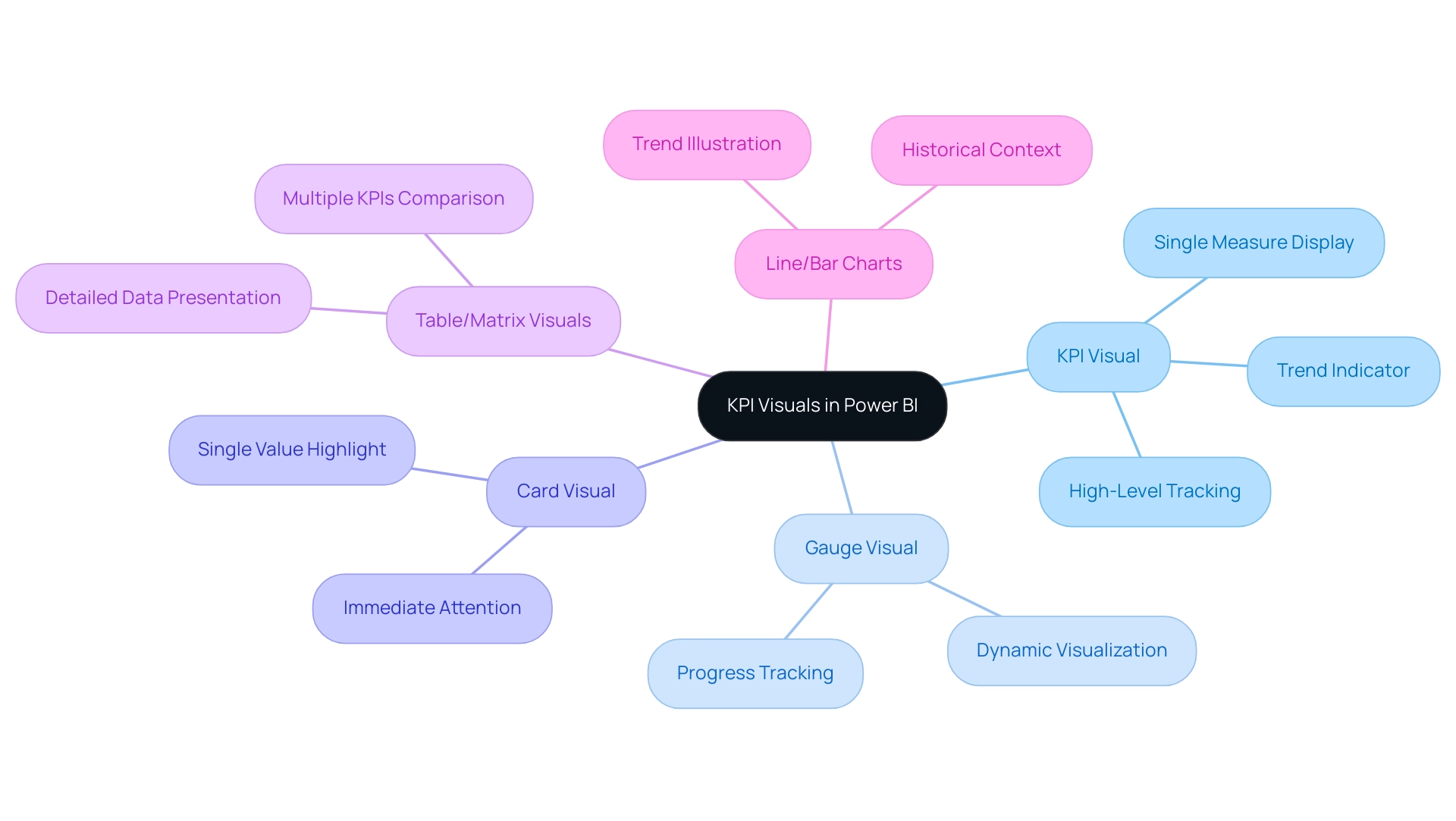

Power BI offers a wide variety of BI charts, each meticulously designed to meet specific visualization requirements. Understanding these options is crucial for effectively conveying insights, especially in light of common challenges such as time-consuming report creation, inconsistencies, and the lack of actionable guidance that can erode trust in the data presented. The primary chart types include:

- Bar and Column Charts: Excellent for comparing categorical data, these charts allow users to easily discern differences in values across categories.

- Line Graphs: Ideal for illustrating trends over time, line graphs enable users to track changes and patterns in data, making them essential for time series analysis.

- Pie and Donut Graphs: Effective for displaying proportions of a whole, these visuals show how individual segments contribute to the total.

- Waterfall Charts: Particularly useful for visualizing the cumulative effects of sequentially introduced values, waterfall charts clarify how an initial value is affected by subsequent additions or subtractions.

- Scatter Charts: These are valuable for showcasing relationships between two variables, enabling users to identify correlations and outliers within their data.

In 2025, the latest updates to Power BI introduced enhanced features for these visual types, significantly improving usability and functionality. For instance, network diagrams have gained popularity for illustrating intricate connections, particularly in hierarchical information contexts. A case study on network diagrams demonstrated their effectiveness in displaying complex relationships, while also emphasizing the importance of clarity in design to prevent overwhelming users.

Expert opinions underscore the significance of selecting the appropriate graph type for the story the data is meant to tell. As organizations increasingly adopt business intelligence tools, understanding the most popular chart types, such as waterfall, bar, and line charts, becomes essential for effective data analysis. Notably, out of 1,523 surveyed individuals, 843 voted ‘Yes’ on the effectiveness of these chart types, while 520 voted ‘No’ and 160 abstained, indicating that a majority of respondents favor these representations.
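To put the survey figures in proportion, the vote counts can be converted into shares of all respondents. The snippet below is only a small worked calculation on the numbers quoted above; the variable names are illustrative.

```python
# Vote counts as quoted in the text.
votes = {"Yes": 843, "No": 520, "Abstained": 160}

total = sum(votes.values())

# Share of all respondents per answer, in percent, rounded to one decimal.
shares = {answer: round(100 * count / total, 1) for answer, count in votes.items()}

for answer, share in shares.items():
    print(f"{answer:10} {votes[answer]:>5} {share:>5}%")
```

Read this way, a little over half of the respondents voted ‘Yes’, roughly a third voted ‘No’, and about a tenth abstained.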

Successful case studies illustrate how various organizations use these representations to derive actionable insights, ultimately enhancing operational efficiency and informed decision-making. As highlighted by Sascha Rudloff, Team Leader of IT and Process Management at PALFINGER Tail Lifts GMBH, “With the Power BI Sprint from CREATUM, we not only received an immediately deployable Power BI report and a gateway setup but also experienced a significant acceleration in our own Power BI development.” This testimonial underscores the transformative impact of Creatum’s Power BI Sprint on business intelligence development, particularly in addressing the challenges of report creation and providing clear, actionable guidance that enhances information quality and simplifies AI implementation.

Understanding Waterfall Charts: Definition and Use Cases

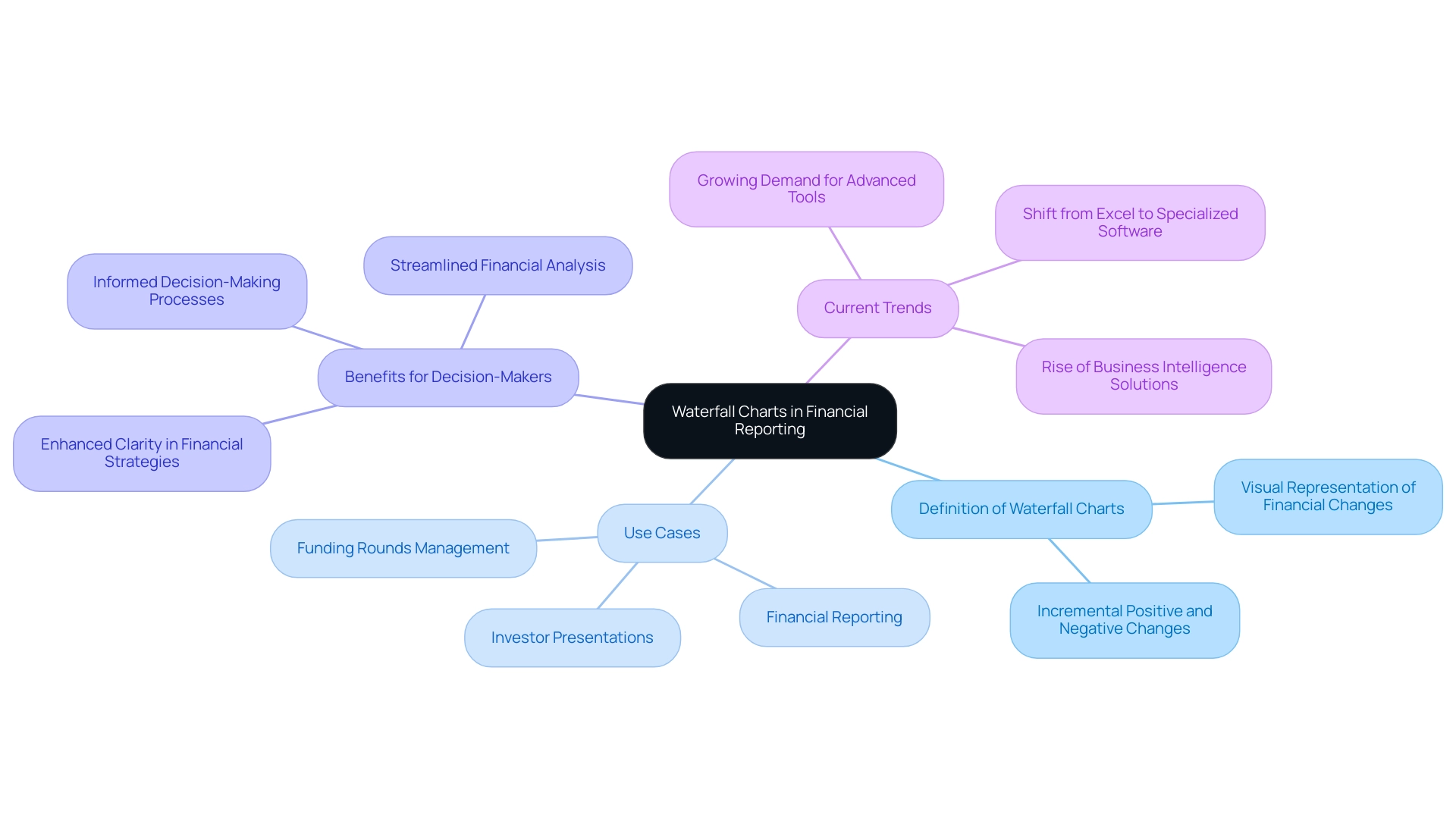

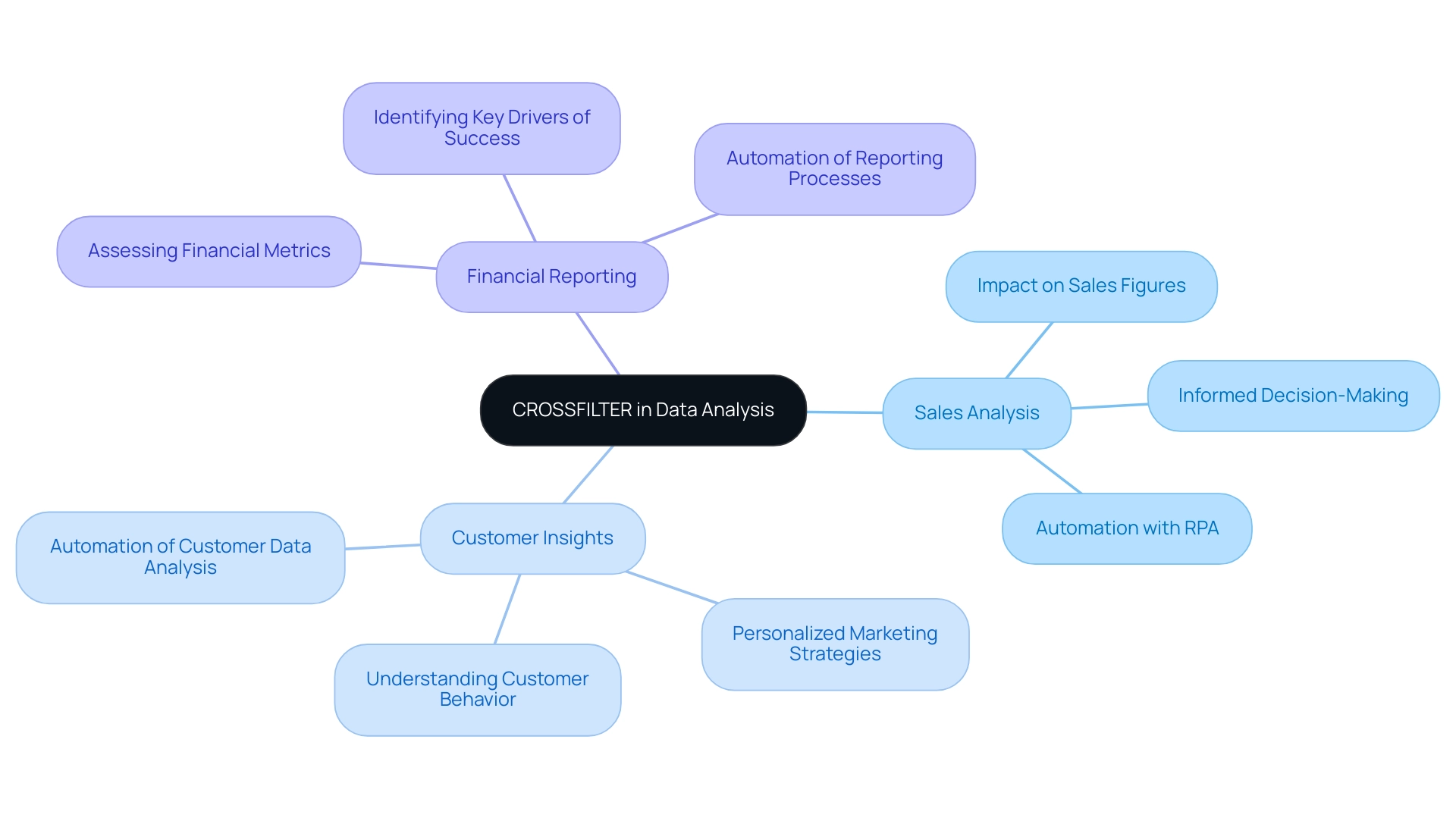

A waterfall chart is a powerful visualization that shows how a starting value is changed by a series of incremental positive and negative values. It is particularly useful in financial reporting, where it clearly depicts profit and loss over time along with the impact of individual factors on revenue. For instance, a waterfall chart can show how sales figures progress from one period to the next, making both increases and decreases visible at a glance.
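The logic behind this kind of chart can be sketched in a few lines of Python. This is a minimal illustration rather than how Power BI computes the visual internally; the function name `waterfall_steps` and all figures are hypothetical.

```python
from itertools import accumulate


def waterfall_steps(start, changes):
    """Return (label, bar_start, bar_end) tuples for a waterfall chart.

    `changes` is a list of (label, delta) pairs applied in order.
    """
    labels = [label for label, _ in changes]
    deltas = [delta for _, delta in changes]
    # Running totals: the level reached after each change is applied.
    levels = list(accumulate(deltas, initial=start))

    steps = [("Start", 0, start)]
    # Each change is drawn as a floating bar from the previous level to the new one.
    for label, before, after in zip(labels, levels, levels[1:]):
        steps.append((label, before, after))
    steps.append(("End", 0, levels[-1]))
    return steps


# Hypothetical figures, for illustration only.
changes = [("New customers", 30), ("Churn", -12), ("Price increase", 8)]
steps = waterfall_steps(100, changes)
for label, bar_start, bar_end in steps:
    print(f"{label:15} {bar_start:>5} -> {bar_end:>5}")
```

A bar whose end is lower than its start represents a decrease, which is why negative changes appear to step downward.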

The utility of waterfall charts extends beyond mere representation; they help decision-makers by distilling complex data into easily digestible insights. As businesses expand their investor bases and secure additional financing, sophisticated financial evaluation tools, including graphical ones, become increasingly important. Organizations that use waterfall charts in their financial reporting report greater clarity in their financial strategies, which supports more informed decision-making.

Moreover, waterfall analysis is poised to gain traction as a financial tool, particularly as companies expand and their shareholder pools grow. Case studies underscore its rising significance within the financial landscape. For example, Eqvista’s waterfall analysis tool has proven useful for companies navigating intricate funding rounds, enabling them to manage future investments more effectively. This tool streamlines the calculation of financial rounds upon exit, in line with the overarching goal of improving financial strategy and decision-making.

As Excel becomes increasingly inadequate for waterfall analysis, owing to a growing number of shareholders and more complex scenarios, the demand for advanced tools like Eqvista is on the rise. By leveraging such tools, companies can gain deeper insights into their financial cycles, thereby refining their financial strategies and enhancing overall operational efficiency.

In today’s data-rich environment, Business Intelligence (BI) tools like Power BI can significantly increase the effectiveness of waterfall charts. Creatum GmbH’s Power BI services facilitate efficient reporting and data consistency, empowering organizations to convert raw data into actionable insights. With offerings like the 3-Day Power BI Sprint, businesses can swiftly produce professionally crafted reports that amplify the insights derived from waterfall charts.

As the need for clear and actionable financial insights continues to grow, waterfall charts, supported by robust BI solutions, are set to become a pivotal component of business intelligence and financial reporting by 2025.

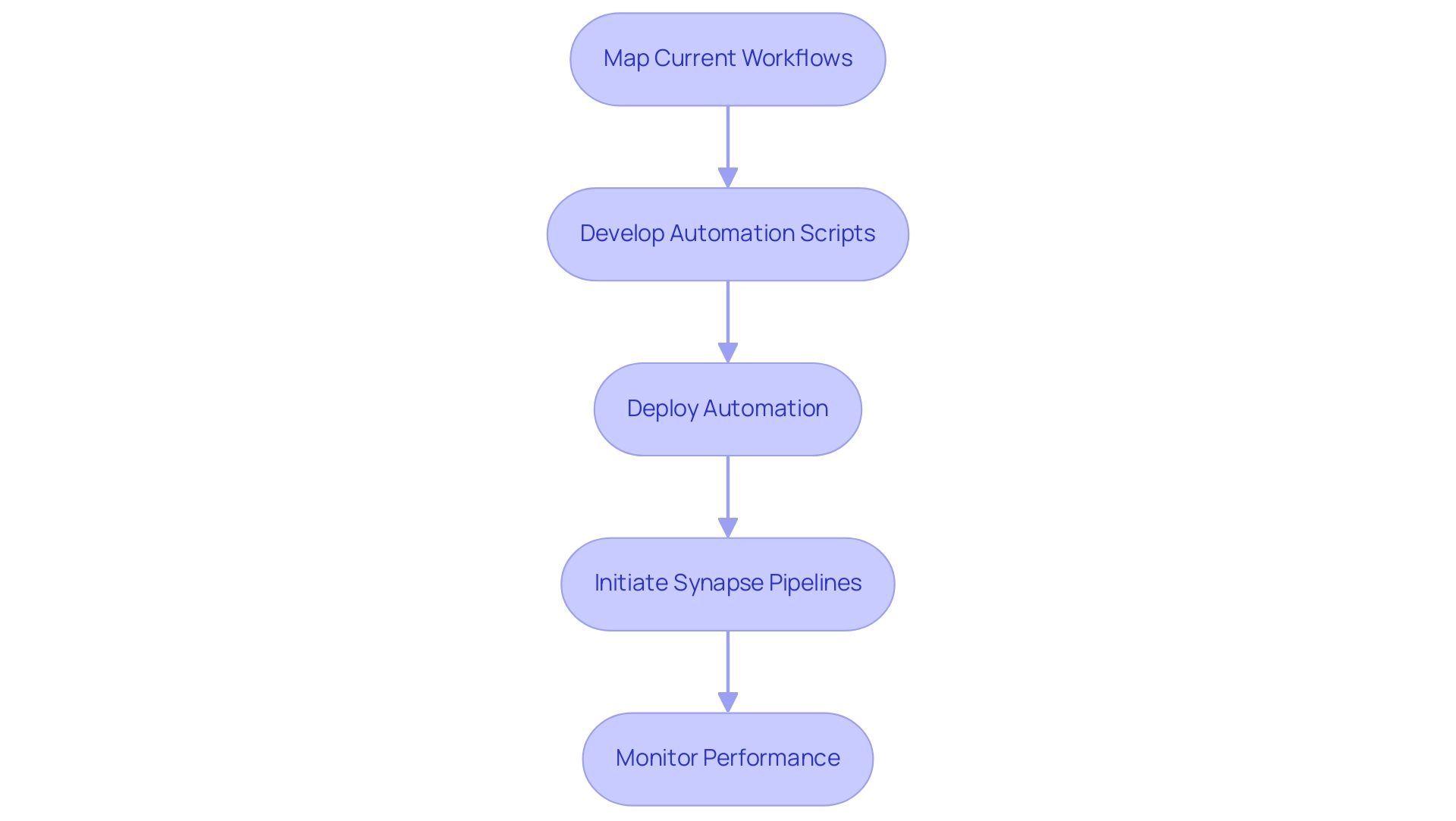

Step-by-Step Guide to Creating Waterfall Charts in Power BI

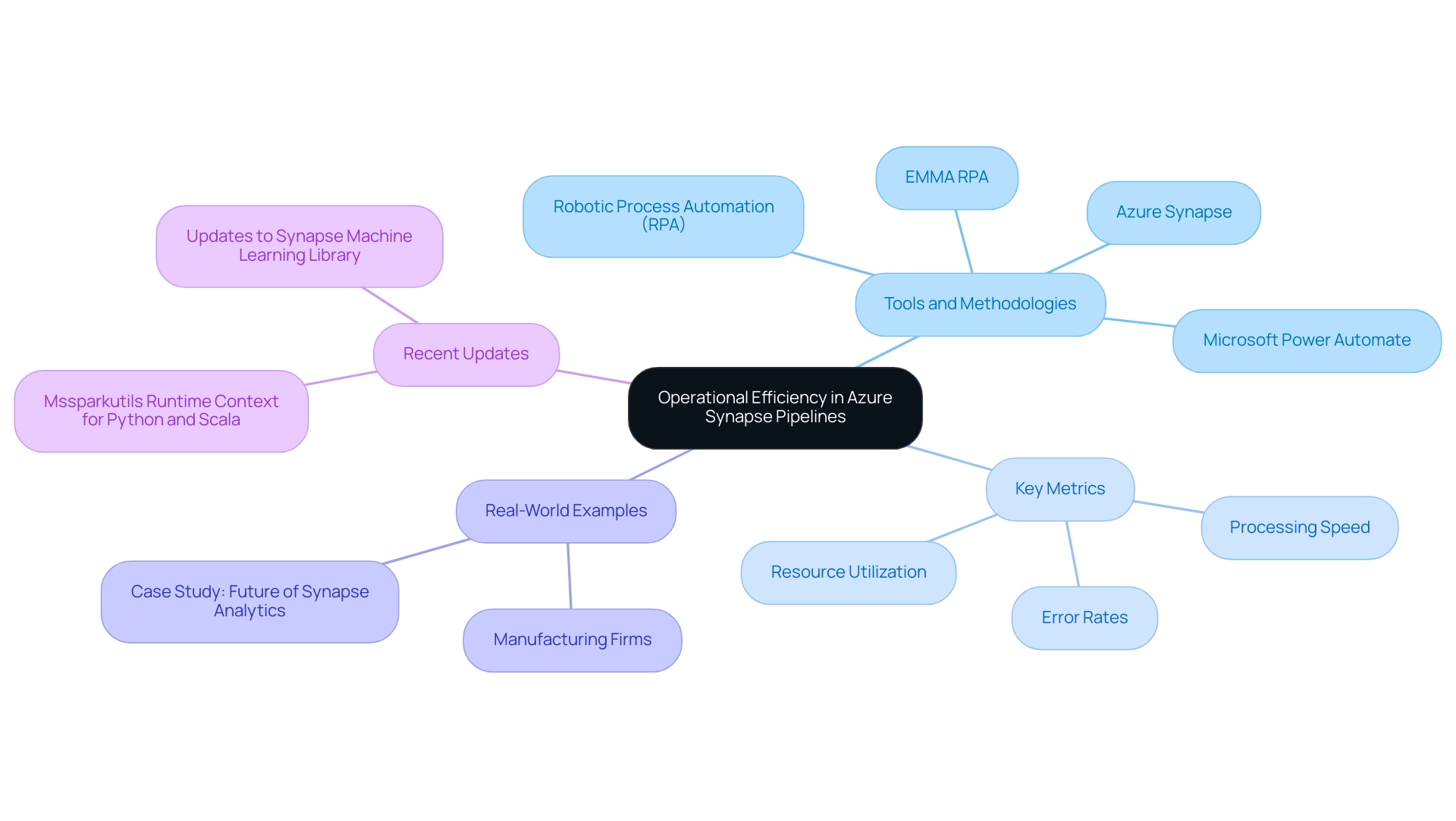

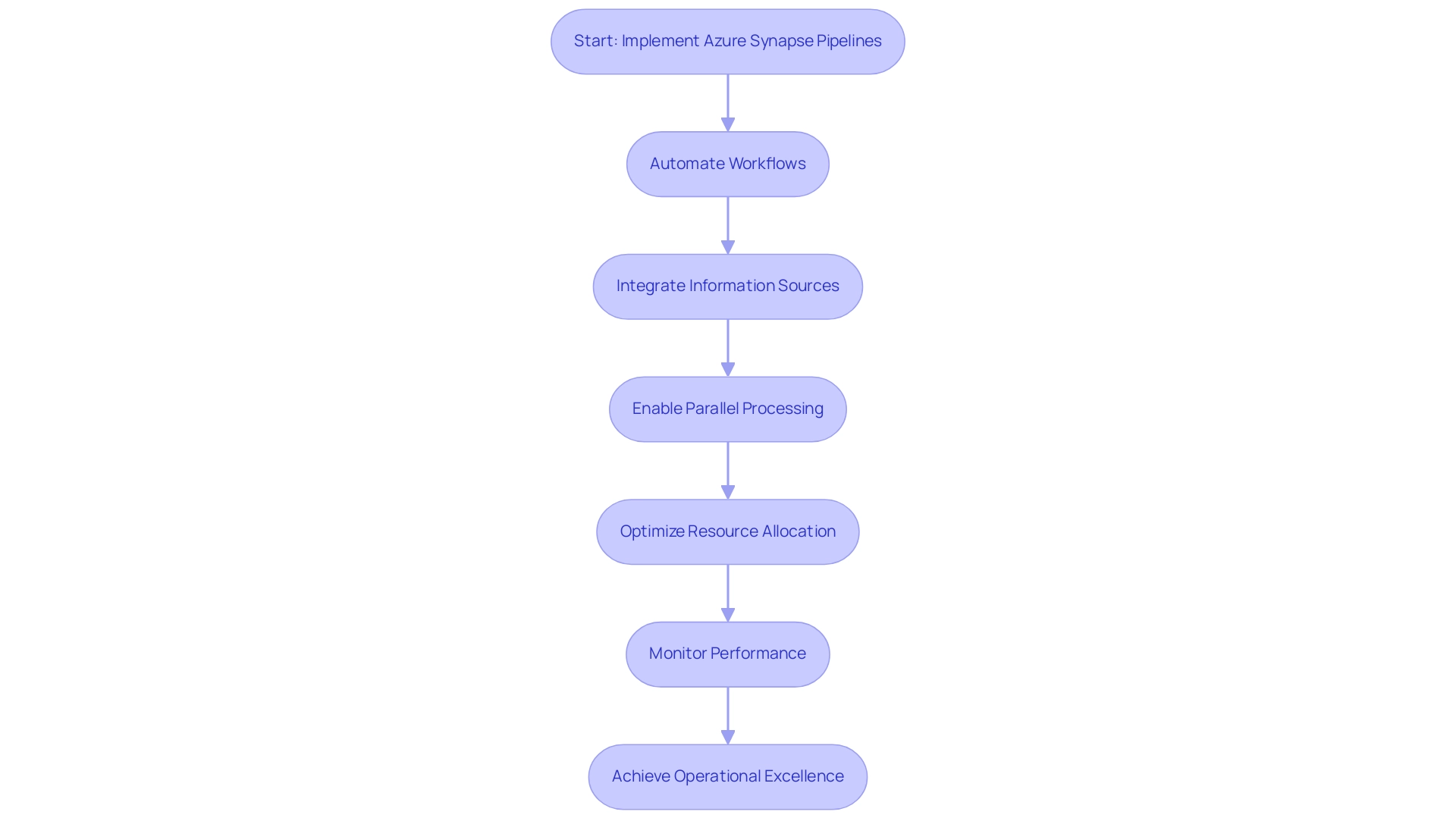

Creating a waterfall chart in Power BI is a straightforward process that significantly enhances visualization and facilitates informed decision-making across various business functions. This capability is particularly vital in Business Intelligence, where converting raw data into actionable insights is essential for driving growth and operational efficiency. Moreover, integrating Robotic Process Automation (RPA) can streamline data handling, further enhancing the effectiveness of BI tools like Power BI.

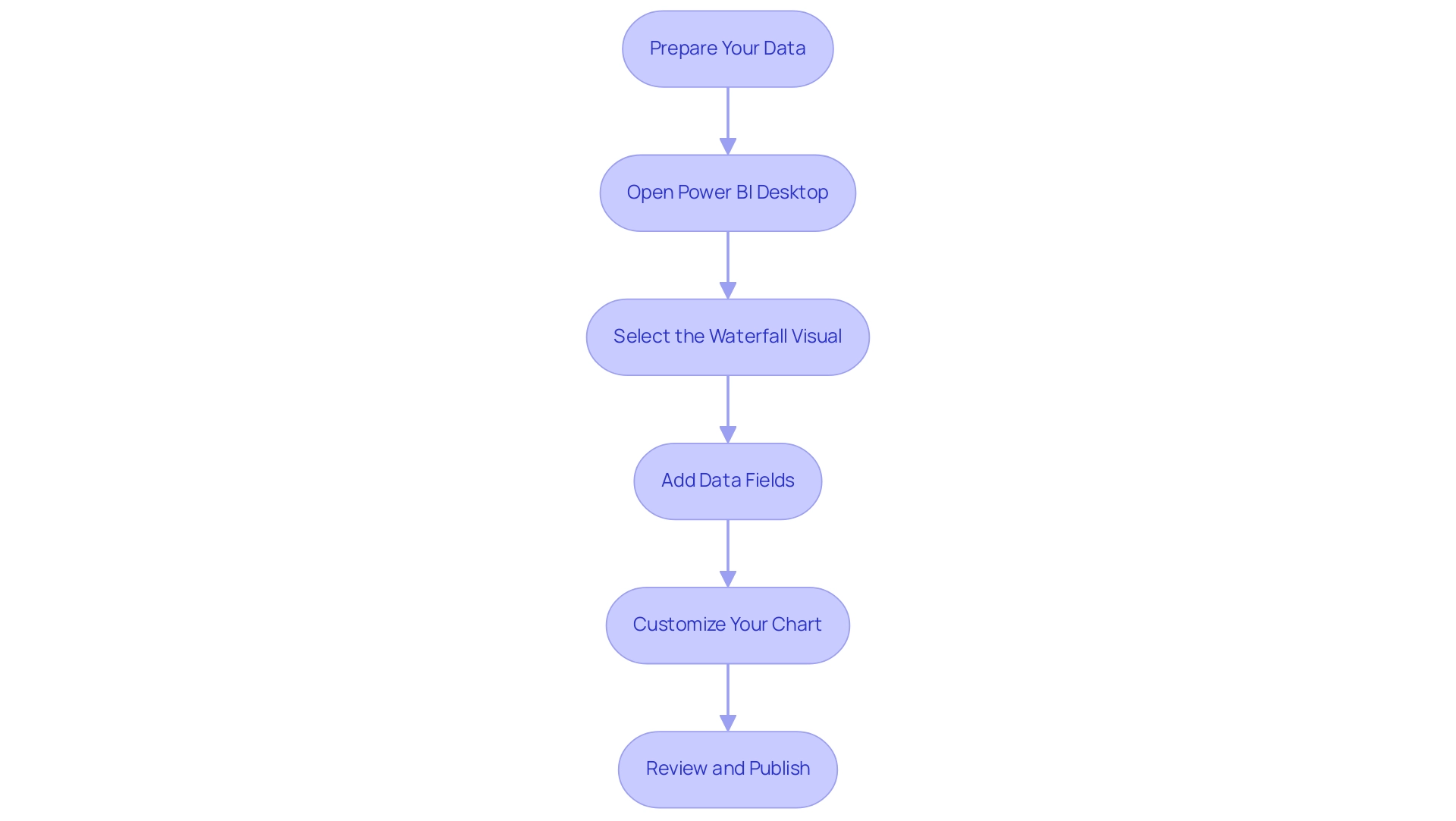

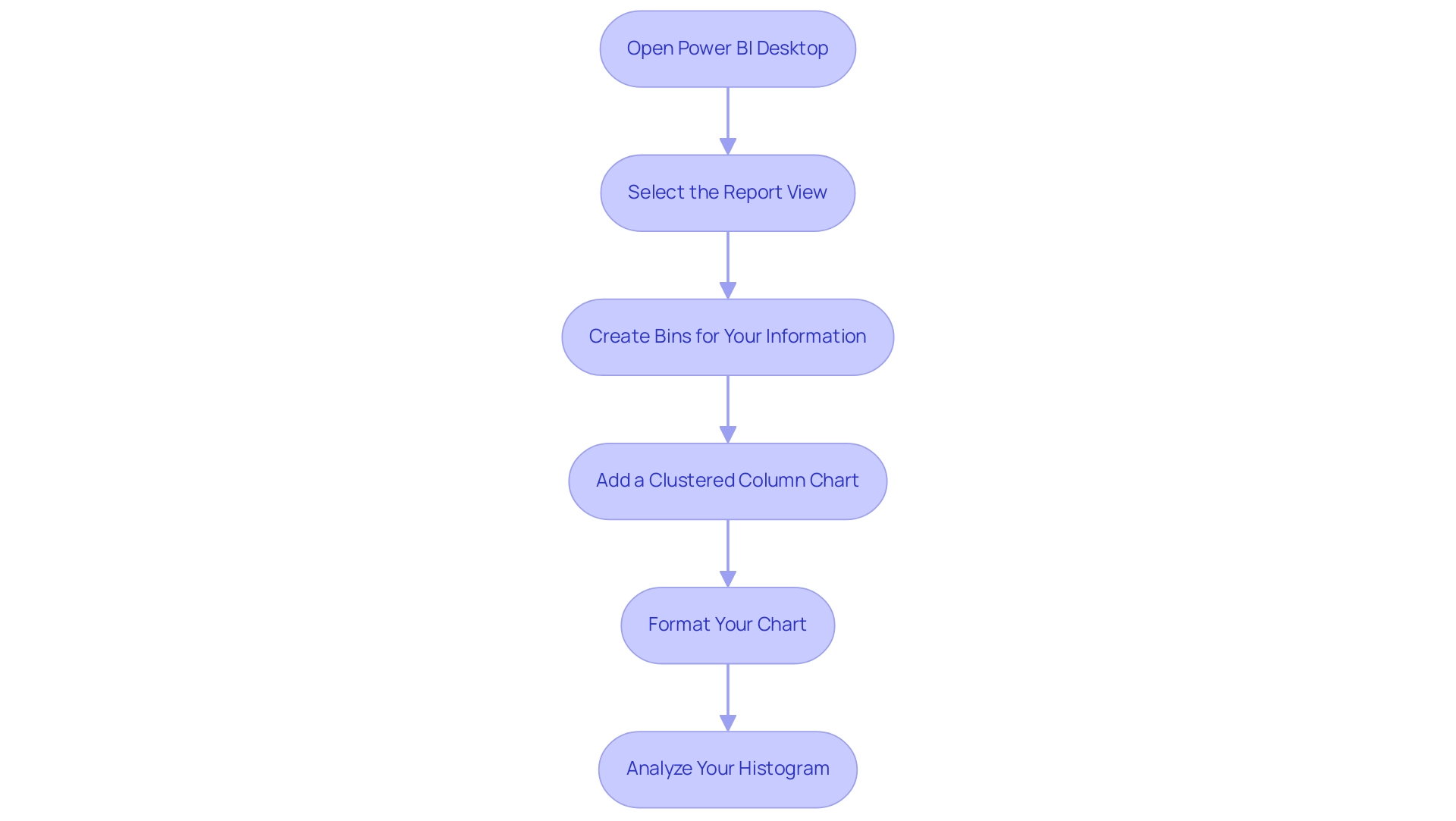

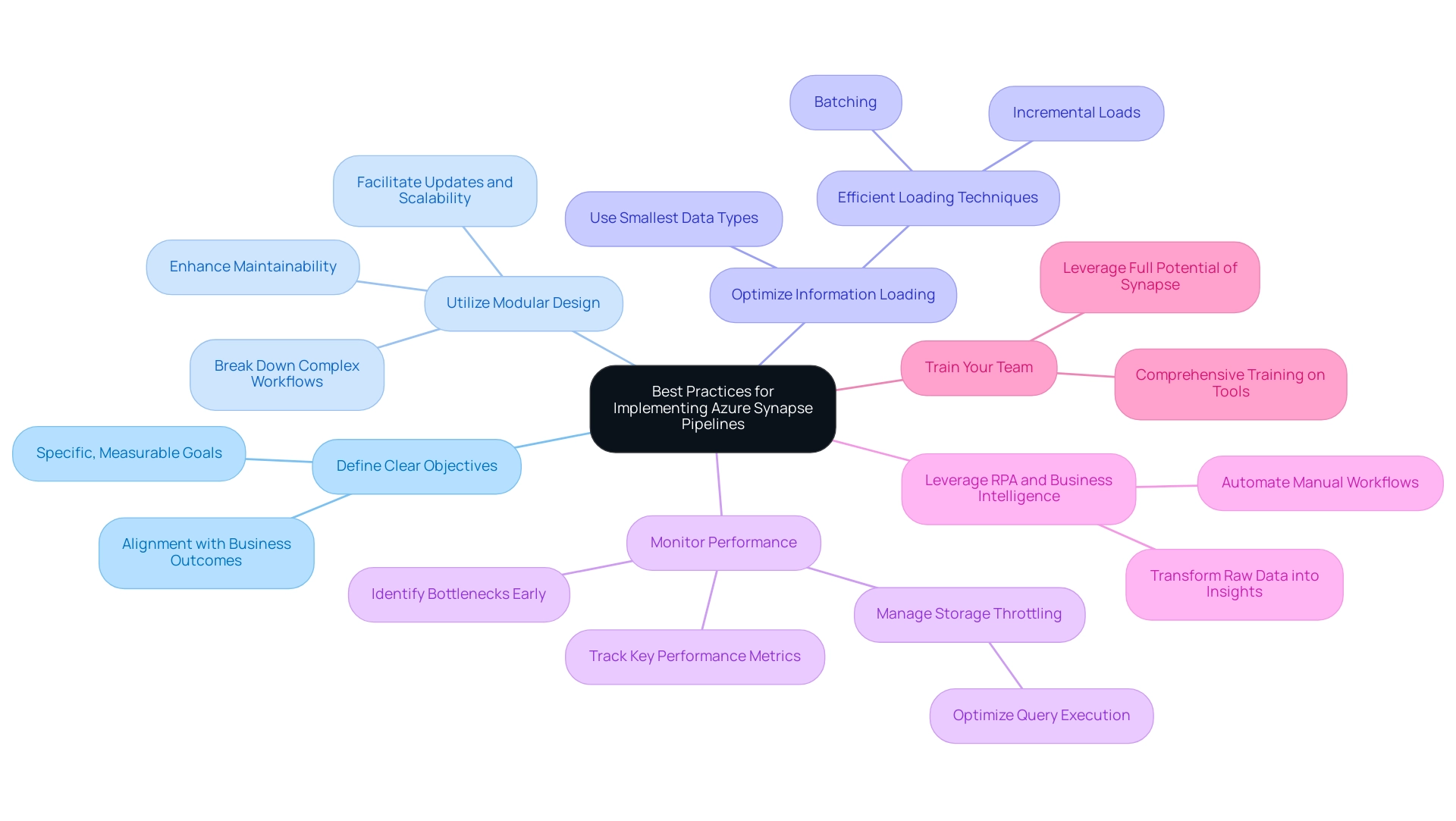

To effectively create and customize your chart, follow these steps:

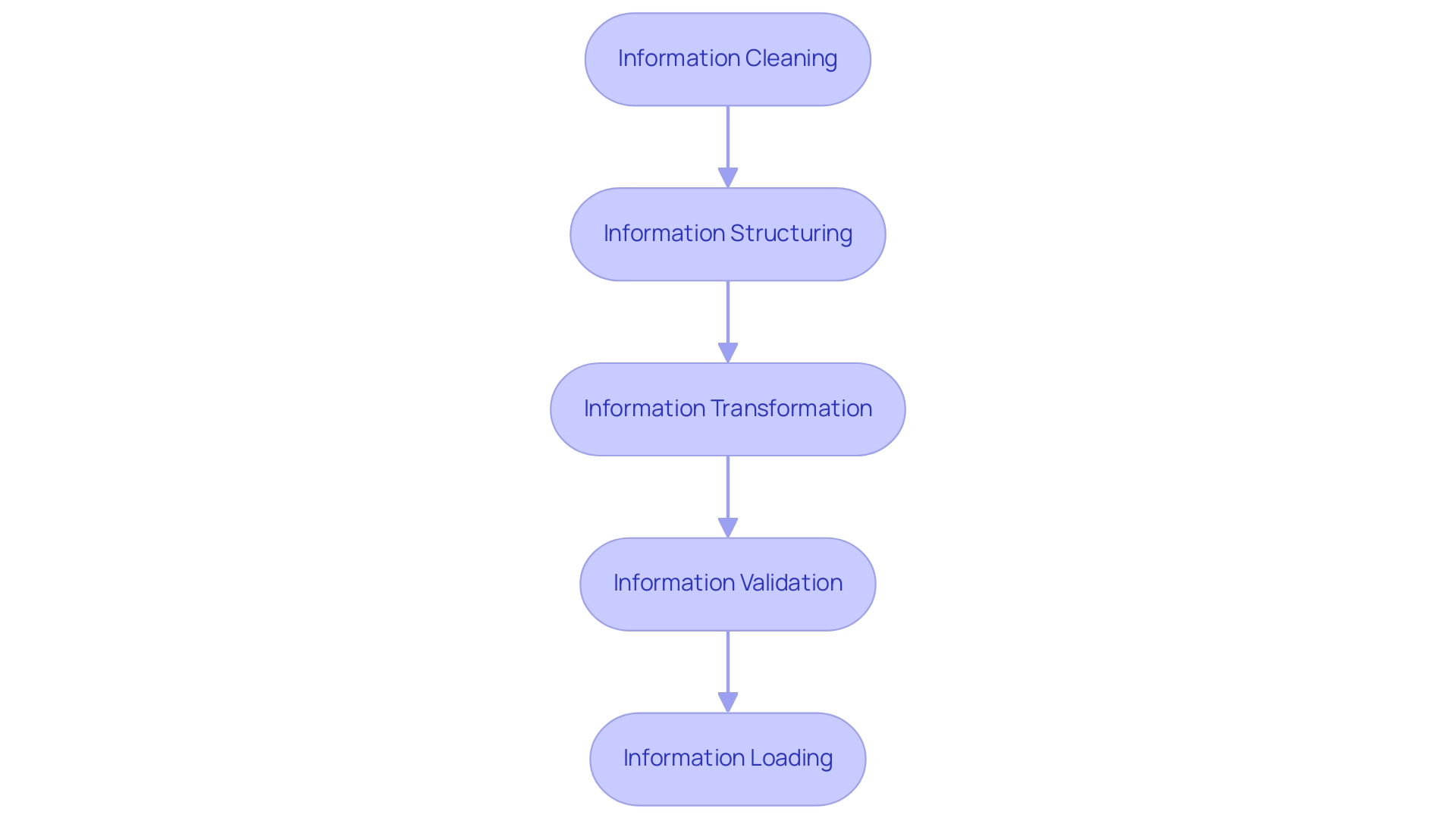

- Prepare Your Data: Ensure your dataset includes the necessary values for both the initial state and subsequent changes. This foundational step is crucial for accurate representation in BI charts, helping to mitigate challenges such as data inconsistencies.

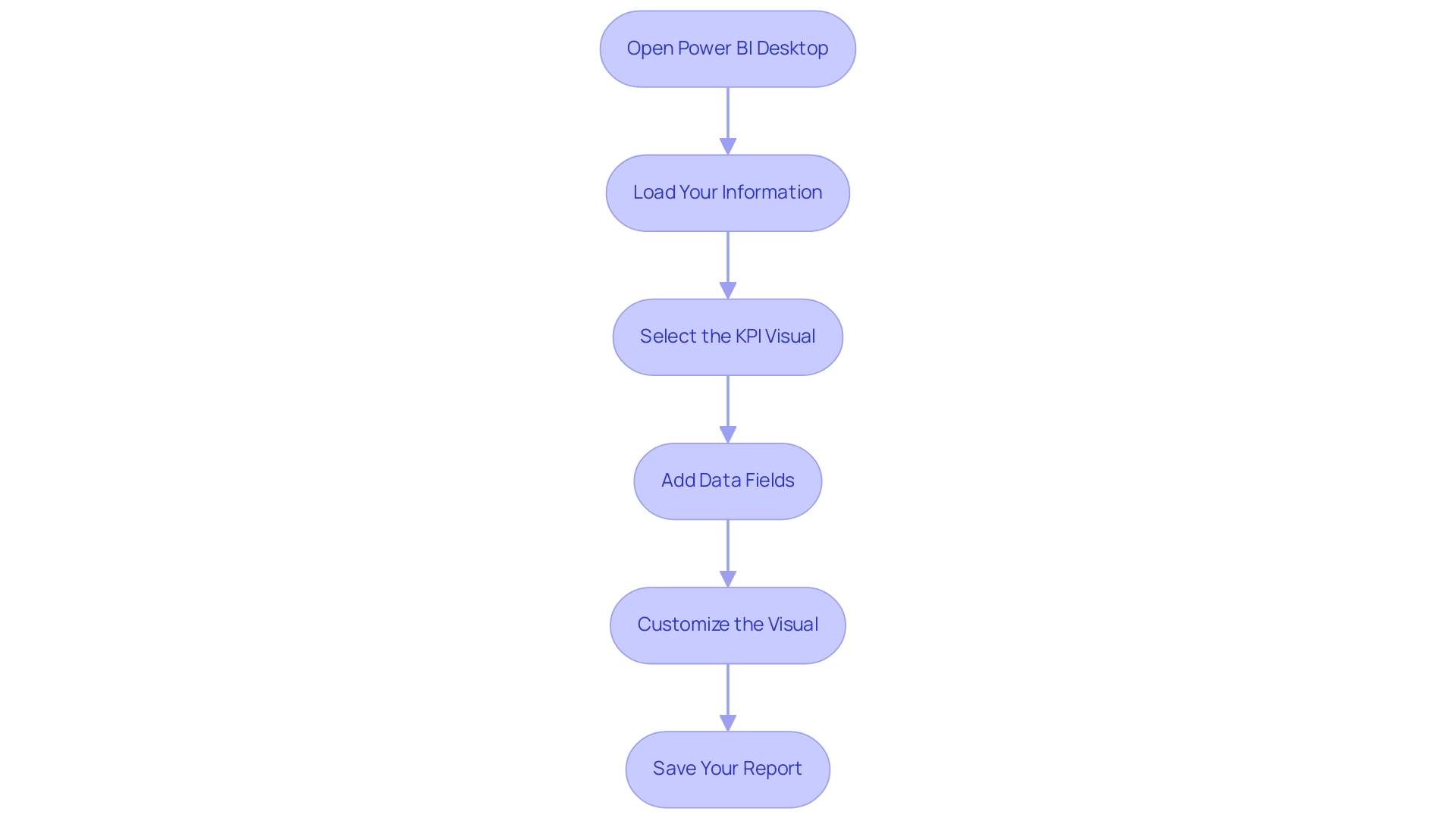

- Open Power BI Desktop: Launch the application and load your prepared dataset to begin the visualization process.

- Select the Waterfall Visual: In the Visualizations pane, locate and select the waterfall icon to initiate the creation of the visual.

- Add Data Fields: Drag and drop the relevant fields into the ‘Values’ and ‘Category’ areas. This step establishes how your information will be illustrated in the graph, enabling a clear representation of changes over time.

- Customize Your Chart: Tailor the appearance of your chart by adjusting colors, labels, and titles. Customization enhances clarity, ensuring that your audience can easily interpret the data displayed in BI charts, which is essential for effective decision-making.

- Review and Publish: After finalizing your visualization, review it for accuracy and clarity. Once satisfied, save your work and publish it to the Power BI service for sharing with stakeholders.
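For the data preparation step, one possible layout is a two-column table with one row for the initial state and one row per change. The sketch below builds such a table as CSV text using only the Python standard library. The column names `Category` and `Value` are assumptions chosen to mirror the field areas mentioned above, and the figures are hypothetical.

```python
import csv
import io


def to_waterfall_rows(opening_label, opening_value, changes):
    """Build one row for the initial state followed by one row per change."""
    rows = [{"Category": opening_label, "Value": opening_value}]
    rows.extend({"Category": name, "Value": delta} for name, delta in changes)
    return rows


def rows_to_csv(rows):
    """Serialize the rows to CSV text with a header line."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["Category", "Value"], lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


# Hypothetical figures, for illustration only.
rows = to_waterfall_rows("Opening balance", 1000, [("Sales", 250), ("Refunds", -40)])
csv_text = rows_to_csv(rows)
print(csv_text)
```

Writing `csv_text` to a file would give a dataset that can be loaded in the second step; whether the opening value belongs in the same column as the changes depends on how the report is modeled.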

In practice, a waterfall chart of monthly variance makes it easy to see, for example, that March has the largest positive variance while July has the largest negative variance. Waterfall charts also support ‘what-if’ evaluations, in which users simulate various scenarios and illustrate possible results, such as the effect of price changes on customer loyalty.
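The variance reading described above can be reproduced with a short calculation. The sketch below uses made-up actual and plan figures, chosen only so that March and July come out as the extremes; the function name `variance_extremes` is hypothetical.

```python
def variance_extremes(actual, plan):
    """Return per-month variance plus the months with the largest gain and drop."""
    variance = {month: actual[month] - plan[month] for month in plan}
    best = max(variance, key=variance.get)
    worst = min(variance, key=variance.get)
    return variance, best, worst


# Hypothetical figures, for illustration only.
months = ["January", "February", "March", "April", "May", "June", "July"]
plan = dict(zip(months, [100] * 7))
actual = dict(zip(months, [104, 97, 118, 101, 95, 103, 86]))

variance, best, worst = variance_extremes(actual, plan)
print(f"Largest positive variance: {best} ({variance[best]:+})")
print(f"Largest negative variance: {worst} ({variance[worst]:+})")
```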

While waterfall charts are useful, it is worth considering alternatives such as bar charts, stacked bar charts, and diverging bar charts, each of which has distinct benefits and drawbacks depending on the data being displayed. As Jon Oringer noted, it is essential to question whether specific tools are truly needed, which fits a strategic focus on operational efficiency. Additionally, addressing issues like lengthy report preparation and inconsistent data can improve the quality of the insights obtained from Power BI dashboards.

By adhering to these measures and integrating best practices, users can effectively illustrate their information using BI charts, thereby enhancing their decision-making abilities and leveraging Business Intelligence and RPA to propel business growth.

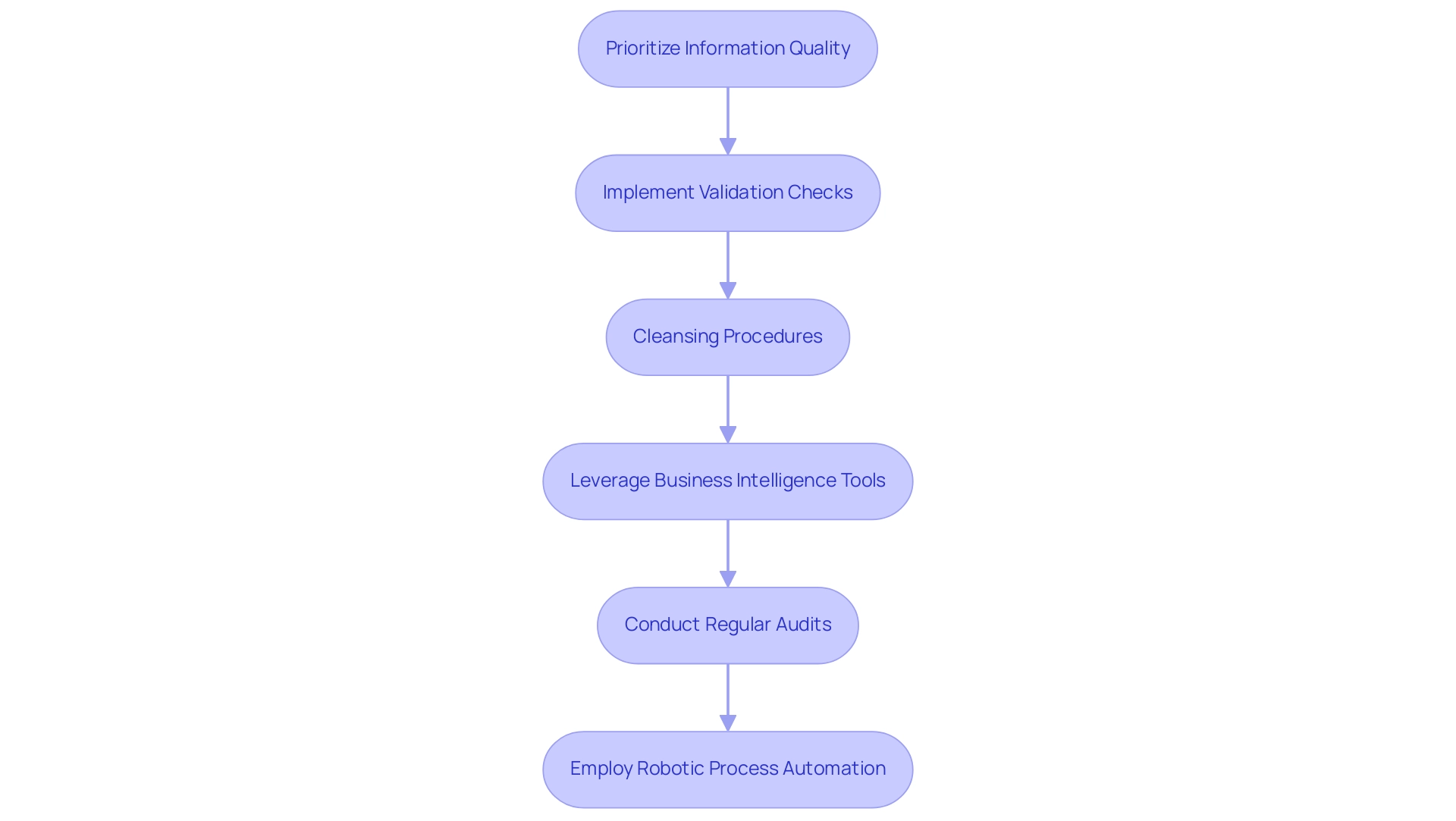

Customizing Your Waterfall Chart for Enhanced Clarity

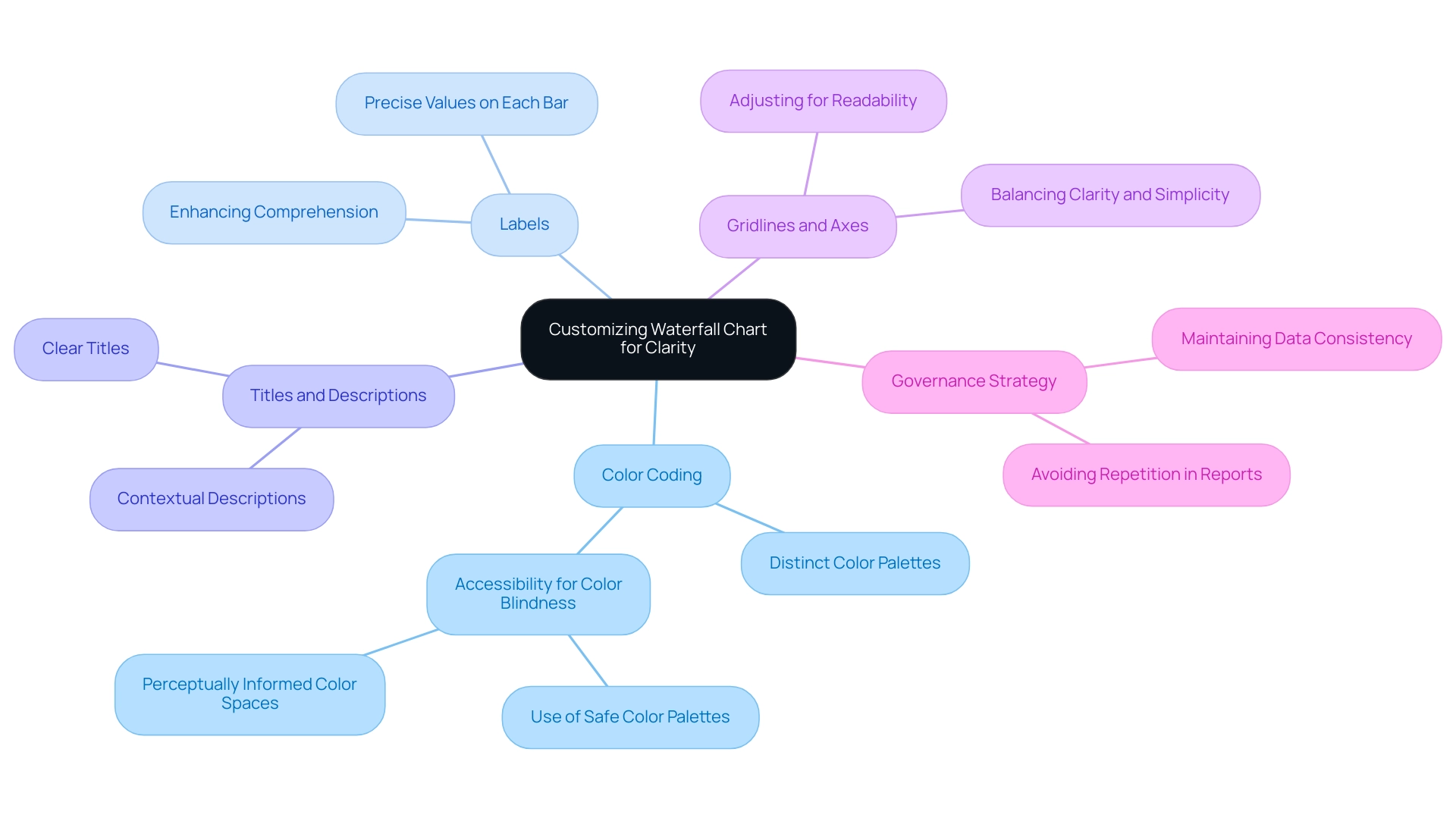

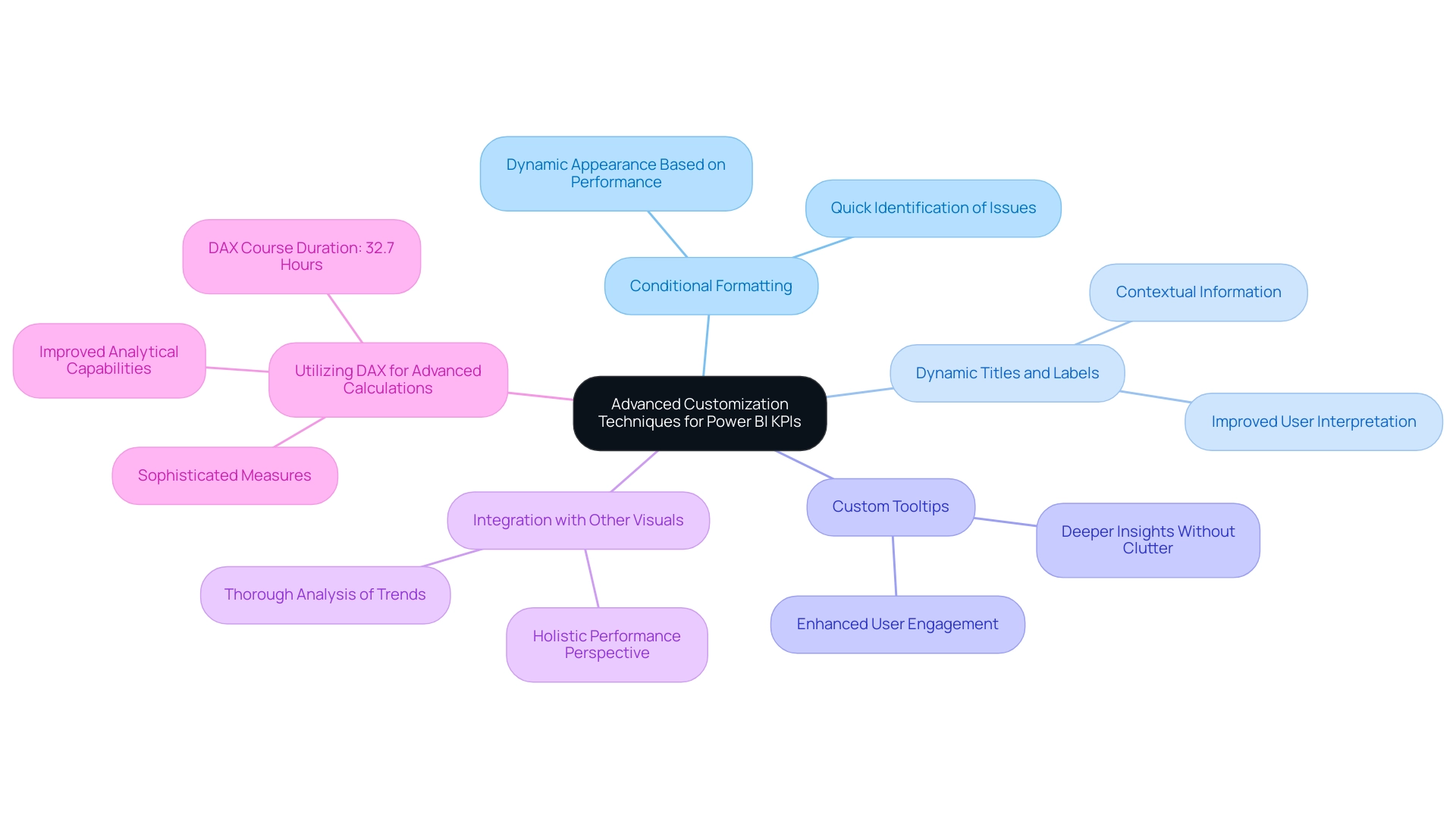

Customizing a waterfall chart in Power BI significantly enhances its clarity and effectiveness, thereby improving storytelling. Here are essential tips for customization:

- Color Coding: Use distinct color palettes to differentiate between positive and negative changes. This practice aids immediate understanding and also addresses accessibility requirements, ensuring that viewers with color vision deficiencies, such as protanopia, can interpret the information accurately. Perceptually informed color spaces can further improve differentiation among nominal classes.

- Labels: Add labels to each bar to show precise values. This increases the informational value of the visual and lets viewers grasp the figures quickly.

- Titles and Descriptions: Clear titles and contextual descriptions guide viewers through the visual. They frame the narrative, making it easier for the audience to understand the significance of the details presented.

- Gridlines and Axes: Adjusting gridlines and axes can improve readability without overcrowding the visual. Striking a balance between clarity and simplicity is vital for keeping viewers engaged.

Research shows that effective customization of graphs can greatly influence viewer understanding. These strategies are not merely aesthetic options; they are fundamental elements of effective information presentation. For instance, a case study on accessibility in visual representation highlights the importance of creating inclusive visualizations that cater to diverse audiences. It recommends strategies such as using color palettes that are safe for color-blind viewers and employing dual encoding techniques to convey information effectively. Additionally, Helgeson & Moriarty observed that adding certain elements produced no improvement in memory for the information, which underscores the need for deliberate customization.
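As a sketch of the color-blind-safe palette and dual encoding ideas mentioned above, the snippet below assigns each bar a color and a signed data label, so that direction is conveyed by both hue and text. The blue and vermillion hex codes come from the Okabe-Ito palette; the gray for totals, the function name `style_bar`, and the figures are assumptions made for illustration. The resulting hex codes could then be entered in the visual's formatting options.

```python
# Okabe-Ito colors, which remain distinguishable under common
# forms of color vision deficiency.
INCREASE_COLOR = "#0072B2"  # blue
DECREASE_COLOR = "#D55E00"  # vermillion
TOTAL_COLOR = "#999999"     # neutral gray (an arbitrary choice for totals)


def style_bar(label, value, is_total=False):
    """Return display settings for one bar of a waterfall chart."""
    if is_total:
        return {"label": label, "color": TOTAL_COLOR, "data_label": f"{value:,}"}
    color = INCREASE_COLOR if value >= 0 else DECREASE_COLOR
    # Dual encoding: the explicit sign repeats what the color already says.
    return {"label": label, "color": color, "data_label": f"{value:+,}"}


# Hypothetical figures, for illustration only.
bars = [
    style_bar("Opening", 10000, is_total=True),
    style_bar("Sales", 1200),
    style_bar("Refunds", -450),
]
for bar in bars:
    print(bar)
```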

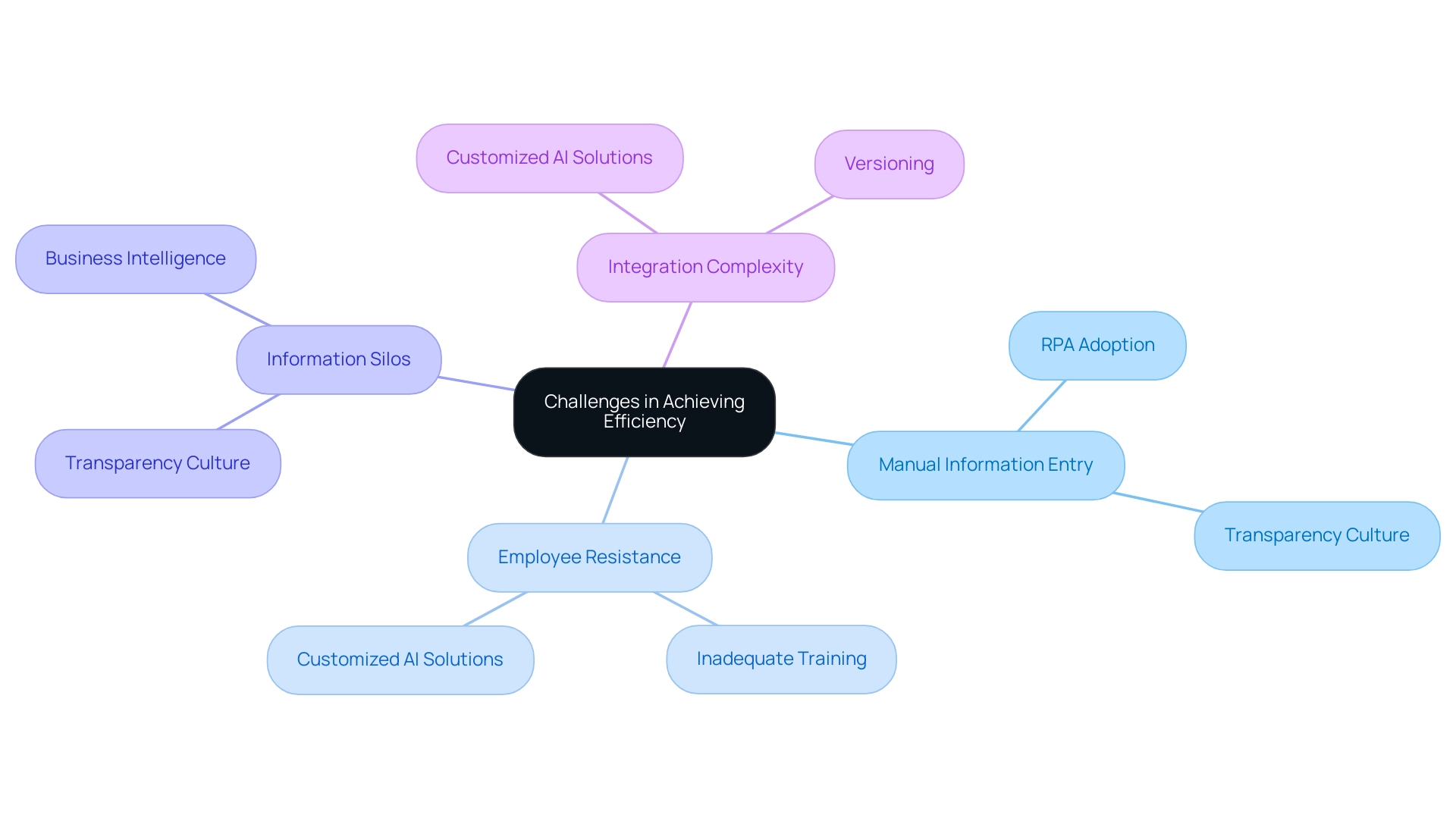

By applying these customization strategies, users can develop more impactful waterfall charts that convey their narratives effectively and support informed decision-making. Two recurring problems, spending excessive time building reports instead of acting on their insights and inconsistencies in the underlying data, can be reduced with tools like Robotic Process Automation (RPA) and customized AI solutions, which further improves productivity and helps ensure that stakeholders receive clear, actionable insights. It is also important to avoid repeating the same information across tables and figures, as this detracts from the clarity and effectiveness of the presentation.

A governance strategy is essential to maintain data consistency and trustworthiness across reports.

Best Practices for Effective Waterfall Chart Usage

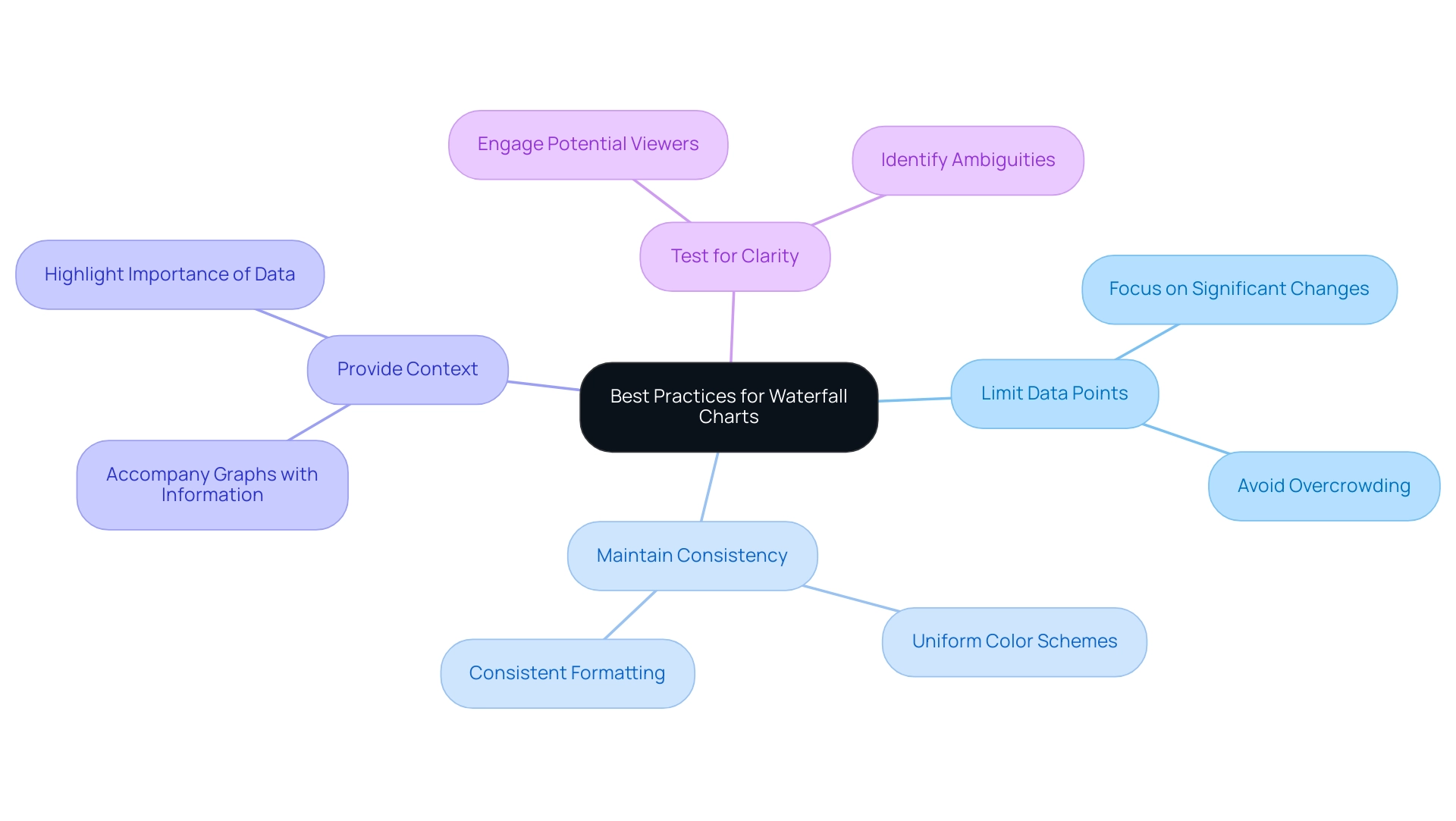

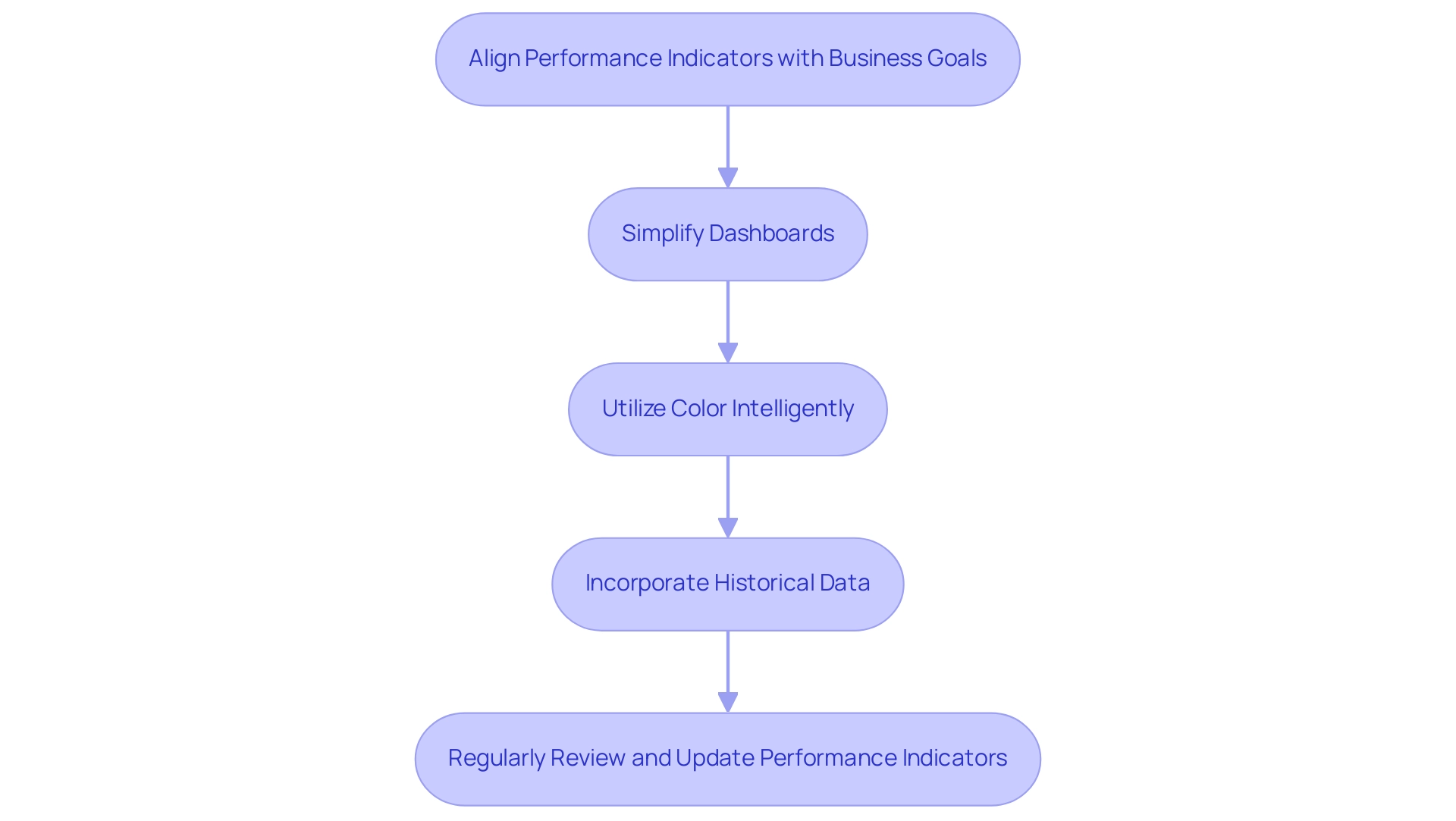

To maximize the effectiveness of waterfall charts in business intelligence, adhering to several best practices is essential:

- Limit Data Points: Focus on the most significant changes to avoid overcrowding the chart. A streamlined approach enhances clarity and allows viewers to grasp key insights quickly.

- Maintain Consistency: Employ uniform color schemes and formatting throughout all visuals. This intentional choice not only improves understanding but also prevents misleading interpretations of the information.

- Provide Context: Always accompany graphs with contextual information. This assists viewers in grasping the importance of the presented information, making the insights more actionable.

- Test for Clarity: Before finalizing, test the visual with potential viewers to ensure it communicates the intended message clearly. This step can reveal ambiguities and enhance overall effectiveness.
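One way to apply the "Limit Data Points" practice is to keep only the largest changes and roll the remainder into a single bar, so the chart's end value stays the same. The sketch below is one possible approach under the assumption that labels are unique; the function name `limit_changes`, the `Other` label, and the figures are hypothetical.

```python
def limit_changes(changes, keep=5, other_label="Other"):
    """Keep the `keep` largest changes by absolute size and sum the rest.

    The original order of the kept changes is preserved, and the total
    of all changes is unchanged. Labels are assumed to be unique.
    """
    if len(changes) <= keep:
        return list(changes)
    ranked = sorted(changes, key=lambda item: abs(item[1]), reverse=True)
    kept_labels = {label for label, _ in ranked[:keep]}
    result = [(label, delta) for label, delta in changes if label in kept_labels]
    remainder = sum(delta for label, delta in changes if label not in kept_labels)
    result.append((other_label, remainder))
    return result


# Hypothetical figures, for illustration only.
changes = [("A", 50), ("B", -2), ("C", -30), ("D", 3), ("E", 1)]
limited = limit_changes(changes, keep=2)
print(limited)
```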

Organizations that implement these best practices often see significant improvements in their data visualization. For instance, financial companies frequently use waterfall charts to show financial gains and losses, highlighting the cumulative impact of individual elements on overall outcomes. A case study involving employee headcount changes within a team over a year illustrates how a well-constructed waterfall chart can clearly depict the starting quantity, the positive and negative changes, and the resulting ending quantity.

This case study also covers the process of creating waterfall charts in Excel, detailing the use of an invisible series and mathematical adjustments to position each bar correctly.
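The invisible-series technique can be illustrated with a short calculation: each bar is a stacked pair consisting of a transparent base that lifts it to the right height and a visible segment equal to the size of the change. The sketch below is a minimal version that assumes the running total never drops below zero; the headcount figures and the function name `stacked_series` are hypothetical and are not taken from the case study.

```python
def stacked_series(start, deltas):
    """Return (invisible_base, visible_height) lists for a stacked-column waterfall."""
    base, visible = [0], [start]  # the starting total sits on the axis
    level = start
    for delta in deltas:
        new_level = level + delta
        # The invisible base reaches up to the lower edge of the floating bar.
        base.append(min(level, new_level))
        visible.append(abs(delta))
        level = new_level
    base.append(0)                # the ending total sits on the axis again
    visible.append(level)
    return base, visible


# Hypothetical headcount: 20 people at the start, then hires and departures.
base, visible = stacked_series(20, [5, -3, 2, -4])
print("invisible:", base)
print("visible:  ", visible)
```

Plotting the two lists as a stacked column chart and giving the first series no fill produces the floating-bar effect.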

Moreover, the transformative effect of Creatum’s Power BI Sprint has empowered organizations like PALFINGER Tail Lifts GMBH to significantly enhance their analysis and reporting capabilities. As noted by Sascha Rudloff, Team Leader of IT and Process Management, “With the Power BI Sprint from Creatum, we not only received an immediately deployable Power BI report and a gateway setup but also experienced a significant acceleration in our own Power BI development. The outcomes of the sprint surpassed our expectations and offered a vital enhancement to our analysis strategy.” By leveraging such innovative solutions alongside best practices in information presentation, businesses can refine their decision-making processes and stimulate growth in 2025 and beyond.

Incorporating Robotic Process Automation (RPA) can further simplify manual workflows, enabling teams to focus on strategic initiatives that enhance organizational value. This underscores the significance of efficient information representation practices.

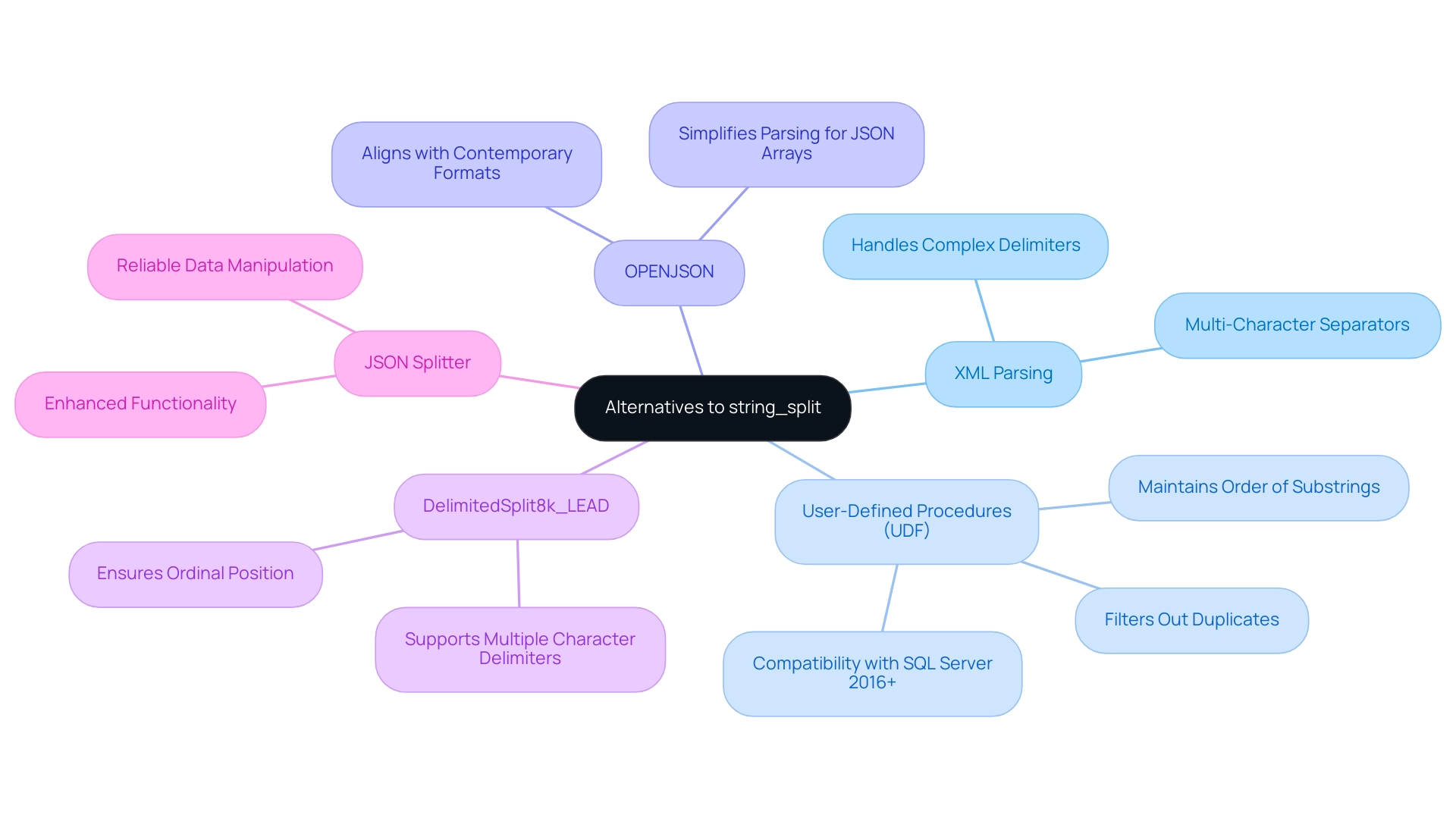

Alternatives to Waterfall Charts: When to Use Other Visualizations

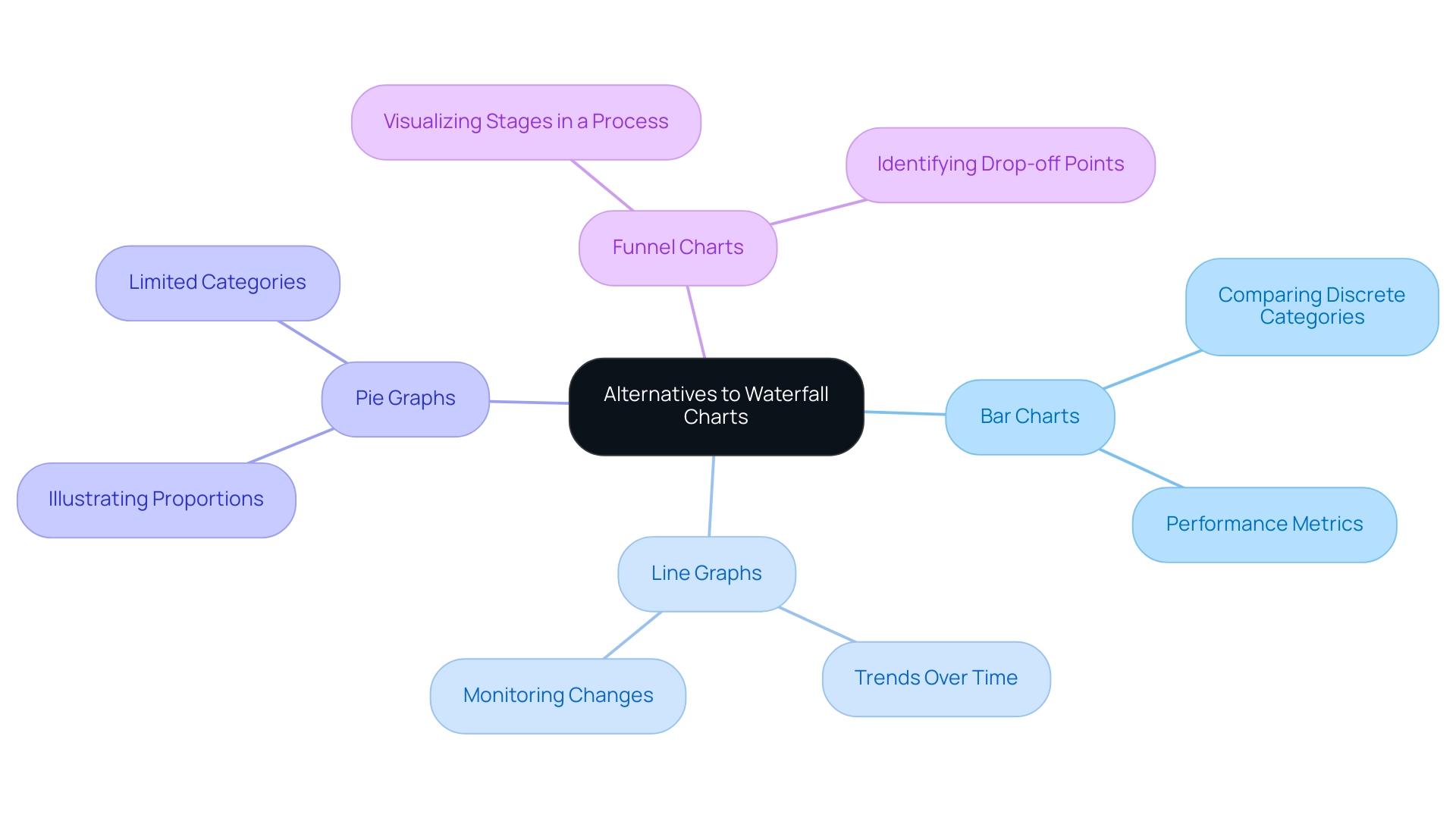

While waterfall graphs serve as powerful tools for visualizing cumulative information, there are specific scenarios where alternative visualizations may prove more effective. Understanding when to employ various types of BI charts can significantly enhance information analysis and decision-making, particularly within the realm of Business Intelligence (BI) and operational effectiveness. Consider the following alternatives:

- Bar Charts: These are particularly effective for comparing discrete categories, enabling quick visual comparisons across different groups. Organizations frequently use bar graphs to evaluate performance metrics across various departments, simplifying the process of identifying areas needing improvement and fostering targeted strategies for growth.

- Line Graphs: Best suited for illustrating trends over time, line graphs are invaluable for monitoring changes in data points, such as sales figures or website traffic. They provide a clear visual representation of growth or decline, empowering teams to make informed strategic choices based on historical data, which is crucial in a rapidly evolving AI landscape.

- Pie Graphs: Although often debated, pie graphs can effectively illustrate proportions within a whole, particularly when the number of categories is limited. They assist stakeholders in quickly grasping the distribution of resources or market share among competitors, facilitating data-driven insights that enhance operational efficiency.

- Funnel Charts: These charts excel in visualizing stages in a process, such as sales funnels. They enable organizations to identify drop-off points in customer journeys, facilitating targeted strategies to improve conversion rates and optimize workflows through Robotic Process Automation (RPA).
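The drop-off analysis that a funnel chart supports can be sketched numerically: compute the conversion rate between each pair of consecutive stages and look for the lowest one. The stage names and counts below are hypothetical, as are the function names.

```python
def funnel_conversion(stages):
    """Return (transition, rate) pairs for consecutive funnel stages."""
    rates = []
    for (prev_name, prev_count), (name, count) in zip(stages, stages[1:]):
        rate = count / prev_count if prev_count else 0.0
        rates.append((f"{prev_name} -> {name}", rate))
    return rates


def biggest_drop_off(stages):
    """Return the transition with the lowest conversion rate."""
    return min(funnel_conversion(stages), key=lambda item: item[1])


# Hypothetical figures, for illustration only.
stages = [("Visits", 1000), ("Sign-ups", 400), ("Trials", 300), ("Purchases", 60)]
rates = funnel_conversion(stages)
for transition, rate in rates:
    print(f"{transition:22} {rate:.0%}")

weakest = biggest_drop_off(stages)
print("Biggest drop-off:", weakest[0])
```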

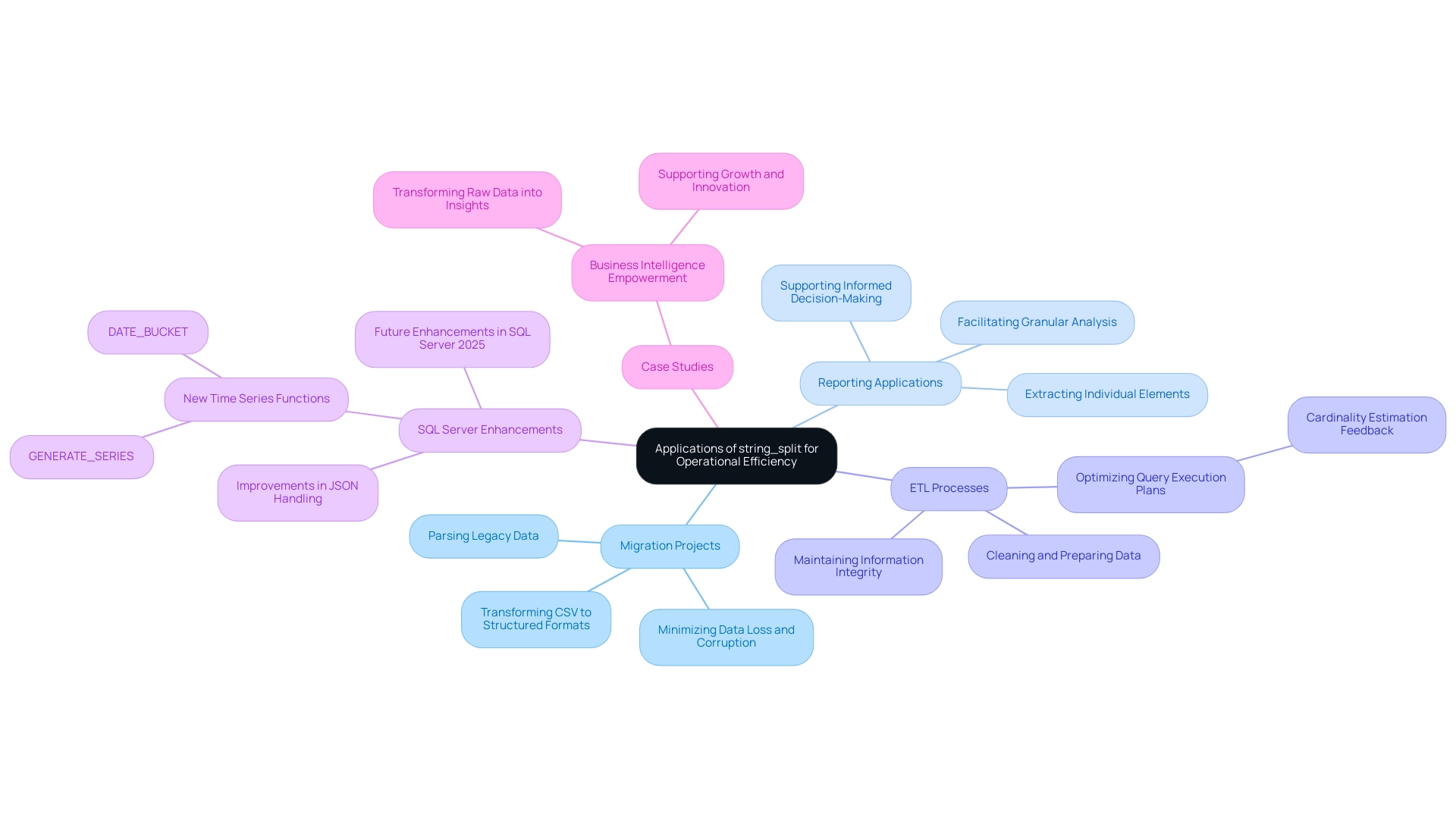

Selecting the appropriate visualization hinges on the specific data available and the narrative you wish to convey. A recent case study titled “Business Intelligence Empowerment” emphasized how a retail company effectively used bar graphs to compare sales performance across various regions, resulting in actionable insights that fueled targeted marketing initiatives. This case study underscores the importance of transforming raw data into actionable insights through effective visualization, which is the purpose of any BI chart.

Moreover, expert advice highlights that understanding the strengths and weaknesses of each diagram type is crucial. As Sara Dholakia notes, it is essential to consider the audience’s familiarity with the subject matter of your data and the context they possess versus what you should provide. For instance, while waterfall diagrams excel in illustrating how an initial value is influenced by a series of positive and negative values, they may not be as effective in scenarios requiring straightforward comparisons of categories.

In navigating the overwhelming AI landscape, tailored AI solutions can assist organizations in selecting the BI chart types that best align with their specific business goals. Some visualization tools, such as Datylon, offer a free 14-day trial, allowing users to experiment with different chart types without commitment. This flexibility encourages organizations to find the representation methods that fit their needs, ultimately enhancing operational efficiency.

In summary, employing the appropriate graphical method not only improves clarity but also enables organizations to make knowledgeable choices that foster growth and innovation. A keen mind and vision for technical illustration and data representation are essential for success in the field, ensuring that the chosen methods align with the overarching goals of business intelligence and operational excellence.

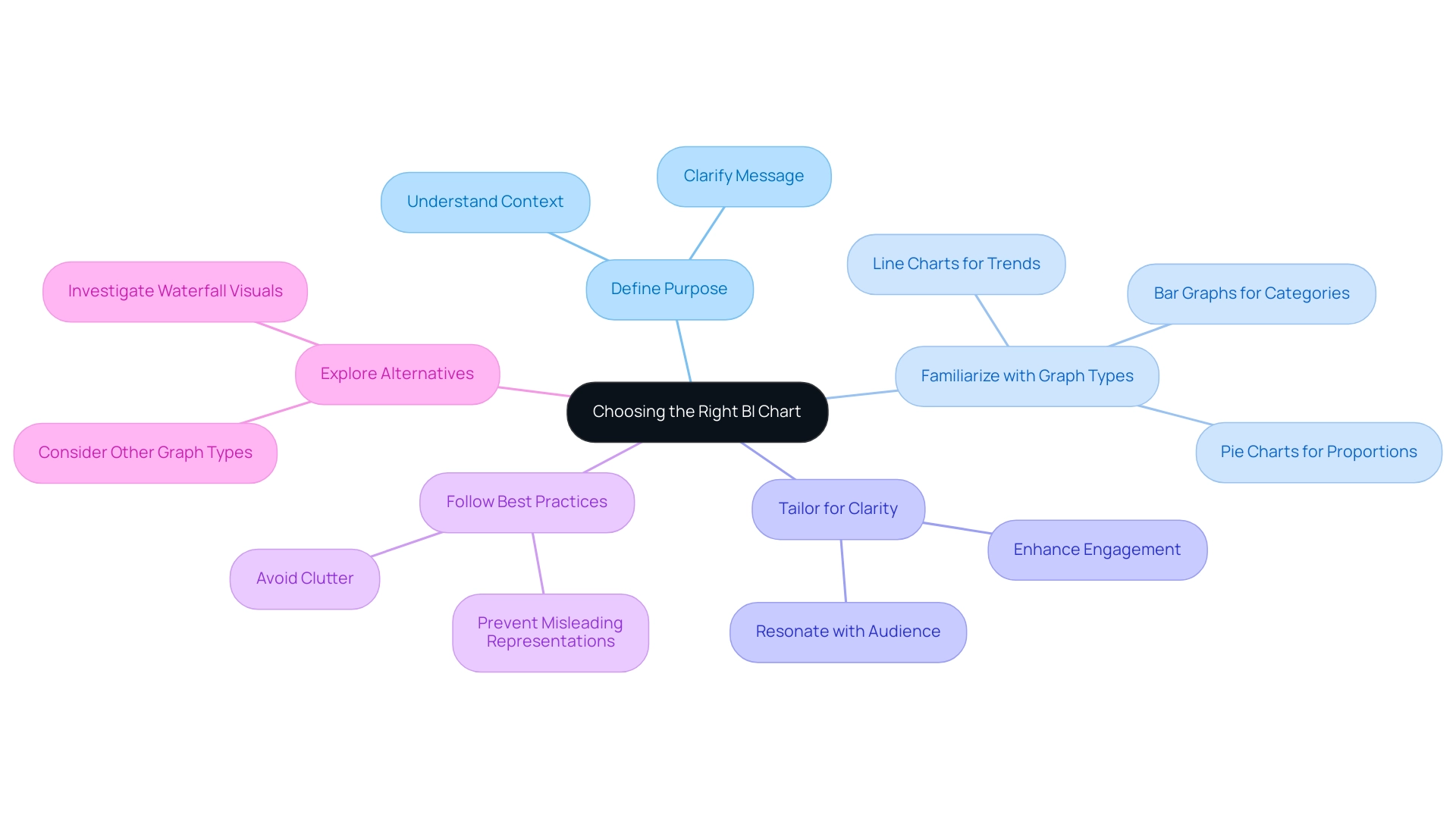

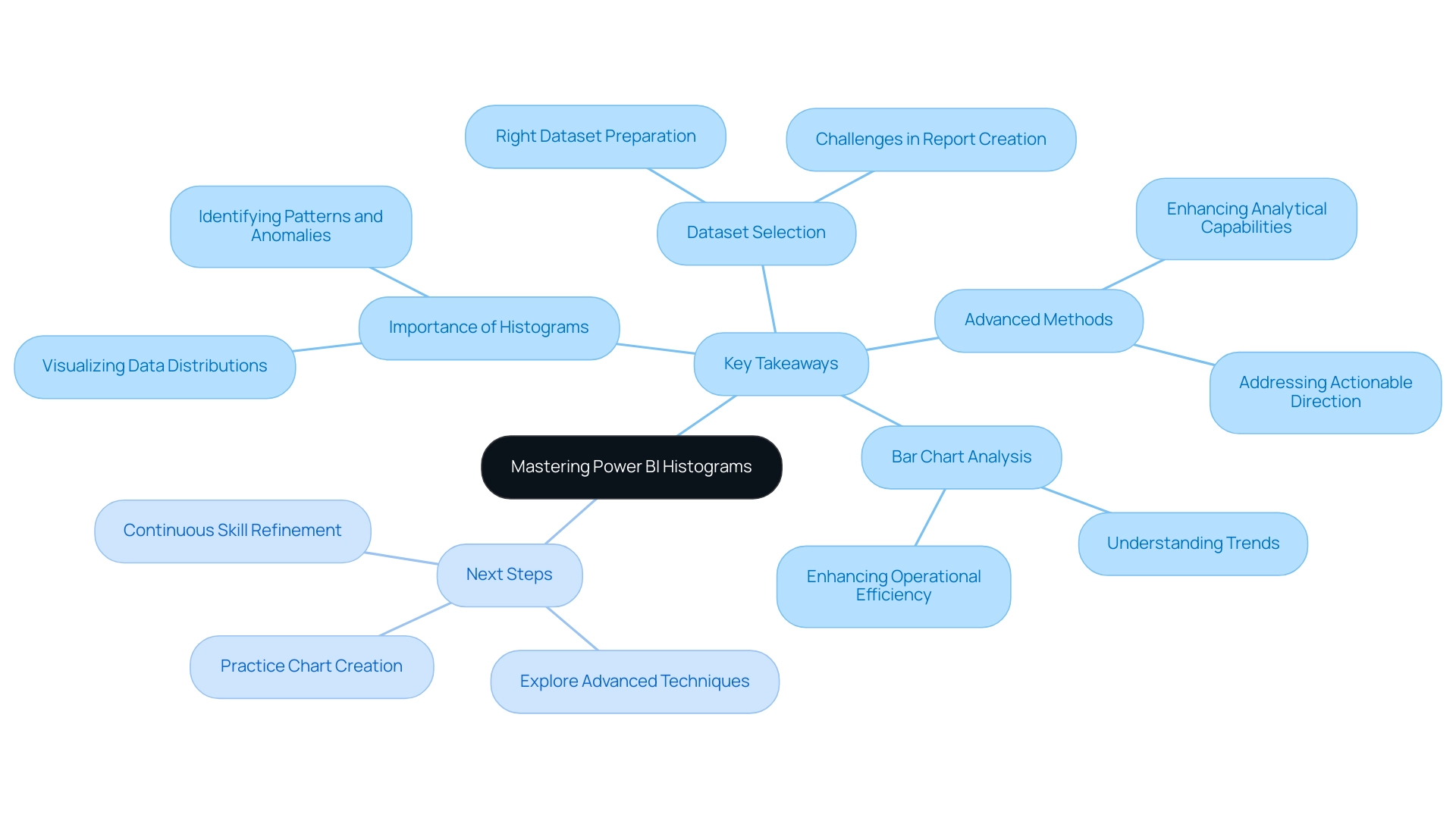

Key Takeaways: Choosing the Right BI Chart for Your Data

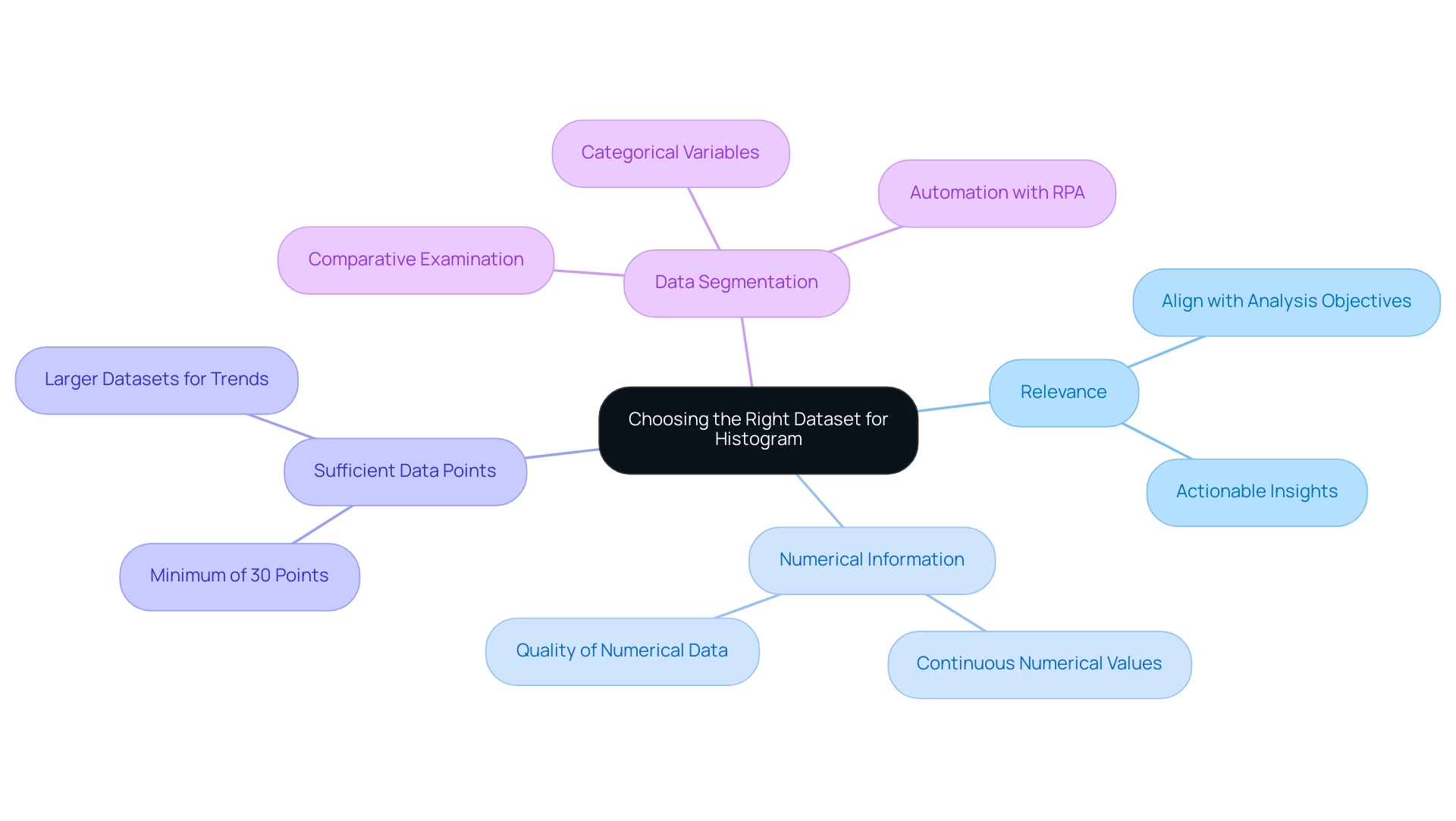

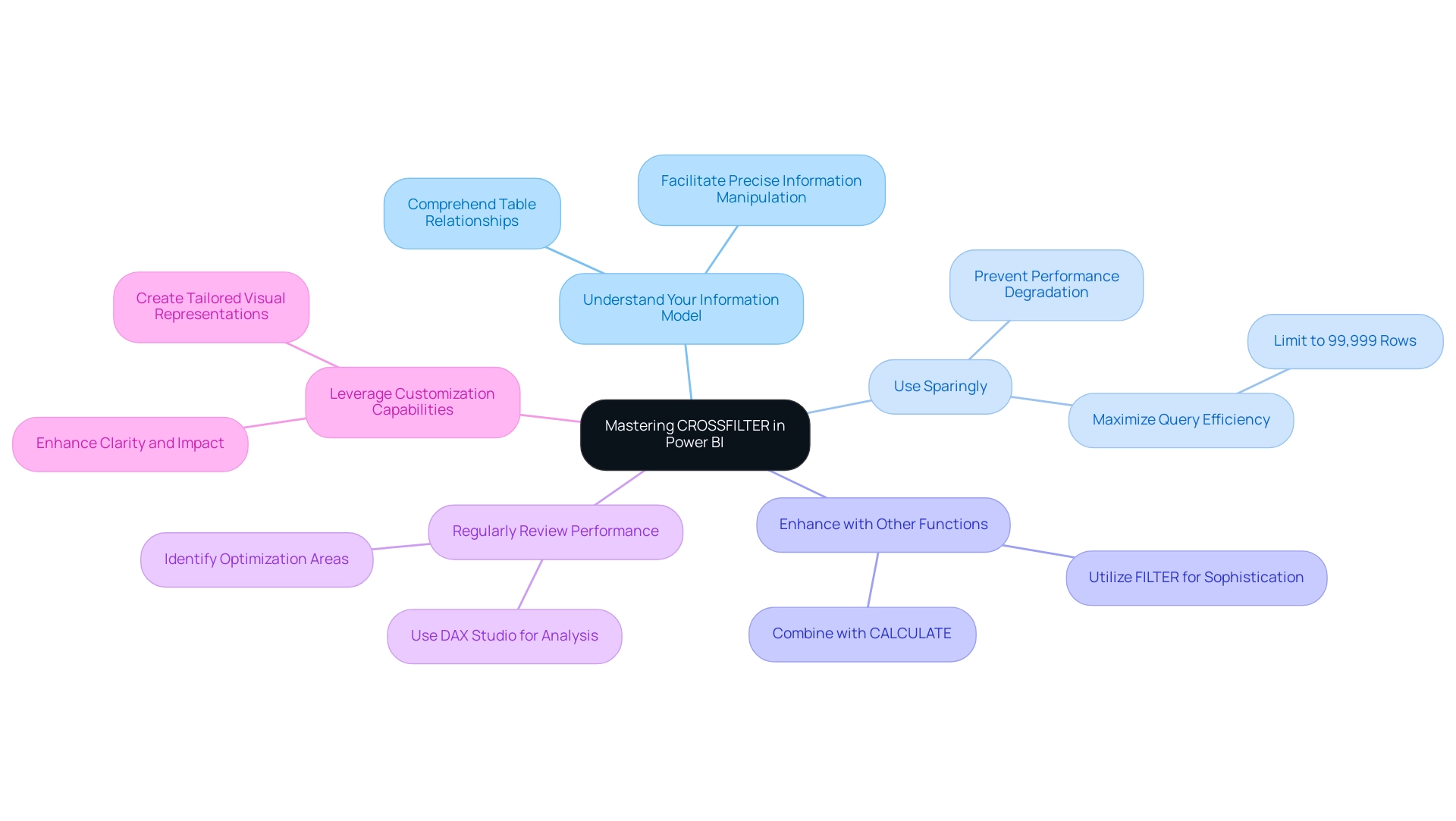

When selecting BI charts for data visualization in Power BI, it is essential to consider the following key takeaways:

- Clearly define the purpose of your data and the specific message you wish to convey. Grasping the context will direct your selection of visuals.

- Familiarize yourself with the different types of graphs available, such as bar graphs, which are especially effective when category labels are long or the information is ordered.

- Tailor your visuals to enhance clarity and engagement, ensuring that they resonate with your audience.

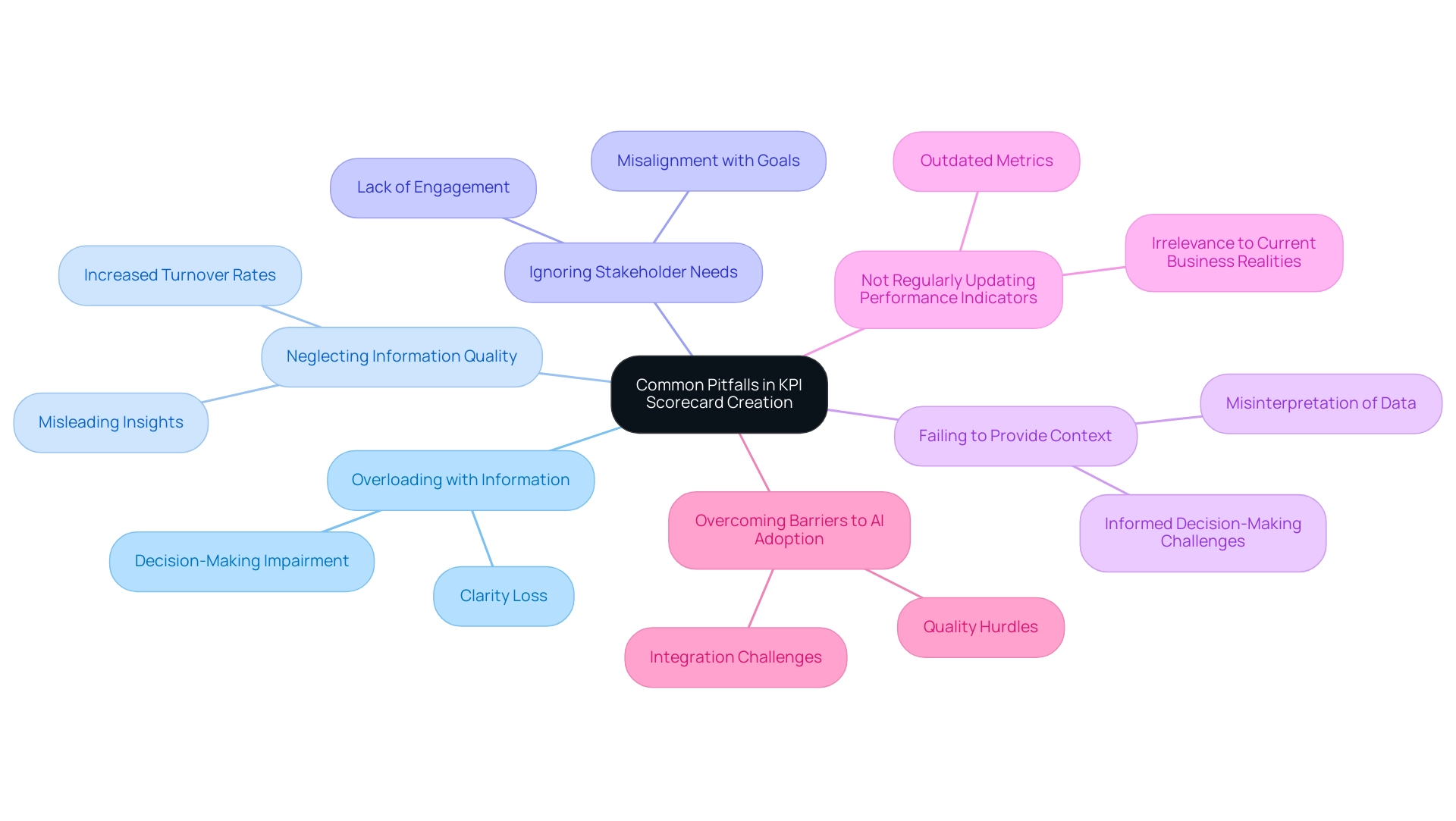

- Follow best practices to avoid common pitfalls in visualization, such as cluttered visuals or misleading representations.

- Investigate options besides waterfall visuals when needed, as other graphical types may offer a more precise representation of your information.
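The takeaways above can be condensed into a simple lookup from analytical goal to a suggested chart type. This is only a rule-of-thumb sketch based on the chart types discussed in this article, not an exhaustive or authoritative mapping; the goal phrases and the function name `suggest_chart` are made up for illustration.

```python
# Rule-of-thumb mapping from what you want to show to a chart type.
CHART_FOR_GOAL = {
    "compare categories": "bar or column chart",
    "show a trend over time": "line chart",
    "show parts of a whole": "pie or donut chart",
    "explain a cumulative change": "waterfall chart",
    "relate two variables": "scatter chart",
    "track stages in a process": "funnel chart",
}


def suggest_chart(goal):
    """Return a suggested chart type for a known goal, or raise ValueError."""
    try:
        return CHART_FOR_GOAL[goal]
    except KeyError:
        known = ", ".join(sorted(CHART_FOR_GOAL))
        raise ValueError(f"Unknown goal {goal!r}; expected one of: {known}") from None


print(suggest_chart("explain a cumulative change"))
```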

By utilizing these principles, users can leverage the power of BI charts to effectively convey insights, ultimately enhancing decision-making processes. For example, organizations that have strategically selected suitable BI charts have reported significant enhancements in their capability to extract actionable insights from their information. With over 1,500,000 Zebra BI users creating insightful reports, the effect of appropriate visual selection on data insights is evident.

As Raimonds Simanovskis noted, “We are launching AI assistants to help our users to make complex things easier,” which underscores the potential of AI tools in simplifying the selection and use of BI visuals. Furthermore, the transformative impact of Creatum GmbH’s Power BI Sprint is highlighted in a recent testimonial from Sascha Rudloff, Team Leader of IT and Process Management at PALFINGER Tail Lifts GMBH, who stated, “The outcomes of the sprint surpassed our expectations and offered a vital enhancement to our analysis strategy.” This reinforces the importance of proper chart selection and the role of tailored AI solutions from Creatum GmbH in driving data-driven insights and operational efficiency.

Embracing these best practices not only streamlines the visualization process but also drives growth and innovation in a data-rich environment.

Conclusion

In the intricate realm of business intelligence, effective data visualization through BI charts is essential for organizations striving to make informed decisions. By transforming complex datasets into intuitive visuals, these charts enhance understanding and unveil actionable insights that propel strategic actions. This article underscores the necessity of familiarizing oneself with various chart types, including waterfall, bar, and line charts, to effectively convey data insights and navigate market complexities.

The practical guidance on creating and customizing waterfall charts demonstrates how organizations can improve clarity and impact in their visualizations. By adhering to best practices—such as limiting data points, maintaining consistency, and providing context—businesses can optimize the effectiveness of their charts, ensuring that stakeholders can readily extract meaning from the data presented.

As reliance on data continues to escalate, so too does the imperative for organizations to adopt tailored BI solutions that align with their distinct objectives. By leveraging appropriate visualization techniques and following best practices, businesses can unlock the full potential of their data, fostering growth and innovation in an ever-evolving landscape. The insights derived from these visualizations not only inform decision-making but also pave the way for a data-driven future.

Frequently Asked Questions

What are Business Intelligence (BI) visuals?

BI visuals are tools that help organizations analyze and interpret complex datasets by transforming unprocessed information into clear visual representations, enabling stakeholders to quickly recognize insights and trends.

How do BI visuals enhance decision-making?

In the context of Power BI, BI visuals improve communication of insights and facilitate rapid, informed decision-making by providing clear visual data representations.

Why are BI graphs significant for organizations?

BI graphs enhance comprehension of data and promote strategic initiatives by allowing companies to forecast future trends using predictive analytics, which helps them anticipate market shifts and mitigate risks.

What is the trend regarding the adoption of BI charts?

There is a growing reliance on BI charts, with 2025 expected to be a pivotal year for their adoption as organizations increasingly recognize the value of visualization.

How does Creatum GmbH contribute to the field of BI?

Creatum GmbH focuses on customized AI solutions that help businesses leverage information effectively, aligning with the increasing demand for tailored BI solutions.

What role does continuous learning play in business intelligence?

Continuous learning, such as participating in initiatives like MDS@Rice, is crucial for individuals to acquire the latest skills and knowledge necessary to thrive in the data-driven landscape.

Can you provide examples of the impact of BI charts on decision-making?

Real-world examples show that organizations using BI charts, particularly in predictive analytics, have reported enhanced clarity in their analyses, leading to more strategic and informed decisions.

What are the primary chart types available in Power BI?

The primary chart types in Power BI include: Bar and Column Charts for comparing categorical data, Line Graphs for illustrating trends over time, Pie and Donut Graphs for displaying proportions of a whole, Waterfall Charts for visualizing the cumulative effects of sequentially introduced values, and Scatter Charts for showing relationships between two variables.

What recent updates have been made to Power BI charts?

In 2025, Power BI introduced enhanced features for various visual types, including popular network diagrams for illustrating complex relationships, emphasizing the importance of clarity in design.

How important is it to select the appropriate graph type?

Selecting the right graph type is crucial as it affects the effectiveness of the information narrative being conveyed, with a strong preference shown for certain representations among surveyed individuals.

What benefits have organizations experienced from using Power BI?

Organizations have reported enhanced operational efficiency and informed decision-making through the use of Power BI, as illustrated by successful case studies and testimonials like that of PALFINGER Tail Lifts GMBH.

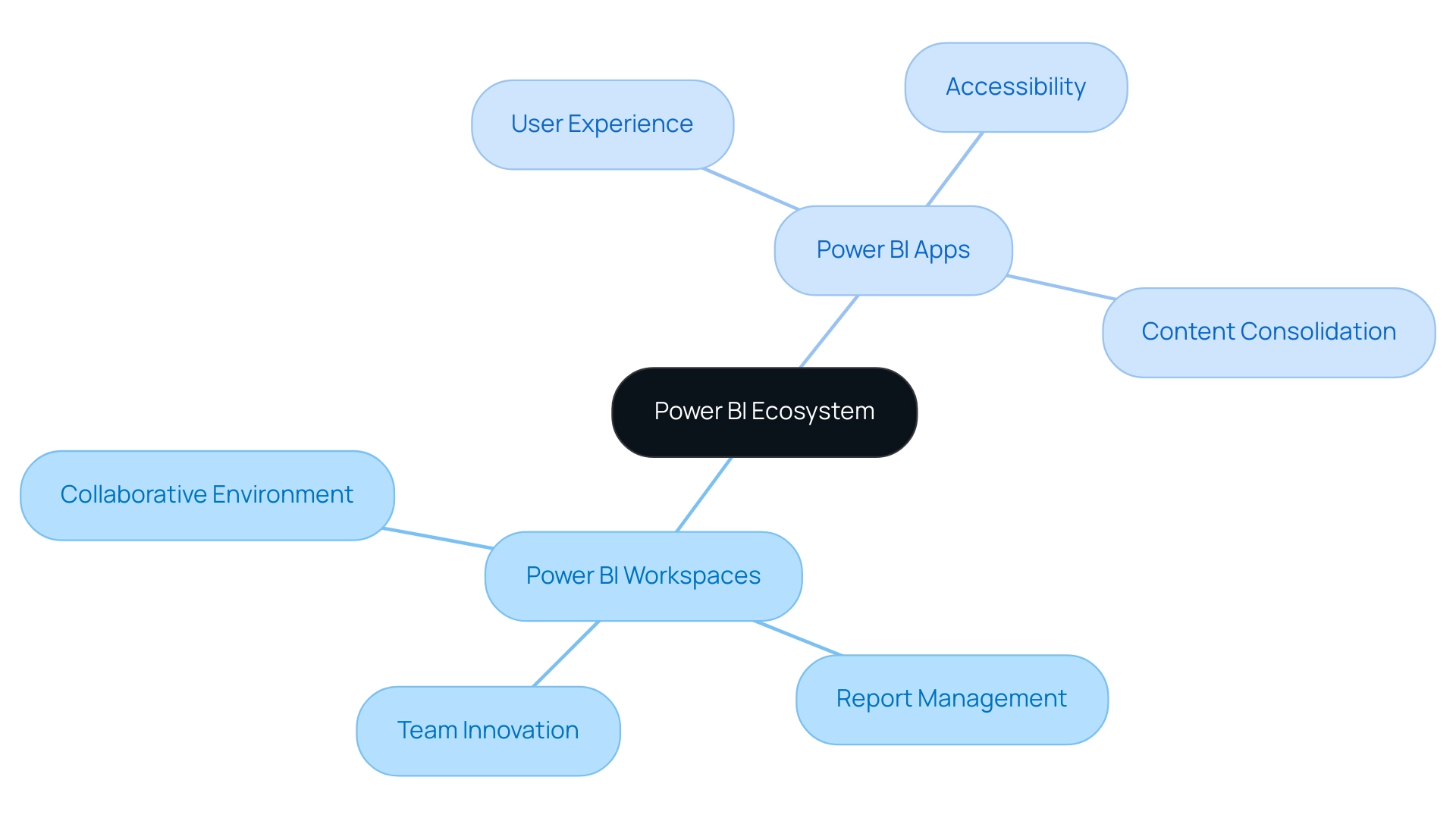

Overview

Power BI apps and workspaces fulfill distinct yet complementary roles within the Business Intelligence ecosystem.

- Apps prioritize user experience and accessibility, streamlining access to curated reports for end-users.

- In contrast, workspaces emphasize collaborative development and content management, facilitating real-time collaboration among developers.

This distinction is crucial, as it highlights how each component enhances operational efficiency and supports data-driven decision-making. Understanding these roles can empower organizations to leverage Power BI more effectively.

Introduction

In the rapidly evolving landscape of data analytics, Power BI emerges as a pivotal tool for organizations aiming to harness data-driven insights. At the core of its functionality are Power BI Apps and Workspaces, each serving a distinct yet complementary role in the data management ecosystem.

- Workspaces act as collaborative hubs where teams can develop and refine reports, fostering innovation and teamwork in data analysis.

- In contrast, Power BI Apps cater to end-users, presenting curated content in an accessible format that enhances user experience and decision-making.

As organizations navigate the complexities of data governance, user engagement, and operational efficiency, understanding the nuances between these two components becomes increasingly vital. This exploration delves into the functionalities, advantages, and practical applications of Power BI Apps and Workspaces, illuminating their integral roles in modern business intelligence strategies.

Understanding Power BI Apps and Workspaces

In the Power BI ecosystem, apps and workspaces play distinct roles, each catering to different user needs. A Power BI workspace is a collaborative environment where teams of developers and analysts create, manage, and share reports and dashboards. It is designed for joint work on models and visualizations, fostering the teamwork and innovation that drive operational efficiency.

Conversely, Power BI apps are tailored for end-users, consolidating related reports and dashboards into a single, user-friendly interface. The distinction matters because the two serve different stages of the content lifecycle: workspaces focus on developing and refining data insights, while apps prioritize experience and accessibility, ensuring stakeholders can easily reach the information they need.

As Santhiya Balachandar notes, “Once your app is ready, you can share it with individuals in multiple ways: automatic installation, direct links, or via the Power BI app marketplace.”

The usage metrics report ranks reports by view count, with the top rank going to the most-viewed content. This ranking helps organizations see which reports hold the most value for their users. However, the usage metrics report is unsupported in My Workspace and has specific limitations on data collection, which can affect how organizations track engagement and address challenges related to data inconsistency.
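The ranking idea can be illustrated with a minimal sketch. It does not call any Power BI API; the report names and the `view_events` list are hypothetical sample data, and `rank_by_views` is an illustrative helper rather than anything the usage metrics report exposes.

```python
from collections import Counter

# Hypothetical sample data: one entry per recorded report view.
view_events = ["Sales", "Inventory", "Sales", "Finance", "Sales", "Inventory"]


def rank_by_views(events):
    """Return (rank, report, views) tuples, most-viewed report first."""
    counts = Counter(events)
    # Sort by descending view count, then by name so ties are deterministic.
    ordered = sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    return [(rank, name, views)
            for rank, (name, views) in enumerate(ordered, start=1)]


ranking = rank_by_views(view_events)
for rank, name, views in ranking:
    print(f"{rank}. {name}: {views} views")
```

Breaking ties by name is a choice made here for reproducibility; the source does not say how the real report orders reports with equal view counts.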

A case study on the application of BI tools demonstrates their effectiveness in distributing reports and dashboards to a wide audience within organizations. By ensuring that only necessary individuals have access to build and edit content, this method isolates production-ready material and delivers a read-only version to end recipients. The outcome of utilizing Business Intelligence tools has been a simplified access to business insights, enabling users to engage with the most recent information while facilitating secure information sharing and enhancing decision-making through a unified platform for analysis and collaboration.

As organizations continue to explore the capabilities of Power BI, understanding the distinctions between apps and workspaces becomes increasingly significant. Recent updates to workspace features further enhance their functionality, making them indispensable for teams aiming to optimize their content management processes. Users have also recently asked how to automatically refresh data in a Power BI report connected to a Fabric Lakehouse, underscoring the ongoing evolution and importance of these tools in contemporary information management.

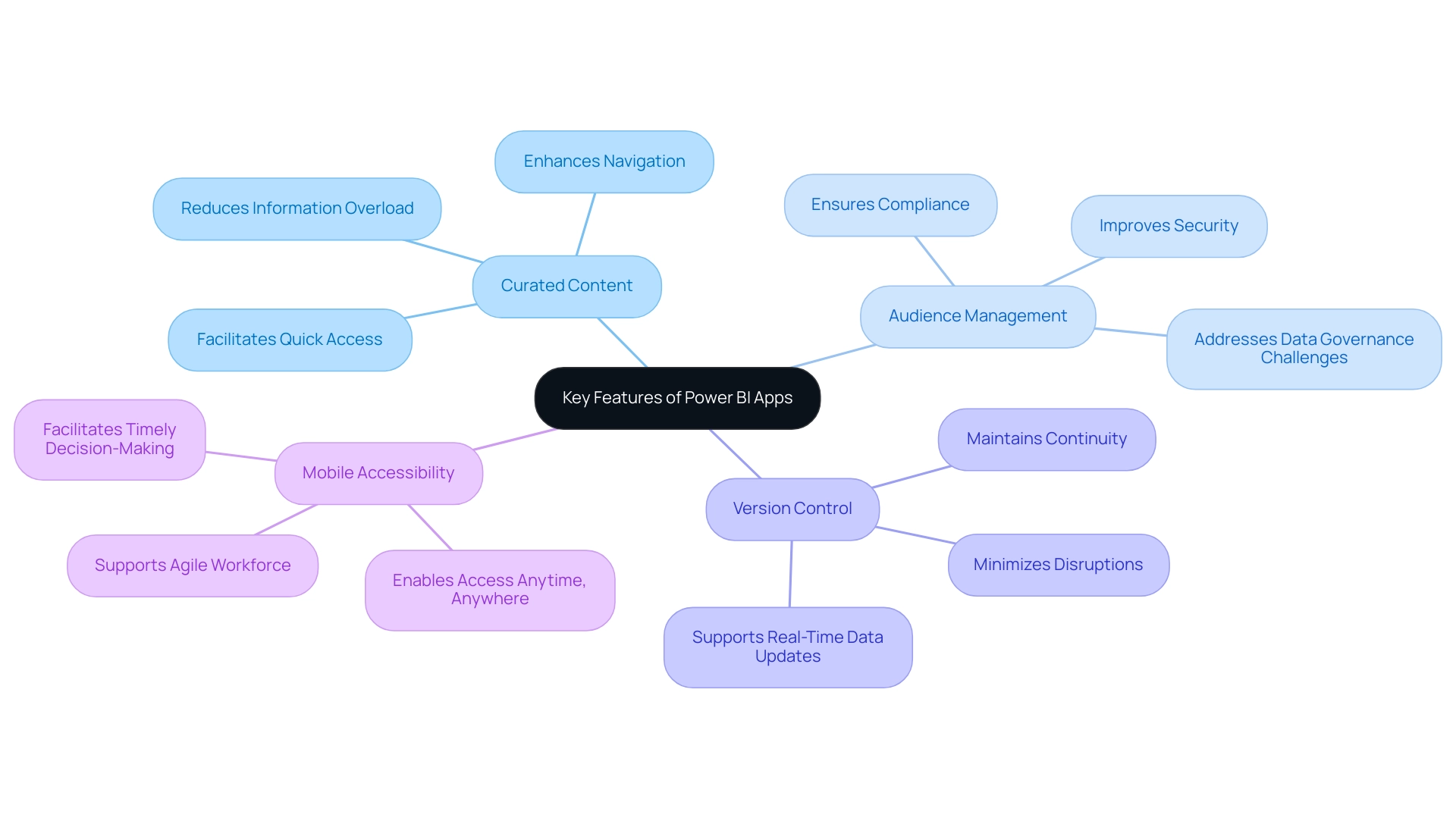

Key Features of Power BI Apps

Power BI apps are designed to enhance user experience and improve information accessibility through several key features:

- Curated Content: Apps give users a well-structured collection of related reports and dashboards in one centralized location. This simplifies navigation and speeds access to essential information, which is particularly valuable where information overload can hinder decision-making.

- Audience Management: Administrators can tailor content visibility based on user roles, so that sensitive information is accessible only to authorized personnel. This bolsters security and regulatory compliance and helps address data inconsistency and governance challenges in business reporting.

- Version Control: Apps support a controlled change process, allowing developers to update reports in the workspace without immediately affecting the end-user experience in the app. This is vital for maintaining continuity and minimizing disruption during updates, especially for organizations reliant on real-time data.

- Mobile Accessibility: Apps are optimized for mobile devices, enabling users to access insights anytime and anywhere. This flexibility supports a more agile workforce and timely decision-making even when users are away from their desks.
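Audience management can be pictured as a mapping from audiences to members and visible content. The sketch below is a plain-Python model, not Power BI code: the audience names, user names, and report titles are hypothetical, and it assumes that a user who belongs to several audiences sees the union of their content.

```python
# Hypothetical audiences: each lists its members and the content it exposes.
audiences = {
    "Executives": {
        "members": {"ana", "raj"},
        "content": {"Revenue Summary", "Forecast"},
    },
    "Sales Team": {
        "members": {"raj", "li"},
        "content": {"Pipeline", "Revenue Summary"},
    },
}


def visible_content(user, audiences):
    """Return the sorted union of content from every audience the user is in."""
    visible = set()
    for audience in audiences.values():
        if user in audience["members"]:
            visible |= audience["content"]
    return sorted(visible)


for user in ("ana", "raj", "li", "sam"):
    print(user, visible_content(user, audiences))
```

A user who is in no audience, such as `sam` here, gets an empty list, which mirrors the point that content stays hidden from anyone not explicitly granted access.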

Collectively, these features enhance the usability and effectiveness of Power BI apps as a business intelligence tool. Organizations using Power BI apps have reported significant improvements in user experience, with studies indicating that tailored content and mobile accessibility can lead to a 30% increase in user engagement.

As industry experts have noted, the ability to curate content effectively is transformative for data analysts. Curated content not only simplifies the user experience but also fosters better insights and decision-making. Daryl Plummer emphasizes that AI is part of a broader disruption that will compel organizations to reassess their strategies and innovations, aligning with the evolving capabilities of BI applications.

Furthermore, according to Forrester, End User Computing (EUC) technologies will be crucial for organizations in 2023 to sustain productivity and mitigate risks, underscoring the significance of BI solutions in today’s data-driven landscape. Additionally, Creatum GmbH’s extensive services aimed at enhancing customer experience (CX) performance for financial institutions exemplify the practical application of Business Intelligence solutions, demonstrating how customized approaches can lead to substantial and lasting transformation. With anticipated updates in 2025, BI apps are set to introduce even more sophisticated audience management features, further solidifying their role as essential tools for organizations striving to enhance their information strategies.

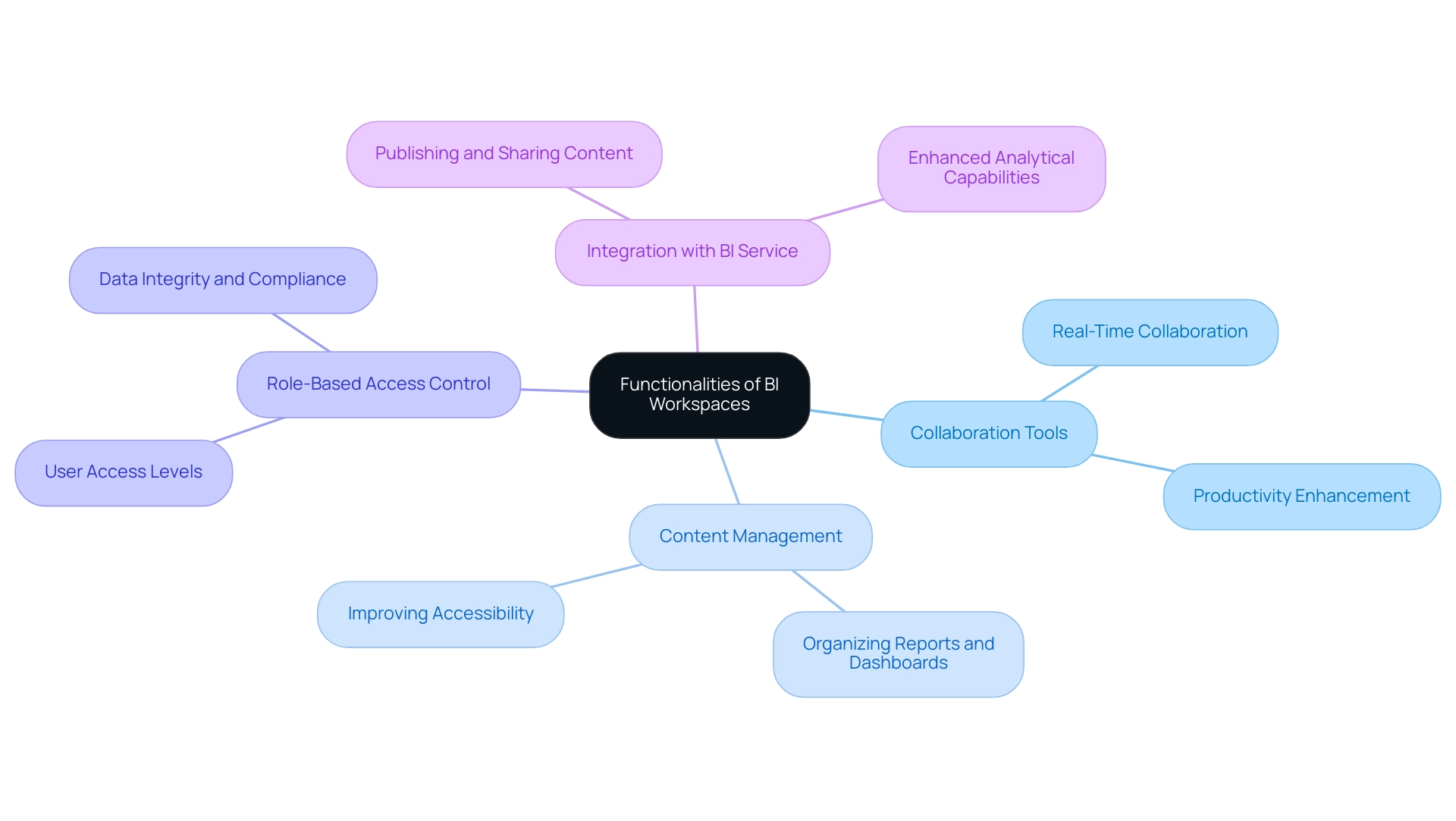

Exploring the Functionalities of Workspaces

Whereas apps serve the consumers of content, workspaces are where collaborative data analysis and report creation take place. Their key functionalities include:

- Collaboration Tools: Workspaces let multiple users collaborate in real time, sharing insights and feedback, which can significantly enhance productivity and decision-making. As Tomas Kutac noted, “One of the biggest mistakes teams make is relying solely on BI Desktop.” While it’s effective for building reports, it’s not designed for real-time teamwork.

- Content Management: Users can systematically organize reports, dashboards, and datasets, streamlining the management of large volumes of content. This structured approach improves accessibility and makes information handling more efficient.

- Role-Based Access Control: Administrators can assign varying access levels to users, ensuring that sensitive information remains protected while still promoting collaboration. This security measure is vital for maintaining data integrity and compliance, particularly in regulated environments.

- Integration with the Power BI Service: Workspaces are linked directly to the Power BI service, which makes publishing and sharing content straightforward. This integration lets teams use the full capabilities of Power BI and supports the data-driven insights needed for informed decision-making.

Recent enhancements to Power BI workspaces have further improved these functionalities in response to user feedback and evolving collaboration needs. For instance, one user's request to create a metrics report covering the reports within a single workspace garnered 9,990 views, highlighting the demand for comprehensive analytics tools. Note, however, that duplicate reports may appear in usage metrics when reports are deleted and recreated or when they are included in an app.

A case study involving national and regional clouds revealed that usage metrics are not available in these environments, ensuring compliance with local regulations while maintaining security and privacy. This underscores the importance of understanding the operational landscape when utilizing BI Workspaces, particularly regarding compliance and security.

Additionally, to refresh the usage metrics report, users must authenticate to enable backend API calls for tenant telemetry, providing a more comprehensive understanding of the functionalities and requirements of BI Workspaces.

In summary, the functionalities of BI Workspaces are essential for teams aiming to enhance their analysis capabilities, driving efficiency and collaboration in a rich environment. Our BI services at Creatum GmbH, including the 3-Day BI Sprint for swift report creation and the General Management App for thorough management, are designed to address inconsistencies and governance challenges, ultimately supporting business growth and innovation.

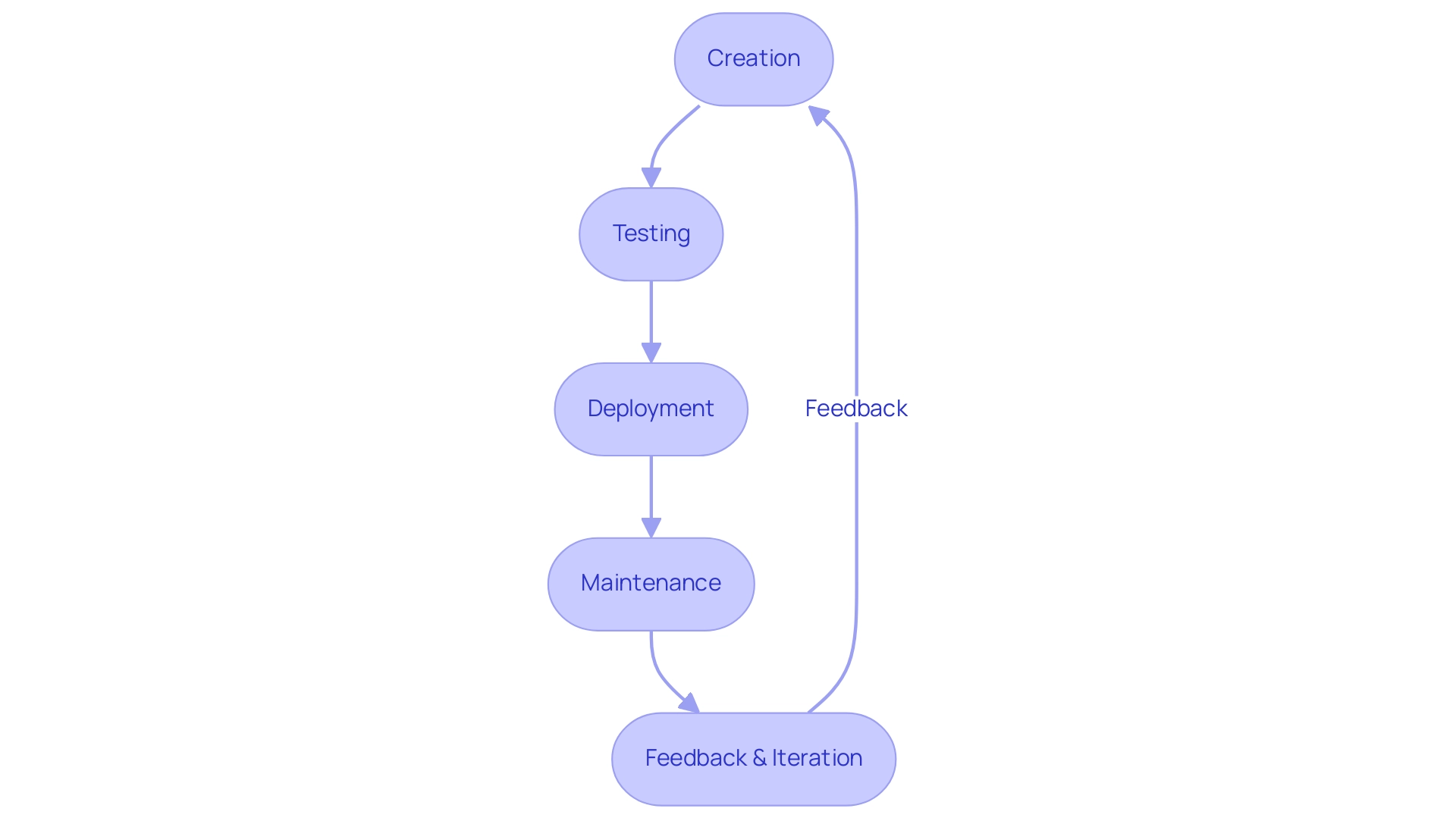

The Power BI App Lifecycle: From Creation to Maintenance

The lifecycle of a Power BI app follows a structured process with several critical stages:

- Creation: Within workspaces, developers start by designing and publishing reports and dashboards tailored to user needs. This stage lays the groundwork for effective data-driven insights. However, challenges such as time-consuming report creation and data inconsistencies can impede it.

- Testing: Before deployment, apps undergo rigorous testing to validate functionality and ensure a good user experience. This phase helps identify issues that could obstruct user engagement and the extraction of actionable insights.

- Deployment: After successful testing, apps are published, giving end-users access to curated content that supports their decision-making. This access enables organizations to turn raw information into actionable insights that drive growth and innovation.

- Maintenance: Ongoing maintenance keeps apps relevant and effective. It includes regular data refreshes, report updates, and access management, which together sustain user satisfaction and engagement. Notably, organizations report an average of 3,989 views on posts encouraging interaction, underscoring the importance of active engagement in this phase, particularly for gathering feedback for future iterations.

- Feedback and Iteration: Continuous improvement relies on collecting feedback from users so that organizations can adjust their apps to evolving business needs. This iterative process is supported by step-by-step methods in the Admin portal for accessing usage metrics across workspaces, so that user insights directly inform future updates.
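The stages above form a loop, since feedback leads back into new development work. A minimal sketch of that loop follows; the `Stage` enum and `next_stage` helper are hypothetical names introduced purely for illustration and do not correspond to any Power BI feature.

```python
from enum import Enum


class Stage(Enum):
    """Lifecycle stages, in the order listed above."""
    CREATION = "Creation"
    TESTING = "Testing"
    DEPLOYMENT = "Deployment"
    MAINTENANCE = "Maintenance"
    FEEDBACK = "Feedback and Iteration"


# Enum members iterate in definition order.
_ORDER = list(Stage)


def next_stage(stage):
    """Return the stage that follows; feedback wraps around to creation."""
    index = _ORDER.index(stage)
    return _ORDER[(index + 1) % len(_ORDER)]


stage = Stage.CREATION
for _ in range(len(_ORDER)):
    following = next_stage(stage)
    print(f"{stage.value} -> {following.value}")
    stage = following
```

Modeling the wrap-around explicitly makes the iterative nature visible: an app is never finished after deployment but returns to creation as feedback arrives.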

Furthermore, case studies on Content Lifecycle Management Approaches illustrate how various strategies can be employed based on team size and project scope, ranging from simpler self-service content publishing for smaller teams to advanced enterprise solutions for larger organizations. As the landscape of BI Applications evolves, staying attuned to feedback and lifecycle management trends is crucial for enhancing the efficiency of these tools. As vanessafvg, a Super User, emphasizes, “If I took the time to answer your question and I came up with a solution, please mark my post as a solution and/or give kudos freely for the effort.”

This underscores the significance of contributions in the app development process. Additionally, tracking user activities in BI is essential for ongoing maintenance and improvement, ensuring that organizations can respond effectively to user needs. Moreover, integrating RPA solutions from Creatum GmbH can further enhance operational efficiency by automating repetitive tasks, thereby allowing teams to concentrate on strategic initiatives.

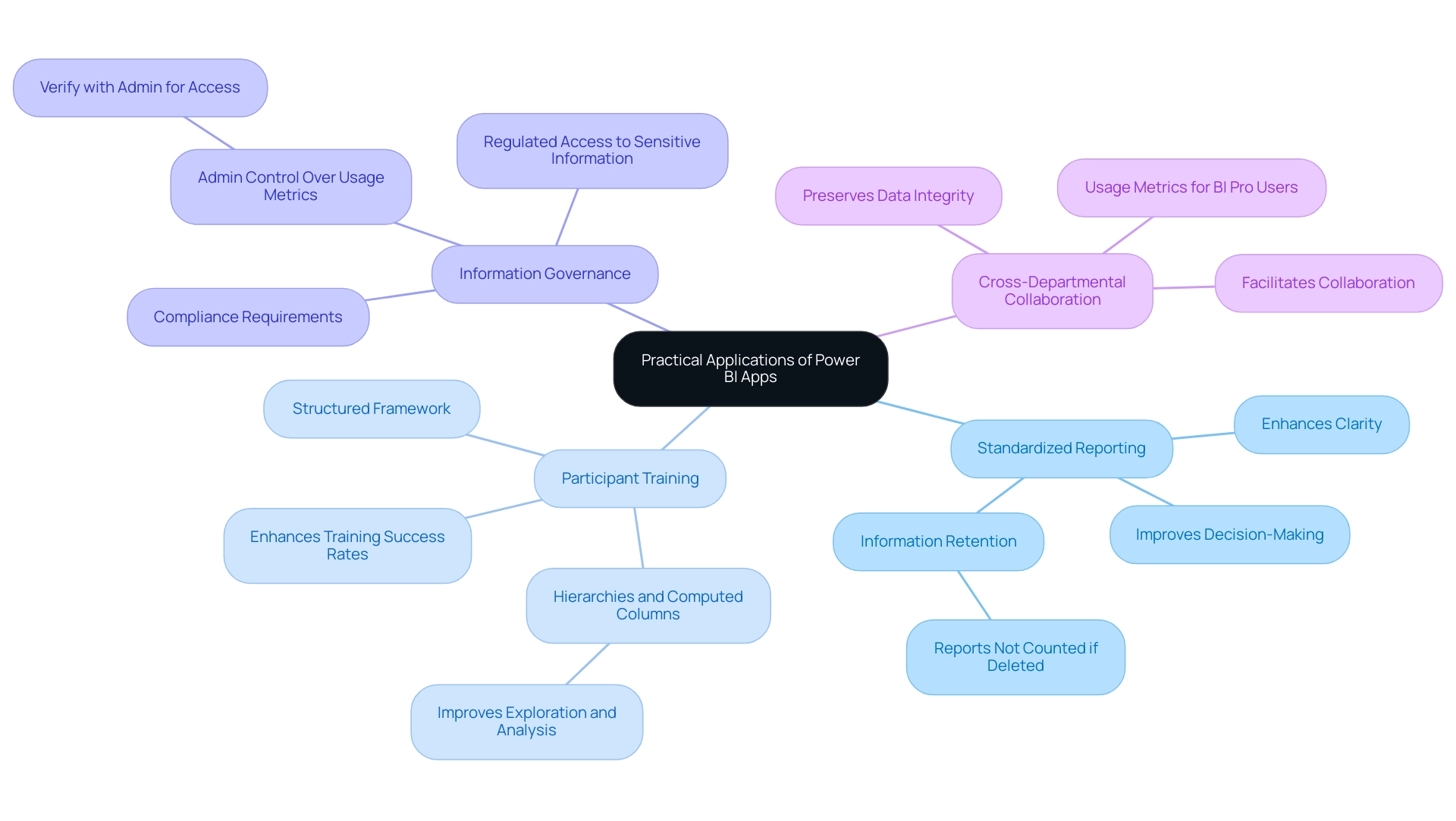

When to Use Power BI Apps: Practical Applications

Power BI apps are a valuable resource across various organizational contexts, particularly in the following domains:

- Standardized Reporting: Organizations that need to distribute consistent reports across multiple departments find Power BI apps an effective solution. Standardized reporting improves clarity and keeps stakeholders aligned on the same insights, ultimately improving decision-making. Notably, reports that are removed after being viewed are not counted by the admin APIs, which underscores the importance of information retention and management. This matters in information-rich environments where organizations often grapple with time-consuming report creation and inconsistencies.

- User Training: New users frequently struggle to navigate complex data environments. Power BI apps provide a structured framework that streamlines access to resources, easing learning and adaptation and helping build a more competent workforce. Users can also define hierarchies and calculated columns in Power BI, which improves exploration and analysis and helps address the lack of actionable guidance that many organizations face.

- Information Governance: Where privacy and compliance are paramount, Power BI apps enable regulated access to sensitive information. Organizations with strict compliance requirements can use apps to ensure that only authorized personnel can access essential data, strengthening governance and security. The case study on admin control over usage metrics illustrates how administrative settings can affect access to features: users who cannot run usage metrics should check with their admin.

- Cross-Departmental Collaboration: When several teams need the same data insights, Power BI apps support collaboration while preserving data integrity. This is crucial for organizations that depend on cross-functional teams, as it ensures that everyone works from the same accurate information. In addition, usage metrics reports are available only to Power BI Pro users, a significant consideration for organizations planning to adopt Power BI apps.

These practical applications underscore the strategic importance of Power BI apps in business intelligence initiatives. As organizations increasingly adopt standardized reporting practices, the effectiveness of apps in streamlining these processes becomes even more evident, with studies indicating that standardized reporting can lead to improved operational efficiency and reduced errors. Additionally, it was revealed at the 2021 Microsoft Business Application Summit that 97% of Fortune 500 companies now use Power BI, highlighting its widespread adoption and relevance. Looking ahead to 2025, the integration of Power BI apps into daily operations is expected to evolve, offering even more comprehensive solutions for data management and reporting.

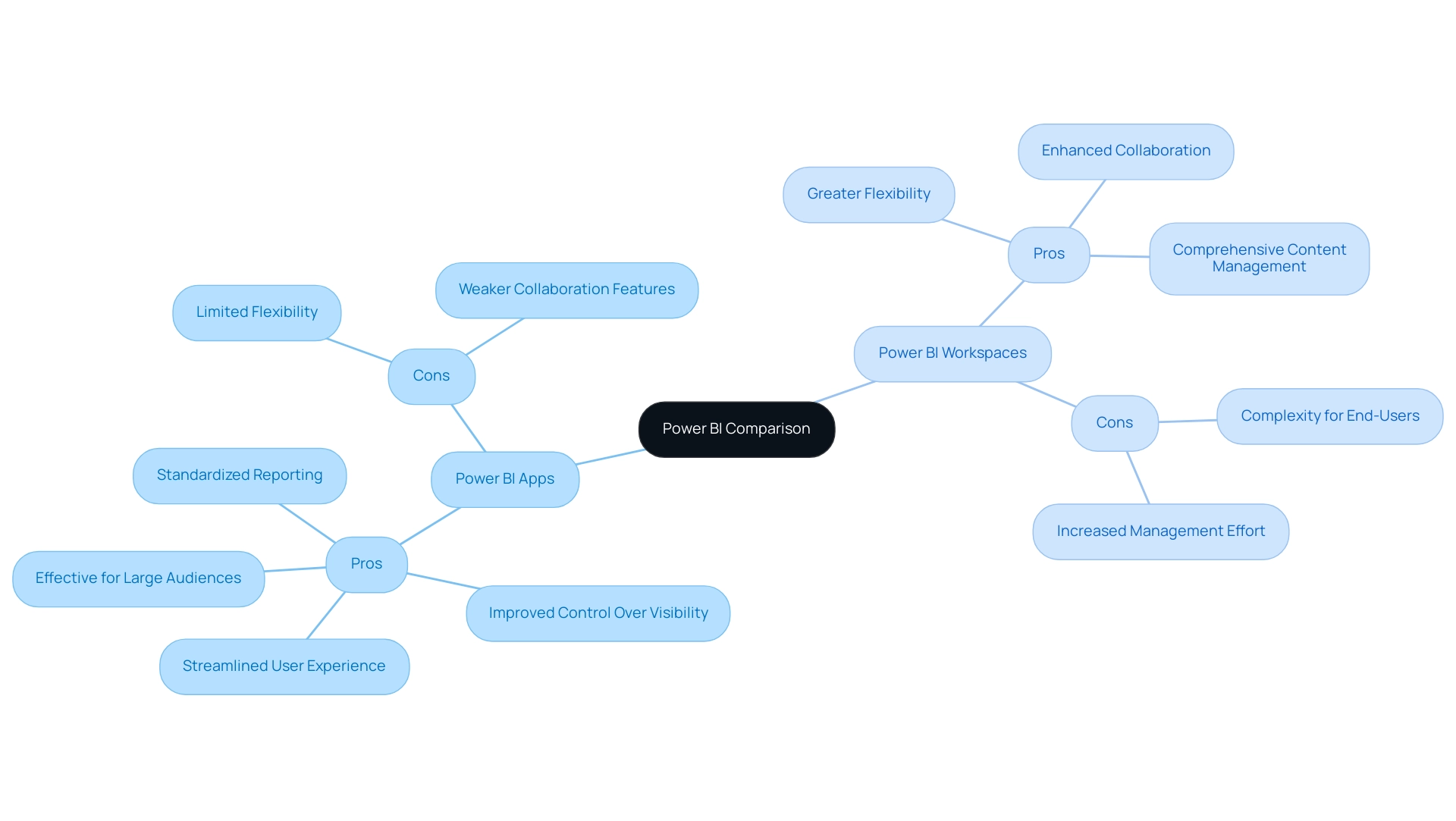

Pros and Cons: Power BI Apps vs Workspaces

In evaluating Power BI Apps versus Workspaces, distinct advantages and disadvantages emerge, particularly within the context of today’s complex AI landscape that businesses navigate:

Power BI Apps present several pros:

- They offer a streamlined user experience with curated content, enabling users to access relevant information swiftly.

- Improved control over information visibility and access ensures sensitive details are shared appropriately.

- They are particularly effective for standardized reporting across various departments, fostering consistency in data presentation.

- Case studies indicate that Power BI apps excel at packaging reports for large audiences, enhancing organization and distribution.

However, Power BI Apps also have their cons:

- Developers face limited flexibility compared to Workspaces, which may restrict customization options for advanced users.

- Collaboration features may not match the robustness found in Workspaces, potentially hindering teamwork on data projects.

On the other hand, Power BI Workspaces offer notable advantages:

- They provide greater flexibility for developers to create and manage content, allowing tailored solutions that meet specific organizational needs.

- Enhanced collaboration features facilitate teamwork, making it easier for groups to collaborate on projects and share insights.

- Comprehensive content management capabilities support effective governance and organization of BI assets.

Yet, there are drawbacks to consider:

- The complexity of Workspaces can overwhelm end-users, leading to confusion and underutilization of features.

- They require more management effort to ensure information governance, which can strain resources if not adequately addressed.

When it comes to performance, typical report opening times vary by consumption method and browser type, providing a quantitative measure of the efficiency differences between the two tools. This comparison empowers organizations to discern which tool aligns more closely with their operational objectives, especially when considering the latest user satisfaction ratings and case studies that illustrate the effectiveness of BI Apps in packaging reports for extensive user groups. As Data Analyst Santhiya Balachandar states, “I am passionate about transforming information into actionable insights,” underscoring the importance of effective management tools in enhancing operational efficiency.

Moreover, the transformative impact of Creatum’s BI Sprint has been significant; as noted by Sascha Rudloff, Team Leader of IT and Process Management at PALFINGER Tail Lifts GMBH, “The outcomes of the Sprint surpassed our expectations and were a vital catalyst for our analytics strategy.” By understanding these dynamics and leveraging Creatum’s customized AI solutions, businesses can navigate the intricate AI landscape and make informed decisions that enhance their reporting capabilities and overall management strategies.

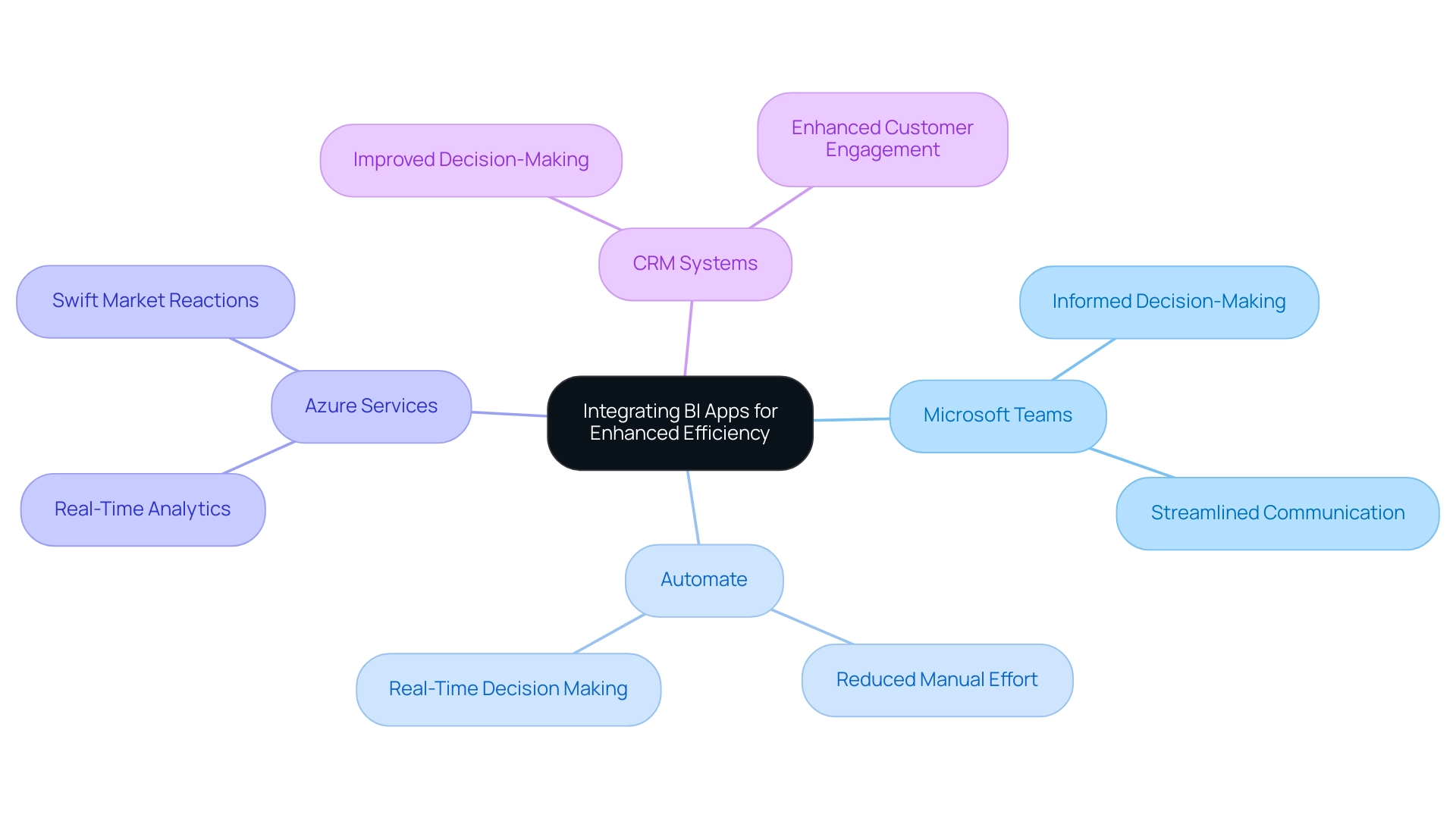

Integrating Power BI Apps with Other Tools for Enhanced Efficiency

Integrating Power BI apps with other tools can significantly enhance operational efficiency across business functions. Consider the following integrations:

- Microsoft Teams: With Power BI apps embedded in Teams, users reach essential insights directly within their collaboration platform. This streamlines communication and keeps insights available where teams already work. However, it is important to consider limitations such as feature disparities and permissions management, as highlighted in the case study titled “Considerations for BI in Teams.”

- Power Automate: Automating workflows between Power BI and other applications lets organizations streamline data processes and reduce manual effort. This integration lowers the risk of errors and speeds the flow of information, so teams can focus on strategic initiatives rather than repetitive tasks. Notably, Power BI navigation history is saved approximately every 15 seconds, supporting real-time decision-making. This aligns with our 3-Day BI Sprint, where we assist you in creating fully functional reports that can be integrated seamlessly into your workflows.

- Azure Services: Using Azure for data storage and processing enhances the performance of Power BI apps and enables real-time analytics. This allows businesses to react swiftly to changing market conditions and make data-driven decisions with confidence, further supported by our extensive BI services that include custom dashboards and advanced analytics.

- CRM Systems: Integrating Power BI apps with CRM systems helps organizations visualize customer information effectively. This integration improves decision-making and enhances customer engagement by providing insights that drive personalized interactions.

These integrations underscore the adaptability of Power BI apps in strengthening overall business intelligence strategies. As organizations increasingly rely on data to guide their operations, the ability to link Power BI with tools such as Microsoft Teams and Power Automate becomes essential for improving efficiency and achieving operational excellence. By leveraging our tailored AI solutions and RPA capabilities, businesses can boost productivity and drive growth through informed decision-making.

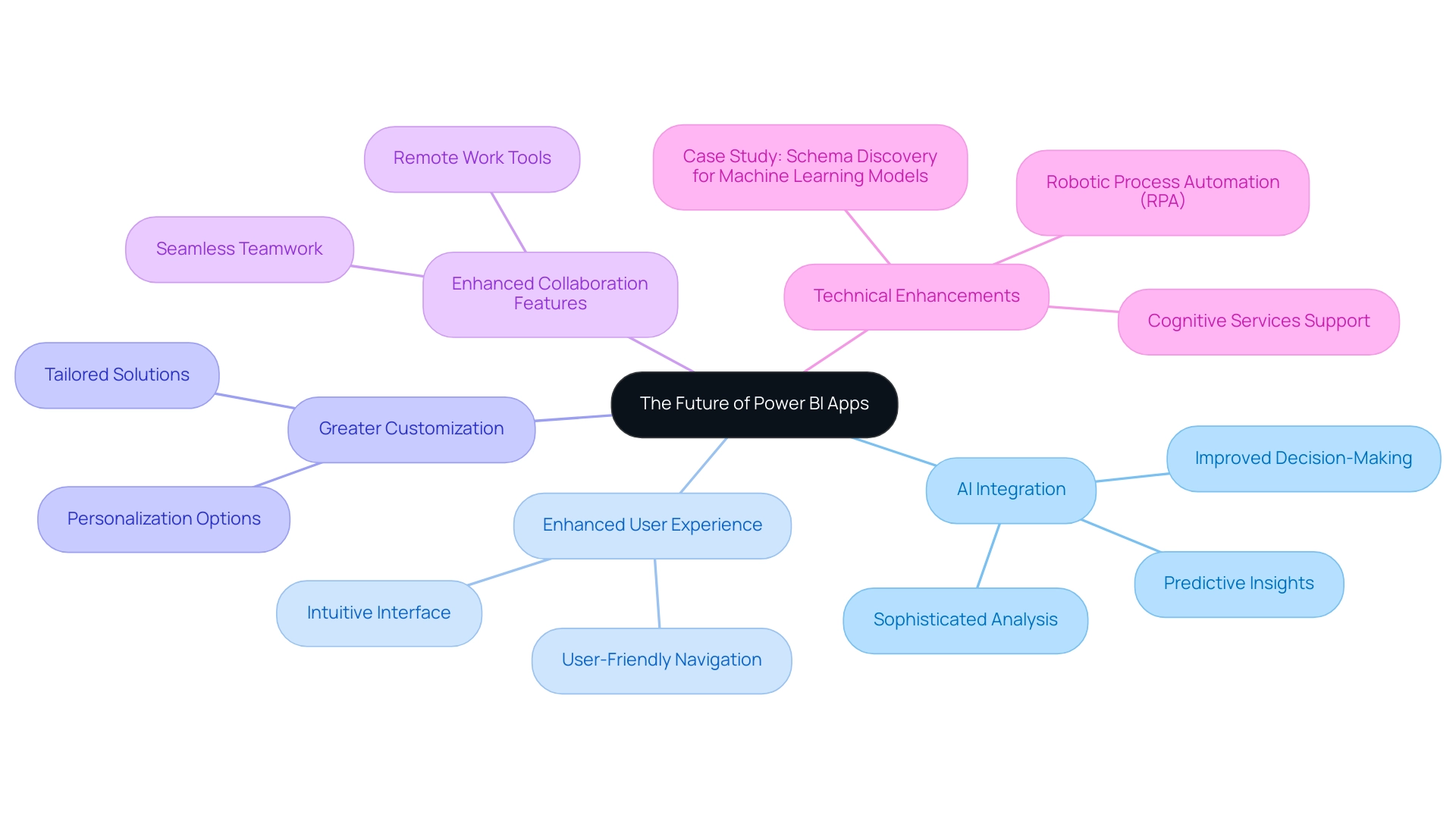

The Future of Power BI Apps: Trends and Innovations

The future of Power BI apps is poised for advancements that will redefine their role in business intelligence. Key trends include:

- AI Integration: Advanced AI capabilities will give users sophisticated analysis and predictive insights, making complex information accessible to all stakeholders. This integration is expected to substantially improve decision-making by simplifying intricate analysis.

- Enhanced User Experience: Ongoing updates will focus on user-friendly navigation and an intuitive interface, ensuring that users can easily access and understand information.

- Greater Customization: Future iterations of Power BI apps are likely to provide improved personalization options, enabling organizations to adapt apps to specific operational requirements and preferences. This aligns with the organization’s distinct value in offering tailored solutions that enhance information quality and simplify AI implementation.

- Enhanced Collaboration Features: With the rise of remote work, Power BI apps will likely introduce more collaborative tools, facilitating seamless teamwork and communication across distances.

Additionally, Cognitive Services are supported for Premium capacity nodes EM2, A2, or P1, further enhancing the technical capabilities of Power BI Apps. Our customized AI solutions, including Small Language Models and GenAI Workshops, will assist organizations in enhancing information quality and streamlining AI implementation.

Furthermore, leveraging Robotic Process Automation (RPA) can streamline manual workflows, boosting efficiency and freeing up teams for more strategic tasks. A relevant case study titled “Schema Discovery for Machine Learning Models” illustrates how data scientists use Python to develop and deploy Machine Learning models, requiring explicit schema generation for web services. Additionally, Creatum GmbH offers a 3-Day BI Sprint for quickly creating professionally designed reports and a General Management App for comprehensive management and smart reviews.

These trends collectively signal a promising future for Power BI apps and workspaces, solidifying their position as essential components of effective business intelligence strategies.

Conclusion

Power BI Apps and Workspaces are pivotal in shaping effective data management strategies within organizations. Power BI Workspaces act as collaborative environments, empowering teams to innovate and refine their data reports, thereby enhancing teamwork and operational efficiency. In contrast, Power BI Apps focus on delivering curated content to end-users, ensuring quick and easy access to essential data insights for informed decision-making.

The distinct functionalities of these components underscore their significance in the data analytics landscape. Workspaces bolster collaborative efforts and streamline content management, while Apps prioritize user experience and security, enabling organizations to maintain control over sensitive information. This complementary relationship cultivates a robust environment where data-driven insights can flourish, ultimately driving growth and innovation.

As organizations adapt to the complexities of modern data management, grasping the unique advantages and practical applications of Power BI Apps and Workspaces is crucial. By effectively leveraging these tools, businesses can navigate challenges related to data governance, user engagement, and operational efficiency, positioning themselves for success in the increasingly competitive realm of business intelligence. Embracing the full potential of Power BI not only enhances reporting capabilities but also empowers teams to make strategic decisions that foster sustained organizational growth.

Frequently Asked Questions

What is the primary purpose of a BI Workspace in the Power BI ecosystem?

A BI Workspace serves as a collaborative environment for teams of developers and analysts to create, manage, and share reports and dashboards, fostering teamwork and innovation in analysis.

How do Power BI apps differ from workspaces?

Power BI apps are tailored for end-users and consolidate related reports and dashboards into a user-friendly interface, prioritizing experience and accessibility, while workspaces focus on the development and enhancement of data insights.

What are the ways to share a Power BI app once it is ready?

A Power BI app can be shared through automatic installation, direct links, or via the Power BI app marketplace.

What is the significance of usage metrics reports in Power BI?

Usage metrics reports rank reports based on view count, helping organizations identify which reports are most valuable to users. However, it is important to note that these reports are unsupported in My Workspace and have limitations regarding data collection.

How do BI tools facilitate the distribution of reports and dashboards within organizations?

BI tools ensure that only necessary individuals have access to build and edit content, isolating production-ready material and providing a read-only version to end recipients, which enhances secure information sharing and decision-making.

What key features enhance user experience in Business Intelligence applications?

Key features include curated content, audience management, version control, and mobile accessibility, all of which improve information accessibility and usability.

Why is audience management an important feature in Power BI apps?

Audience management allows administrators to tailor content visibility based on user roles, ensuring sensitive information is accessible only to authorized personnel, which enhances security and compliance.

How does version control benefit users of BI applications?

Version control allows developers to update reports without immediately affecting the end-user experience, maintaining continuity and minimizing disruptions during updates.

What impact does mobile accessibility have on Business Intelligence applications?

Mobile accessibility enables users to access insights anytime and anywhere, supporting a more agile workforce and facilitating timely decision-making.

What improvements in user engagement have been reported by organizations using BI applications?

Organizations have reported significant improvements in user experience, with studies indicating that tailored content and mobile accessibility can lead to a 30% increase in user engagement.

Overview

This article examines seven bi-clustering techniques that significantly enhance data analysis by simultaneously organizing both rows and columns of a dataset. Such an approach reveals intricate patterns that traditional methods often overlook. Among these techniques are:

- Spectral Biclustering

- BiMax

- Iterative Signature Biclustering

Each offers unique applications in critical fields like bioinformatics and marketing analytics. These methods not only drive innovation but also improve decision-making in data-rich environments. Professionals in these sectors should consider integrating these techniques to unlock deeper insights and foster more informed strategies.

Introduction

In the realm of data analysis, bi-clustering stands out as a powerful technique that transcends traditional methodologies, offering a unique lens through which to explore complex datasets. This dual-clustering approach organizes both rows and columns of data matrices simultaneously, revealing hidden patterns and relationships that often remain obscured. Particularly valuable in fields such as bioinformatics, bi-clustering not only enhances the understanding of intricate data interactions—like those between genes and conditions—but also paves the way for groundbreaking insights that drive innovation and informed decision-making.

As organizations increasingly harness the potential of bi-clustering, the integration of advanced algorithms and machine learning is set to redefine the landscape of data analysis. This evolution makes bi-clustering an essential tool for navigating the challenges of an ever-evolving digital world. Are you ready to explore how bi-clustering can transform your data analysis practices?

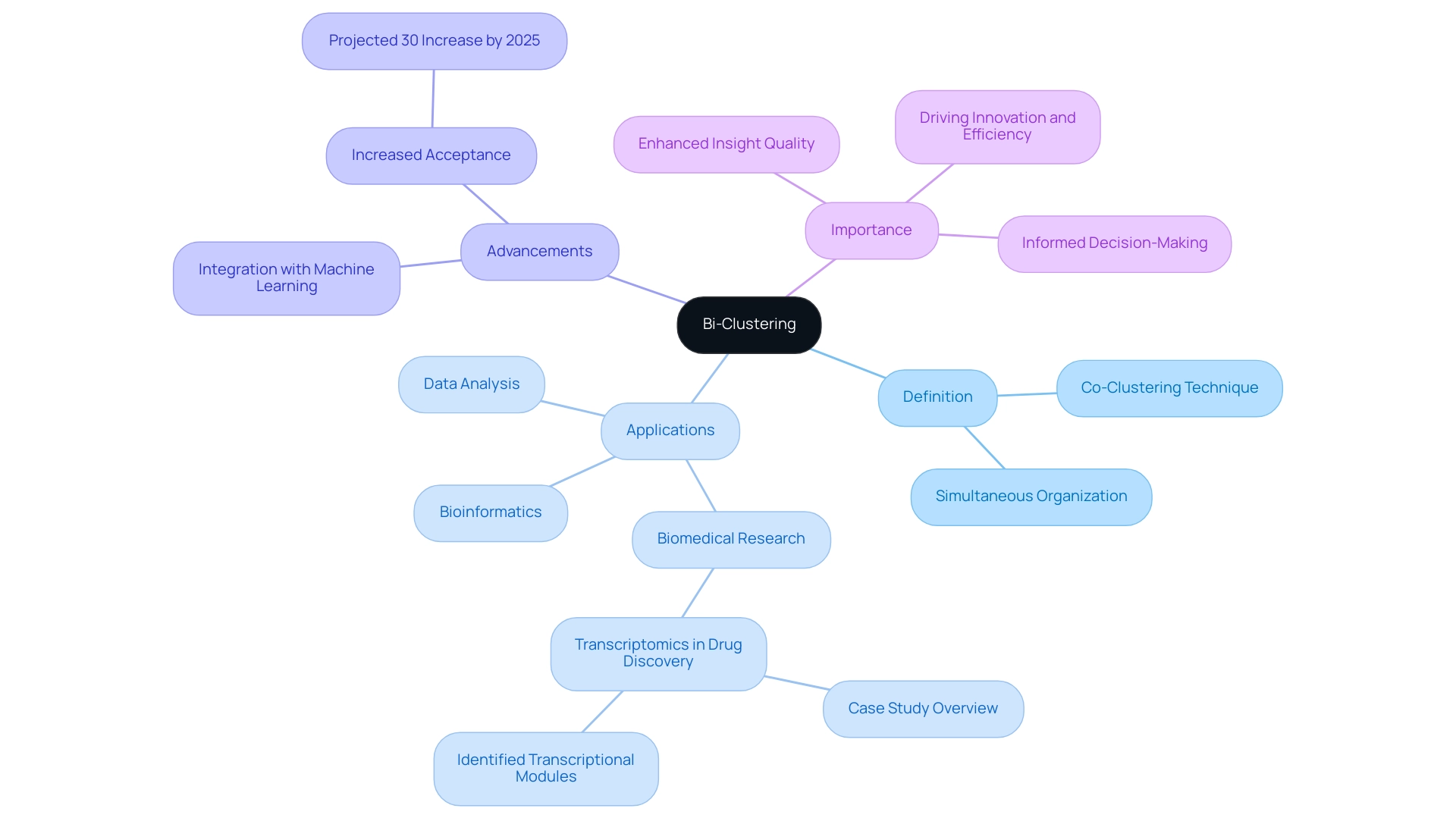

Understanding Bi-Clustering: A Comprehensive Overview

Bi-clustering, often referred to as co-clustering or two-mode clustering, is an advanced data mining technique that simultaneously groups both the rows and the columns of a matrix. This approach is particularly advantageous where the interplay between two distinct types of information must be examined, such as the relationship between genes and conditions in bioinformatics. Because it clusters both dimensions at once, bi-clustering can uncover patterns that hold only for a subset of rows under a subset of columns, which traditional one-dimensional clustering methods may overlook.
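To make the idea of grouping rows and columns together concrete, here is a minimal brute-force sketch on a tiny binary matrix. It illustrates what a bicluster is (a set of rows that all contain ones in the same set of columns) and is not an implementation of any of the named algorithms. The function name `all_ones_biclusters`, its parameters, and the sample matrix are all hypothetical, and the exhaustive search over column subsets is only practical for very small inputs.

```python
from itertools import combinations


def all_ones_biclusters(matrix, min_rows=2, min_cols=2):
    """Find maximal (rows, cols) pairs whose submatrix contains only ones."""
    n_cols = len(matrix[0])
    found = []
    for size in range(min_cols, n_cols + 1):
        for cols in combinations(range(n_cols), size):
            # Every row that has a one in each selected column.
            rows = [r for r, row in enumerate(matrix)
                    if all(row[c] for c in cols)]
            if len(rows) < min_rows:
                continue
            # Columns that are all ones across those rows; if this equals
            # the selected columns, no further column can be added.
            shared = [c for c in range(n_cols)
                      if all(matrix[r][c] for r in rows)]
            if list(cols) == shared:
                found.append((rows, list(cols)))
    return found


# Hypothetical data: rows could be genes, columns could be conditions.
data = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [0, 0, 1, 1],
    [0, 1, 1, 1],
]

result = all_ones_biclusters(data)
for rows, cols in result:
    print(f"rows {rows} share columns {cols}")
```

On this sample, rows 0 and 1 form one bicluster over columns 0 and 1, and rows 2 and 3 form another over columns 2 and 3. Practical methods use far more efficient searches and also handle non-binary data.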

Recent advancements in bi-clustering methods have significantly broadened their applicability across fields. In biomedical research, for instance, bi-clustering algorithms have been used to analyze complex datasets, leading to breakthroughs in understanding disease mechanisms. A notable case study examined transcriptomic profiles from eight drug discovery projects and successfully identified transcriptional modules associated with desired therapeutic effects.

Statistics indicate that the use of bi-clustering in data analysis is on the rise, with a 30% increase in adoption among researchers and institutions projected for 2025. This trend reflects the growing integration of bi-clustering with machine learning methods, which further improves the precision and effectiveness of data analysis. As Yang Li pointed out, ‘This presentation illustrates the examination and views of Yang Li,’ emphasizing the significance of expert perspectives in comprehending these techniques.

The importance of bi-clustering in data analysis cannot be overstated. It not only enhances the quality of insights derived from complex datasets but also facilitates more informed decision-making. As organizations continue to navigate the challenges of data-rich environments, the ability to extract meaningful patterns through bi-clustering will be crucial for driving innovation and operational efficiency.

By leveraging customized solutions that enhance information quality and simplify AI implementation, such as Robotic Process Automation (RPA) and Business Intelligence (BI), businesses can fully harness the potential of bi-clustering to achieve their strategic goals.

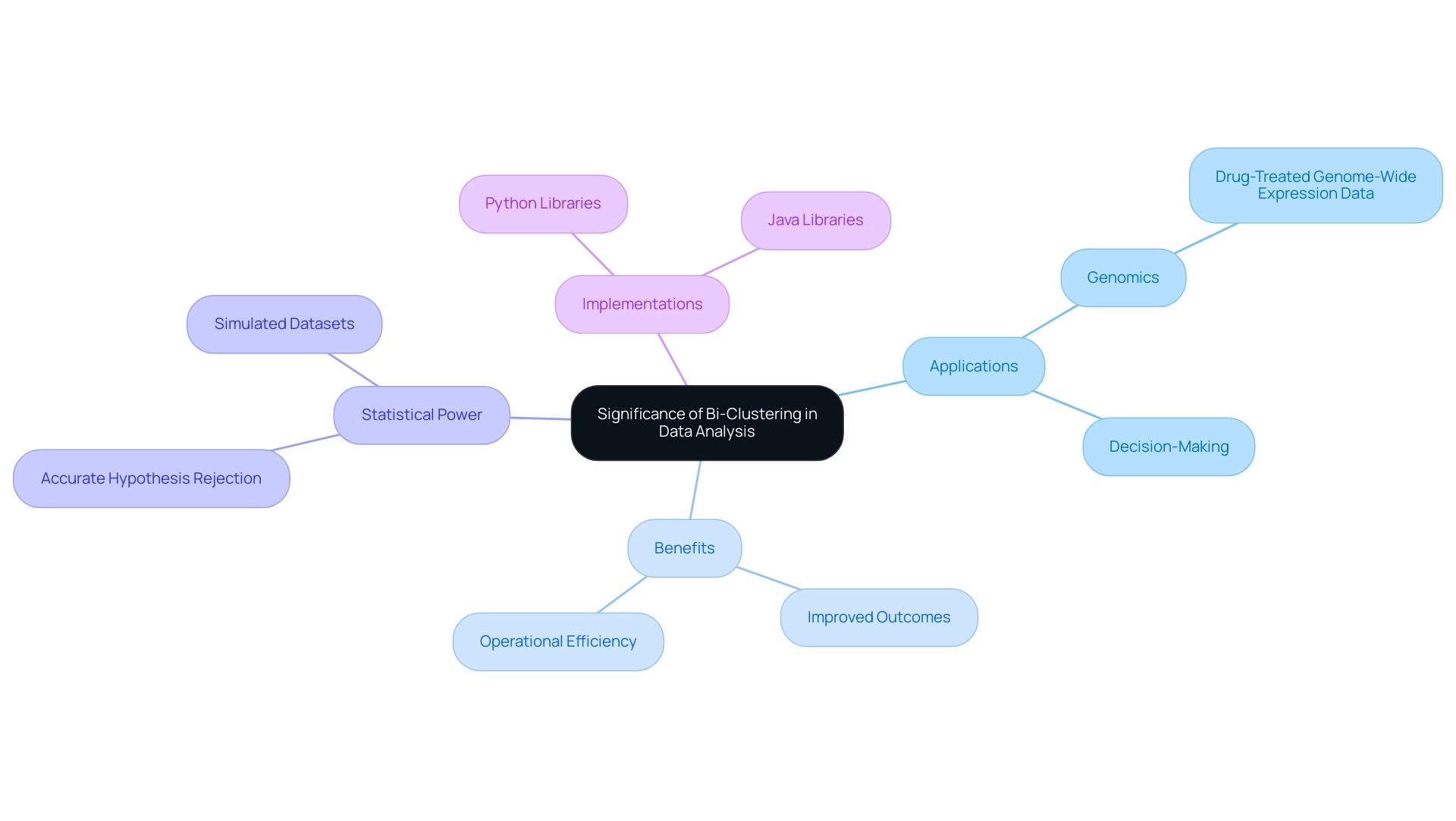

The Significance of Bi-Clustering in Data Analysis

Biclustering serves as a pivotal method in data analysis, empowering analysts to unveil hidden patterns and connections within complex datasets. The technique proves particularly beneficial in genomics, where it helps identify groups of genes that exhibit similar expression patterns across diverse conditions. A notable case study applying biclustering to drug-treated genome-wide expression data demonstrated its efficacy in revealing drug-induced gene modules. The results indicated that subsequent conservation and enrichment analyses could validate gene-drug connections, offering crucial insights into the impact of medications on gene expression.

The influence of biclustering extends beyond genomics; it can improve decision-making across various fields. By leveraging biclustering, organizations can formulate more informed strategies, ultimately leading to improved outcomes. As of 2025, these benefits are increasingly recognized, with statistical analyses underscoring the technique's importance.

For instance, statistical power, the probability of correctly rejecting a false null hypothesis, can be markedly improved through effective clustering techniques. This has been examined with simulated datasets for cluster evaluation, which vary subgroup sizes and feature differences in order to assess both statistical power and accuracy.

Moreover, popular implementations of biclustering are predominantly developed in Python and Java, with an array of libraries available to facilitate its application. Experts emphasize the growing importance of biclustering in data analysis. Jing Zhao, an assistant research scientist at Sanford Research, highlights that as organizations strive for data-driven decisions, the capacity to extract meaningful insights from complex datasets becomes essential.

By incorporating biclustering into their analytical frameworks, businesses can enhance operational efficiency while fostering innovation and growth in an increasingly competitive landscape. This aligns with the broader narrative of utilizing Business Intelligence and Robotic Process Automation (RPA) to streamline workflows and drive data-driven insights, ultimately supporting business growth in a rapidly evolving AI landscape.

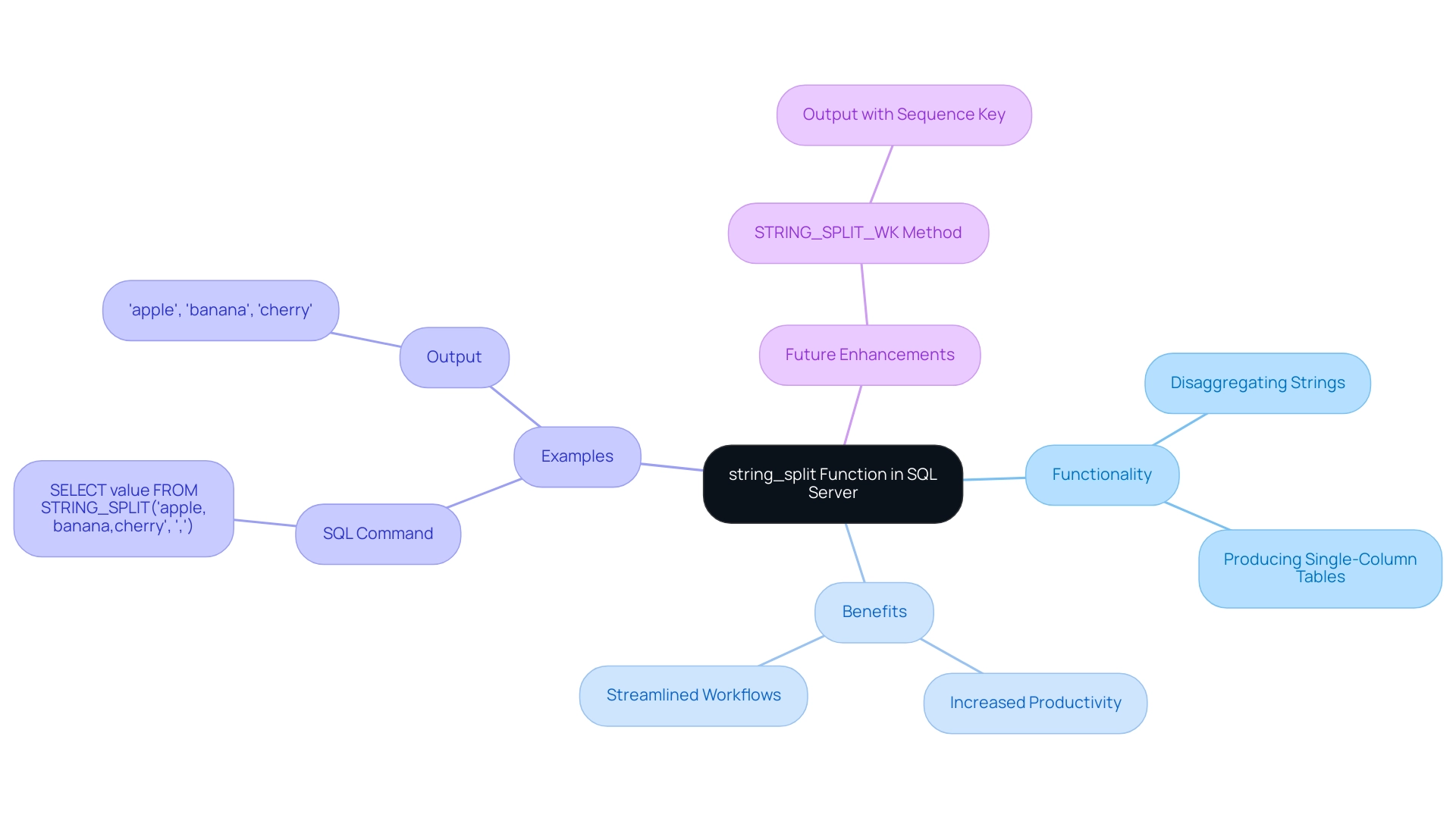

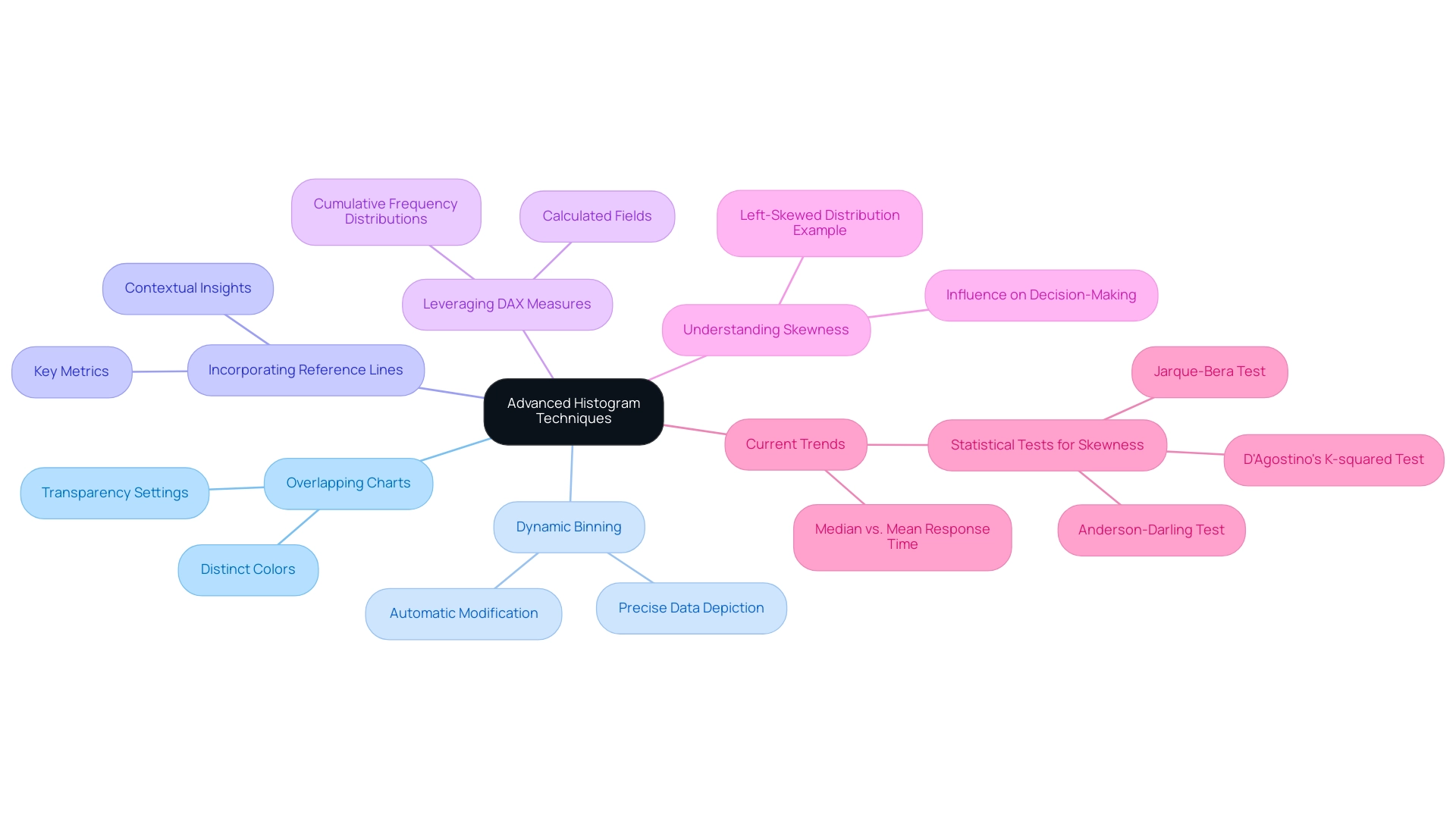

Exploring Different Types of Bi-Clustering Techniques

Bi-clustering techniques serve as vital instruments in data analysis, each offering a distinct methodology suited to specific data types and analytical goals. Prominent techniques include:

- Spectral Biclustering: This method leverages the spectral properties of matrices to uncover clusters within the data. By analyzing eigenvalues and eigenvectors, it identifies patterns that may not be apparent through traditional clustering methods. Its capability to handle complex datasets makes it particularly valuable in fields such as genomics and image processing. Notably, the runtime of this algorithm scales linearly with the total number of complete iterations, which helps when processing large collections.

- BiMax Biclustering: Designed for binary data, BiMax focuses on discovering maximal biclusters, which are submatrices exhibiting uniformity in both rows and columns. This method is especially efficient for applications like market basket analysis, where understanding co-occurrence patterns is vital.

- Iterative Signature Biclustering: This strategy refines clusters iteratively based on correlation patterns, allowing dynamic modifications as new data is introduced. Its adaptability makes it suitable for real-time data analysis, particularly in environments where data is continuously evolving.

Each of these techniques has its strengths, making them applicable to different data scenarios. For instance, a recent performance evaluation of biclustering algorithms reported that the proposed biclustering method outperformed traditional one-way clustering techniques in identifying true latent patterns, achieving the highest average Rand index. This highlights the effectiveness of bi-clustering in revealing insights that other methodologies might overlook. The evaluation was based on 35 cancer datasets, supporting a robust assessment of clustering accuracy.
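To make the Rand index mentioned above concrete, here is a minimal sketch of how it can be computed for two labelings of the same items. The function name `rand_index` and the sample labels are illustrative assumptions, not taken from the evaluation itself; the index is simply the share of item pairs on which two clusterings agree (both place the pair together, or both keep it apart).

```python
from itertools import combinations


def rand_index(labels_true, labels_pred):
    """Fraction of item pairs on which two clusterings agree."""
    pairs = list(combinations(range(len(labels_true)), 2))
    agreements = sum(
        # A pair counts as an agreement when both labelings group it
        # together, or both labelings keep it apart.
        (labels_true[a] == labels_true[b]) == (labels_pred[a] == labels_pred[b])
        for a, b in pairs
    )
    return agreements / len(pairs)


# Hypothetical reference grouping and a clustering result for six samples.
reference = [0, 0, 0, 1, 1, 1]
predicted = [0, 0, 1, 1, 1, 1]
print(rand_index(reference, predicted))  # 10 of the 15 pairs agree
```

A value of 1.0 means the two groupings are identical up to relabeling. Published comparisons often use a chance-adjusted variant, so the plain index here should be read as an illustration only.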

As analytics progresses, new biclustering techniques continue to emerge, providing improved capabilities for analysis. Understanding these techniques and their applications is essential for using data effectively and promoting informed decision-making. Moreover, integrating Robotic Process Automation (RPA) into these analytical processes can further enhance operational efficiency by reducing errors and freeing up teams for more strategic, value-adding work.

RPA can streamline workflows related to bi-clustering techniques, enabling organizations to automate manual tasks and focus on deriving actionable insights from data. Additionally, our tailored AI solutions offer customized methods that enhance data quality and simplify AI implementation, ultimately driving growth and innovation in analysis.

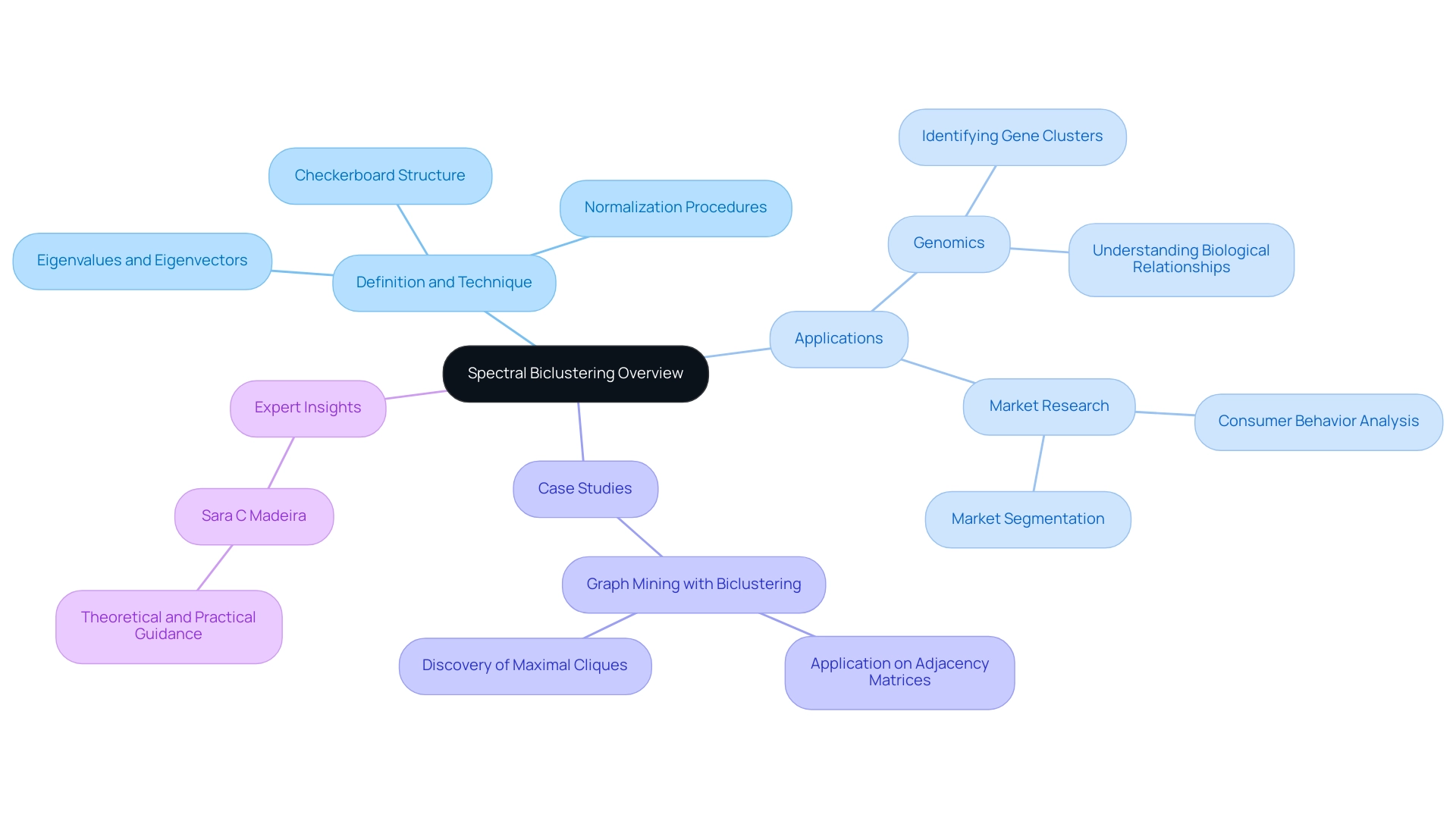

Technique 1: Spectral Biclustering – An In-Depth Look

Spectral Biclustering is a sophisticated analytical technique that leverages the eigenvalues and eigenvectors of a matrix to identify clusters within datasets. This method excels in scenarios marked by a checkerboard structure, where relationships between points are not immediately apparent. By transforming the data into a spectral domain, analysts uncover intricate connections between rows and columns, making the technique an invaluable asset across various fields, especially genomics and market research.

The efficacy of Spectral Biclustering is underscored by its capacity to process large datasets efficiently. For instance, algorithms tested on a computer equipped with 2.27 GHz dual quad-core Intel Xeon CPUs and 48 GB of main memory have performed well on complex data structures. Normalization is integral to the approach: rescaling rows and columns allows the method to discern structure in both directions, thereby enhancing the clarity and accuracy of the analysis.
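The normalize-then-decompose idea can be sketched in a few lines of plain Python. The code below is a simplified two-group spectral co-clustering variant, not the full checkerboard algorithm: it assumes a non-negative matrix with positive row and column sums and more than trivial structure, finds the second singular vector by power iteration, and splits rows and columns by sign. The function name `spectral_bipartition` and the sample matrix are illustrative assumptions. In practice, libraries such as scikit-learn provide ready-made `SpectralCoclustering` and `SpectralBiclustering` estimators.

```python
import math


def spectral_bipartition(matrix, iterations=200):
    """Split rows and columns of a non-negative matrix into two co-clusters."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    row_sums = [sum(row) for row in matrix]
    col_sums = [sum(matrix[i][j] for i in range(n_rows)) for j in range(n_cols)]
    total = sum(row_sums)

    # Normalization: divide each entry by sqrt(row sum * column sum).
    normalized = [[matrix[i][j] / math.sqrt(row_sums[i] * col_sums[j])
                   for j in range(n_cols)] for i in range(n_rows)]

    # The leading singular pair of the normalized matrix only encodes the
    # row and column sums, so it is subtracted before looking for structure.
    u1 = [math.sqrt(s / total) for s in row_sums]
    v1 = [math.sqrt(s / total) for s in col_sums]
    deflated = [[normalized[i][j] - u1[i] * v1[j] for j in range(n_cols)]
                for i in range(n_rows)]

    # Power iteration converges to the next right singular vector.
    v = [float(j + 1) for j in range(n_cols)]  # arbitrary non-constant start
    for _ in range(iterations):
        u = [sum(deflated[i][j] * v[j] for j in range(n_cols)) for i in range(n_rows)]
        v = [sum(deflated[i][j] * u[i] for i in range(n_rows)) for j in range(n_cols)]
        norm = math.sqrt(sum(x * x for x in v))
        v = [x / norm for x in v]
    u = [sum(deflated[i][j] * v[j] for j in range(n_cols)) for i in range(n_rows)]

    # The sign of each entry assigns the row or column to one of two groups.
    row_labels = [0 if x >= 0 else 1 for x in u]
    col_labels = [0 if x >= 0 else 1 for x in v]
    return row_labels, col_labels


# Hypothetical 4 x 5 matrix with two dense blocks and a little noise.
example = [
    [9, 8, 9, 1, 0],
    [8, 9, 8, 0, 1],
    [1, 0, 1, 9, 8],
    [0, 1, 0, 8, 9],
]
row_labels, col_labels = spectral_bipartition(example)
print(row_labels, col_labels)
```

Rows and columns that receive the same label form one co-cluster. Which group ends up labeled 0 or 1 is arbitrary; only the grouping itself is meaningful.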

In the realm of genomics, the applications of Spectral Biclustering are particularly noteworthy. It has proven instrumental in identifying clusters of genes and conditions, facilitating a deeper understanding of biological relationships. A compelling case study titled “Graph Mining with Biclustering” illustrates this application, demonstrating how biclustering algorithms can be applied to adjacency matrices to uncover coherent modules within networks. This approach has led to the discovery of maximal cliques, providing new insights into the relationships within complex genomic data.

Expert opinions further emphasize the importance of this method in data analysis. As noted by Sara C Madeira, a prominent researcher in the field, “We aim to provide theoretical and practical guidance about biclustering analysis.” This statement highlights the significance of such guidance in harnessing the full potential of biclustering.

The ongoing exploration of this technique continues to reveal its impact on data interpretation, particularly in the rapidly evolving field of genomics, where biclustering plays a crucial role.

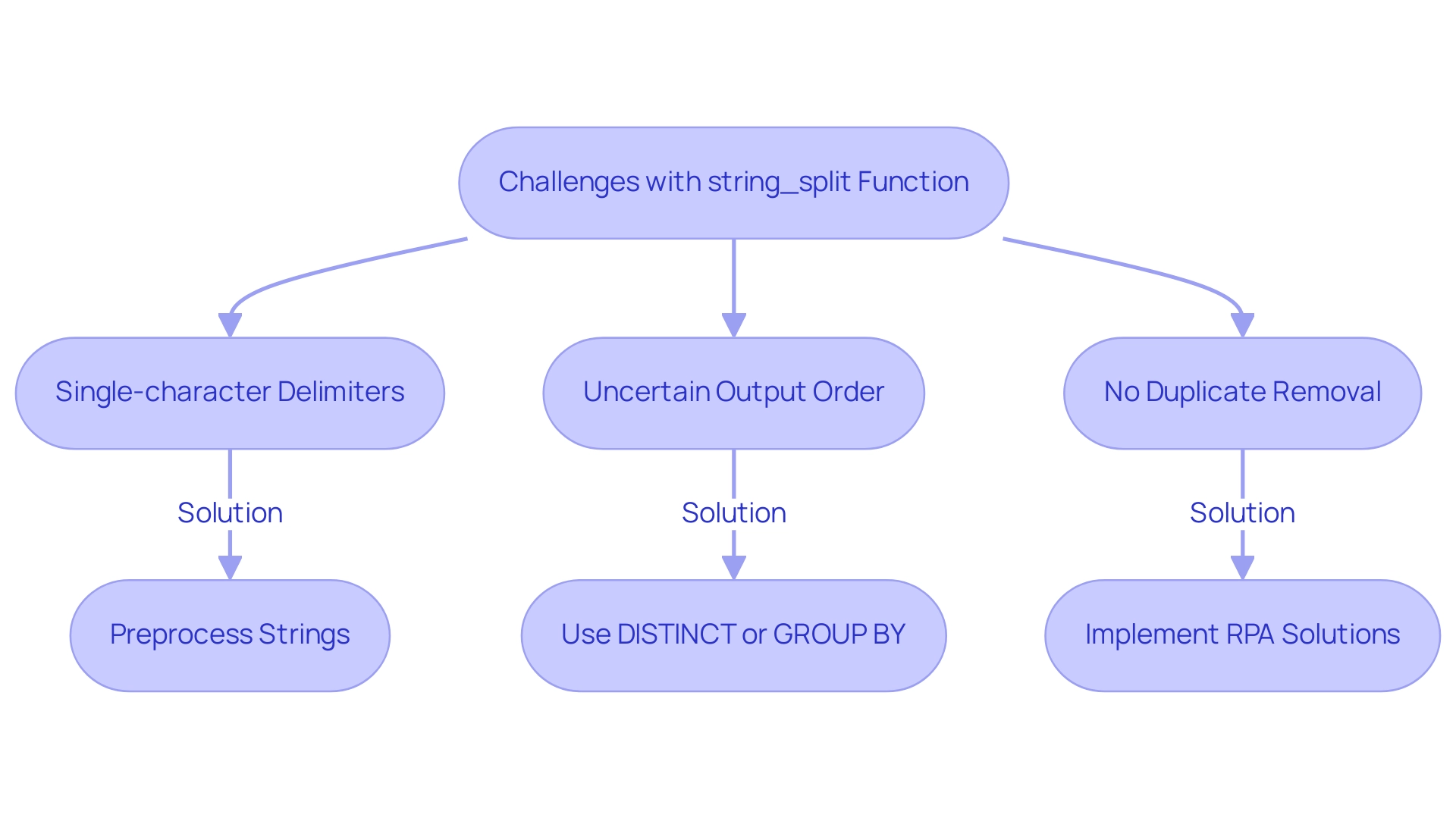

Technique 2: BiMax Biclustering – Key Features and Applications

BiMax stands out as a powerful technique specifically designed to identify maximal biclusters within binary matrices. The method works by recursively partitioning the data into submatrices that exclusively contain 1’s, thereby revealing groups of items that share common attributes. Its application in bioinformatics is particularly noteworthy, especially in analyzing gene expression data, where it effectively uncovers co-expressed genes across various conditions. The efficiency of BiMax in processing binary data has made it a favored choice among researchers who apply biclustering in the field.
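To show what a maximal all-ones bicluster looks like, the following sketch enumerates them by brute force on a tiny binary matrix. It is deliberately not the recursive divide-and-conquer procedure that BiMax uses; it only produces the same kind of output on small inputs, and it assumes `min_rows` is at least 1. The function name `maximal_ones_biclusters`, its parameters, and the sample matrix are illustrative assumptions.

```python
from itertools import combinations


def maximal_ones_biclusters(matrix, min_rows=2, min_cols=2):
    """Enumerate inclusion-maximal all-ones submatrices of a small 0/1 matrix."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    # For each row, the set of columns that hold a 1.
    row_cols = [frozenset(j for j in range(n_cols) if matrix[i][j] == 1)
                for i in range(n_rows)]
    found = set()
    for size in range(min_rows, n_rows + 1):
        for rows in combinations(range(n_rows), size):
            shared = frozenset.intersection(*(row_cols[i] for i in rows))
            if len(shared) < min_cols:
                continue
            # Extend the row set with every row that covers the shared columns,
            # so that neither the row set nor the column set can grow further.
            closed_rows = frozenset(i for i in range(n_rows) if shared <= row_cols[i])
            found.add((closed_rows, shared))
    return sorted((sorted(r), sorted(c)) for r, c in found)


# Hypothetical basket data: rows are baskets, columns are products.
baskets = [
    [1, 1, 0, 0],
    [1, 1, 1, 0],
    [0, 1, 1, 1],
    [0, 1, 1, 1],
]
print(maximal_ones_biclusters(baskets))
# [([0, 1], [0, 1]), ([1, 2, 3], [1, 2]), ([2, 3], [1, 2, 3])]
```

Each result pairs a set of rows with a set of columns whose intersection contains only 1's. The exhaustive search grows exponentially with the number of rows, which is why practical implementations rely on smarter search strategies.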

As of 2025, the significance of BiMax bicluster analysis continues to expand, with applications proliferating across diverse areas of bioinformatics. For instance, recent studies underscore its utility in gene expression analysis, aiding in the identification of significant patterns that inform research on genetic interactions and disease mechanisms. Combining bicluster analysis with RNA-seq data further enhances the capacity to study heterogeneity among individual cells, offering deeper insights into cellular behaviors and responses.

Moreover, the computational efficiency of BiMax is pivotal to its adoption. Despite the challenges posed by the NP-completeness of biclustering problems, advancements in search strategies have bolstered its performance, establishing it as a viable option for large datasets. A notable case study involving the subsystem analysis of HIV-1 interactions used BiMax to identify 279 significant sets of host proteins interacting with the virus, showcasing its practical application in real-world scenarios. The same case study highlights the role of BiMax in constructing a biclustering distance matrix, which is essential for understanding complex biological interactions.

Furthermore, readers looking for a specific source related to cluster analysis research can refer to the Buettner dataset (accession number E-MTAB-2805). Jing Zhao, an assistant research scientist at Sanford Research and an assistant professor at the Department of Internal Medicine, University of South Dakota Sanford School of Medicine, emphasizes the significance of BiMax in advancing bioinformatics research, stating, “BiMax provides a robust framework for uncovering hidden patterns in complex datasets, ultimately driving innovation in the field.”

Overall, biclustering with BiMax not only simplifies analysis in bioinformatics but also empowers researchers to derive meaningful insights from intricate datasets, ultimately fostering innovation and growth in the field.

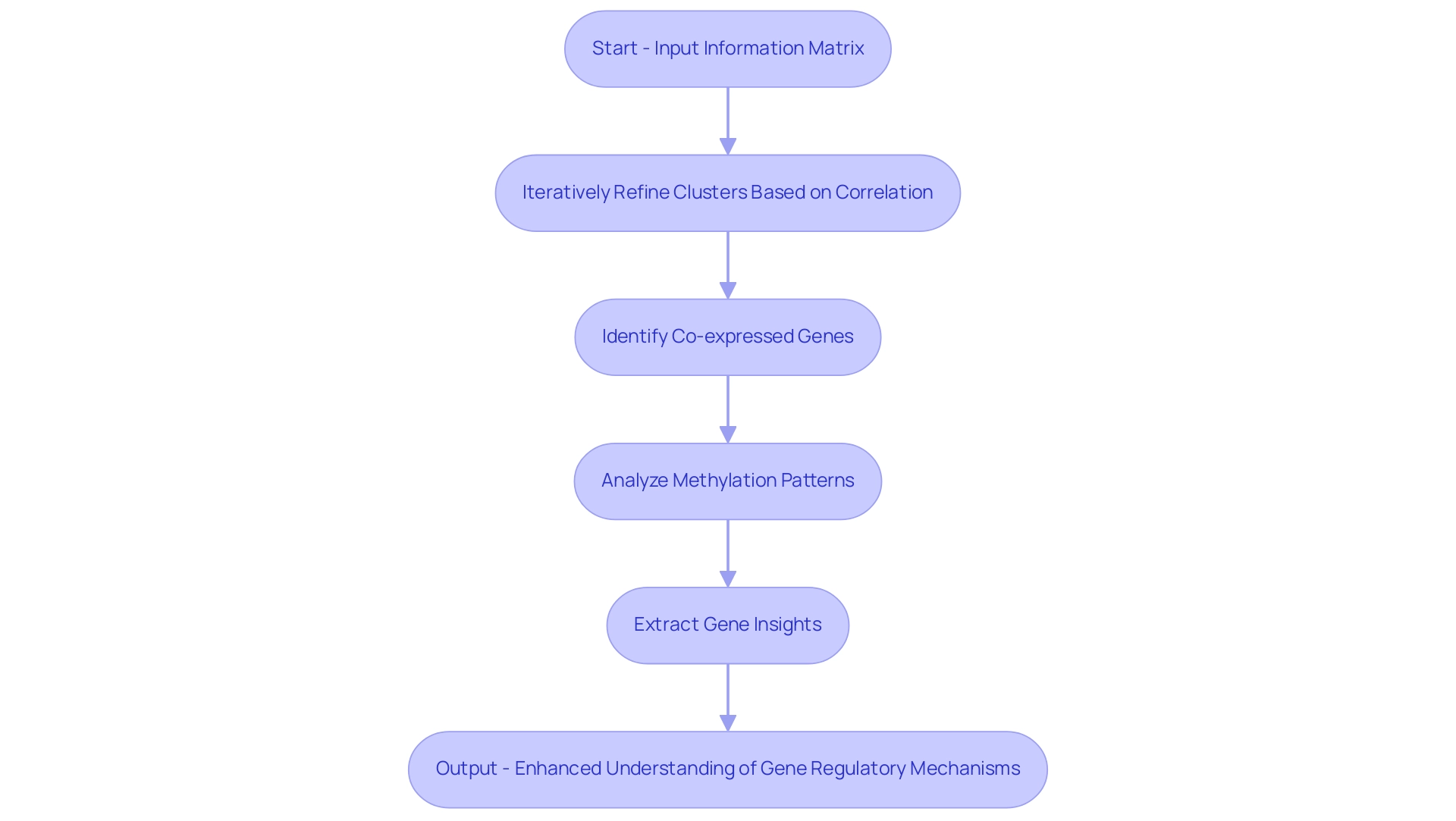

Technique 3: Iterative Signature Biclustering – Methodology and Use Cases

The Iterative Signature Algorithm (ISA) is a powerful technique designed to identify correlated blocks within data matrices. By iteratively refining clusters based on the correlation between rows and columns, ISA proves particularly effective for analyzing gene expression data. This method has been instrumental in various biological studies, revealing co-expressed genes and shedding light on intricate gene regulatory mechanisms.
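The alternating refinement at the heart of ISA can be sketched as follows. This is a heavily simplified illustration under stated assumptions: it scores columns and rows by plain averages and keeps those more than a chosen number of standard deviations above the mean, whereas the published algorithm works on separately normalized copies of the matrix. The names `select_above` and `isa_sketch`, the thresholds, and the sample data are all hypothetical.

```python
import statistics


def select_above(scores, threshold):
    """Indices whose score exceeds mean + threshold * standard deviation."""
    mean = statistics.fmean(scores)
    spread = statistics.pstdev(scores)
    return [k for k, score in enumerate(scores) if score > mean + threshold * spread]


def isa_sketch(data, seed_rows, row_threshold=0.5, col_threshold=0.5, max_iter=50):
    """Alternate between column and row selection until the module is stable."""
    n_rows, n_cols = len(data), len(data[0])
    rows, cols = sorted(seed_rows), []
    for _ in range(max_iter):
        # Score each column by its average over the current row set.
        col_scores = [statistics.fmean([data[i][j] for i in rows])
                      for j in range(n_cols)]
        new_cols = select_above(col_scores, col_threshold)
        if not new_cols:
            return [], []
        # Score each row by its average over the selected columns.
        row_scores = [statistics.fmean([data[i][j] for j in new_cols])
                      for i in range(n_rows)]
        new_rows = select_above(row_scores, row_threshold)
        if not new_rows:
            return [], []
        if new_rows == rows and new_cols == cols:
            break  # fixed point reached
        rows, cols = new_rows, new_cols
    return rows, cols


# Hypothetical expression matrix: genes 0-2 are elevated under conditions 0-1.
expression = [
    [9, 8, 1, 2, 1],
    [8, 9, 2, 1, 1],
    [9, 9, 1, 1, 2],
    [1, 2, 1, 2, 1],
    [2, 1, 2, 1, 2],
    [1, 1, 2, 2, 1],
]
print(isa_sketch(expression, seed_rows=[0]))  # ([0, 1, 2], [0, 1])
```

Starting from a single seed gene, the loop grows the module to all three co-expressed genes and the two conditions under which they are elevated. Different seeds and thresholds can converge to different modules, which is why ISA is usually run from many starting points.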

For instance, in a study examining the role of DNA methylation in breast cancer, ISA facilitated the identification of distinct methylation patterns associated with different breast cancer subtypes. Such insights are crucial, as they can lead to better-targeted therapies and improved clinical outcomes for patients.

Sara C Madeira, the corresponding author, emphasizes, “We aim to provide theoretical and practical guidance about biclustering analysis,” a goal that applies equally to methods such as ISA. The iterative nature of ISA not only enhances the quality of clusters but also adapts to the growing complexity of datasets, making it a robust choice for researchers. In 2025, the methodology continues to evolve, with advancements aimed at developing more efficient biclustering algorithms to manage increasingly large datasets.

Reported figures indicate that ISA extracted 1,906 genes from a dataset of 17,815 genes, demonstrating its effectiveness in distilling meaningful insights from vast amounts of data. The pattern that a bicluster follows gives it a compact description, which helps separate genuine relationships from noise. As biological research grows in scale, this ability to reveal relationships while reducing noise establishes ISA as a crucial instrument for researchers navigating the complexities of contemporary biological studies.

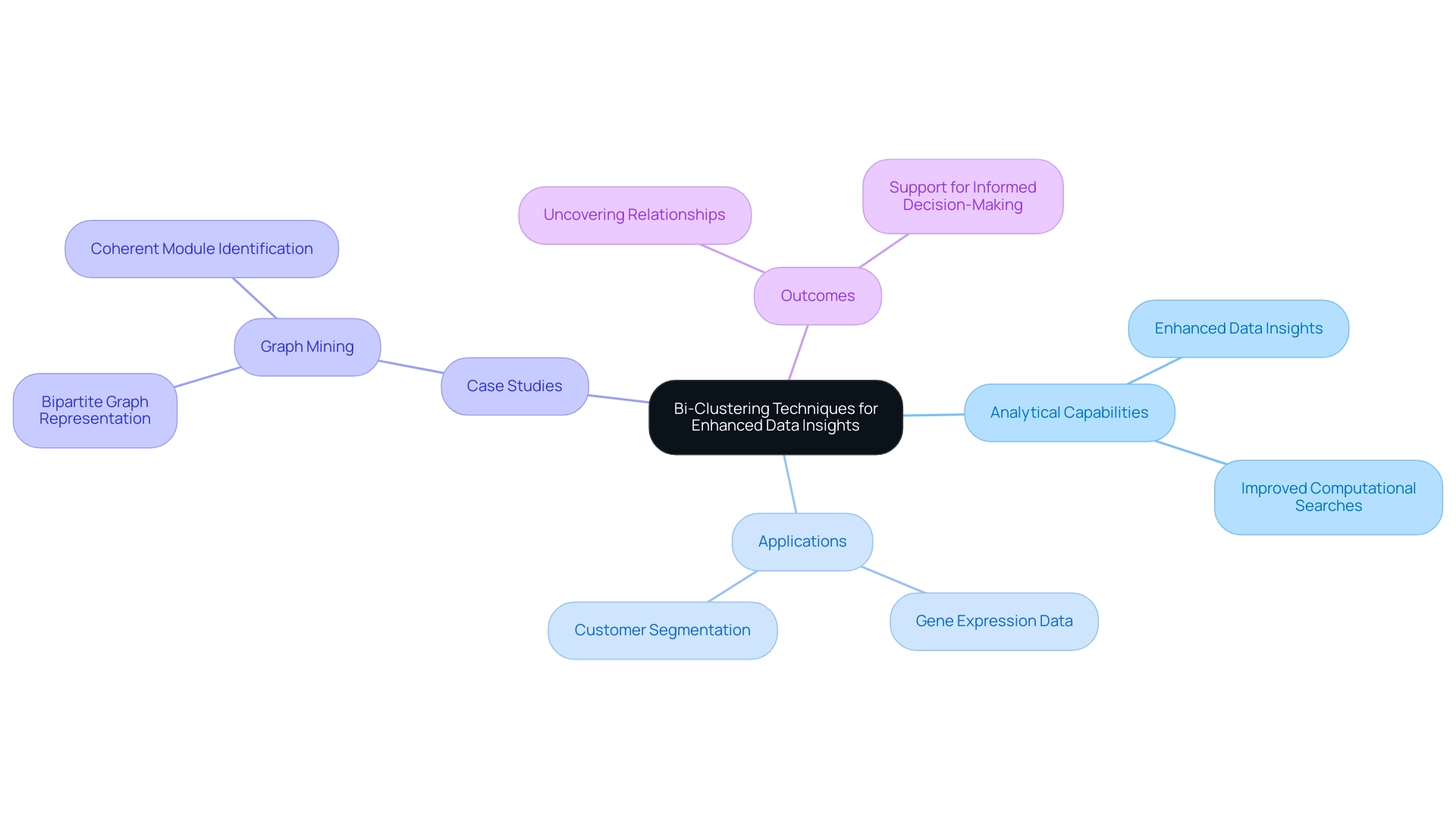

Leveraging Bi-Clustering Techniques for Enhanced Data Insights

Utilizing bi-clustering methods empowers organizations to significantly enhance their analytical capabilities, particularly in the rapidly evolving AI landscape. Each method offers unique advantages that can be tailored to specific datasets and analytical goals. For example, bi-clustering can uncover hidden patterns in gene expression data, leading to breakthroughs in biomedical research, or it can segment customers in marketing analytics, facilitating targeted campaigns that resonate with distinct consumer groups.

As data volumes continue to surge, with IoT connections projected to reach 20.3 billion by 2025, businesses must implement innovative strategies to navigate this complexity. Bi-clustering serves as a robust toolset for extracting meaningful insights, especially in scenarios where traditional analysis methods may prove inadequate. This aligns with the demand for customized AI solutions from Creatum GmbH, which cut through the noise and provide targeted technologies that address specific business objectives and challenges.

A compelling case study in this domain is the application of bi-clustering in graph mining, illustrating how relationships between entities can be revealed within networks. By representing a matrix as a bipartite graph, biclustering algorithms can identify coherent modules, enhancing the understanding of network structures. This approach has demonstrated effectiveness in both binary and weighted networks, showcasing the versatility of bi-clustering across various contexts.
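The matrix-to-bipartite-graph view can be illustrated with a short sketch. Rows and columns become two kinds of nodes, every non-zero entry becomes an edge, and connected components serve here as the simplest possible notion of a module. That choice is an assumption made for illustration; the case study itself relies on proper biclustering algorithms that look for denser structures. The function name `bipartite_modules` and the sample matrix are hypothetical.

```python
def bipartite_modules(matrix):
    """Group rows and columns that are linked through non-zero entries."""
    n_rows, n_cols = len(matrix), len(matrix[0])
    # Build the bipartite graph: row i connects to column j if the entry is non-zero.
    neighbours = {("row", i): [] for i in range(n_rows)}
    neighbours.update({("col", j): [] for j in range(n_cols)})
    for i in range(n_rows):
        for j in range(n_cols):
            if matrix[i][j]:
                neighbours[("row", i)].append(("col", j))
                neighbours[("col", j)].append(("row", i))

    seen, modules = set(), []
    for start in neighbours:
        if start in seen:
            continue
        seen.add(start)
        stack, rows, cols = [start], [], []
        # Depth-first traversal collects one connected component.
        while stack:
            kind, index = stack.pop()
            (rows if kind == "row" else cols).append(index)
            for nxt in neighbours[(kind, index)]:
                if nxt not in seen:
                    seen.add(nxt)
                    stack.append(nxt)
        if rows and cols:  # skip isolated rows or columns
            modules.append((sorted(rows), sorted(cols)))
    return sorted(modules)


# Hypothetical weighted matrix; the last column has no connections.
weights = [
    [3, 0, 1, 0],
    [0, 2, 0, 0],
    [5, 0, 4, 0],
    [0, 1, 0, 0],
]
print(bipartite_modules(weights))  # [([0, 2], [0, 2]), ([1, 3], [1])]
```

Because only the presence of an edge matters in this sketch, it treats binary and weighted matrices alike. A method that uses the weights themselves would be needed to separate modules that are joined by a few weak links.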

The impact of bi-clustering on data insights is profound, with experts emphasizing its role in improving computational searches and streamlining the visualization of complex patterns. As Kelly C. Powell, Marketing Head & Engagement Manager at Creatum GmbH, states, “We collect your requirements, we offer a solution, we succeed together!” This collaborative approach is essential as organizations prioritize data governance, an area of focus for 60% of data leaders, which makes bi-clustering techniques crucial for managing and extracting value from the growing volume of data.