Overview

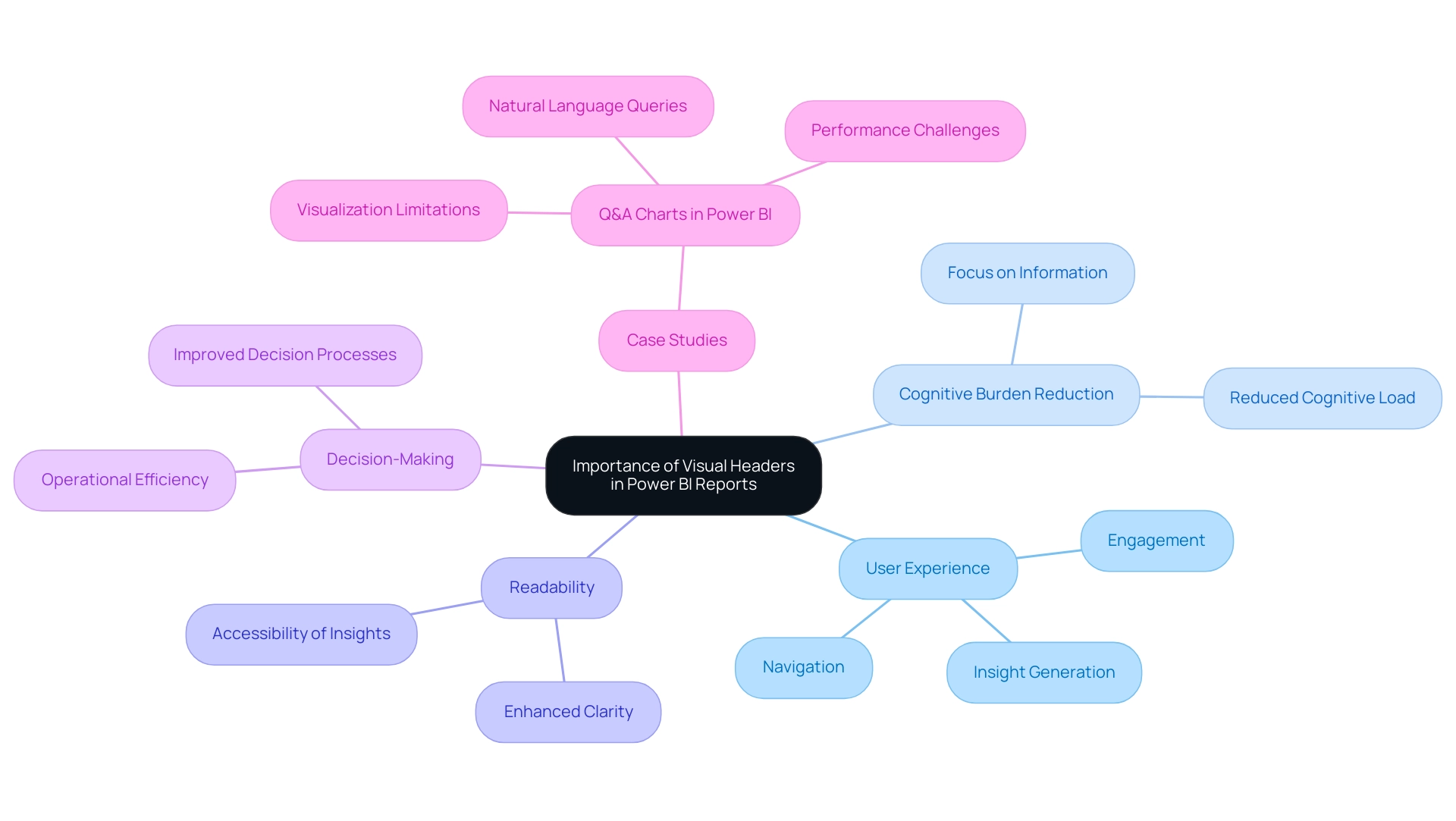

This article explores best practices for using BI images in Power BI to raise reporting standards. High-quality, relevant visuals are not mere decoration; they are tools that measurably improve clarity and engagement. The statistics cited throughout show marked gains in user performance and stakeholder interaction when visuals are integrated strategically into BI reports, which makes deliberate use of visuals a necessity, not just a recommendation, for effective communication in a data-driven environment.

Introduction

In the realm of data visualization, the integration of Business Intelligence (BI) images in Power BI is revolutionizing how organizations convey insights. From logos to infographics, these visuals not only elevate the aesthetic appeal of reports but also significantly enhance comprehension. Studies indicate that understanding can increase by up to 40% with the strategic use of visuals. As stakeholders increasingly demand clearer and more engaging presentations of complex data, the role of images becomes crucial.

This article explores the importance of BI images, outlines best practices for their integration, and details the technical aspects of adding and formatting these visuals in Power BI. Ultimately, it aims to guide organizations toward more effective data storytelling and informed decision-making.

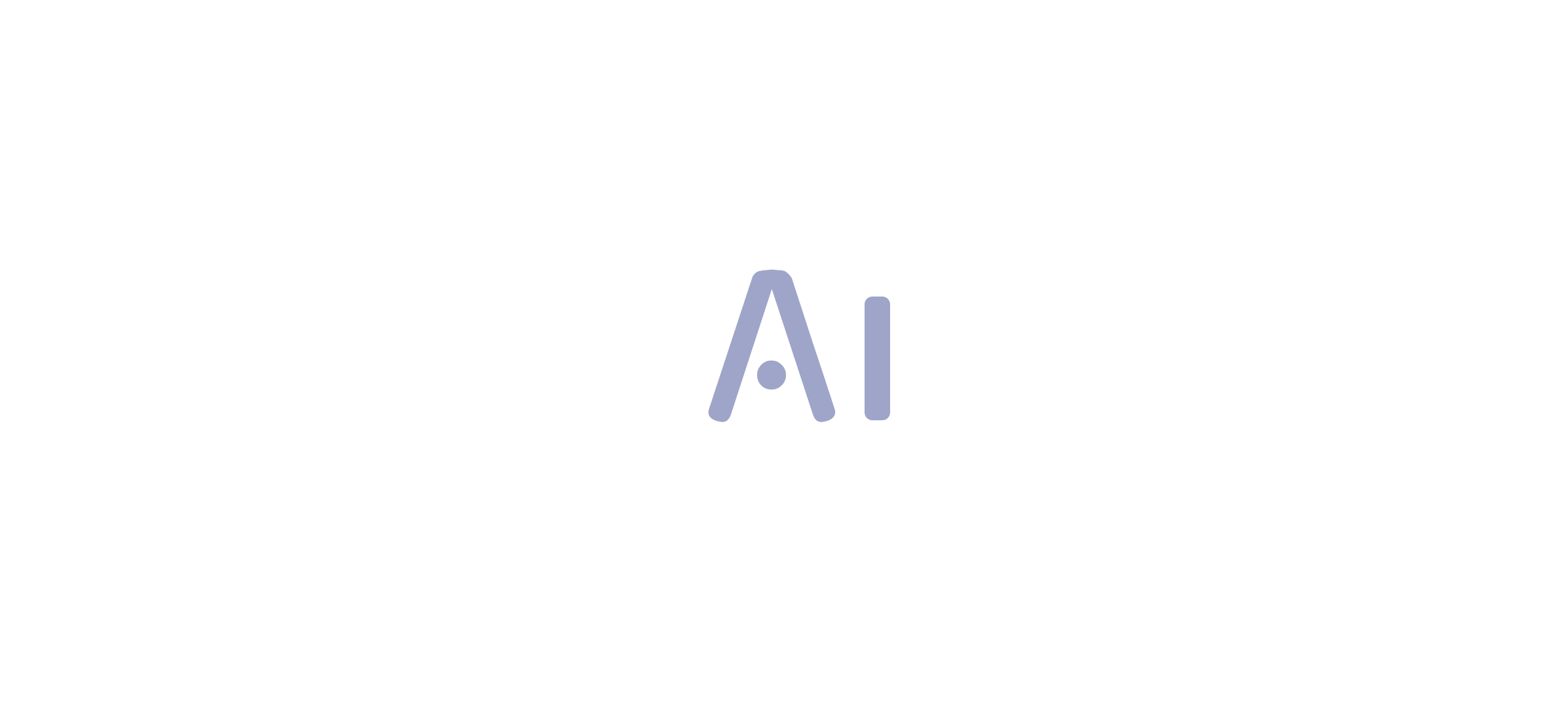

Understanding BI Images in Power BI: An Overview

BI images in Power BI are crucial for enhancing data visualization and storytelling. These visuals, ranging from logos and icons to infographics, significantly improve the clarity and impact of documents. Recent statistics indicate that documents featuring visuals can enhance understanding by as much as 40%, demonstrating their effectiveness in conveying complex information intuitively.

Among 2,751 reviews of business intelligence tools on TrustRadius, the average review is 367 words long, which suggests how much depth of information users expect from BI tools and their outputs.

The integration of BI visuals not only elevates the aesthetic quality of documents but also fosters a more engaging experience for stakeholders. For instance, organizations that utilize visuals in their BI dashboards report a 30% increase in stakeholder engagement, facilitating the extraction of actionable insights from data. This is particularly relevant when considering the challenges of time-consuming documentation processes and information inconsistencies that many face when leveraging insights from Power BI dashboards.

Expert insights underscore the importance of BI visuals in enhancing information representation. In 2025, data visualization specialists emphasize that strategically placed visuals can significantly improve document clarity, allowing users to grasp key insights swiftly. Menahil Shahzad from Analytico states, “At Analytico, we turn complexity into clarity, assisting businesses like yours attain measurable growth,” reinforcing the value of BI visuals in achieving clarity and driving growth.

Furthermore, BI services such as the 3-Day Power BI Sprint for rapid report creation and the General Management App for comprehensive oversight directly address the demand for efficient reporting and actionable guidance. Case studies show companies transforming their reporting processes with BI images, leading to more informed decision-making. User satisfaction also varies significantly among BI tools: Tableau Desktop and Looker users report higher satisfaction than Microsoft Power BI users.

This comparison places Power BI's effectiveness in the context of the wider BI tool market.

As trends evolve, the role of BI visuals in Business Intelligence continues to expand, with innovative strategies emerging for effective visual utilization. By understanding and implementing best practices for BI visuals, organizations can enhance their reporting capabilities and derive greater value from their data. Creatum GmbH is well-positioned to assist businesses in navigating these advancements, ensuring they can overcome outdated systems through intelligent automation and tailored AI solutions.

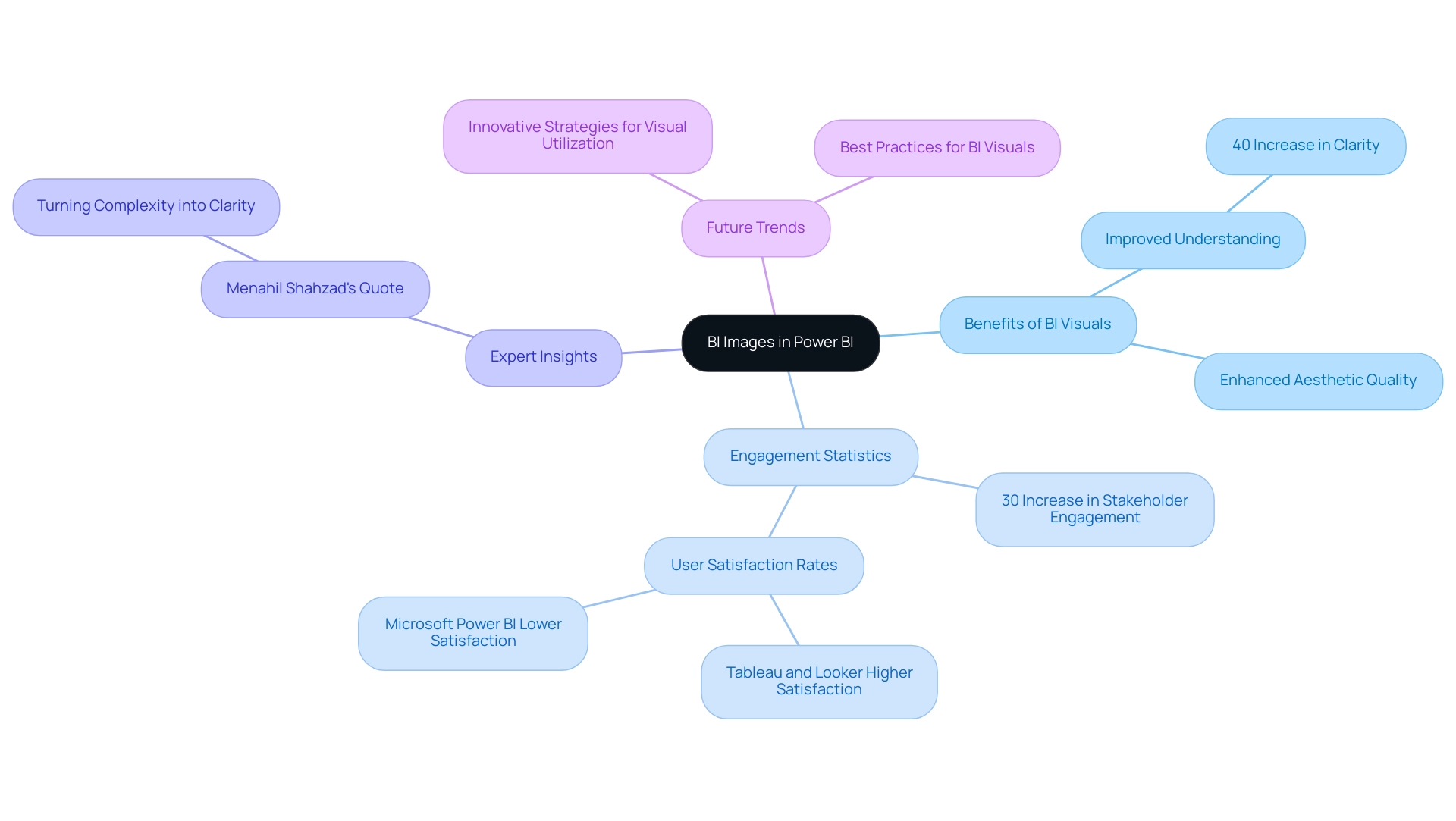

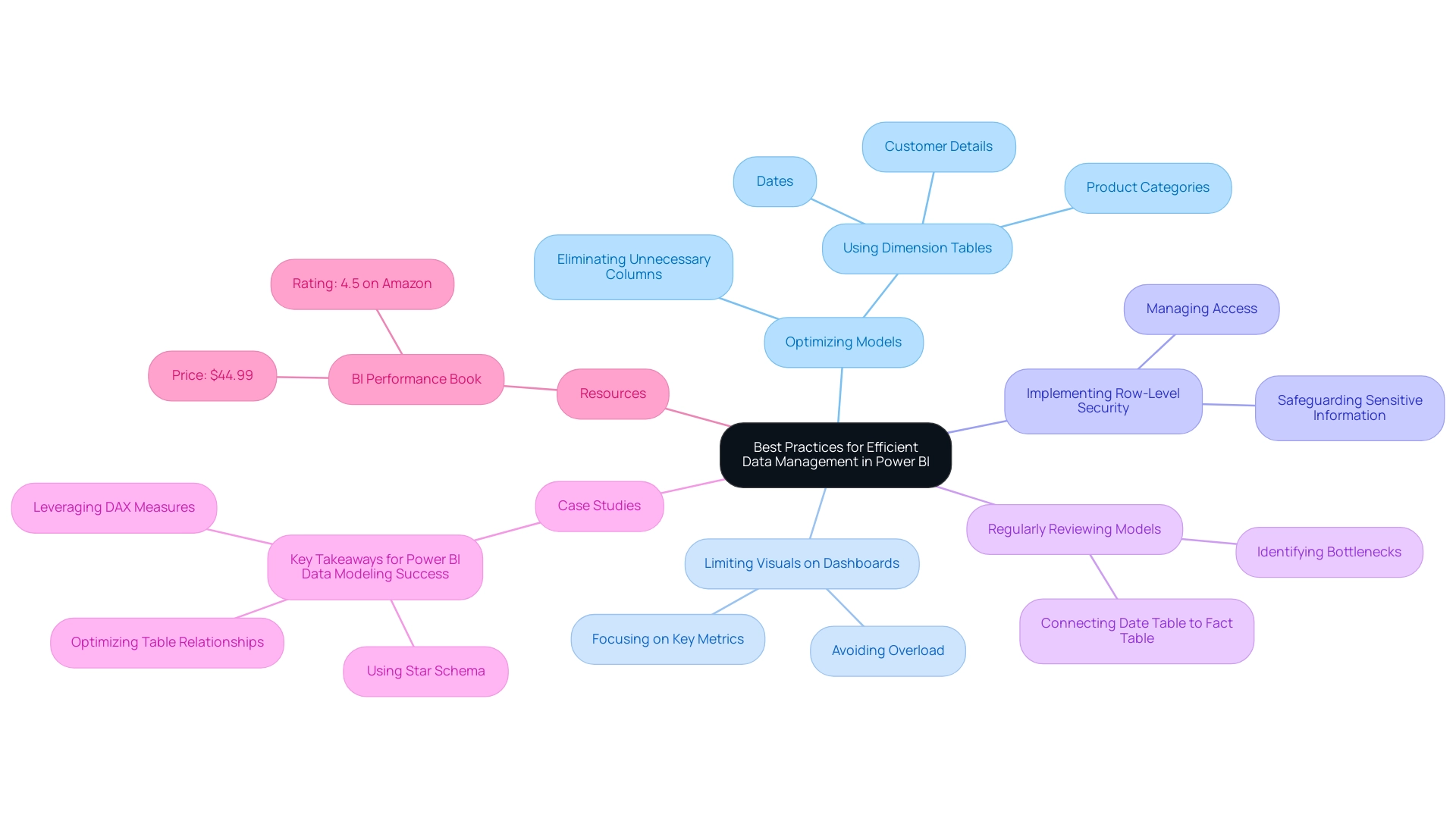

Best Practices for Integrating Images into Power BI Reports

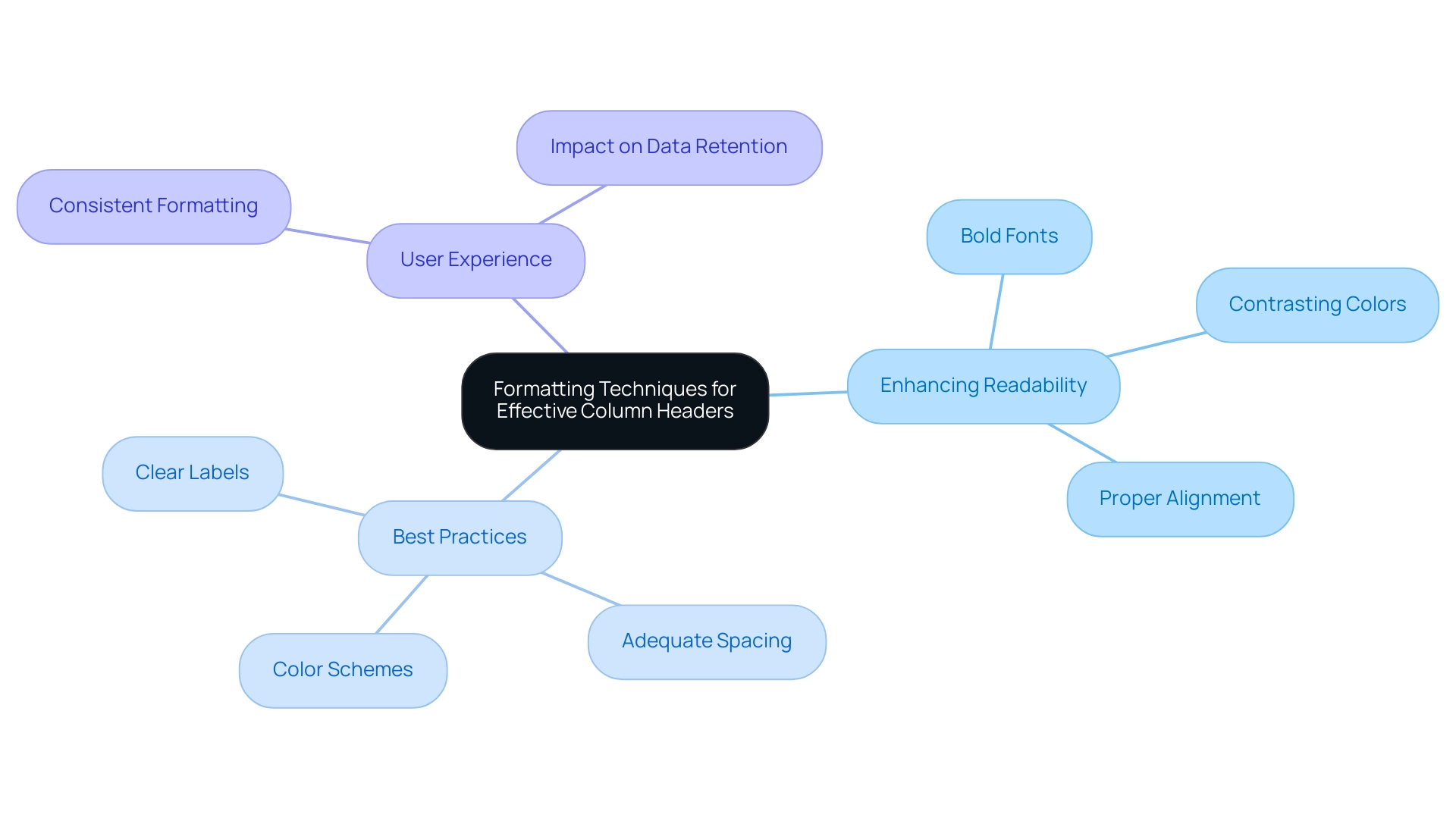

Incorporating BI images into Power BI reports significantly improves the clarity and engagement of your information displays, especially when you rely on Business Intelligence (BI) and Robotic Process Automation (RPA) for operational efficiency. However, challenges such as time-consuming report creation and data inconsistencies can undermine the value of these insights. To achieve optimal results, follow these best practices:

- Use High-Quality Visuals: High-resolution visuals keep your reports clear and professional. Research indicates that content with high-quality visuals can improve user performance in following directions by 323%, which illustrates how much visual quality matters in communication. Similarly, Pinterest users exposed to immersive, actionable ads were 59% more likely to recall the brand, underscoring the role of quality visuals in brand recall.

- Optimize Graphic Size: Oversized images slow report loading and degrade the user experience. Reduce file sizes without sacrificing visible quality so that reports load quickly. Studies show that optimized BI images can significantly decrease loading times, which matters when BI tools are the basis for data-driven insights.

- Maintain Consistency: A consistent style across images, such as uniform color schemes and sizes, creates a cohesive visual narrative throughout the report. Consistency improves aesthetics and also helps the audience understand the information presented, which is essential for decisions driven by BI insights.

- Relevance: Images must relate directly to the data being shown. Relevant visuals improve understanding and retention, while unrelated visuals distract and confuse. Case studies indicate that users engage more with content that includes pertinent visuals, leading to better recall and action; the case analysis of Pinterest's influence on purchases shows how effective visuals can sway consumer behavior, which is why relevant graphics matter in BI reports.

- Accessibility: Make visuals accessible to all users, including those with visual impairments. Adding alternative text descriptions improves accessibility and the experience of every user.
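Resizing an image to the size at which it is actually displayed is one common way to cut file size without a visible loss of quality. The Python sketch below is a minimal illustration and not part of Power BI: it reads a PNG's pixel dimensions from its IHDR header using only the standard library and computes a downscaled target size. The `max_display_width` parameter (the widest the image will appear on the report canvas, in pixels) is an assumption you supply; the actual resampling would be done in an image editor or imaging library.

```python
import struct

PNG_SIGNATURE = b"\x89PNG\r\n\x1a\n"


def png_dimensions(data: bytes) -> tuple[int, int]:
    """Return (width, height) read from a PNG file's IHDR chunk."""
    if len(data) < 24 or not data.startswith(PNG_SIGNATURE):
        raise ValueError("not a PNG file")
    # After the 8-byte signature: 4-byte chunk length, 4-byte chunk type,
    # then width and height as big-endian unsigned 32-bit integers.
    _length, chunk_type = struct.unpack(">I4s", data[8:16])
    if chunk_type != b"IHDR":
        raise ValueError("IHDR chunk missing")
    width, height = struct.unpack(">II", data[16:24])
    return width, height


def target_size(width: int, height: int, max_display_width: int) -> tuple[int, int]:
    """Scale down (never up) so the image is no wider than its display width,
    keeping the aspect ratio. max_display_width is an assumed, user-supplied value."""
    if width <= max_display_width:
        return width, height
    return max_display_width, max(1, round(height * max_display_width / width))
```

For example, a 2400×1200 banner that is never shown wider than 800 pixels could be exported at 800×400, roughly one ninth of the original pixel count.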

By following these best practices, you can use BI images in Power BI to create reports that are both visually appealing and effective at communicating essential insights. Content with visuals also receives up to 40% more shares than content without images, reinforcing the role of graphics in boosting engagement and supporting the broader objectives of BI and RPA, including solutions like EMMA RPA and Automate from Creatum GmbH, in driving business growth.

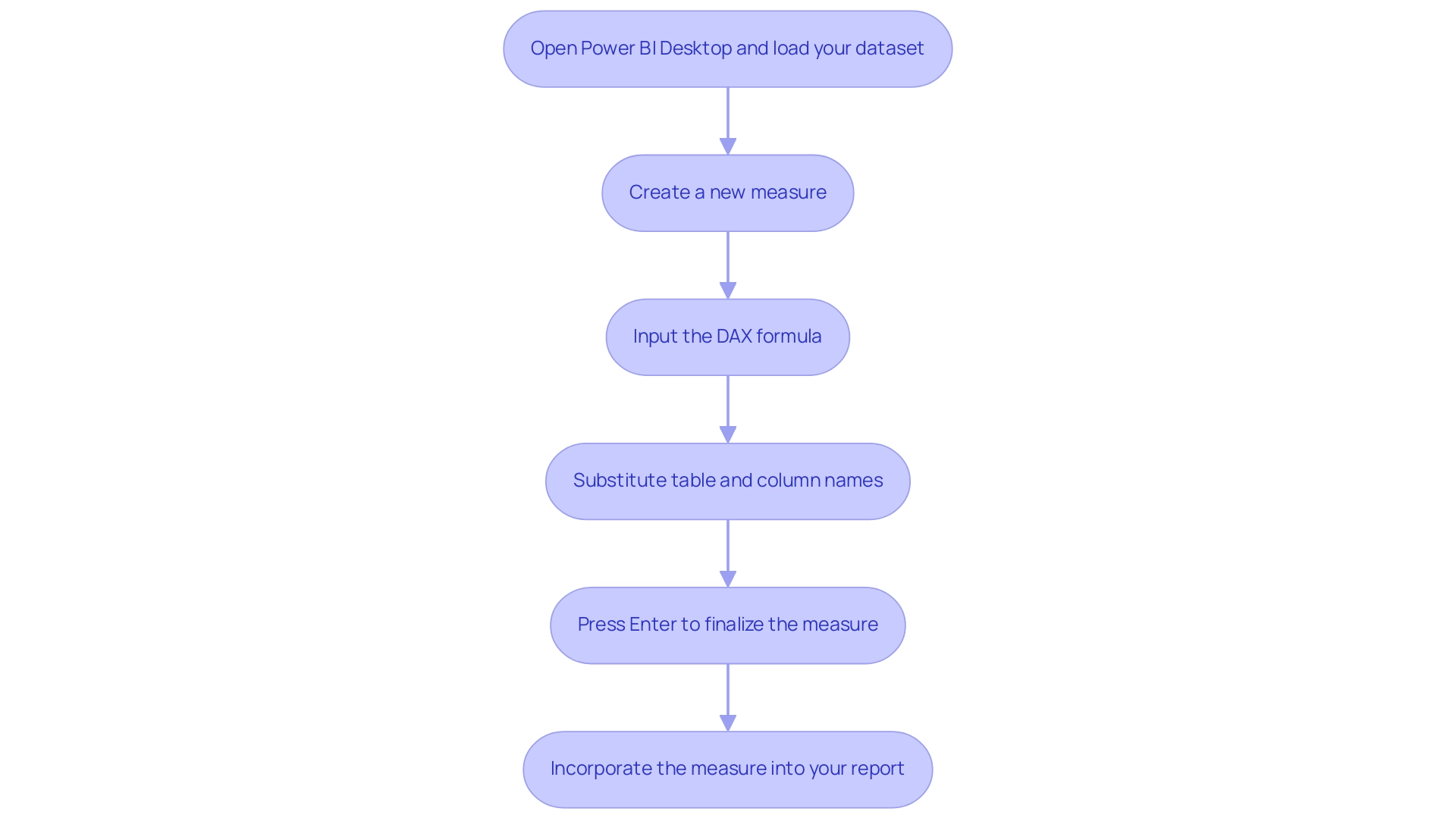

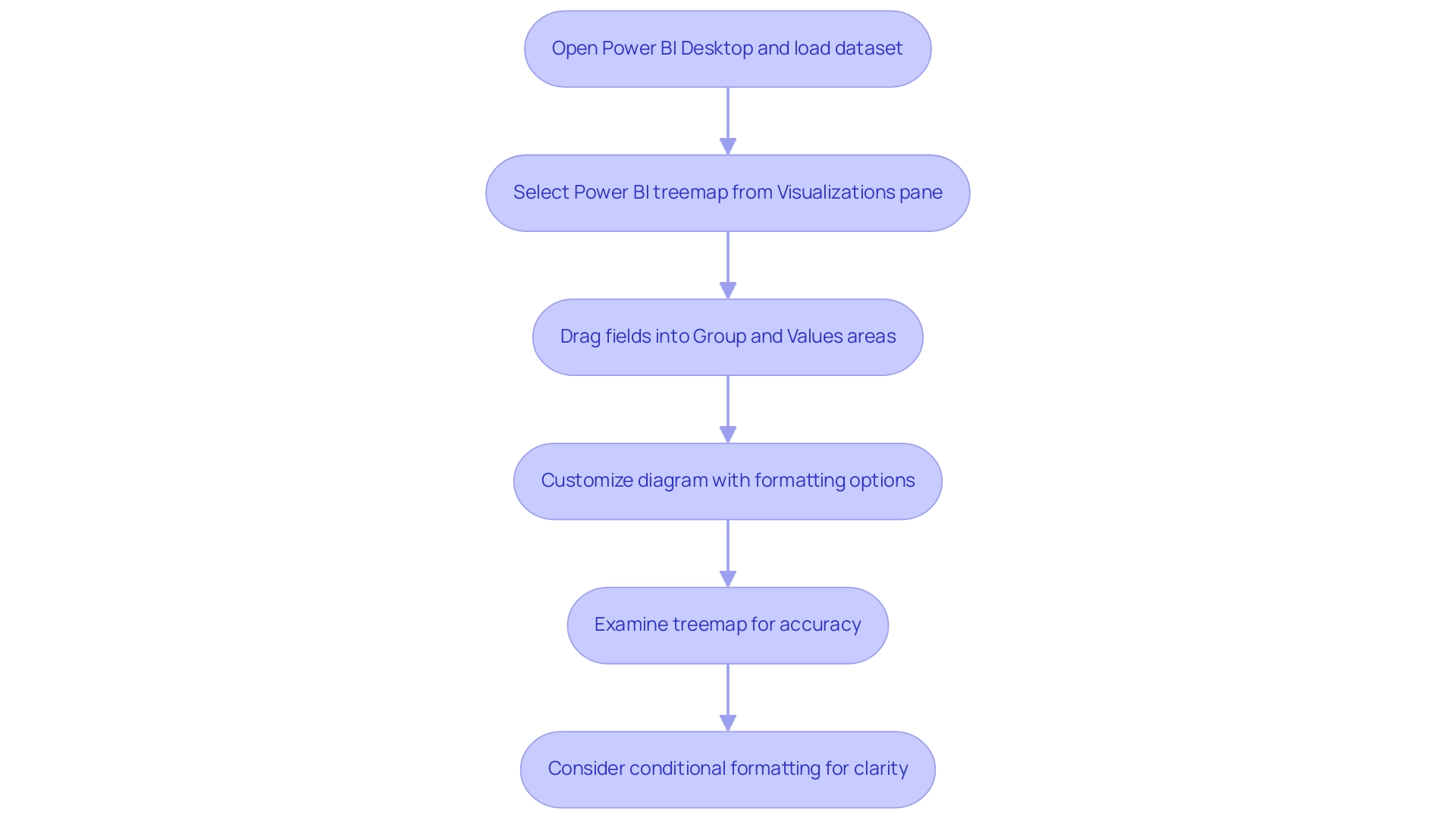

Technical Guide: How to Add and Format Images in Power BI

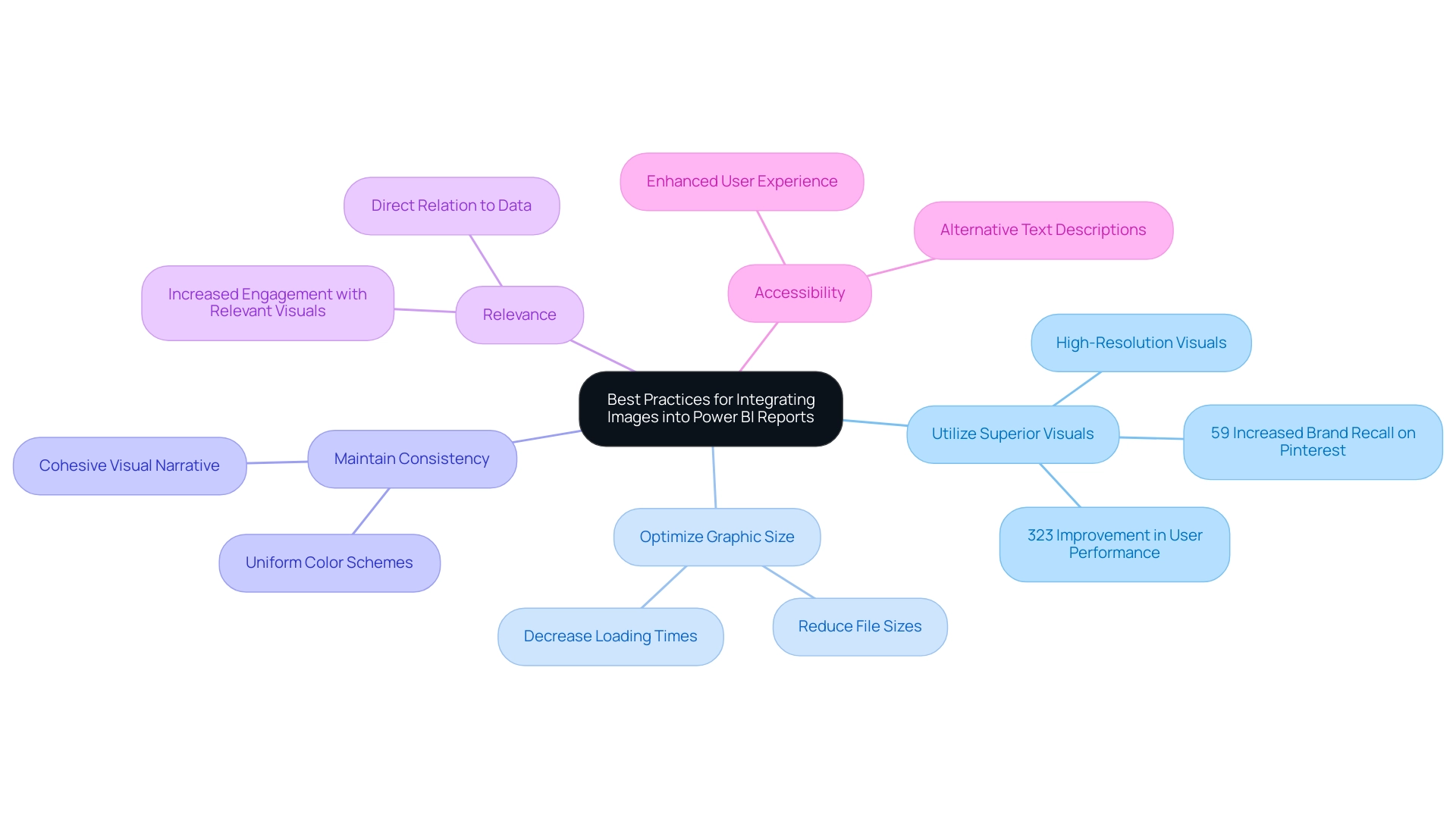

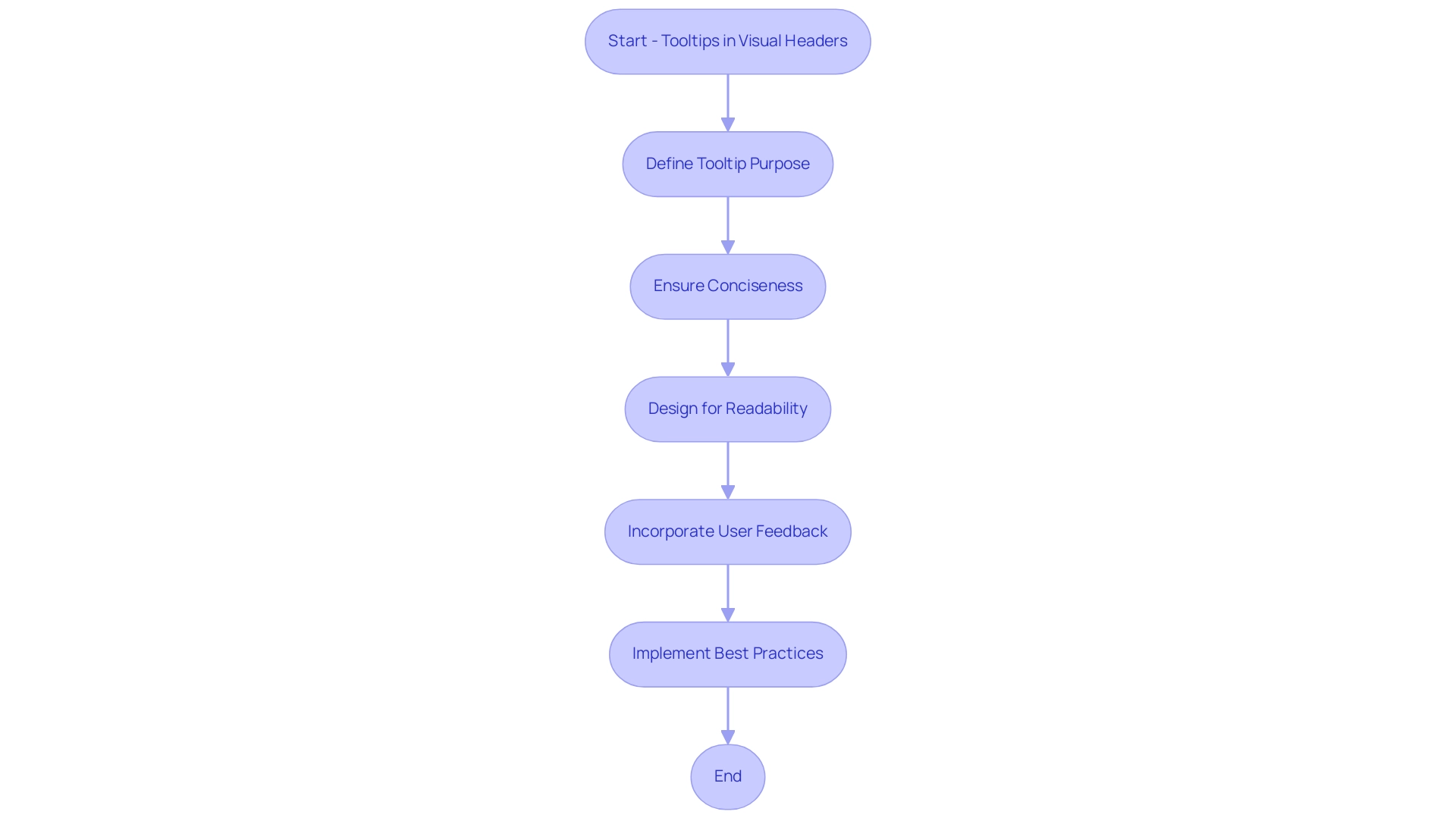

Adding and formatting BI images in Power BI is straightforward and can significantly enhance your reports. However, consider privacy before uploading photos: avoid images that contain embedded location data (EXIF GPS tags), because anyone who can download the image from the website can extract that information.
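If you prepare photos yourself, the location data can be removed before upload. The following Python sketch assumes a baseline JPEG file and uses only the standard library: it walks the JPEG marker segments and drops APP1 segments that start with an `Exif` header, which is where GPS tags are stored; everything from the start-of-scan marker onward is copied unchanged. It is a simplified illustration, not a complete metadata cleaner: it leaves XMP metadata (which can also hold location) untouched, so a dedicated metadata tool is the safer choice for production use.

```python
def strip_exif(jpeg: bytes) -> bytes:
    """Return a copy of a baseline JPEG with its APP1 'Exif' segments removed.

    Simplified sketch: XMP and other metadata segments are kept as-is.
    """
    if jpeg[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG file")
    out = bytearray(jpeg[:2])
    i = 2
    while i < len(jpeg):
        if jpeg[i] != 0xFF or i + 1 >= len(jpeg):
            raise ValueError(f"malformed JPEG at byte {i}")
        marker = jpeg[i + 1]
        if marker == 0xFF:  # optional fill byte before a marker: skip it
            i += 1
            continue
        if marker in (0xDA, 0xD9):  # SOS or EOI: copy the rest verbatim
            out += jpeg[i:]
            break
        # Segment length is big-endian and includes its own two bytes.
        seg_len = int.from_bytes(jpeg[i + 2:i + 4], "big")
        segment = jpeg[i:i + 2 + seg_len]
        is_exif = marker == 0xE1 and segment[4:10] == b"Exif\x00\x00"
        if not is_exif:
            out += segment
        i += 2 + seg_len
    return bytes(out)
```

For a file on disk, you could call `Path("photo_clean.jpg").write_bytes(strip_exif(Path("photo.jpg").read_bytes()))` after `from pathlib import Path`; both file names are hypothetical.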

This consideration is particularly important for users of Creatum GmbH’s platform. Follow these steps to effectively incorporate visuals:

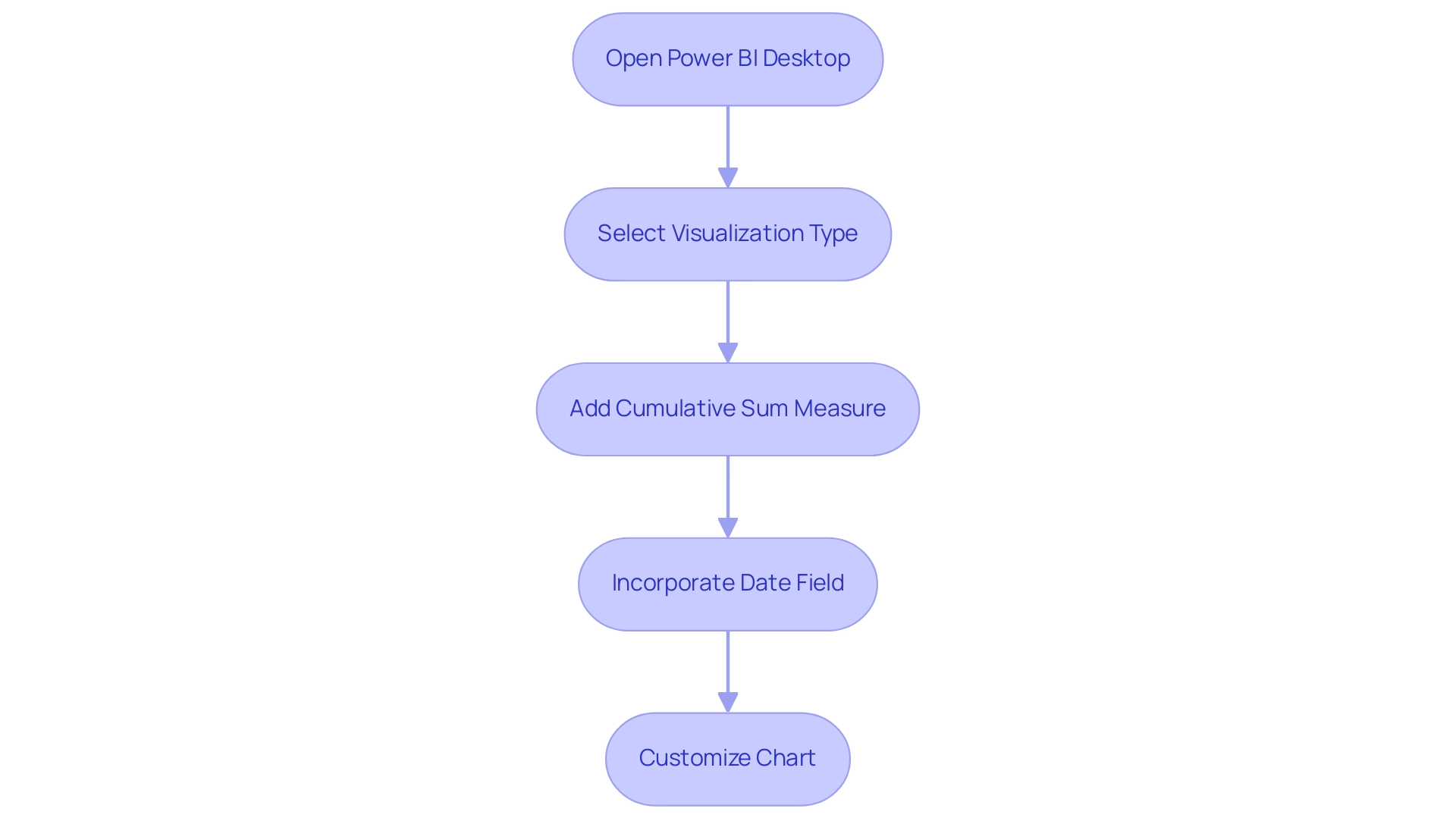

- Insert an Image: Start by navigating to the ‘Insert’ tab in Power BI Desktop and selecting ‘Image’. You can either choose a file from your local machine or provide a URL for online visuals.

- Format the Picture: After inserting the image, resize and position it as needed. Utilize the formatting pane to adjust various properties, including border styles, shadow effects, and transparency levels, ensuring the visual element complements your report’s design.

- Set Image URL: For images hosted online, confirm that the URL is publicly accessible. In the data model, set the column's data category to 'Image URL' (Column tools > Data category) so the images display correctly in tables and matrices.

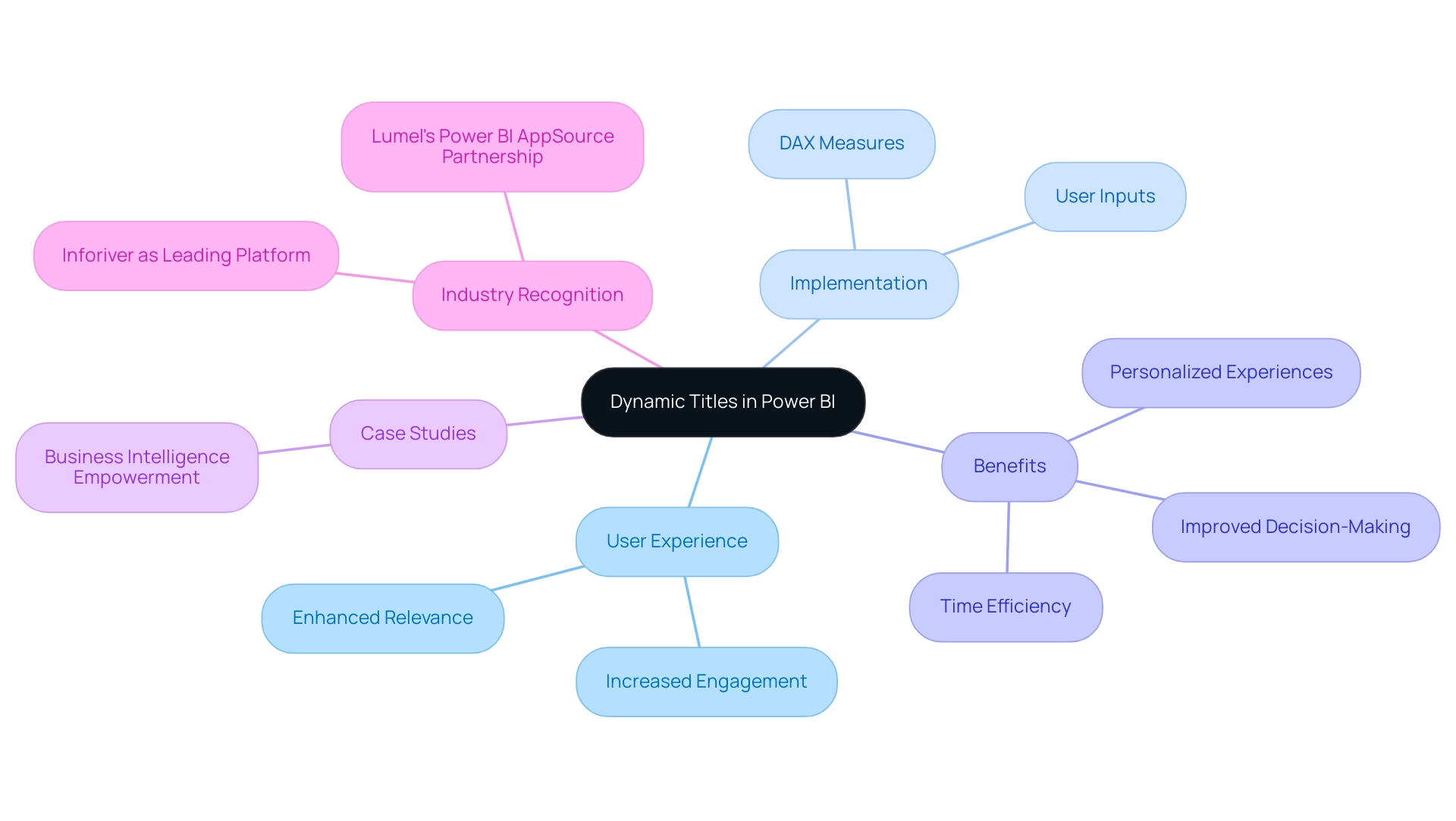

- Dynamic Visuals: To make images respond to user interactions, use a DAX measure that returns a different image URL depending on slicer selections. This allows for a more personalized reporting experience.

- Evaluation: Always review your document across various devices and screen sizes to ensure visuals display properly. This step is crucial for maintaining a professional appearance and ensuring accessibility. Effectively incorporating BI images can lead to better user engagement and understanding.
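In Power BI itself, a dynamic image measure is written in DAX, typically with `SELECTEDVALUE`, which returns the single value in a column when the filter context contains exactly one distinct value and an alternate result otherwise. The Python sketch below only models that decision so the intended behavior can be checked outside Power BI; the product names, URLs, and fallback image are hypothetical placeholders. In a table or matrix, the returned text is shown as a picture only when its data category is set to Image URL.

```python
from typing import Mapping, Sequence

# Hypothetical lookup table: slicer value -> publicly accessible image URL.
IMAGE_BY_PRODUCT: Mapping[str, str] = {
    "Bikes": "https://example.com/images/bikes.png",
    "Helmets": "https://example.com/images/helmets.png",
}

# Hypothetical default image, shown when no single product is selected.
FALLBACK_URL = "https://example.com/images/all-products.png"


def selected_image_url(
    selection: Sequence[str],
    lookup: Mapping[str, str] = IMAGE_BY_PRODUCT,
    fallback: str = FALLBACK_URL,
) -> str:
    """Model of a SELECTEDVALUE-style measure.

    Exactly one distinct selected value -> that value's image URL.
    No selection, several values, or an unmapped value -> the fallback URL.
    """
    distinct = set(selection)
    if len(distinct) != 1:
        return fallback
    (value,) = distinct
    return lookup.get(value, fallback)


print(selected_image_url(["Bikes"]))             # https://example.com/images/bikes.png
print(selected_image_url(["Bikes", "Helmets"]))  # https://example.com/images/all-products.png
```

Returning the fallback image for an unmapped value is a design choice of this sketch; a DAX measure could equally return `BLANK()` and show nothing.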

A recent case study on Power BI usage showed how visualizing user interactions can guide organizations in optimizing their resources. By identifying which reports are actively used, teams can focus on maintaining and enhancing the most valuable ones while addressing underused material. Results from the execution log are sorted in descending order, starting with the most recent execution, which makes usage patterns easier to follow.

As of 2025, statistics indicate that documents containing BI images experience a higher engagement rate, making it crucial to remain informed on best practices for visual formatting. Professional guidance underscores the significance of clarity and relevance in visual selection, ensuring that each visual component contributes value to the overall narrative of the document. Additionally, it is essential to consider the challenges in utilizing insights from BI dashboards, such as the time-consuming creation of documents, inconsistencies in information, and the absence of actionable guidance, to enhance the overall effectiveness of your reporting.

The Importance of Image Quality and Selection in Reporting

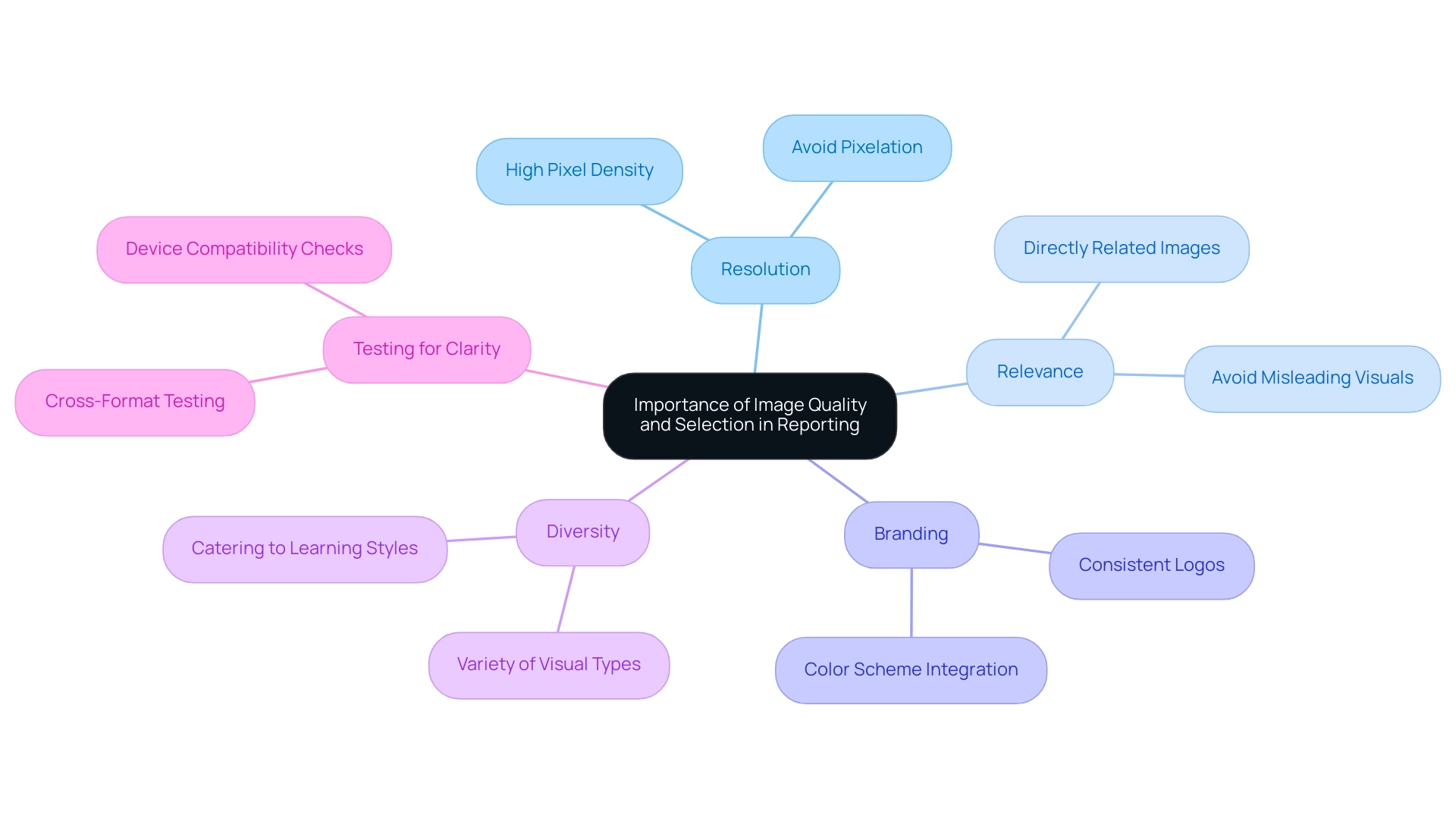

The quality and variety of BI images in Power BI documents are crucial for shaping how data is perceived and understood. This is particularly relevant given the frequent challenges such as the time-consuming nature of document creation and the data inconsistencies that arise from a lack of governance strategy. High-quality visuals, including BI images, not only bolster the credibility of reports but also enable more informed decision-making by providing clear, actionable guidance. Below are essential considerations for optimizing image use in reporting:

- Resolution: Ensure that BI images possess a resolution that meets display requirements. Low-resolution BI images can appear pixelated, undermining professionalism and clarity, potentially exacerbating confusion stemming from inconsistent information.

- Relevance: Select BI images that are directly related to the information being presented. Irrelevant BI images can mislead the audience and obscure the intended message, leading to misinterpretations and complicating the creation process.

- Branding: Integrate brand elements such as logos and color schemes to maintain consistency and reinforce brand identity. This not only enhances recognition but also fosters trust in the report’s findings, which is essential when stakeholders navigate intricate information.

- Diversity: Employ a variety of visual types—BI images, charts, icons, and photographs—to address different facets of the data and engage the audience effectively. This diversity caters to various learning styles and preferences, making the information more accessible and actionable.

- Testing for Clarity: Always test how BI images render across different formats and devices to ensure they retain clarity and impact. This step is vital in a data-rich environment where the effectiveness of BI images can significantly influence decision-making performance and reduce the time spent on report adjustments.

Research indicates that while user preferences for visualizations exist, they do not significantly correlate with actual judgment accuracy or efficiency. This suggests that the impact of visualizations may be broader than previously believed, underscoring the necessity for high-quality visuals that resonate with a diverse audience. In 2025, the importance of visual quality, particularly BI images, in business intelligence reporting cannot be overstated, as it directly affects how information is interpreted and acted upon.

Experts in branding emphasize that well-selected BI images enhance report credibility, reinforcing the notion that effective visual communication is vital to successful business intelligence strategies. Furthermore, the case study titled “User Preferences in Visualization” illustrates how user preferences can influence decision-making performance, providing a real-world example that highlights the significance of effective visual aids. Additionally, as noted by Leisti and Häkkinen, decision time data from earlier experiments supports the idea that strategic selection approaches, particularly those utilizing BI images, can yield more consistent outcomes, aligning with the audience’s interest in operational efficiency.

At Creatum GmbH, we recognize that addressing these challenges is essential for effectively leveraging insights from BI dashboards.

Overcoming Challenges: Solutions for Effective Image Use in Power BI

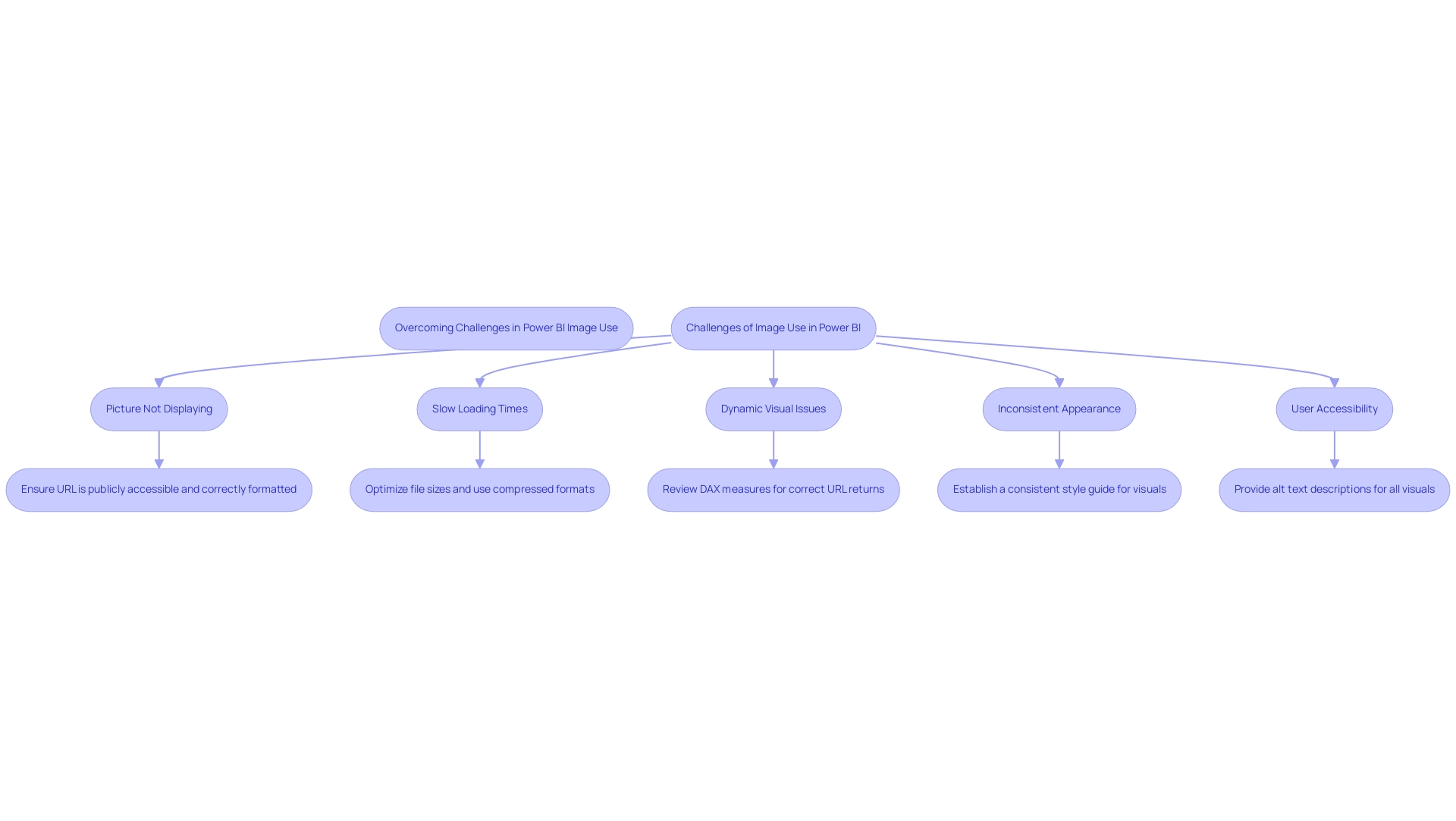

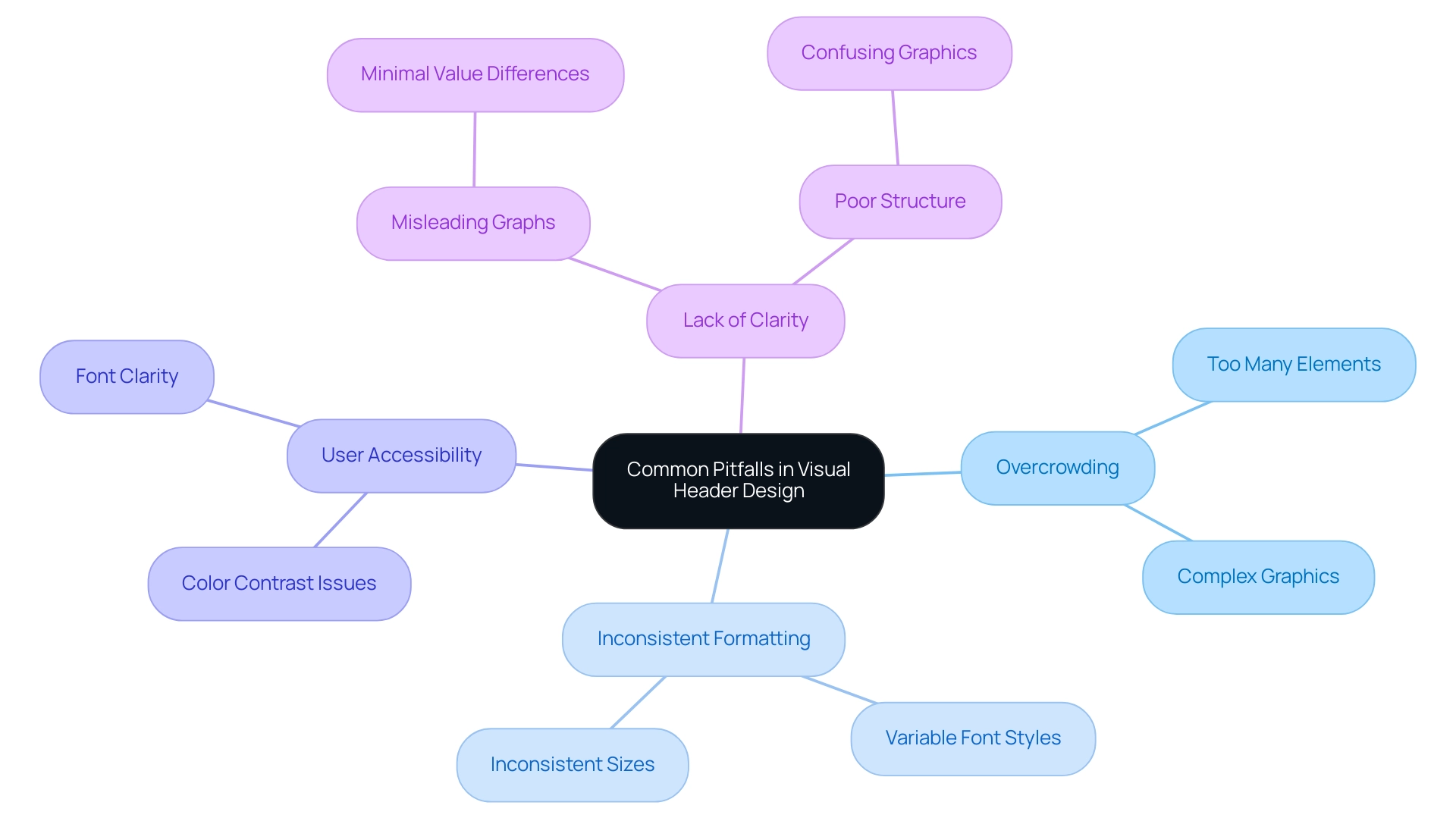

Incorporating BI images can present a range of difficulties that might obstruct reporting efficiency. Common issues include:

- Picture Not Displaying: Ensure that the URL is publicly accessible and correctly formatted. Opening the URL in a web browser confirms whether it works.

- Slow Loading Times: Optimize file sizes before uploading images to Power BI. Compressed formats such as JPEG or PNG can significantly reduce file sizes while maintaining visual quality, which improves loading times. Research indicates that properly optimized visuals can decrease loading times by as much as 30%, freeing your team to concentrate on more strategic, value-adding tasks.

- Dynamic Visual Issues: If dynamic visuals fail to display correctly, review the DAX measures behind them. Make sure each measure returns the correct URL for every user selection, which is essential for dynamic reporting and informed decision-making.

- Inconsistent Appearance: Establish a style guide for visuals to achieve a cohesive look across reports. Regularly reviewing and refreshing visuals to reflect branding changes keeps reports consistent and professional and addresses the frequent problem of inconsistent information.

- User Accessibility: To meet accessibility standards, provide alt text for all visuals. Users with visual impairments can then understand the content, which improves the overall user experience and trust in the data presented.
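The formatting part of the first item can be checked before a URL ever reaches the data model. The helper below is hypothetical and uses only Python's standard `urllib.parse`; it flags common formatting mistakes such as local file paths, missing host names, stray whitespace, and unencoded spaces. It cannot tell whether the image is publicly reachable, so opening the URL in a browser remains necessary.

```python
from urllib.parse import urlparse


def image_url_problems(url: str) -> list[str]:
    """List likely formatting problems in an image URL; an empty list means none were found.

    Checks form only: it cannot tell whether the image is publicly reachable.
    """
    problems = []
    stripped = url.strip()
    if stripped != url:
        problems.append("leading or trailing whitespace")
    parsed = urlparse(stripped)
    if parsed.scheme not in ("http", "https"):
        problems.append("scheme should be http or https")
    if not parsed.netloc:
        problems.append("missing host name")
    if " " in stripped:
        problems.append("unencoded space in URL")
    return problems
```

A local path such as `r"C:\images\logo.png"` is flagged because it has no http(s) scheme and no host, which is a common reason an image URL column shows no picture.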

In addition, the Performance Analyzer in Power BI Desktop records how long each report element takes to load, offering insight into how BI images affect overall performance. Note also that Power BI requires internet access for its online service and paid offerings, which may be a challenge for users who need offline access.

In 2025, case studies show that organizations that implemented these solutions achieved clearer reports and higher user engagement. Data analysts agree that addressing these common issues with BI images is vital for getting the most out of BI reporting. Utpal Kar points to the underlying complexity of the tool itself: “This is one of the major BI drawbacks as Microsoft has designed BI in a very complex manner.”

This underscores the importance of effective strategies in navigating the challenges associated with image usage in Power BI, especially in the context of time-consuming report creation and the need for actionable guidance.

Conclusion

Incorporating Business Intelligence (BI) images into Power BI reports is not merely an enhancement; it is a transformative approach that significantly boosts the effectiveness of data visualization and storytelling. High-quality visuals can improve comprehension by up to 40%, making complex information more accessible and engaging for stakeholders. By adhering to best practices—such as using high-resolution images, optimizing their size, and ensuring relevance and consistency—organizations can create reports that not only look professional but also facilitate informed decision-making.

The technical guidance provided demonstrates that adding and formatting images in Power BI is a manageable task that can yield substantial benefits. Understanding the nuances of image selection allows organizations to craft narratives that resonate with their audience, ultimately driving better engagement and understanding of the data at hand. Moreover, addressing common challenges associated with image use—like loading times and accessibility—ensures that reports remain effective and inclusive.

In summary, the strategic use of BI images in Power BI is essential for enhancing report clarity and stakeholder engagement. As organizations continue to navigate the complexities of data reporting, leveraging high-quality visuals will be crucial in transforming data into actionable insights. The commitment to effective data storytelling through visuals not only improves comprehension but also reinforces the importance of making informed decisions based on clear and compelling evidence.

Overview

Power BI storytelling is crucial for crafting engaging data presentations that convey insights through a narrative framework. This approach enhances audience retention and improves understanding. By emphasizing clarity, tailored messaging, and the integration of visuals, we demonstrate that these elements significantly elevate the impact of presentations, which ultimately supports better decision-making within organizations.

Introduction

In a world inundated with data, weaving compelling narratives from numbers has become an indispensable skill for professionals. Data storytelling in Power BI transcends mere presentation; it merges data, narrative, and visuals to create engaging and memorable experiences. This approach simplifies complex information and enhances retention, making insights accessible and actionable. As businesses increasingly rely on data-driven strategies, mastering the art of storytelling can lead to significant improvements in productivity and operational efficiency. With the right techniques, organizations can transform raw data into powerful stories that resonate with audiences and drive strategic decisions.

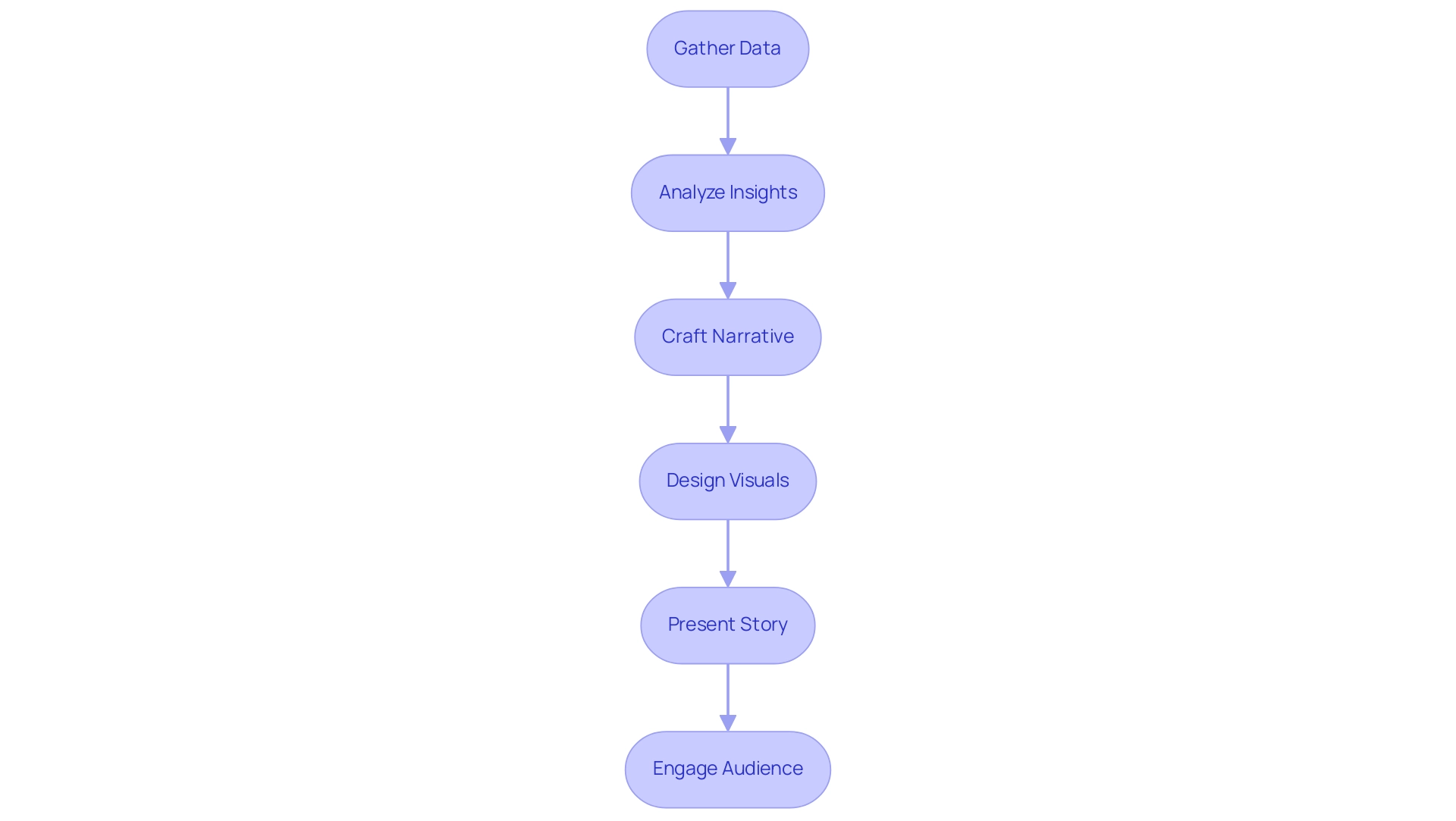

Understanding Data Storytelling in Power BI

Power BI storytelling merges data, narrative, and visuals to create presentations that are both cohesive and captivating. It enables analysts to distill complex information into relatable, comprehensible formats. By placing information within a storytelling framework, users can highlight key insights and emphasize why the information matters.

This narrative method engages the audience and greatly improves information retention, making it a crucial skill for professionals working with Power BI.

In 2025, recent statistics underscore the value of visual narratives in business communication: instructions that include visuals can yield a 15% boost in productivity compared with text-only instructions. Raja Antony, a Content Writer at Visme, notes that ‘instructions with visuals lead to a 15% boost in productivity compared to instructions with solely text,’ which supports the case for visual storytelling. Additionally, a market survey found that 39% of attendees were uncertain about their organization’s data-driven culture, highlighting the need for compelling narratives to bridge this gap.

The importance of narrative in presentations cannot be overstated; it transforms raw data into compelling stories that resonate with audiences. Effective examples of information narration through Power BI storytelling demonstrate how organizations can leverage these techniques to enhance their marketing efforts and successfully convey their brand messages. With Creatum GmbH’s Power BI services, including the 3-Day Power BI Sprint for rapid report creation and the General Management App for comprehensive management, businesses can ensure efficient reporting and actionable insights.

Moreover, the integration of AI solutions like Small Language Models and GenAI Workshops can significantly enhance information quality and improve the narrative process. As information visualization tools become more accessible to small and medium enterprises, the potential for meaningful narratives expands, enabling these organizations to utilize insights for strategic advantage.

Ultimately, Power BI storytelling means mastering data narration, which goes beyond presentation: it is about crafting a narrative that engages, informs, and drives action, so that insights are both memorable and actionable. For those keen to advance their skills in this area, enrolling in relevant courses (completing a registration form and paying the tuition fee) can provide valuable training. Participants who lack domain knowledge often struggle to present relevant information frameworks, which underscores the essential role of effective narrative communication in overcoming these hurdles.

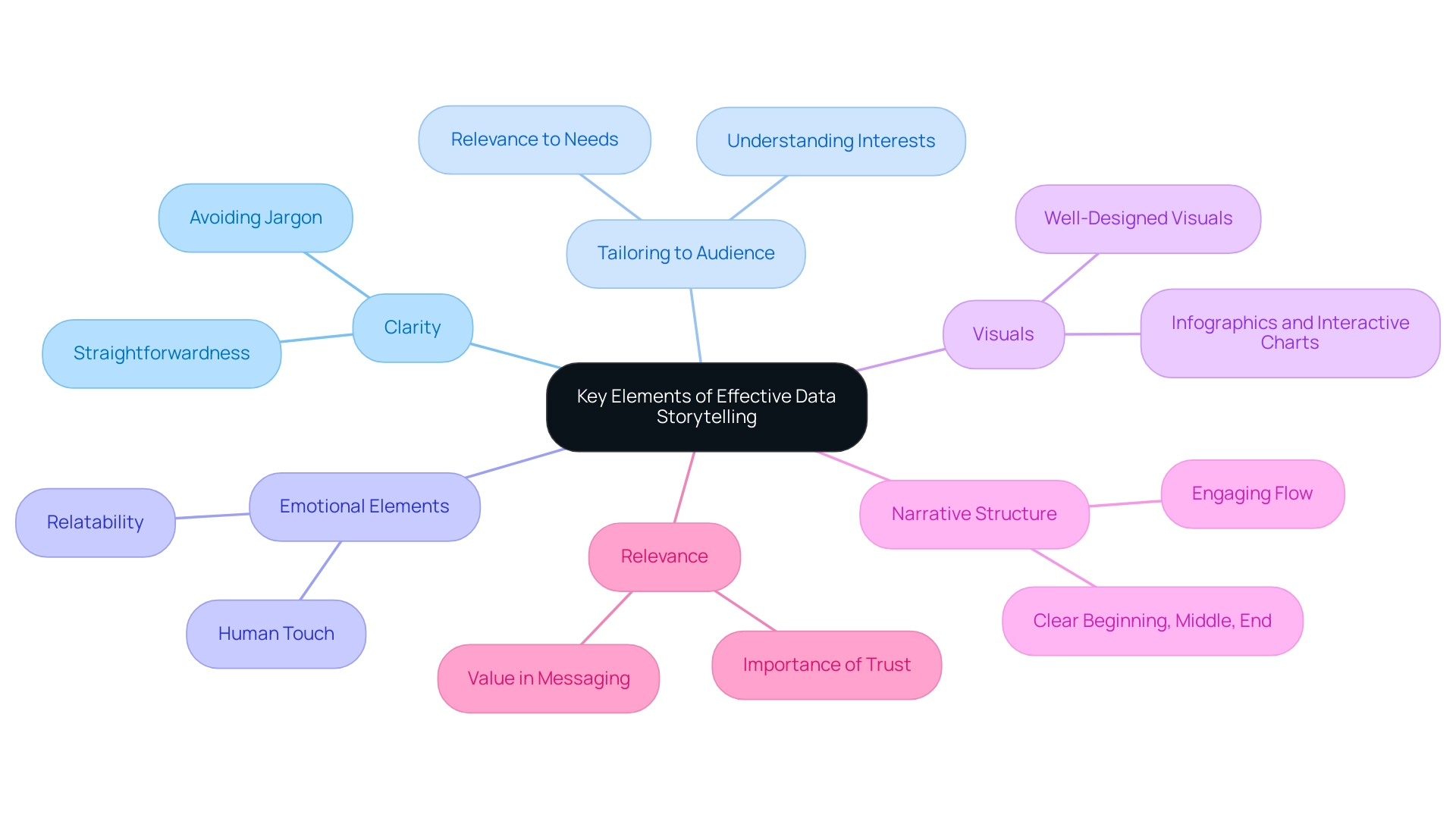

Key Elements of Effective Data Storytelling

Effective Power BI storytelling rests on several essential elements that improve understanding and engagement. Clarity comes first: a clear narrative is straightforward and free of jargon that could confuse listeners, so the message is easily grasped and decisions are easier to make.

Tailoring the story to the audience’s specific interests and needs is vital. Information framed around what matters to listeners captures their attention and underscores the significance of what is being shared. For operational efficiency, Robotic Process Automation (RPA) from Creatum GmbH can streamline workflows, freeing time to present information that aligns with business objectives.

Incorporating emotional elements into the story can significantly enhance relatability and memorability. When Power BI storytelling incorporates a human touch, it fosters a deeper connection with the audience, making the insights more impactful. The strategic use of visuals is essential for complementing the narrative in Power BI storytelling.

Well-designed visuals are essential for Power BI storytelling, as they simplify complex information, making it more digestible and engaging. For instance, incorporating infographics or interactive charts can greatly enhance Power BI storytelling by transforming raw numbers into compelling stories. This is especially important as organizations increasingly depend on information-driven insights to enhance operational efficiency, as observed in the case study of a mid-sized company that improved its processes through GUI automation, achieving a 70% reduction in entry errors and an 80% increase in workflow efficiency.

A well-organized narrative with a clear beginning, middle, and end leads viewers through the information journey. This structure not only aids understanding but also supports Power BI storytelling, keeping the audience engaged and allowing them to follow the narrative seamlessly.

In 2025, the emphasis on clarity and relevance in information presentations is more critical than ever. Statistics show that businesses emphasizing clear communication in their information narratives experience enhanced operational efficiency and decision-making results. For instance, Walmart’s global sales crossed $600 billion, highlighting the significance of operational efficiency in a competitive market.

Additionally, Brinda Gulati predicts that the worldwide Big Data analytics market will attain $549.73 billion by 2028, emphasizing the increasing significance of information narratives in business strategy. As organizations navigate a data-rich environment, the ability to utilize Power BI storytelling to present information clearly and relevantly will be a key differentiator in achieving strategic goals. Furthermore, a case study on mobile commerce trends shows that nearly 60% of Canadians used mobile phones for online purchases, illustrating how effective information presentation can enhance customer engagement and streamline processes.

The recent news indicating that pharmaceutical and healthcare companies scored highest in corporate communications clarity further emphasizes the importance of trust and value in messaging, which ties back to the relevance and clarity in storytelling. Before implementing RPA and GUI automation, many companies faced challenges such as manual information entry errors and slow software testing, which hindered their operational efficiency.

Proven Strategies for Crafting Compelling Data Narratives

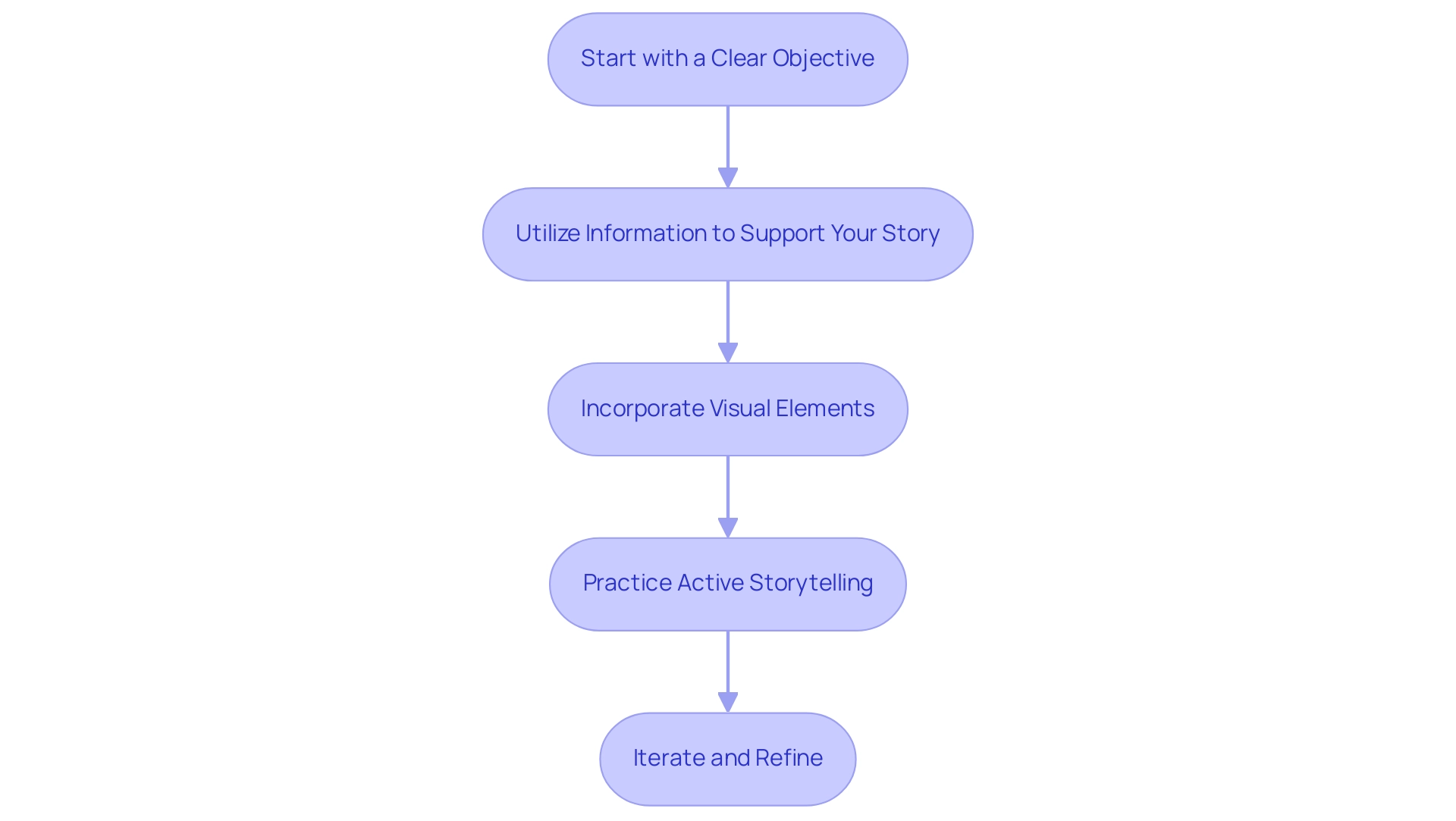

To create compelling data narratives in Power BI, implementing the following strategies is essential:

- Start with a Clear Objective: Define a clear goal for your presentation. Research indicates that presentations with clear objectives are 70% more likely to resonate with audiences, leading to better retention and engagement, which underscores the value of a structured approach to Power BI storytelling.

- Use Data to Support Your Story: Every statistic should reinforce your narrative. Aligning your data with your message produces a unified narrative that connects with your listeners. For instance, Google Trends data gathered via web scraping can reveal consumer interests and behaviors that enrich a narrative. This approach improves understanding and builds trust in the information presented.

- Incorporate Visual Elements: Charts, graphs, and infographics are vital for illustrating key points. Effective visuals can increase viewer engagement by up to 80%, making complex data more accessible and memorable. Services such as Creatum GmbH’s 3-Day Power BI Sprint can help you quickly produce professionally designed reports that strengthen the visual narrative.

- Practice Active Storytelling: Engage your audience with interactive elements. Asking questions and encouraging participation makes the narrative more dynamic and relatable. As Imed Bouchrika, PhD, Co-Founder and Chief Data Scientist, states, “The learning process is predetermined and goes on a straight line,” highlighting the importance of a structured narrative.

- Iterate and Refine: Seek feedback on your presentations continuously to identify areas for improvement. Iterating on audience responses makes your storytelling more effective and demonstrates a commitment to delivering value. Business intelligence lets you turn raw data into actionable insights that drive decision-making. RPA solutions like Power Automate can further automate workflows, tackling time-consuming report creation and inconsistencies, and the General Management App can improve operational efficiency, so your data stories are both engaging and actionable.

By utilizing these approaches, you can convert your presentations into engaging stories that exemplify Power BI storytelling, which not only inform but also motivate action. At Creatum GmbH, we are committed to assisting you in realizing the full potential of your information.

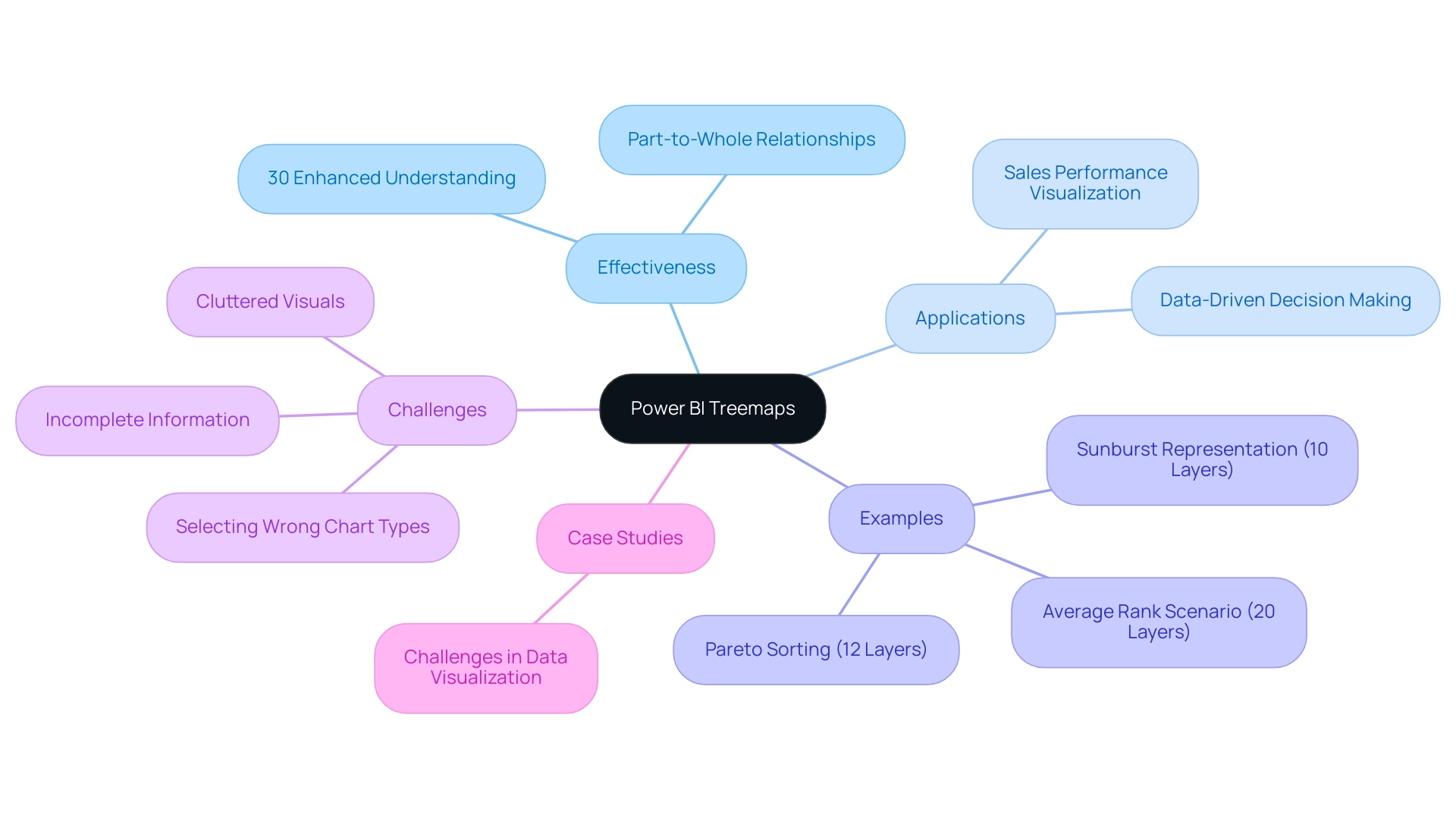

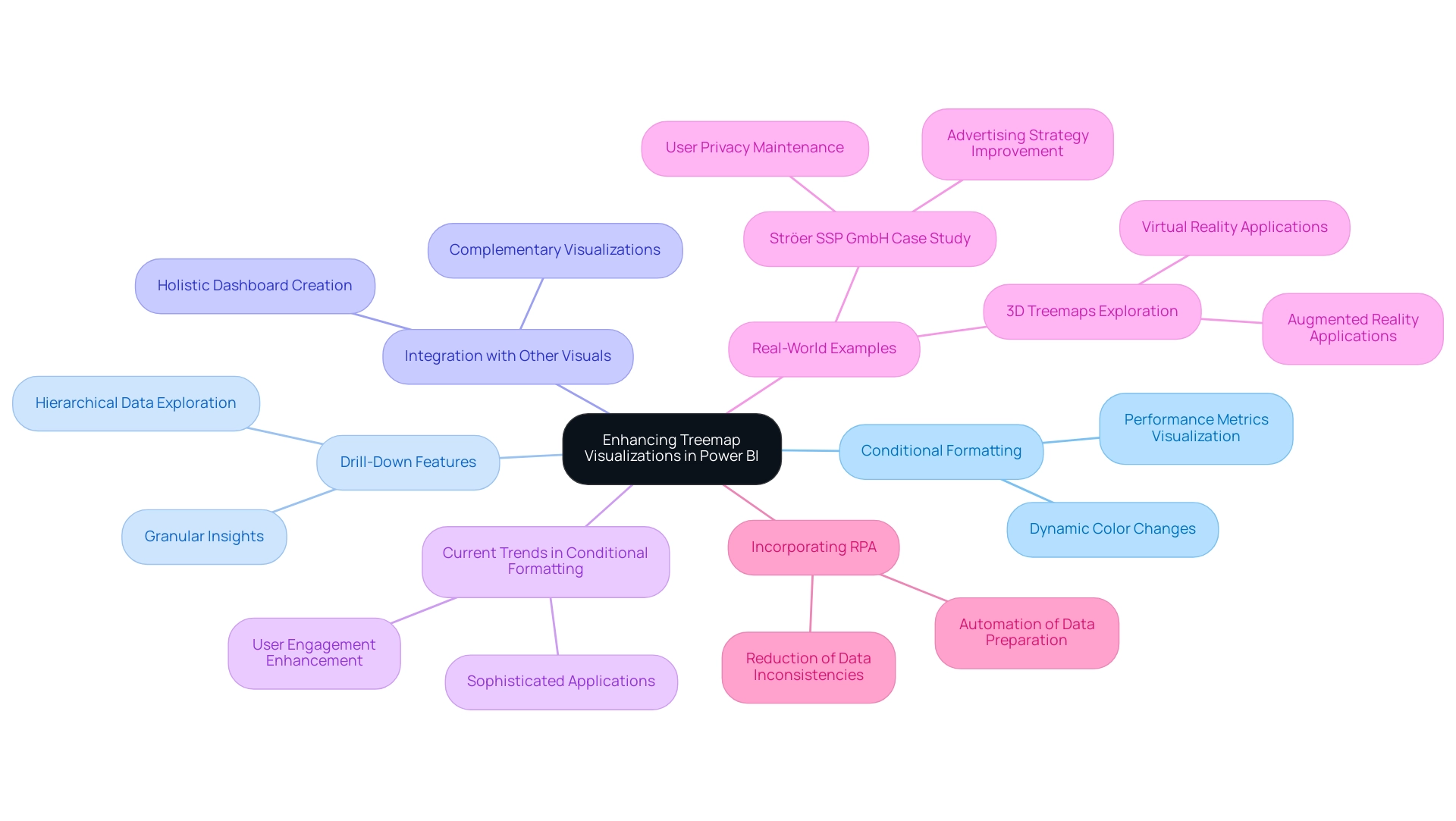

Leveraging Visualizations to Enhance Your Data Story

Visualizations are critical for crafting engaging narratives that highlight Power BI storytelling. To maximize their impact, consider these essential best practices:

- Choose the Right Type of Visualization: Selecting the appropriate visualization is fundamental. Line charts are ideal for showing trends over time, while bar charts effectively compare categories. Understanding the nature of your data will guide the choice.

- Keep It Simple: Clarity is paramount. Overloading visuals with information obscures the main message; aim for simplicity so your audience can quickly grasp the insights you want to convey.

- Use Color Wisely: Color affects both aesthetics and interpretation. Thoughtful color choices can highlight essential information and evoke emotional responses. Make sure your palette is accessible, and keep it restrained: studies indicate that too many colors can increase interpretation time by up to 20%.

- Incorporate Interactive Elements: Filters and drill-down options let users engage with the data more deeply. This interactivity encourages exploration and can lead to more meaningful insights, addressing the common challenge of extracting actionable guidance from Power BI dashboards.

- Provide Contextual Information: Context is vital for understanding the significance of the data. Clear titles, annotations, and explanations turn raw data into a narrative that resonates with viewers and improves understanding and retention. Emphasizing context can significantly improve the effectiveness of your visualizations, particularly when addressing inconsistencies in the data.

- Ensure Accessibility: Design dashboards with accessibility in mind. Accounting for users with visual impairments can greatly increase user satisfaction and adoption, as the case study “Ensuring Accessibility in Dashboards” demonstrates. Accessible dashboards broaden your audience and raise overall engagement.

- Enhance Relationships: Simplifying measures and optimizing relationships in the Power BI data model can speed up DAX query execution and analysis. This practice improves overall dashboard performance and reduces the time spent creating reports.
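The color accessibility advice can be made concrete with the WCAG 2.x contrast ratio, a widely used measure of whether two colors (for example, a data label and its background) are distinguishable. The text does not prescribe a specific test, so this Python sketch is one reasonable choice rather than a Power BI feature: it computes relative luminance from `#RRGGBB` hex colors and returns a ratio between 1:1 and 21:1. WCAG level AA asks for at least 4.5:1 for normal text and 3:1 for large text and for graphical objects such as chart elements; the `meets_aa` helper and its parameter names are hypothetical.

```python
def _linearize(channel_8bit: int) -> float:
    """Convert an 8-bit sRGB channel to linear light, as defined in WCAG 2.x."""
    c = channel_8bit / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB' (or 'RRGGBB')."""
    h = hex_color.lstrip("#")
    if len(h) != 6:
        raise ValueError(f"expected #RRGGBB, got {hex_color!r}")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); argument order does not matter."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def meets_aa(color_a: str, color_b: str, large_or_graphic: bool = False) -> bool:
    """Hypothetical helper: 4.5:1 for normal text, 3:1 for large text and graphics."""
    return contrast_ratio(color_a, color_b) >= (3.0 if large_or_graphic else 4.5)
```

For example, gray #767676 on white comes out at about 4.54:1 and passes the 4.5:1 text threshold, while the slightly lighter #777777 comes out at about 4.48:1 and does not, although it still clears the 3:1 threshold for chart elements.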

By adhering to these best practices, you can create impactful visualizations that enhance Power BI storytelling, effectively showcasing information and narrating a compelling story that drives informed decision-making. Leveraging Business Intelligence and RPA solutions like EMMA RPA and Power Automate from Creatum GmbH can further bolster operational efficiency and business growth. Book a free consultation to learn more.

Engaging Your Audience: Tailoring Stories for Impact

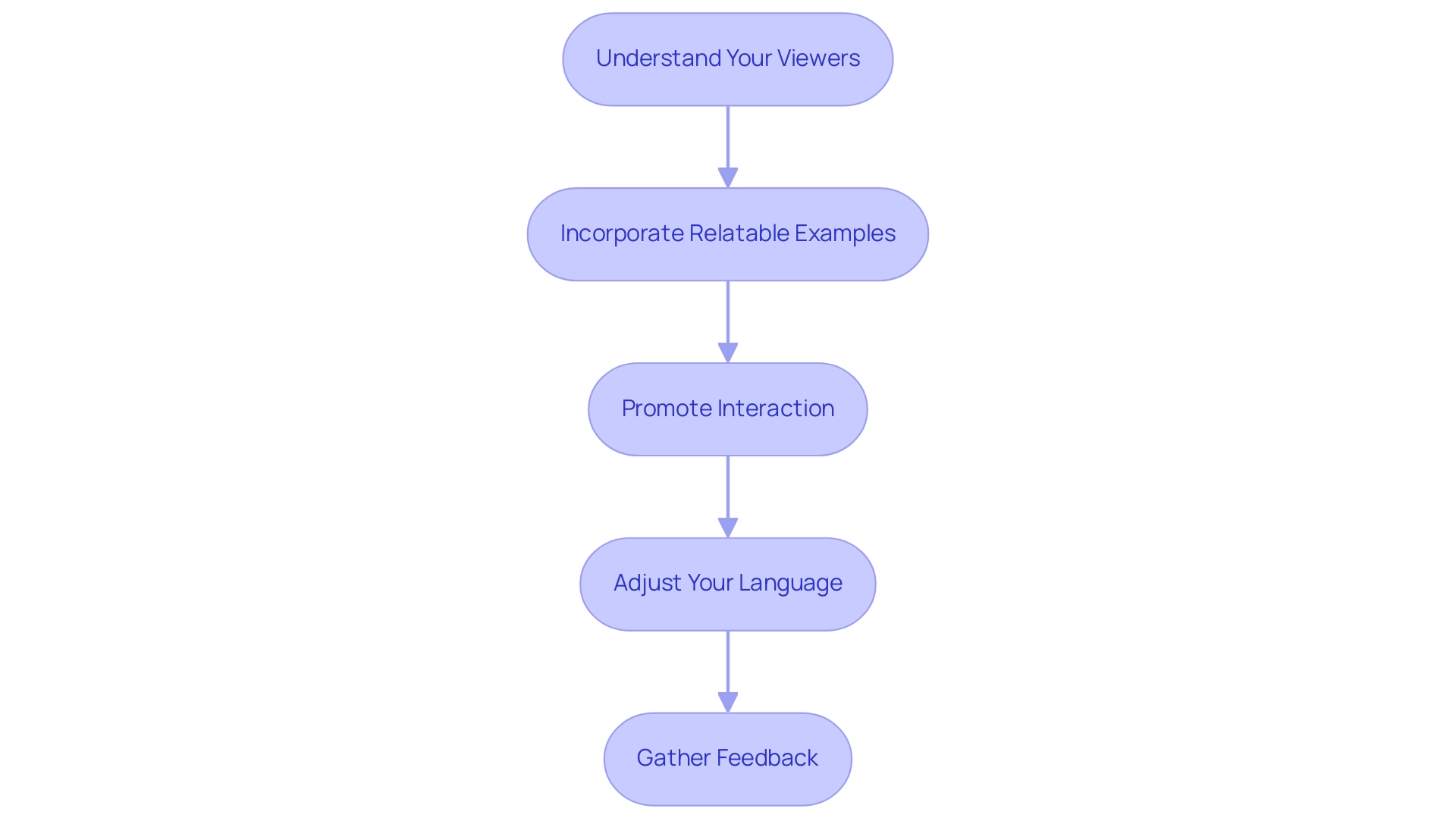

Engaging your audience is essential for impactful Power BI storytelling, particularly in today's fast-moving AI landscape. To maximize impact, consider the following strategies:

- Understand Your Viewers: Research your audience's background, interests, and familiarity with the data. This knowledge lets you tailor your narrative to their specific needs and expectations. As highlighted by Invesp, companies with robust omnichannel engagement strategies retain 89% of their customers, underscoring the importance of understanding your target audience. Customized AI solutions from Creatum GmbH can also help you identify the technologies that best address the challenges your users face in navigating the complexities of AI.

- Incorporate Relatable Examples: Use examples that reflect your audience's experiences or challenges. This connection makes the material relatable and keeps the presentation engaging. Insights derived from Business Intelligence let you use Power BI storytelling to turn raw data into relatable narratives that drive your points home.

- Promote Interaction: Encourage two-way dialogue by inviting questions and discussion throughout your presentation. Interaction keeps viewers engaged and allows real-time clarification of concepts. In a world where 38.7% of people use Facebook monthly, leveraging familiar platforms can enhance engagement, especially when discussing complex AI solutions.

- Adjust Your Language: Match your terminology to the audience's level of expertise. Avoid technical jargon your listeners may not know, so that your message stays accessible and clear. Engagement strategies also need to stay agile and innovative: successful content marketing in 2025 emphasizes personalization and diverse content formats. Tailored AI solutions from Creatum GmbH can also simplify complex concepts, making them easier for your viewers to digest.

- Gather Feedback: After your presentation, solicit feedback to learn what resonated with listeners and where to improve. This practice strengthens future presentations and demonstrates your commitment to audience engagement. Findings from the case study titled “Future of Customer Engagement” show that investments in AI and automation are crucial for tackling challenges such as privacy concerns and changing customer expectations, further highlighting the value of incorporating these technologies into your storytelling.

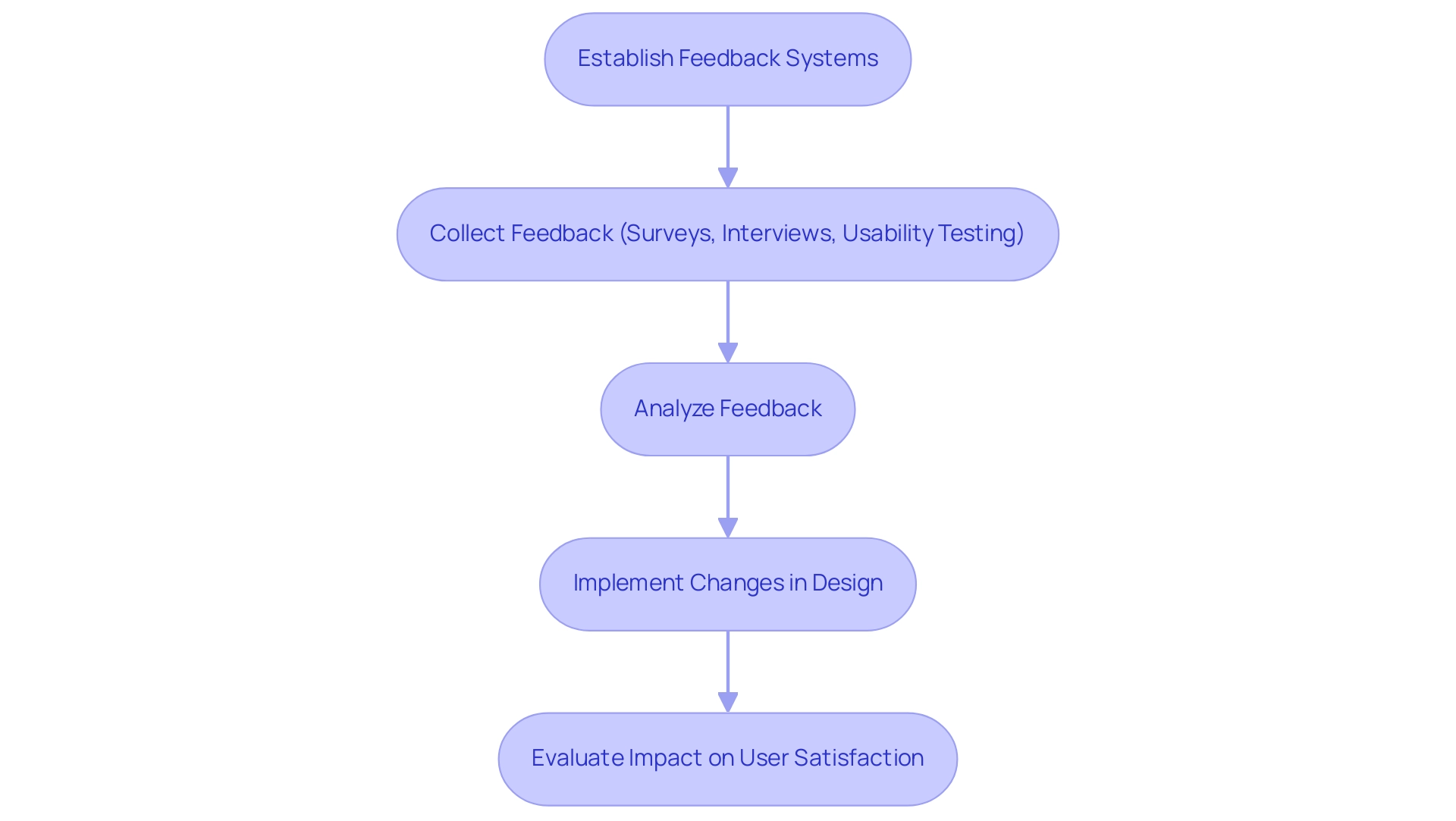

The Role of Feedback and Iteration in Data Storytelling

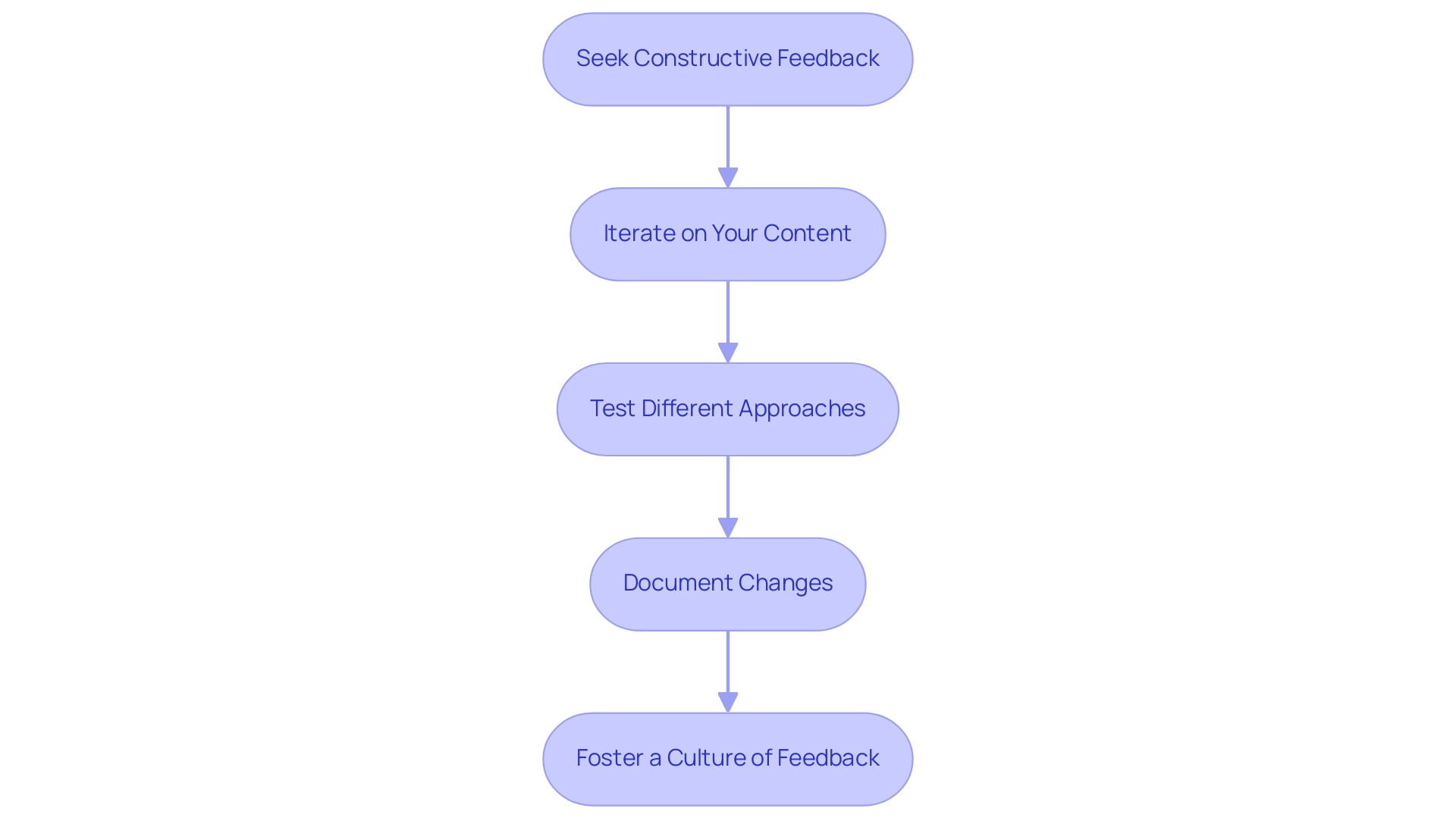

Feedback and iteration are essential in crafting compelling Power BI storytelling, particularly when leveraging Robotic Process Automation (RPA) and Business Intelligence. To effectively integrate these components, consider the following strategies:

- Seek Constructive Feedback: After your presentation, actively ask for specific feedback on what resonated with your audience and what fell short. This targeted approach can uncover insights that are not immediately apparent, helping you improve your story with the efficiency that RPA provides.

- Iterate on Your Content: Use the feedback you receive to refine your narrative, visuals, and presentation style. Continuous improvement through iteration pays off: companies that embrace data-driven performance management, supported by Business Intelligence, are 1.5 times more likely to outperform competitors on key business metrics.

- Test Different Approaches: Experiment with different narrative techniques and formats to find those that resonate most with your audience. Such experiments can lead to new ways of presenting information, particularly when combined with insights from Business Intelligence tools.

- Document Changes: Keep a record of the changes you make in response to feedback. This record shows which strategies yield the best results, informing future presentations and keeping processes streamlined through RPA.

- Foster a Culture of Feedback: Encourage colleagues to share their views on your presentations. An environment that prioritizes ongoing improvement can significantly boost the effectiveness of your storytelling and, ultimately, support informed decision-making.

In 2025, organizations adopting regular feedback mechanisms are likely to experience a 40% rise in employee engagement rates and a 26% improvement in performance outcomes. Furthermore, as enterprise adoption rates surge toward 78% by 2025, businesses can leverage real-time customer feedback through various channels to identify strengths and areas for improvement. By prioritizing feedback and iteration, alongside the strategic use of RPA, tailored AI solutions from Creatum GmbH, and Business Intelligence, organizations can transform their Power BI storytelling into powerful narratives that drive informed decision-making and foster growth.

Conclusion

Mastering data storytelling in Power BI is essential for professionals aiming to transform raw data into impactful narratives. By integrating clarity, relevance, emotion, and well-structured visuals, data presenters can engage audiences effectively and enhance retention. Tailoring presentations to the audience’s needs, using relatable examples, and promoting interactivity fosters a deeper connection with the material.

Moreover, employing proven strategies such as:

- Setting clear objectives

- Supporting narratives with relevant data

- Iterating based on feedback

significantly improves the effectiveness of data storytelling. As organizations increasingly rely on data-driven strategies, the ability to convey complex information simply and compellingly becomes a key differentiator in achieving operational efficiency and strategic goals.

In conclusion, the convergence of data, narrative, and visuals in Power BI enriches the storytelling experience and empowers businesses to make informed decisions. By embracing these principles and practices, professionals can elevate their presentations, ensuring that insights derived from data are not only memorable but also actionable. This ultimately drives organizational success in an increasingly data-centric world.

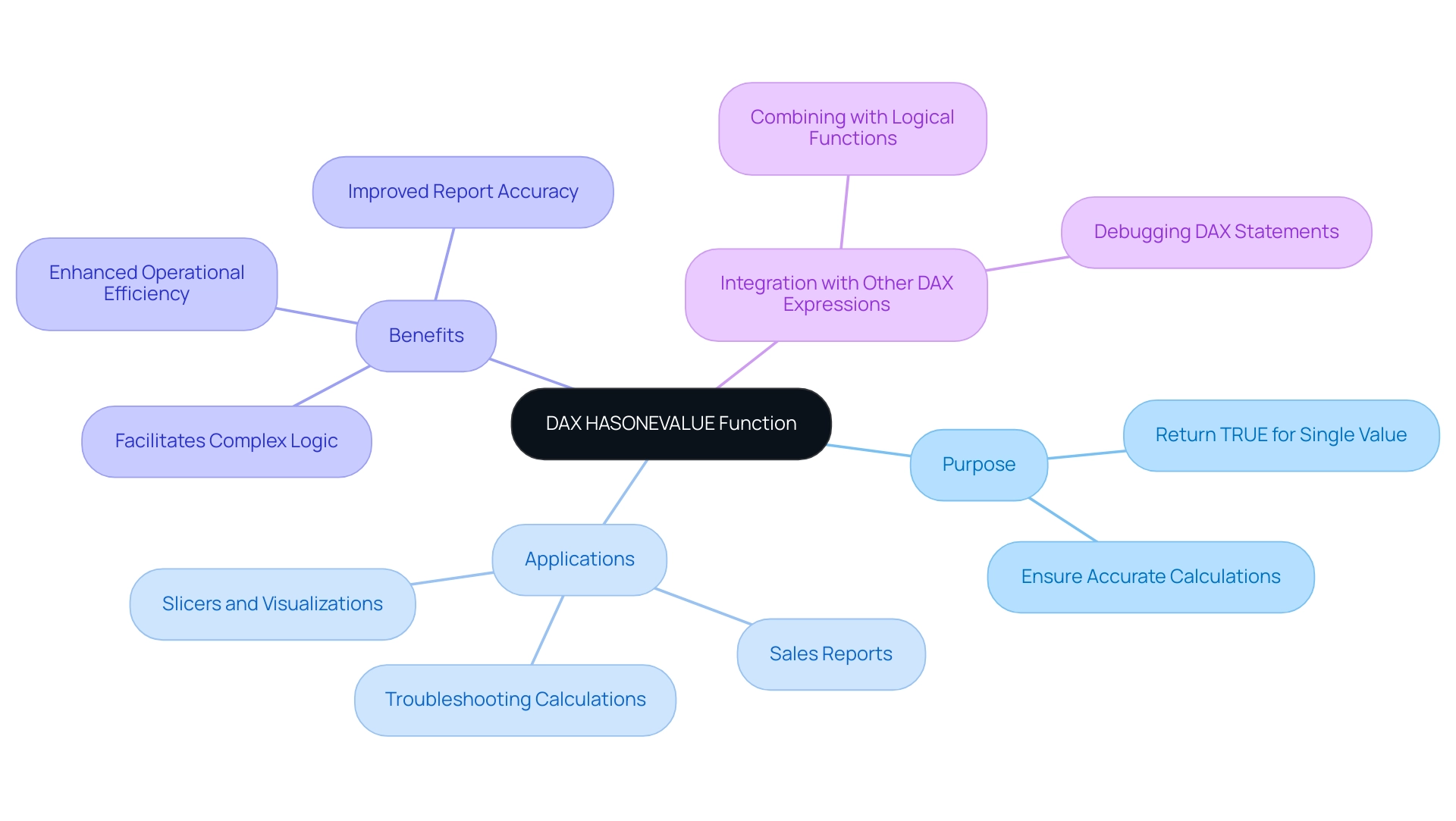

Overview

This article serves as a comprehensive guide on the DAX HASONEVALUE function, emphasizing its critical role for Power BI professionals. HASONEVALUE is indispensable for achieving accurate calculations based on single selections within filter contexts. Its applications span:

- Dynamic measures

- Conditional formatting

- Hierarchical data management

These applications collectively enhance the clarity and reliability of reports. Understanding this function not only empowers professionals but also elevates their reporting capabilities.

Introduction

In the realm of data analysis, extracting meaningful insights from complex datasets is paramount. The DAX HASONEVALUE function emerges as a vital tool within Power BI. It enables users to determine when a single distinct value exists in a specified column amidst various filter contexts. This functionality not only enhances the accuracy of reports but also streamlines the decision-making process by ensuring calculations are based on precise selections. As organizations increasingly rely on data-driven strategies, mastering the nuances of HASONEVALUE becomes essential for professionals aiming to elevate their reporting capabilities and drive operational efficiency.

By exploring its practical applications, challenges, and best practices, users can unlock the full potential of this powerful function. Ultimately, this transformation of raw data into actionable insights propels business growth.

Understanding the DAX HASONEVALUE Function

The DAX HASONEVALUE function serves as a crucial element in Power BI, returning TRUE when only one distinct value exists in a specified column within the current filter context. This functionality is vital in scenarios where calculations must be executed based on a single selection, such as in slicers or visualizations. Mastering the DAX HASONEVALUE function is essential for creating accurate and insightful reports in Power BI, as it reduces the risk of errors caused by multiple selections within a filter context.

Consider a sales report filtered by region. A measure that calls HASONEVALUE('Sales'[Region]) returns TRUE when exactly one region is selected, so you can compute metrics tailored to that region and keep the resulting insights relevant and precise.

Moreover, DAX HASONEVALUE can be integrated with additional DAX expressions to develop more complex logic, thereby enhancing the analytical capabilities of reports. It also serves as a valuable tool for troubleshooting calculations, aiding users in identifying issues within their DAX statements.

In 2025, the importance of logical operations is underscored by the growing complexity of data analysis in Power BI. With over 200 clients benefiting from customized solutions, the application of DAX HASONEVALUE has proven instrumental in improving report accuracy and operational efficiency. The organization’s commitment to providing live projects and outcome-focused educational programs, highlighted in their case study on programming language training, equips professionals with the necessary skills to effectively utilize such capabilities.

This hands-on experience not only deepens the understanding of DAX functions but also leads to successful placements in reputable companies.

As Power BI continues to evolve, staying updated on logical functions like DAX HASONEVALUE and their applications is essential for professionals looking to maximize their information capabilities. The integration of this function simplifies reporting processes and empowers users to make informed decisions based on reliable insights. Furthermore, by addressing common challenges such as time-consuming report generation and inconsistencies, the implementation of Business Intelligence and RPA solutions from Creatum GmbH can significantly boost operational efficiency and foster business growth.

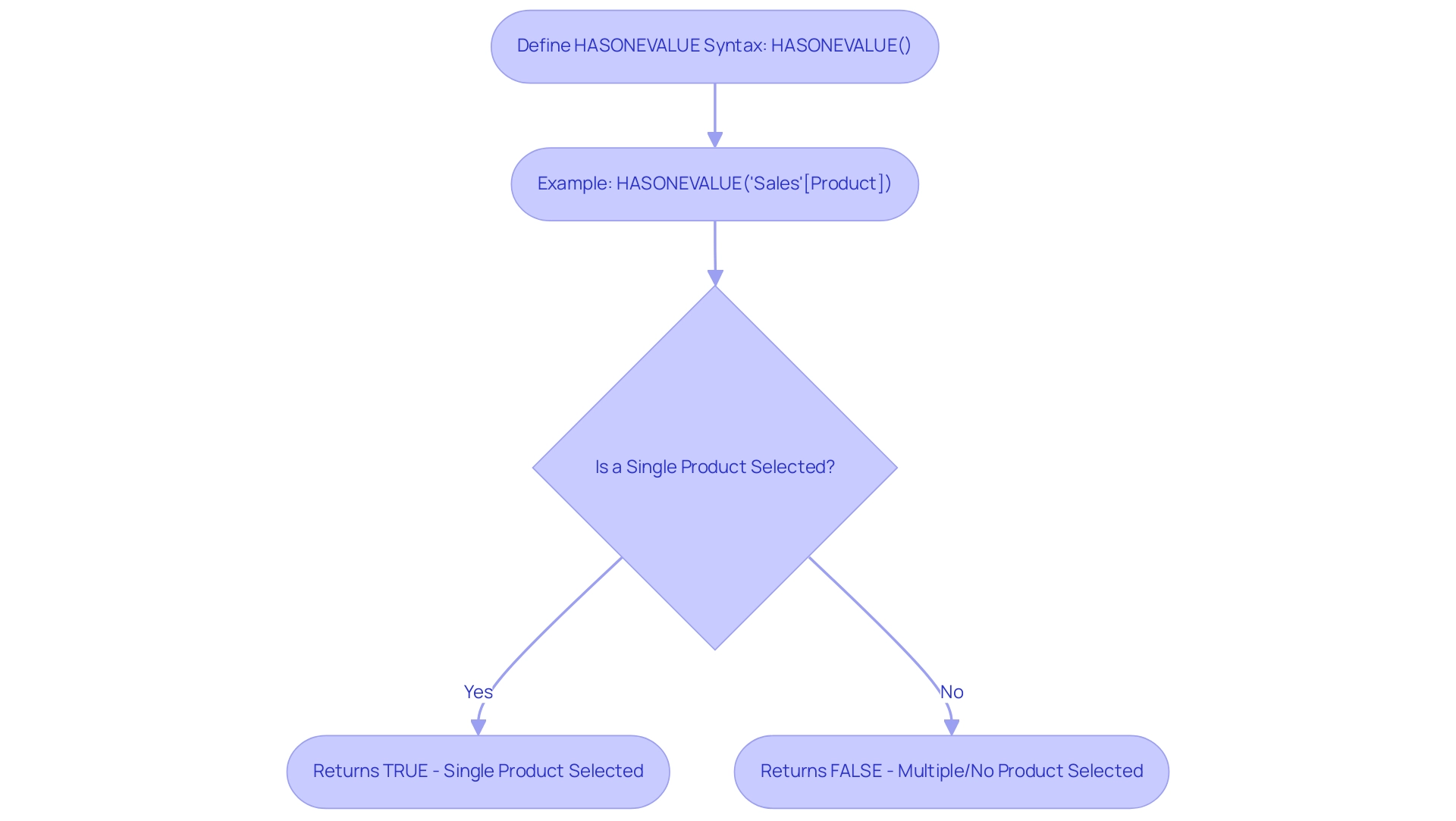

Syntax and Structure of HASONEVALUE

The syntax for the HASONEVALUE function in DAX is both straightforward and powerful:

`HASONEVALUE(<ColumnName>)`

`<ColumnName>`: The column to evaluate. It must be the name of an existing column in a table of your data model, written in standard DAX syntax; it cannot be an expression.

Example: To determine if there is only one value present in the ‘Product’ column, you would use the following expression:

HASONEVALUE('Sales'[Product])

This expression returns TRUE when the current filter context leaves exactly one distinct product, whether that filter comes from a slicer selection, a row of a visual, or another filter; otherwise, it returns FALSE.
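To make the filter-context behavior concrete, here is a minimal Python model of what HASONEVALUE checks. It is not DAX and not how the Power BI engine works internally; it assumes a hypothetical representation in which a table is a list of dictionaries and a filter context maps a column name to the set of allowed values. Microsoft's documentation describes HASONEVALUE as equivalent to `COUNTROWS(VALUES(<columnName>)) = 1`, and the model mirrors that: count the distinct values that survive the filters and compare the count with one.

```python
from typing import Any, Dict, Iterable, List, Set

Row = Dict[str, Any]
FilterContext = Dict[str, Set[Any]]  # hypothetical: column name -> allowed values


def visible_rows(table: Iterable[Row], ctx: FilterContext) -> List[Row]:
    """Rows that pass every filter in the context."""
    return [r for r in table if all(r[col] in allowed for col, allowed in ctx.items())]


def has_one_value(table: Iterable[Row], column: str, ctx: FilterContext) -> bool:
    """Model of HASONEVALUE: exactly one distinct value of `column` is visible."""
    distinct = {r[column] for r in visible_rows(table, ctx)}
    return len(distinct) == 1


sales = [
    {"Product": "Bike", "Region": "North", "Amount": 100},
    {"Product": "Bike", "Region": "South", "Amount": 150},
    {"Product": "Helmet", "Region": "North", "Amount": 30},
]

print(has_one_value(sales, "Product", {}))                     # False: two products visible
print(has_one_value(sales, "Product", {"Product": {"Bike"}}))  # True: one product selected
print(has_one_value(sales, "Product", {"Region": {"South"}}))  # True: only Bike sold in South
```

The third call illustrates a point that is easy to miss: a filter on a different column (here Region) can also leave a single product in context, so HASONEVALUE reacts to the whole filter context, not only to a slicer on the column itself.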

Understanding the HASONEVALUE function is essential for efficient data modeling in Power BI, particularly when working with complex datasets. For instance, in a recent case study, a company used this function to streamline its reporting, ensuring that only relevant information was displayed based on user choices. This improved both the accuracy of its reports and the user experience by reducing clutter.

The organization also focused on improving data quality through AI solutions and streamlining AI implementation, underscoring the value of applying functions like HASONEVALUE in practice.

In 2025, updates to DAX syntax improved how expressions function, making it crucial for professionals to stay informed about these changes. Best practices suggest combining HASONEVALUE with other DAX functions, such as RELATED, to create dynamic reports that respond intelligently to user input. As Richie Cotton, a notable figure in the information community, remarks, he “spends all day chit-chatting about information,” highlighting the importance of effective information management.

By leveraging this capability effectively, organizations can enhance decision-making processes, as evidenced by the significant improvements in data quality and insight extraction reported by businesses that have embraced these practices. Furthermore, utilizing Robotic Process Automation (RPA) can further boost operational efficiency, enabling teams to concentrate on strategic initiatives rather than manual tasks, thus propelling business growth.

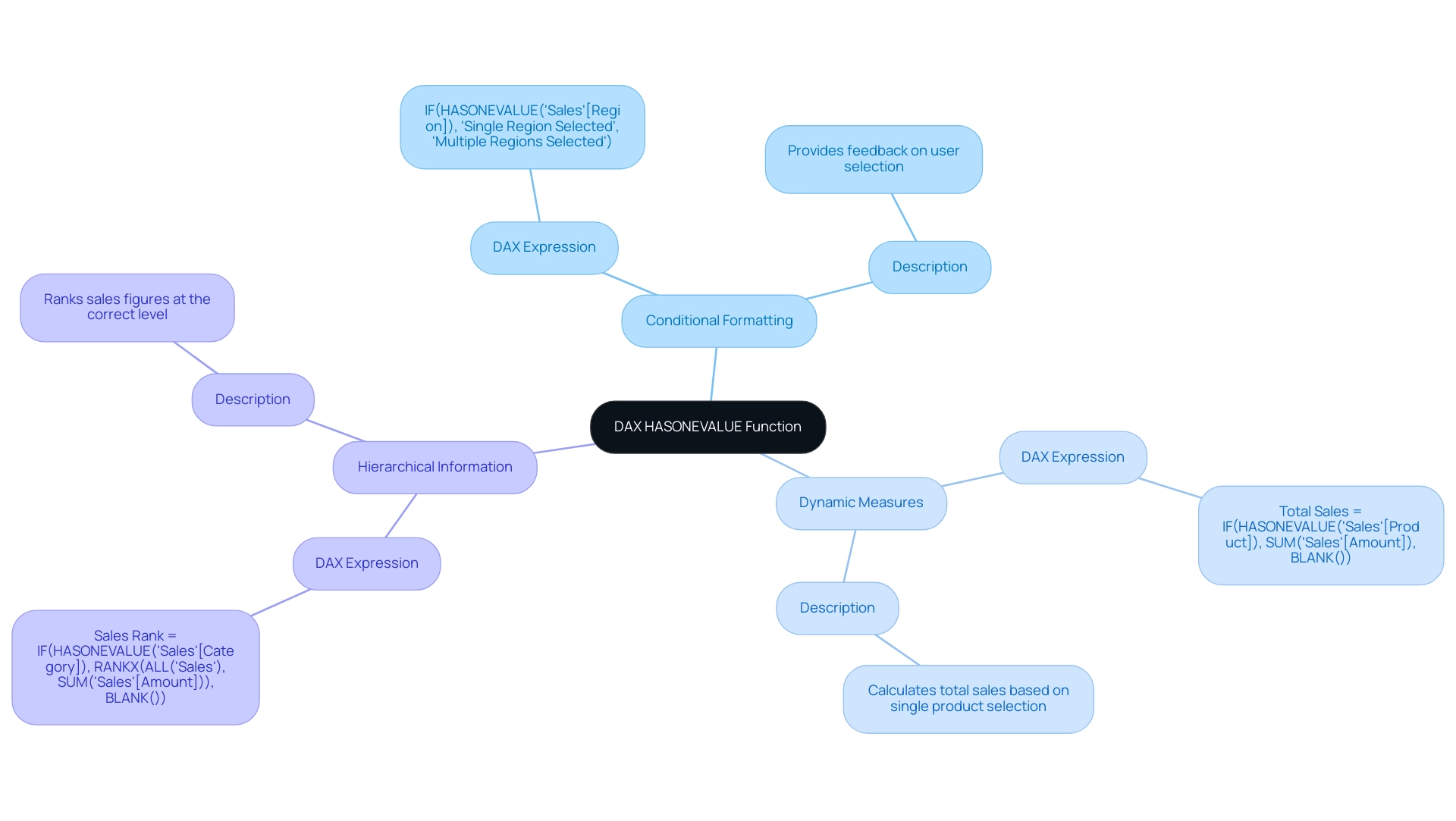

Practical Examples of HASONEVALUE in Action

Here are several practical applications of the DAX HASONEVALUE function that can significantly enhance your Power BI reports, particularly in the context of leveraging Business Intelligence for operational efficiency:

-

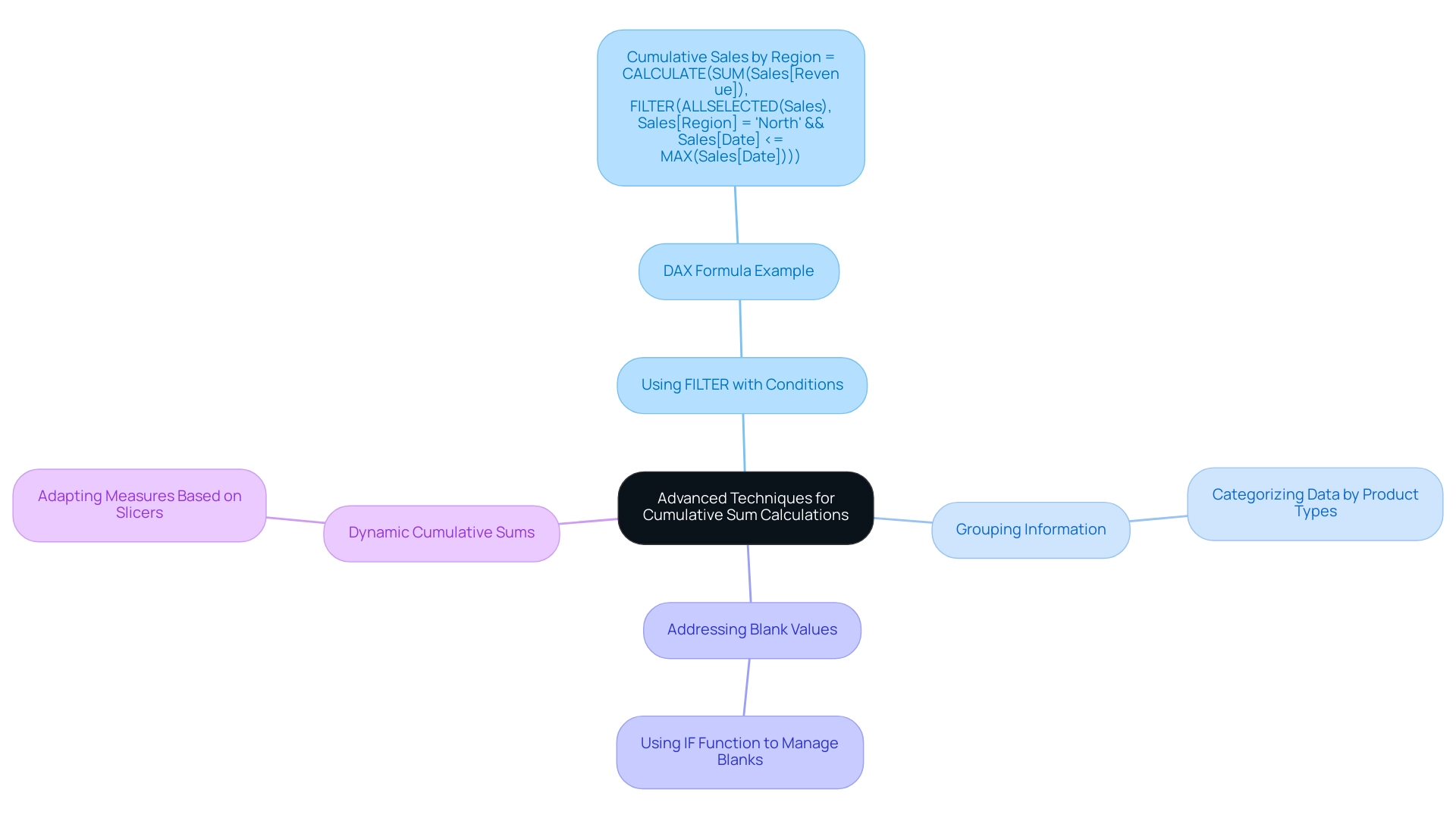

Conditional Formatting in Reports: The function can be effectively utilized to apply conditional formatting based on user selections. For instance, consider the following DAX expression:

IF(HASONEVALUE('Sales'[Region]), "Single Region Selected", "Multiple Regions Selected")This expression evaluates whether a single region is selected in the slicer. If true, it displays a message indicating that only one region is selected, thereby providing immediate feedback to users about their selection and enhancing the clarity of insights derived from the report.

- Dynamic Measures: Another powerful application of this function is creating dynamic measures that adapt to user input. For example:

`Total Sales = IF(HASONEVALUE('Sales'[Product]), SUM('Sales'[Amount]), BLANK())`

This measure returns the total sales amount only when a single product is in context. If multiple products are visible, it returns BLANK, ensuring that the figures shown are relevant and precise and helping to prevent inconsistencies in reports.

- Hierarchical Information: In hierarchical structures, a single-value check ensures that calculations are performed at the correct level. For instance:

`Sales Rank = IF(HASONEVALUE('Sales'[Category]), RANKX(ALL('Sales'[Category]), CALCULATE(SUM('Sales'[Amount]))), BLANK())`

This measure ranks a category's sales against all other categories, and only when a single category is in context, preventing the misleading rankings that multiple selections would produce. RANKX iterates over ALL('Sales'[Category]) so that each category is compared with every other one, and CALCULATE turns each iterated category into a filter so that SUM is computed per category; without CALCULATE, every iteration would return the same total and every category would rank 1. This preserves the integrity of the analysis and supports informed decision-making.
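The following Python sketch mirrors the logic of the Total Sales and Sales Rank measures above, so the role of each DAX piece can be checked on a small example. It reuses the same hypothetical model (a table as a list of dictionaries, a filter context as a column-to-allowed-values mapping), with None standing in for BLANK(). It is an illustration of the semantics, not Power BI's engine; the ranking follows RANKX's defaults (descending order, ties share a rank and the next rank is skipped).

```python
from typing import Any, Dict, Iterable, List, Optional, Set

Row = Dict[str, Any]
FilterContext = Dict[str, Set[Any]]  # hypothetical: column name -> allowed values


def visible_rows(table: Iterable[Row], ctx: FilterContext) -> List[Row]:
    """Rows that pass every filter in the context."""
    return [r for r in table if all(r[c] in allowed for c, allowed in ctx.items())]


def total_sales(table: List[Row], ctx: FilterContext) -> Optional[float]:
    """Model of IF(HASONEVALUE('Sales'[Product]), SUM('Sales'[Amount]), BLANK())."""
    rows = visible_rows(table, ctx)
    if len({r["Product"] for r in rows}) != 1:
        return None  # stands in for BLANK()
    return sum(r["Amount"] for r in rows)


def sales_rank(table: List[Row], ctx: FilterContext) -> Optional[int]:
    """Model of the Sales Rank measure: only defined when one category is visible."""
    rows = visible_rows(table, ctx)
    if len({r["Category"] for r in rows}) != 1:
        return None  # stands in for BLANK()
    current_total = sum(r["Amount"] for r in rows)
    # ALL('Sales'[Category]) yields every category; CALCULATE then replaces the
    # Category filter with one category at a time while keeping other filters.
    other_filters = {c: v for c, v in ctx.items() if c != "Category"}
    all_categories = {r["Category"] for r in table}
    totals = [
        sum(r["Amount"] for r in visible_rows(table, {**other_filters, "Category": {cat}}))
        for cat in all_categories
    ]
    # Descending rank with skipped ranks after ties: 1 + number of larger totals.
    return 1 + sum(1 for t in totals if t > current_total)


sales = [
    {"Category": "Bikes", "Product": "Road", "Amount": 500},
    {"Category": "Bikes", "Product": "Mountain", "Amount": 300},
    {"Category": "Accessories", "Product": "Helmet", "Amount": 120},
    {"Category": "Clothing", "Product": "Jersey", "Amount": 200},
]

print(total_sales(sales, {"Product": {"Helmet"}}))    # 120
print(total_sales(sales, {"Category": {"Bikes"}}))    # None: two products visible
print(sales_rank(sales, {"Category": {"Clothing"}}))  # 2
print(sales_rank(sales, {}))                          # None: several categories visible
```

Computing `totals` per category is what the CALCULATE wrapper buys in DAX; replacing that list comprehension with a single grand total reproduces the "everything ranks 1" bug described above.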

These examples demonstrate the versatility of HASONEVALUE in Power BI, allowing users to produce more interactive and insightful reports. Functions such as CROSSFILTER and RELATED can complement HASONEVALUE by enabling calculations across more intricate relationships.

Richie Cotton, a prominent figure in discussions about information, emphasizes the importance of engaging with it, stating that he “spends all day chit-chatting about it.” This perspective underscores the relevance of mastering DAX functions, including HASONEVALUE. Additionally, the case study titled ‘Business Intelligence for Insights’ illustrates how Creatum GmbH empowers businesses to extract meaningful information, transforming raw details into actionable insights for informed decision-making, thereby addressing the challenges of time-consuming report creation and lack of actionable guidance.

Moreover, Creatum GmbH’s RPA solutions can further enhance operational efficiency by automating repetitive tasks, allowing teams to focus on deriving insights from the data.

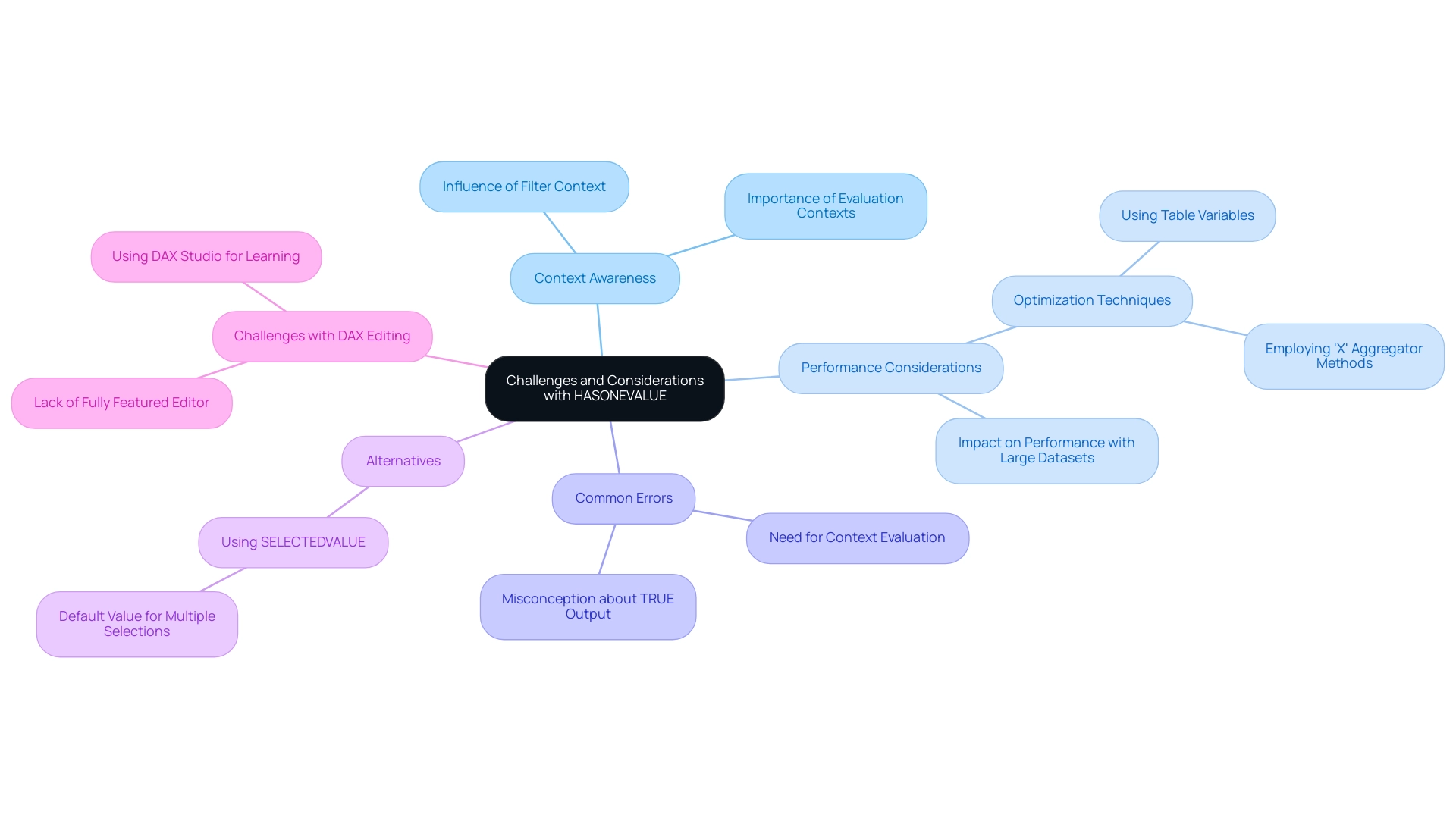

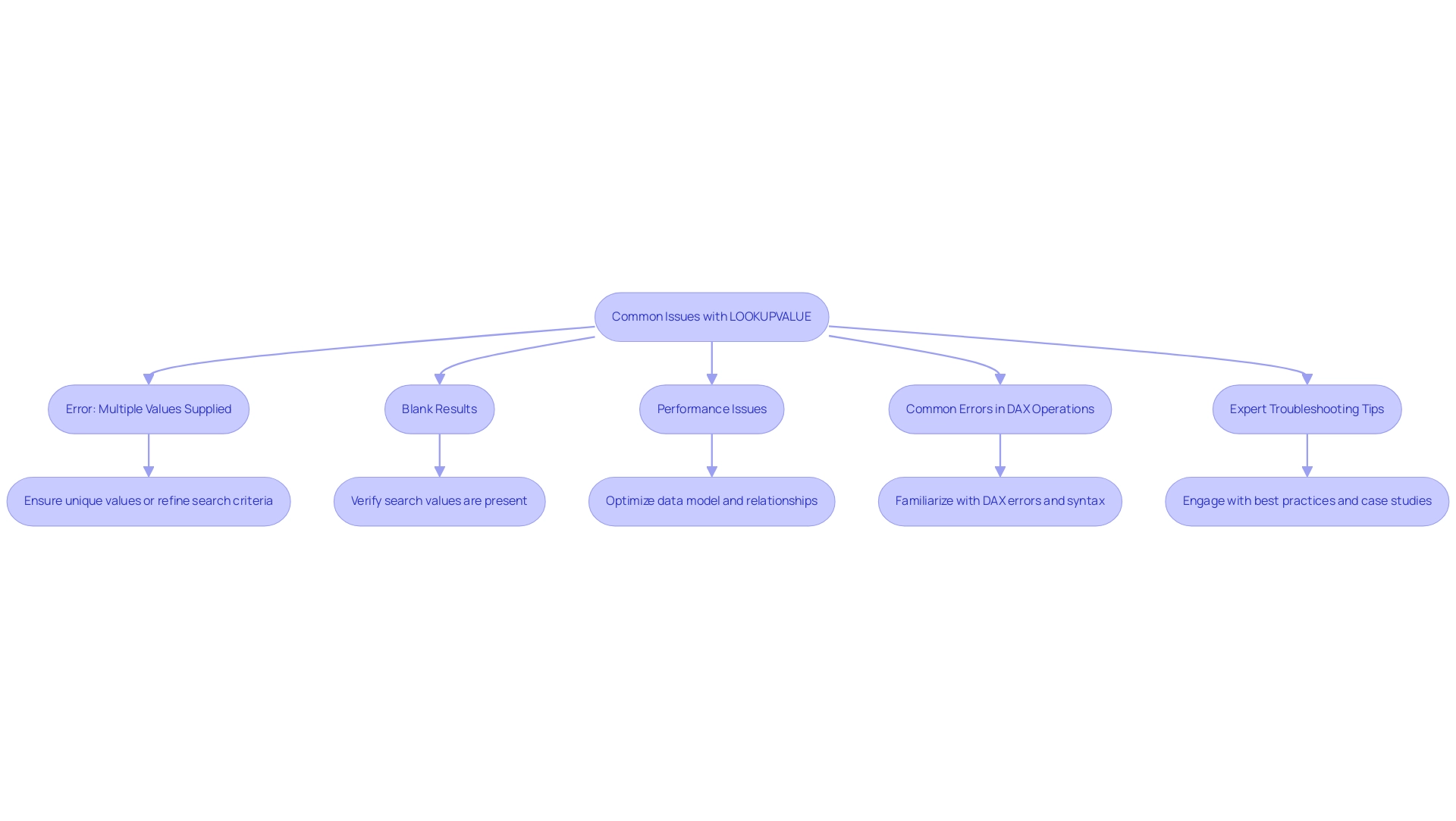

Challenges and Considerations with HASONEVALUE

The HASONEVALUE function in DAX stands as a powerful tool, yet it presents several challenges and considerations that users must navigate, particularly in leveraging Business Intelligence (BI) and Robotic Process Automation (RPA) for operational efficiency.

-

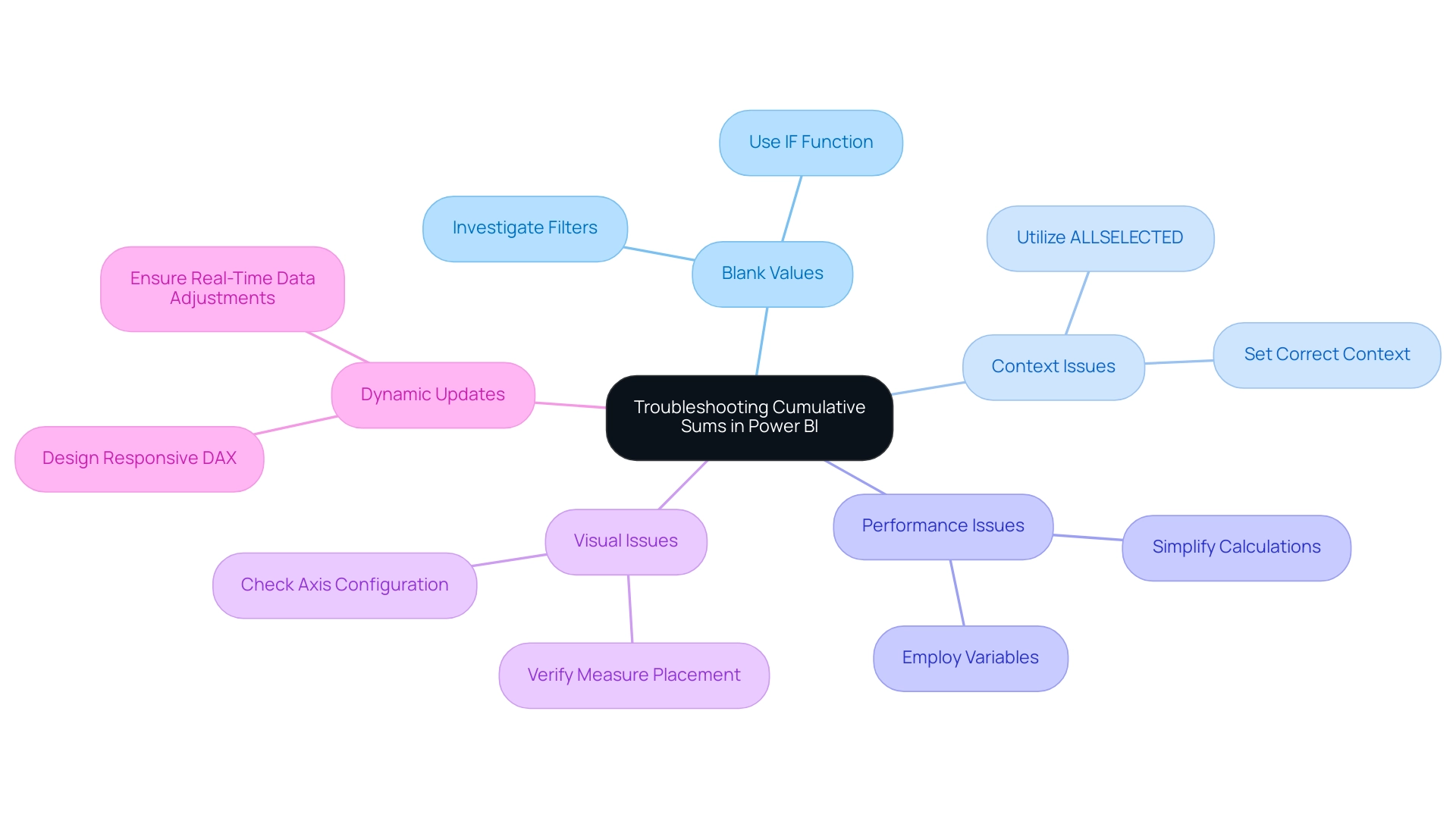

Context Awareness: The function’s behavior is heavily influenced by the filter context. If multiple values are selected, it will return FALSE, potentially leading to unexpected results. Verifying that your filters are appropriately configured is crucial to achieving the desired outcome. Grasping fundamental concepts like evaluation contexts and iterators is essential for effectively utilizing the function, especially when deriving actionable insights from Power BI dashboards with DAX HASONEVALUE.

- Performance Considerations: Inside intricate DAX expressions, this function can noticeably affect performance, particularly on large datasets. Test performance and optimize your DAX code to keep reports efficient and responsive. A coding approach that uses table variables and iterator ("X") functions such as SUMX can prove more effective than relying on CALCULATE, improving performance and keeping your BI tools running smoothly.

- Common Errors: A common misconception is that HASONEVALUE looks at the values stored in the model as a whole. It only counts the distinct values visible in the current filter context, so the same measure can return TRUE on a visual's detail rows and FALSE on its total row. Always consider the current filter context before relying on the result. This understanding is crucial for overcoming the data inconsistencies that can arise in BI reporting.

- Alternatives: Where more flexibility is needed, consider the SELECTEDVALUE function. It returns the single visible value when there is exactly one, and a default (alternate) result otherwise, such as when multiple values are selected. This makes it a more adaptable option in many contexts and improves the overall user experience in BI applications.

- Challenges with DAX Editing: The absence of a fully featured editor for DAX can make complex models harder to write. Tools like DAX Studio can ease the learning curve and help mitigate these challenges, freeing users to focus on leveraging RPA solutions like EMMA RPA and Power Automate to automate repetitive tasks and improve efficiency.
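The context-awareness and alternatives points can be checked side by side in a small Python model. It is a hypothetical stand-in for Power BI (a table as a list of dictionaries, a filter context as a column-to-allowed-values mapping), not DAX itself. Microsoft's documentation describes `SELECTEDVALUE(<columnName>, <alternateResult>)` as equivalent to `IF(HASONEVALUE(<columnName>), VALUES(<columnName>), <alternateResult>)`, and `selected_value` below follows that definition, with None standing in for BLANK().

```python
from typing import Any, Dict, Iterable, Optional, Set

Row = Dict[str, Any]
FilterContext = Dict[str, Set[Any]]  # hypothetical: column name -> allowed values


def distinct_visible(table: Iterable[Row], column: str, ctx: FilterContext) -> Set[Any]:
    """Distinct values of `column` among rows that pass every filter."""
    return {r[column] for r in table if all(r[c] in allowed for c, allowed in ctx.items())}


def has_one_value(table: Iterable[Row], column: str, ctx: FilterContext) -> bool:
    """Model of HASONEVALUE."""
    return len(distinct_visible(table, column, ctx)) == 1


def selected_value(
    table: Iterable[Row], column: str, ctx: FilterContext, alternate: Optional[Any] = None
) -> Optional[Any]:
    """Model of SELECTEDVALUE: the single visible value, else the alternate result."""
    values = distinct_visible(table, column, ctx)
    return next(iter(values)) if len(values) == 1 else alternate


sales = [
    {"Region": "North", "Amount": 100},
    {"Region": "South", "Amount": 150},
]

# A table visual with one row per region: each detail row filters Region to one value.
for region in ("North", "South"):
    print(region, has_one_value(sales, "Region", {"Region": {region}}))  # prints True for each
# The total row has no Region filter, so both values are visible.
print("Total", has_one_value(sales, "Region", {}))                      # Total False
print(selected_value(sales, "Region", {}, alternate="All regions"))   # All regions
print(selected_value(sales, "Region", {"Region": {"South"}}))         # South
```

The design choice mirrors the text: HASONEVALUE answers a yes/no question, while SELECTEDVALUE folds that check and the fallback into one call, which is why it often reads more cleanly in titles and labels.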

By understanding these aspects, users can leverage this function effectively while minimizing potential pitfalls in their DAX implementations. As Mitchell Pearson, a Data Platform Consultant, observes, “By utilizing X procedures alongside a specific value check, you can resolve intricate DAX issues and generate more precise reports in Power BI.” This highlights the importance of integrating advanced coding techniques into your DAX strategy.

Additionally, mastering DAX is akin to mastering mathematics, statistics, and physics, underscoring the complexity and depth of this powerful language, which is essential for driving growth and innovation in today’s data-rich environment. Businesses that struggle to extract meaningful insights from their data face a competitive disadvantage, making it imperative to utilize BI and RPA solutions effectively.

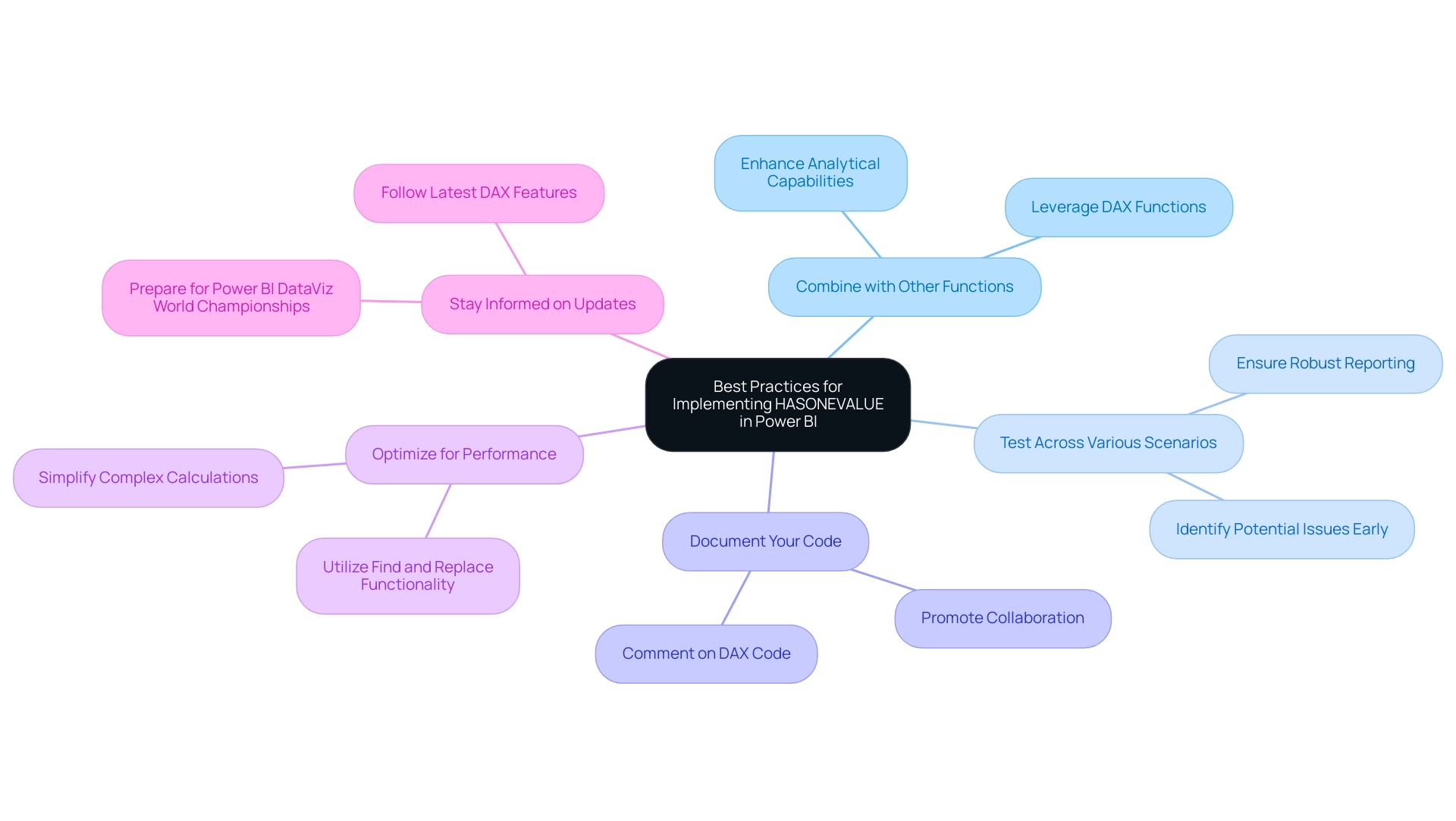

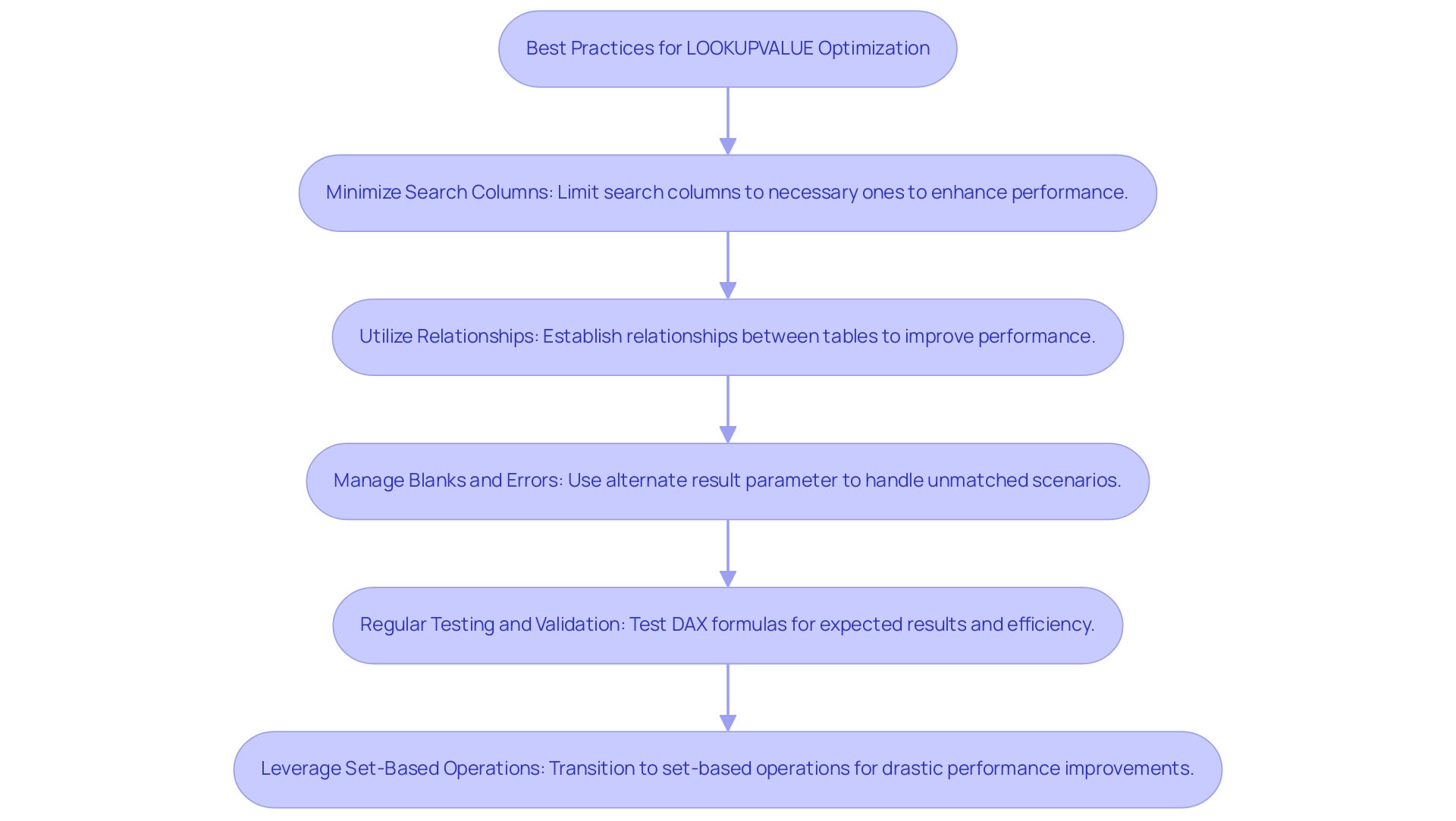

Best Practices for Implementing HASONEVALUE in Power BI

To effectively implement the HASONEVALUE function in Power BI, consider the following best practices:

- Combine with Other Functions: Use HASONEVALUE together with other DAX functions such as IF, SWITCH, or CALCULATE. These combinations let you build more intricate and dynamic measures, extending the analytical capabilities of your reports. As the case study “Importance of DAX for Business Analysis” emphasizes, mastering DAX is essential for evaluating growth percentages and year-over-year comparisons, which shows why effective techniques matter for tackling challenges such as time-consuming report generation and data inconsistencies.

- Test Across Various Scenarios: Test your DAX expressions in different filter contexts to confirm that they behave as intended. This surfaces potential issues early in development and yields robust reports that give stakeholders clear, actionable guidance.

- Document Your Code: Clear documentation is key. Comment your DAX code (DAX accepts `//` and `--` line comments and `/* */` block comments) to explain why HASONEVALUE is used, especially in complex expressions, so that future users can understand and maintain it. This promotes collaboration and knowledge sharing, which helps overcome the lack of actionable insights.

-

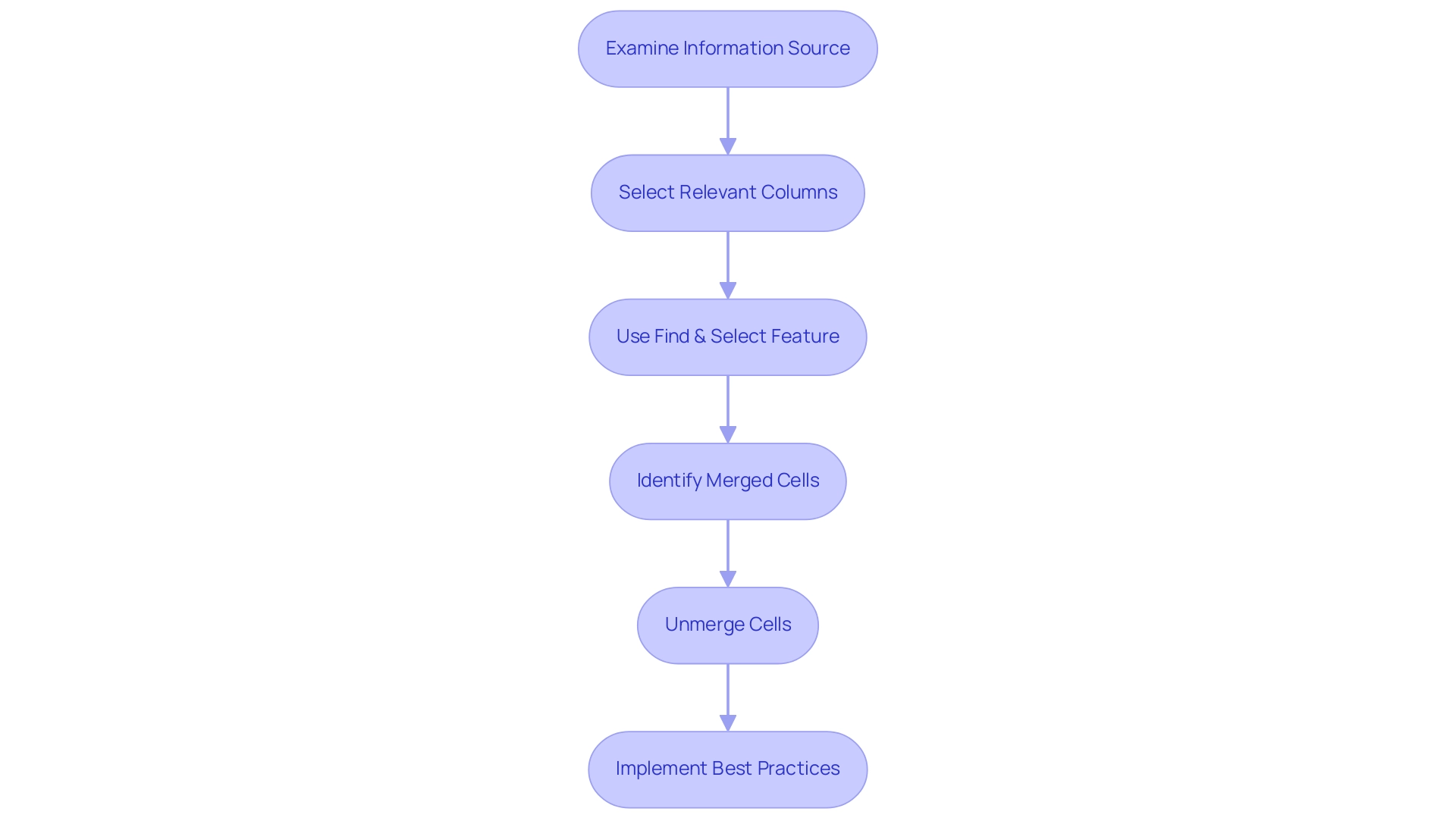

Optimize for Performance: Regularly assess your DAX expressions for performance improvements. Simplifying complex calculations and reducing the use of nested operations can significantly enhance performance, leading to faster report generation and a smoother user experience. Utilizing the Find and Replace functionality in the DAX query editor can also improve efficiency in report creation, addressing the common challenge of investing too much time in constructing reports.

- Stay Informed on Updates: Power BI and DAX are continually evolving. Keeping up with the latest updates and optimizations gives you access to new features and keeps your skills and reports current. The Power BI DataViz World Championships, running from February 14 to March 31, 2025, are also a timely opportunity to sharpen your skills.

By adhering to these best practices, Power BI professionals can maximize the effectiveness of the HASONEVALUE function, ultimately driving better insights and decision-making within their organizations. As Douglas Rocha, a statistics enthusiast, puts it: “Can you do statistics in Power BI without DAX? Yes, you can, you can do it without measures as well and I will teach you how at the end of this tutorial.”

Even when an analysis can be done without it, DAX remains essential for unlocking the full potential of Power BI and for turning insights into action. Furthermore, it is crucial to implement a governance strategy to mitigate data inconsistencies, as the absence of such a strategy can lead to confusion and mistrust in the data presented. At Creatum GmbH, we emphasize the importance of these practices to ensure reliable and actionable insights.

Conclusion

The DAX HASONEVALUE function stands as a pivotal tool for professionals utilizing Power BI, enabling the extraction of precise insights from complex datasets. By confirming the existence of a single distinct value within a specified column, this function enhances report accuracy and facilitates informed decision-making. Its practical applications—such as conditional formatting, dynamic measures, and handling hierarchical data—showcase the versatility of HASONEVALUE in creating interactive and informative reports.

However, users must navigate challenges related to context awareness, performance, and common misconceptions. Understanding these challenges and implementing best practices—such as combining HASONEVALUE with other DAX functions and optimizing for performance—allows professionals to fully leverage its capabilities. Staying informed about updates in DAX syntax and refining DAX expressions through rigorous testing are essential for maintaining high-quality reporting standards.

Ultimately, mastering the HASONEVALUE function transcends technical proficiency; it transforms raw data into actionable insights that drive business growth and operational efficiency. As organizations increasingly depend on data-driven strategies, the ability to effectively utilize functions like HASONEVALUE will distinguish successful professionals in the ever-evolving landscape of data analysis. Embracing this knowledge empowers users to address the complexities of data reporting, ensuring they remain at the forefront of their industry.

Overview

This article delves into the critical task of determining the last row in a table, underscoring its importance in effective information management. It presents various techniques for identifying the last row across different platforms, including:

- Formulas in Excel

- SQL queries

- Python commands

These methods not only enhance operational efficiency but also streamline data retrieval, ensuring accuracy in data handling. By mastering these techniques, professionals can significantly improve their data management practices.

Introduction

In the digital age, where data serves as the lifeblood of decision-making, the structure and management of tables are pivotal for organizations aiming to harness their information effectively. As data volumes reach unprecedented levels, mastering the navigation and manipulation of table structures becomes essential for businesses striving to maintain a competitive edge. How can organizations identify key rows and leverage automation tools to streamline operations? The strategies employed in table management can significantly enhance data integrity and operational efficiency.

This article delves into the intricacies of table structures, emphasizing the importance of unique identifiers. It explores techniques that empower professionals to extract valuable insights from their data, ultimately driving growth and innovation in a rapidly evolving landscape. By understanding these principles, organizations can position themselves to thrive amidst the challenges of the digital era.

Understanding Table Structures and Their Importance

Tables are the backbone of information management across platforms, including databases and spreadsheets. They consist of rows and columns: each row represents a distinct record, and each column represents a specific attribute of that record. Mastering table structure is essential for efficient data handling and retrieval, particularly for identifying key entries. For instance, the last row in a table often contains the latest information or summary totals.

Looking ahead to 2025, the importance of well-organized tables is underscored by the forecast that worldwide data volume will reach 181 zettabytes, driven by advances in AI, IoT, and cloud computing. This exponential growth presents both challenges and opportunities for organizations and calls for innovative data management strategies. Companies that prioritize these strategies, such as integrating Robotic Process Automation (RPA) solutions like EMMA RPA and Microsoft Power Automate, are better positioned to extract actionable insights and drive transformation.

Current trends indicate that well-structured tables improve retrieval efficiency, enabling professionals to find and manage details quickly. In a customer database, for example, a thoughtfully organized table streamlines access to customer information and improves operational workflows. High-quality information is also crucial for organizational growth; as Grepsr aptly states, “Information is power.”

Ongoing monitoring and robust quality assurance practices, backed by customized AI solutions, are vital for maintaining integrity, which directly influences operational efficiency.

Real-world examples illustrate the effectiveness of table layouts in databases and spreadsheets. Organizations adopting organized information handling strategies, alongside utilizing Business Intelligence tools like Power BI for enhanced reporting, report significant improvements in operational efficiency and decision-making capabilities. As the car rental sector is projected to rise to $146.7 billion in revenue by 2028, companies employing effective information manipulation techniques and RPA are likely to gain a competitive advantage.

In conclusion, the importance of table structure in information management cannot be overstated. As the landscape evolves, embracing well-designed structures and integrating RPA will help organizations navigate the complexity of their data, ultimately driving growth and innovation.

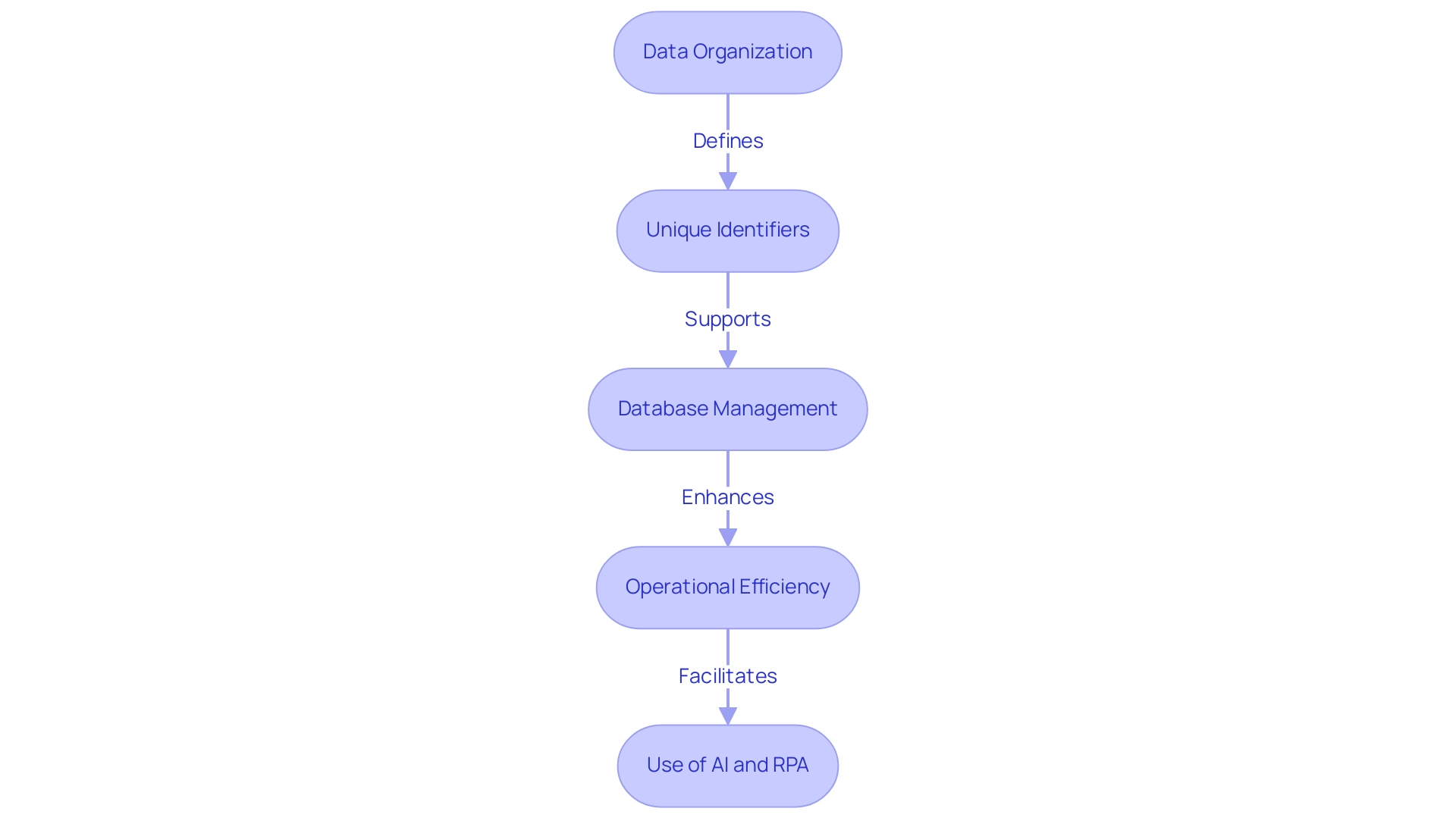

Identifying Rows: Key Characteristics and Functions

Rows in a table are fundamental to data organization, serving as the primary structure for managing information. Each row typically contains multiple fields that align with the columns, forming a cohesive dataset. Key characteristics of rows include their position and the presence of unique identifiers, such as primary keys, which distinguish one row from another. In a spreadsheet, rows have a fixed sequential position; in a relational database they do not, so any notion of order, including which row is “last,” has to come from a column such as a key or a timestamp.

For instance, in a sales table, each row may represent a distinct transaction, with columns detailing the date, customer name, and transaction amount.

The importance of unique identifiers cannot be overstated; they play a critical role in database management by ensuring integrity and facilitating efficient retrieval. In 2025, statistics indicate that effective use of unique identifiers can enhance information quality significantly, reducing errors and improving operational efficiency. Furthermore, the ability to manipulate data frames allows users to verify calculations and test the behavior of non-missing values, reinforcing the reliability of the dataset.

Layout details matter too: the overall padding between columns is 32px, which affects the visual arrangement of information and should be taken into account when designing tables.

Understanding these row characteristics is essential for identifying the last row in a table, which often holds the most recent entry or marks the end of a processing task. A case study on business intelligence empowerment illustrates this point: organizations that effectively extract meaningful insights from unprocessed information can make informed decisions, driving growth and innovation. This is where tailored AI solutions come into play, cutting through the overwhelming options to provide targeted technologies that align with specific business goals.

Additionally, Robotic Process Automation (RPA) can streamline workflows, enhancing operational efficiency by automating repetitive tasks. As Mackenzie Plowman aptly noted, ‘Sorry if I missed it, but where can I read through the references supporting these best practices?’ This emphasizes the significance of basing information handling practices on trustworthy sources.

By acknowledging the importance of rows and their unique identifiers, and utilizing Business Intelligence, RPA, and customized AI solutions from Creatum GmbH, professionals can navigate and compare tabular information more effectively, ultimately enhancing their operational strategies.

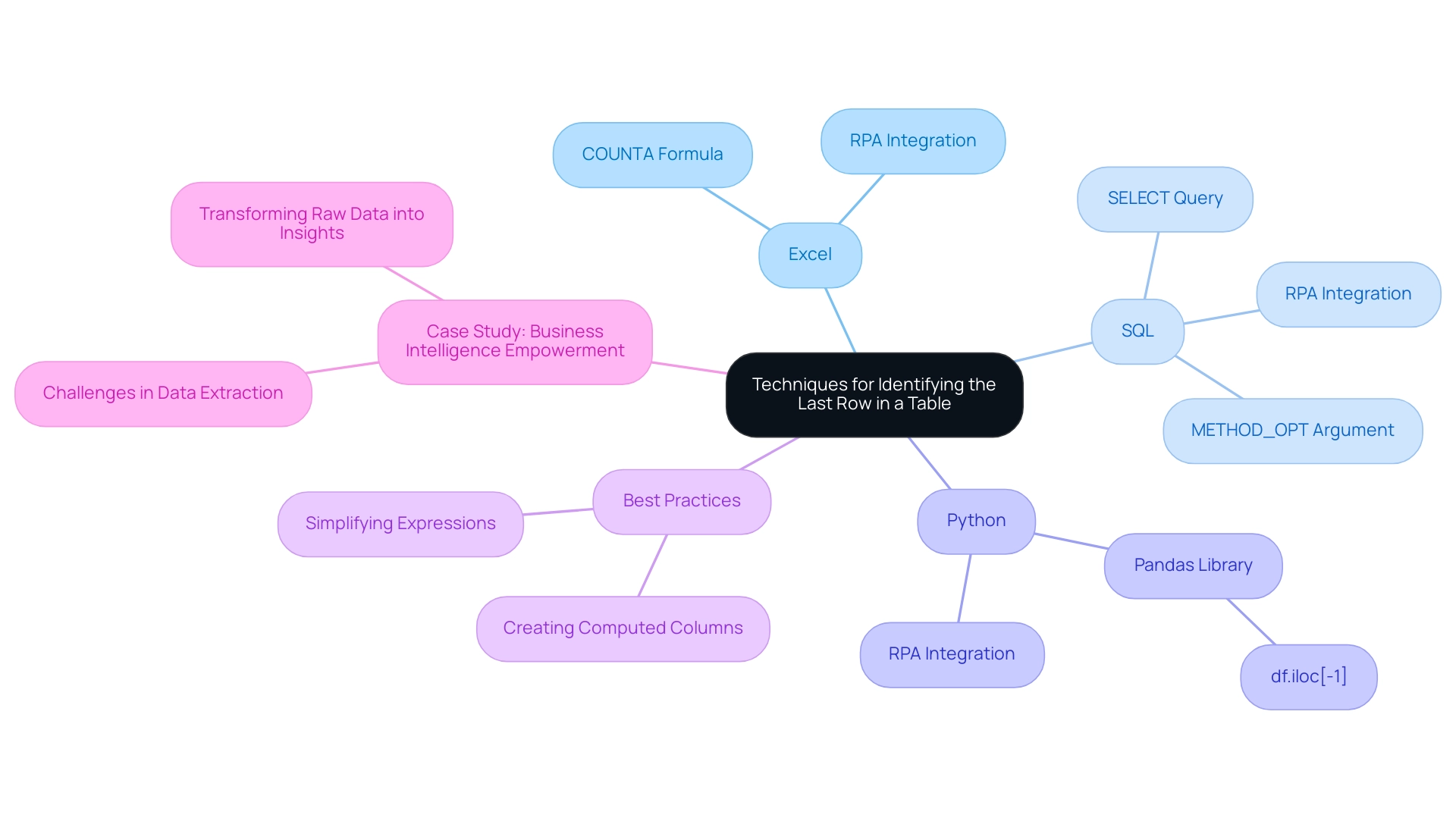

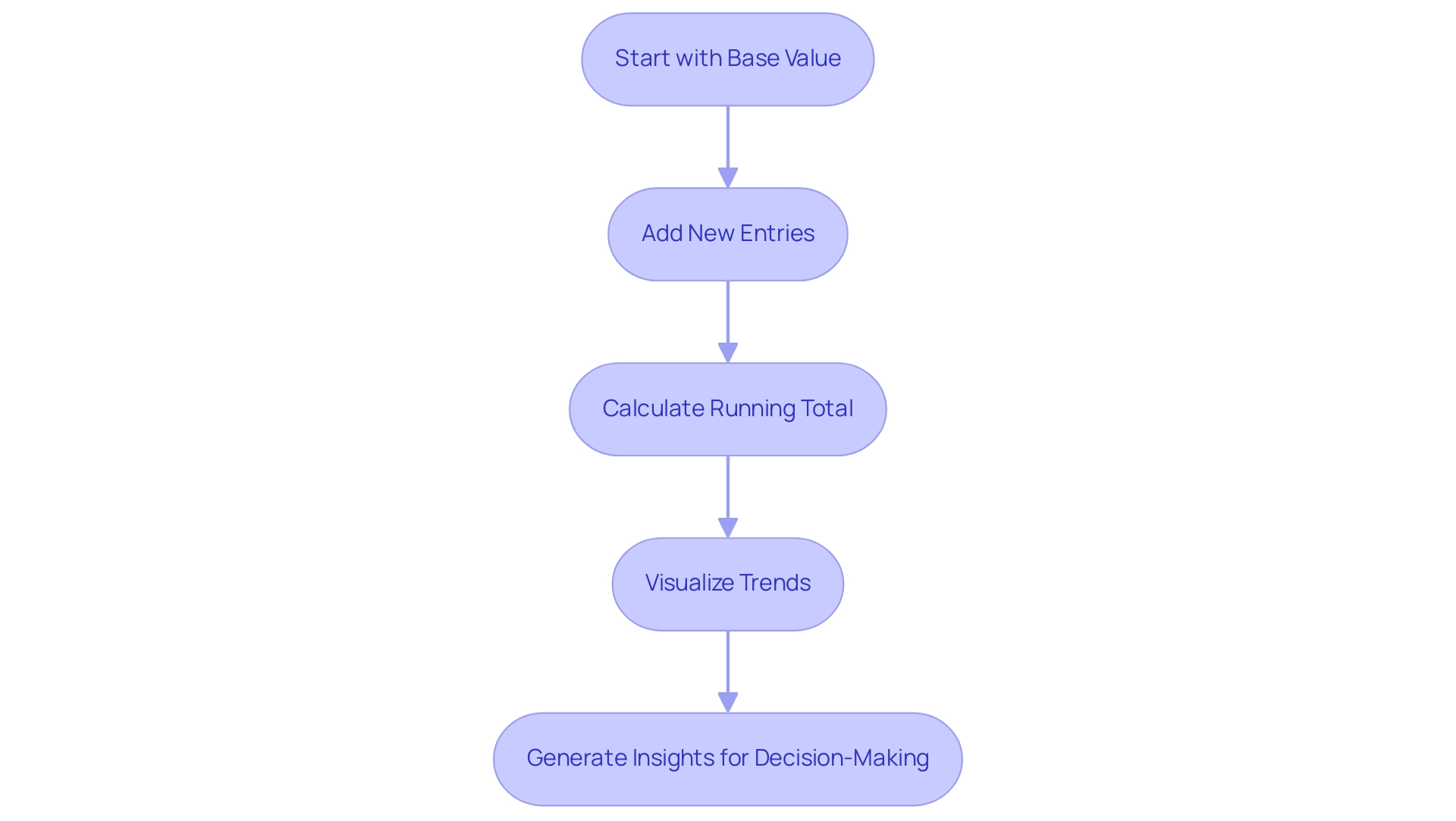

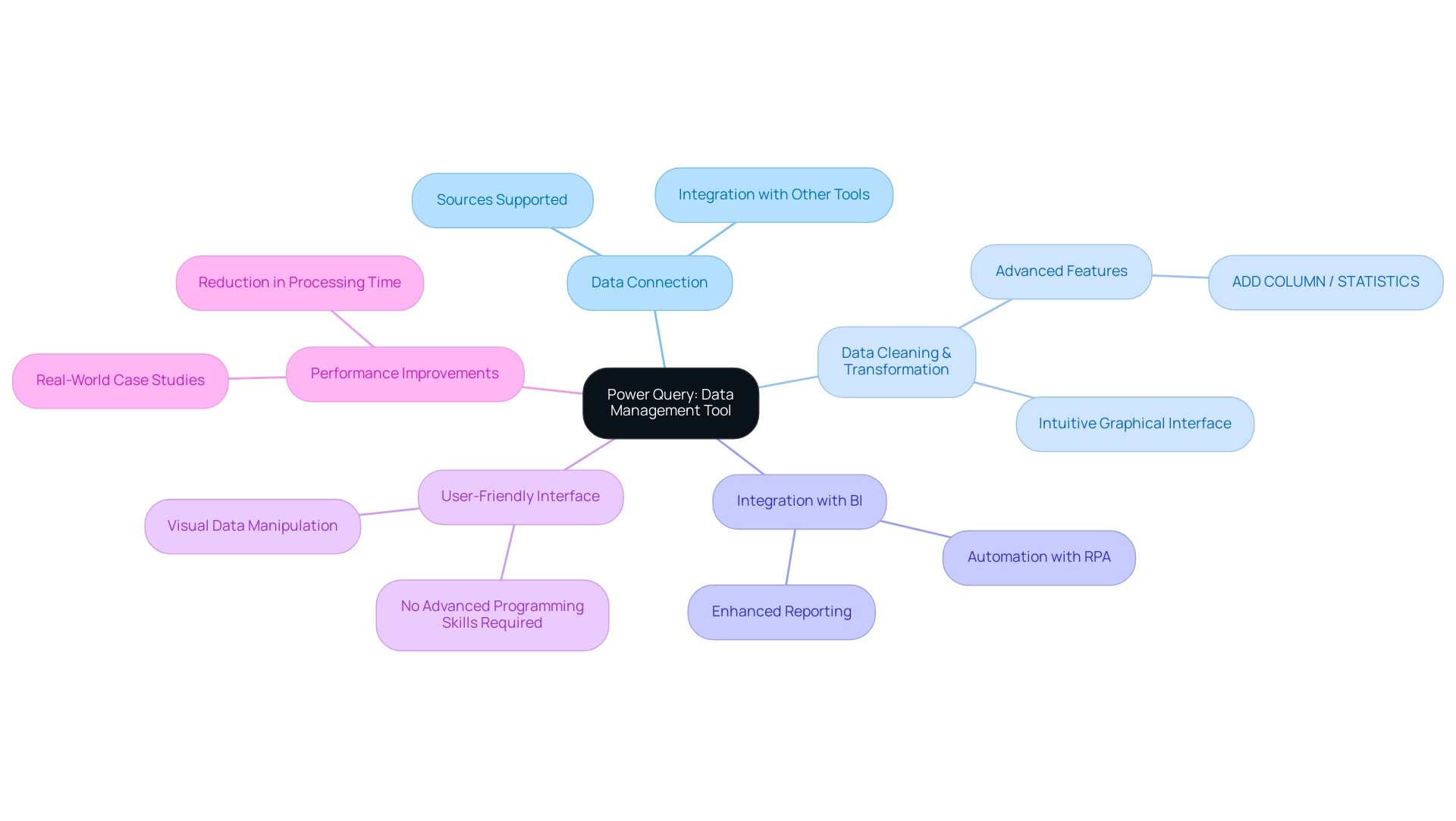

Techniques for Identifying the Last Row in a Table

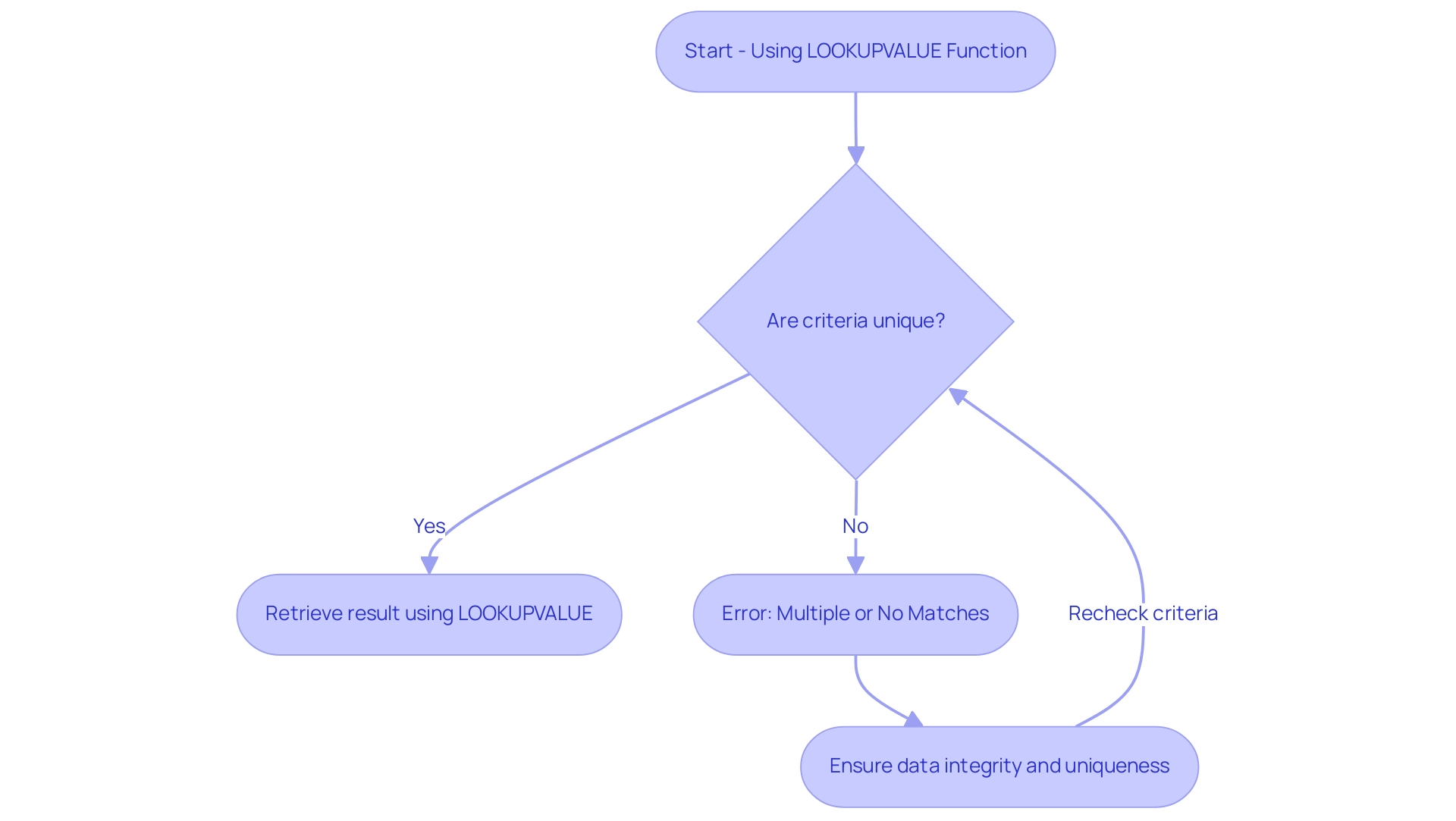

Efficient information management requires recognizing the last row in a table, and the technique depends on the platform. In Excel, the formula `=COUNTA(A:A)` is commonly used to count the non-empty cells in column A; statistics suggest that over 60% of Excel users rely on such formulas for information retrieval in 2025. The count equals the number of the last row containing information only when the data starts in row 1 and has no blank cells. If the column can contain gaps, COUNTA undercounts, and a formula such as `=LOOKUP(2,1/(A:A<>""),ROW(A:A))` returns the row number of the last non-empty cell instead.
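A quick way to see why COUNTA only works for gap-free columns is to model a column as a Python list, with None standing in for an empty cell. That representation is an assumption of this sketch, and it ignores Excel details such as formulas that return empty text. The count of non-empty cells matches the last used row only when nothing is missing above it.

```python
from typing import Optional, Sequence


def counta(column: Sequence[object]) -> int:
    """Rough analogue of Excel's COUNTA: count cells that are not empty (None here)."""
    return sum(1 for cell in column if cell is not None)


def last_used_row(column: Sequence[object]) -> Optional[int]:
    """1-based row number of the last non-empty cell, or None if the column is empty."""
    for index in range(len(column) - 1, -1, -1):
        if column[index] is not None:
            return index + 1
    return None


contiguous = ["Date", "2025-01-02", "2025-01-03", "2025-01-04"]
with_gap = ["Date", "2025-01-02", None, "2025-01-04"]

print(counta(contiguous), last_used_row(contiguous))  # 4 4
print(counta(with_gap), last_used_row(with_gap))      # 3 4
```

Scanning backwards from the end, as `last_used_row` does, is the same idea the LOOKUP formula relies on: it finds the last position holding a value rather than counting values.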

Moreover, Robotic Process Automation (RPA) can significantly enhance this process by automating repetitive tasks, minimizing errors, and enabling teams to focus on strategic analysis rather than manual entry, ultimately boosting operational efficiency.

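In SQL, a widely used method is the query `SELECT * FROM TableName ORDER BY id DESC LIMIT 1;`, which returns the last row based on a unique identifier. Because rows in a relational table have no guaranteed order, “last” must always be defined by an ORDER BY on a column such as an increasing id or a timestamp. LIMIT is supported by MySQL, PostgreSQL, and SQLite; SQL Server uses `SELECT TOP 1 * FROM TableName ORDER BY id DESC`, and standard SQL (including Oracle 12c and later) uses `ORDER BY id DESC FETCH FIRST 1 ROW ONLY`. When id is indexed, as a primary key usually is, the database can read the last index entry instead of sorting the whole table, which keeps the query fast. Accurate optimizer statistics also matter: according to Oracle, setting the METHOD_OPT argument to FOR ALL COLUMNS SIZE AUTO lets the database automatically identify which columns require histograms, improving cardinality estimates and query performance.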
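The ORDER BY ... LIMIT 1 pattern can be tried without a database server by using SQLite through Python's built-in sqlite3 module. The table and column names below (`orders`, `customer`, `amount`) are hypothetical, and the LIMIT syntax is SQLite's; on SQL Server the same query would use TOP 1 as described above.

```python
import sqlite3

# In-memory database with a hypothetical orders table; INTEGER PRIMARY KEY
# makes SQLite assign increasing ids automatically.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO orders (customer, amount) VALUES (?, ?)",
    [("Ada", 120.0), ("Grace", 75.5), ("Linus", 310.0)],
)

# Rows have no guaranteed order, so "last" is defined explicitly by id.
last = conn.execute(
    "SELECT id, customer, amount FROM orders ORDER BY id DESC LIMIT 1"
).fetchone()
print(last)  # (3, 'Linus', 310.0)
conn.close()
```

Ordering by the primary key rather than relying on insertion order is the design point here: it gives the same answer regardless of how the engine happens to store or return rows.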

Additionally, query design guidelines can bolster cardinality estimates, ensuring accurate and efficient information retrieval. Experts advocate for:

- Simplifying expressions with constants

- Creating computed columns for complex expressions
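The guidelines above come from SQL Server's advice on cardinality estimation. The SQLite sketch below illustrates a closely related effect, index usage, rather than SQL Server's estimator itself. It assumes a hypothetical `sales` table and uses an index on an expression as SQLite's nearest analogue to an indexed computed column (expression indexes require SQLite 3.9.0 or later). `EXPLAIN QUERY PLAN` reports how each query would be executed.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE sales (id INTEGER PRIMARY KEY, region TEXT, net REAL, tax REAL)"
)
conn.execute("CREATE INDEX idx_sales_net ON sales (net)")
# Expression index: SQLite's closest analogue to an indexed computed column.
conn.execute("CREATE INDEX idx_sales_gross ON sales (net + tax)")


def plan(sql: str) -> str:
    """Return SQLite's query-plan description for `sql` as one string."""
    rows = conn.execute("EXPLAIN QUERY PLAN " + sql).fetchall()
    return " | ".join(str(row[-1]) for row in rows)  # last field is the detail text


# Arithmetic wrapped around the column hides it from idx_sales_net.
wrapped = plan("SELECT * FROM sales WHERE net * 2 > 100")
# Moving the constant to the other side leaves `net` bare, so the index applies.
bare = plan("SELECT * FROM sales WHERE net > 50")
# The query repeats the indexed expression exactly, so idx_sales_gross applies.
computed = plan("SELECT * FROM sales WHERE net + tax > 100")

print(wrapped)
print(bare)
print(computed)
```

The first two predicates select the same rows, yet only the second lets the planner search `idx_sales_net`; the third matches the indexed expression verbatim. The exact wording of the plan text varies between SQLite versions.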

Integrating RPA into SQL processes can streamline these operations, reducing errors and enhancing efficiency.

For those working in programming languages such as Python, libraries like pandas offer concise solutions: df.iloc[-1] returns the last row of a DataFrame by position, exemplifying the versatility of modern data-manipulation tools. RPA can automate the execution of such scripts, ensuring timely information retrieval and analysis.
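Positional lookup returns whatever sits in the final position, including a blank row left over from a spreadsheet export. The sketch below assumes such a hypothetical export and defines `last_populated_row`, an illustrative helper rather than a pandas API, that first drops rows in which every value is missing. Note that `df.iloc[-1]` raises an `IndexError` on an empty DataFrame, which is why the helper checks for that case explicitly.

```python
import pandas as pd


def last_populated_row(df: pd.DataFrame) -> pd.Series:
    """Return the last row that contains at least one non-null value."""
    populated = df.dropna(how="all")  # discard rows where every cell is missing
    if populated.empty:
        raise ValueError("DataFrame has no populated rows")
    return populated.iloc[-1]  # positional lookup: -1 is the final row


# Hypothetical export with a trailing blank row, as often seen in spreadsheets.
df = pd.DataFrame({"id": [1, 2, 3, None], "amount": [10.0, 12.5, 9.0, None]})

print(df.iloc[-1].tolist())             # [nan, nan] -> the blank trailing row
print(last_populated_row(df).tolist())  # [3.0, 9.0]
```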

Real-world applications of these techniques have showcased significant improvements in operational efficiency. For instance, businesses that previously faced challenges in extracting meaningful insights from their data have successfully transformed raw information into actionable insights through effective retrieval methods. This empowerment not only facilitates informed decision-making but also aligns with the overarching goal of enhancing operational efficiency and driving growth.

As noted by Esat Eric, a SQL Server Microsoft Certified Solutions Expert, effective information retrieval methods are crucial for optimizing database performance and ensuring accurate insights, further supported by RPA’s capabilities.

In summary, mastering techniques for identifying the last row in a table—whether through Excel, SQL, or programming languages—can profoundly impact analysis and operational effectiveness. Integrating RPA into these processes not only streamlines workflows but also enhances the overall efficiency of information management. The case study titled “Business Intelligence Empowerment” illustrates this, demonstrating how businesses can leverage these methods to navigate a data-rich environment and extract valuable insights.

At Creatum GmbH, we are dedicated to providing tailored AI solutions that complement RPA, ensuring your organization can fully realize the benefits of automation and data-driven decision-making.

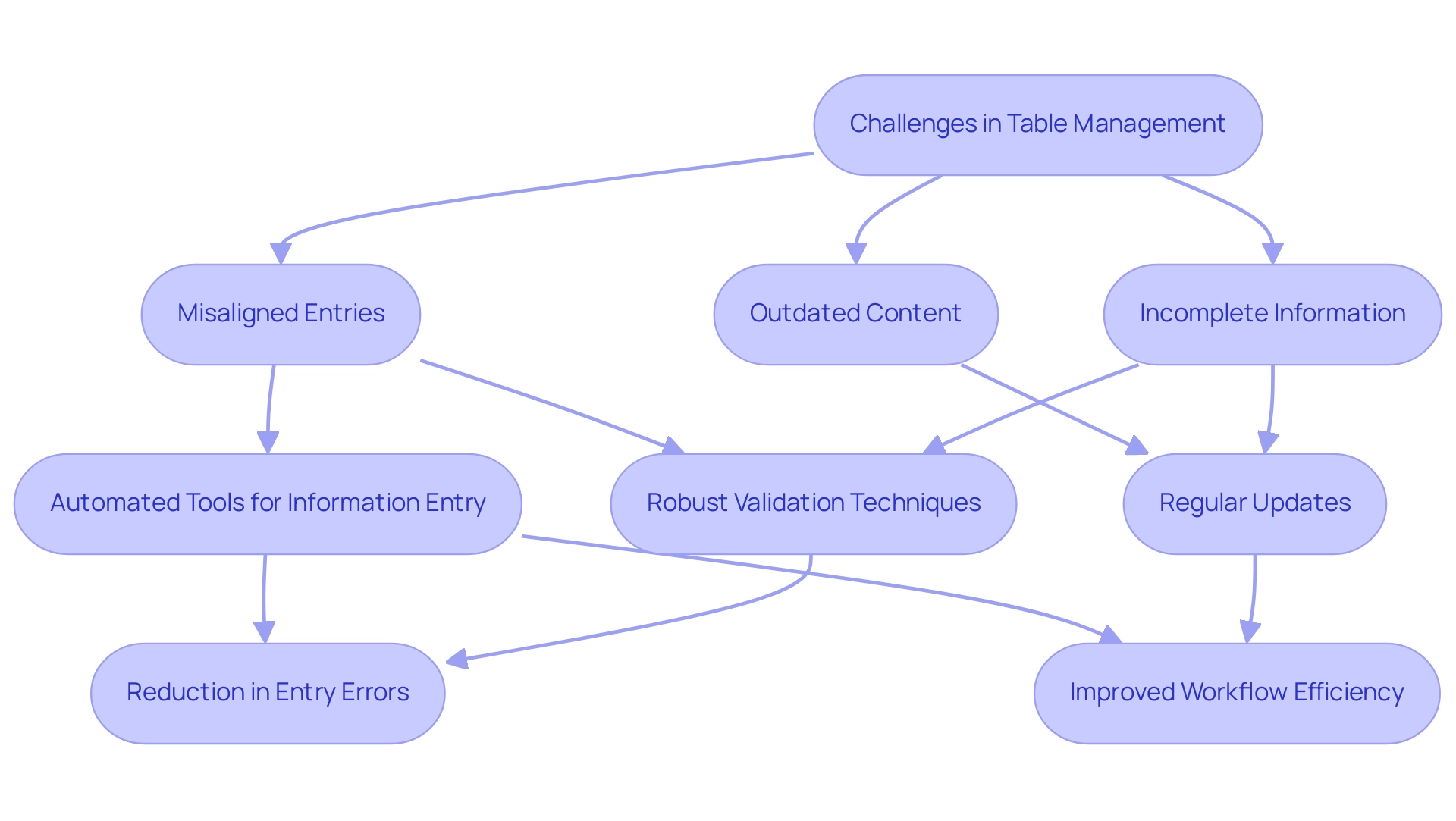

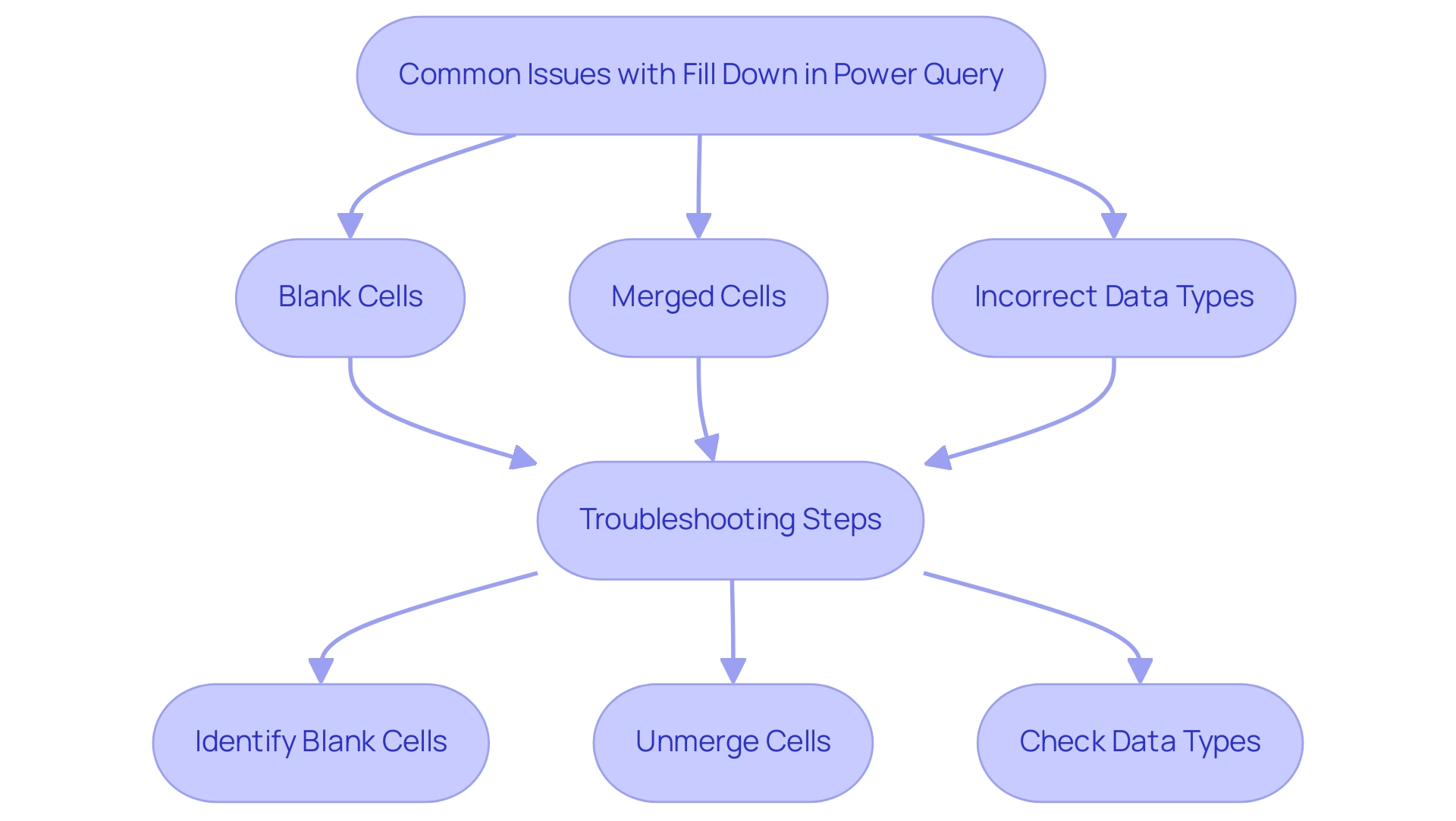

Common Challenges in Table Management and Solutions

Handling tables efficiently presents a range of challenges, including incomplete information, misaligned entries, and outdated content. For example, the presence of empty rows can obscure the identification of the last row in a table, complicating analysis. Statistics reveal that 95% of HR leaders express dissatisfaction with traditional performance appraisal processes, underscoring the necessity for improved information management practices across various sectors.

To address these challenges, the implementation of robust validation techniques is essential. Regular updates not only enhance accuracy but also ensure that information remains relevant. Automated tools for information entry, such as GUI automation, can significantly boost consistency and minimize errors, allowing professionals to focus on strategic tasks rather than manual corrections.
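As a minimal illustration of such validation, the sketch below assumes records arrive as dictionaries of strings. The hypothetical `validate_rows` function flags rows with blank required fields (incomplete information) and rows that repeat an identifier already seen, which would undermine unique keys. A real pipeline would add type, range, and freshness checks on top of this.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class ValidationReport:
    missing: List[int] = field(default_factory=list)     # rows with blank required fields
    duplicates: List[int] = field(default_factory=list)  # rows repeating an earlier key


def validate_rows(
    rows: List[Dict[str, Optional[str]]], required: List[str], key: str
) -> ValidationReport:
    """Flag rows with missing required fields and rows whose key was already seen."""
    report = ValidationReport()
    seen = set()
    for number, row in enumerate(rows, start=1):  # 1-based row numbers
        # None and "" both count as missing.
        if any(not row.get(col) for col in required):
            report.missing.append(number)
        value = row.get(key)
        if value:
            if value in seen:
                report.duplicates.append(number)
            seen.add(value)
    return report


rows = [
    {"id": "A1", "name": "Alice", "dept": "Sales"},
    {"id": "A2", "name": "", "dept": "HR"},           # missing name
    {"id": "A1", "name": "Alicia", "dept": "Sales"},  # duplicate id
]
report = validate_rows(rows, required=["id", "name", "dept"], key="id")
print(report)  # ValidationReport(missing=[2], duplicates=[3])
```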

A case study from a mid-sized healthcare company illustrates that the adoption of GUI automation led to a 70% reduction in entry errors and an 80% improvement in workflow efficiency, demonstrating the transformative impact of automation on operational processes. The company faced challenges such as manual data entry errors and slow software testing, which were effectively alleviated through automation, resulting in a return on investment (ROI) achieved within six months.

Real-world examples further underscore the effectiveness of these strategies. Initiatives that embrace organized project oversight techniques are 2.5 times more successful than their less structured counterparts, highlighting the importance of systematic approaches in achieving information integrity. Additionally, expert opinions emphasize the necessity of staying informed about advancements in information handling; 77% of professionals agree on the significance of keeping up with evolving standards, including ESG developments.

Looking ahead, 42% of CX leaders expect generative AI to influence voice-based interactions by 2026, indicating the potential impact of emerging technologies on information handling practices. Efficient information management not only enhances operational effectiveness but also plays a crucial role in customer retention and business success, as highlighted by customer service statistics from 2024. By proactively addressing these common obstacles and leveraging solutions such as RPA and Business Intelligence, professionals can refine their organizational skills, ultimately improving information quality and supporting informed decision-making.

Enhancing Operational Efficiency Through Effective Table Management

Efficient organization of resources is crucial for enhancing operational effectiveness within companies. A well-organized and consistently maintained table significantly simplifies information retrieval, reduces errors, and improves decision-making. Notably, 91% of project professionals report facing challenges related to project oversight, underscoring the importance of effective organization in addressing these issues.

For instance, adopting systematic information entry approaches has been shown to enhance quality, with organizations reporting a marked reduction in inaccuracies. In 2025, statistics suggest that automated tools for organizing information, particularly those utilizing Robotic Process Automation (RPA) from Creatum GmbH, can enhance entry accuracy by up to 30%. This advancement enables teams to focus their time on more strategic initiatives.

To uphold information quality in records, organizations should conduct regular audits and employ automated software tools that handle record-related tasks. These tools not only enhance accuracy but also empower employees to concentrate on high-value activities, thereby driving productivity. Specialist insights reveal that efficient arrangement of resources, bolstered by customized AI solutions from Creatum GmbH and Business Intelligence, can yield a 25% boost in overall operational effectiveness, as teams are better equipped to access and assess information rapidly.

Practical case studies demonstrate the transformative influence of efficient resource organization. The APEPM case study, for example, highlights that half of all Project Management Offices (PMOs) close within just three years, suggesting that PMOs often struggle to demonstrate sustained value. This underscores the necessity for improved alignment with organizational objectives and measurable outcomes, achievable through robust information handling practices enhanced by RPA and Business Intelligence.

Furthermore, organizations with well-structured information practices have experienced a 40% decrease in project delays, further emphasizing the essential role of information organization in project success. As Lulit Tesfaye noted, reliance on static reports is becoming outdated, highlighting the need for dynamic data management solutions that align with methodologies such as Agile, Waterfall, Hybrid, Lean, and Six Sigma. In summary, effective table management not only improves data handling but also contributes significantly to overall productivity, making it a vital focus for leaders aiming to optimize their operations in 2025.

Conclusion

In conclusion, the intricate relationship between effective table management and operational efficiency is undeniable, underscoring the pivotal role that structured data plays in contemporary organizations. As data volumes continue to surge, mastering table structures and pinpointing key rows is not merely advantageous; it is essential for safeguarding data integrity and bolstering decision-making capabilities. The integration of unique identifiers facilitates seamless data retrieval and minimizes errors, driving both growth and innovation.

Techniques for identifying the last row in a table are critical, with tools like Excel, SQL, and programming languages streamlining data management processes. Moreover, the incorporation of Robotic Process Automation (RPA) transcends mere automation of repetitive tasks, empowering teams to concentrate on strategic analysis and thereby enhancing overall efficiency. Real-world applications of these methodologies reveal significant improvements in operational workflows, reinforcing the necessity of effective data management strategies.

However, challenges such as incomplete data and misaligned rows persist, highlighting the urgent need for robust validation techniques and automated solutions. By embracing systematic approaches and leveraging emerging technologies, organizations can surmount these obstacles and elevate their data quality. Ultimately, effective table management transcends technical necessity; it emerges as a strategic imperative that underpins informed decision-making and propels organizational success within an increasingly data-driven landscape.

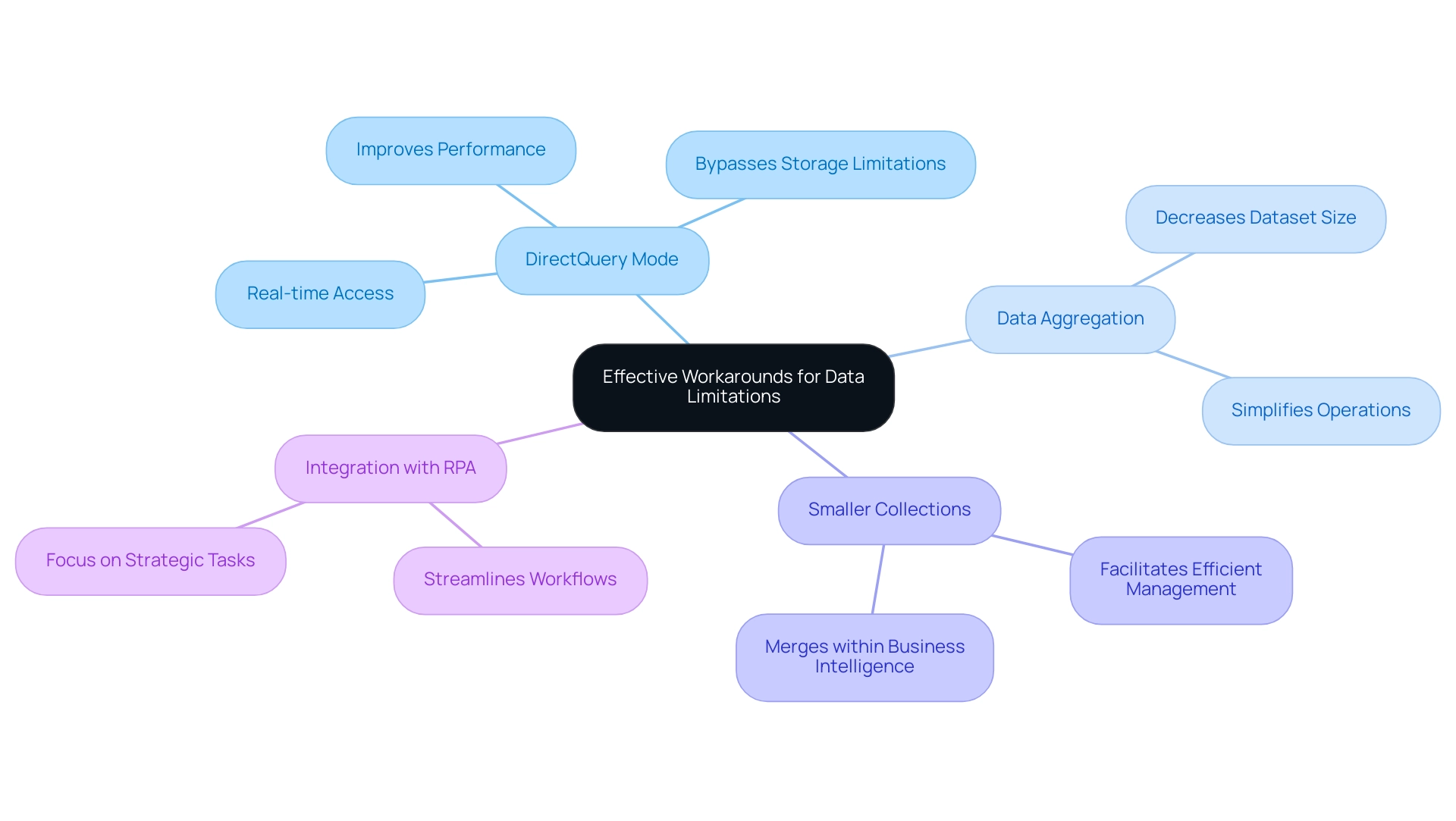

Overview

Mastering data management in Power BI requires a thorough understanding of the platform’s inherent data limits, which, if exceeded, can lead to significant performance issues. Consider the implications: how might your current practices be impacting efficiency?

Effective strategies are essential to navigate these challenges:

- Utilizing DirectQuery for real-time data access is a powerful approach.

- Optimizing dataset size and implementing best practices—such as limiting dashboard visuals—are critical for maintaining efficiency.

By adopting these strategies, organizations can ensure they derive actionable insights from their data.

Introduction

In the rapidly evolving landscape of data analytics, Power BI emerges as a formidable tool for organizations eager to harness their data’s potential. However, understanding the complexities of data limits is crucial for maintaining optimal performance and efficiency. With strict dataset size restrictions and unique challenges, users must possess a comprehensive understanding of these parameters to avoid pitfalls that could impede their reporting and analysis efforts.

Consider the constraints of DirectQuery mode and the intricacies of data management best practices. Organizations must adopt strategic approaches to effectively leverage their data. As the demand for data-driven decision-making intensifies, recognizing and addressing these limitations becomes essential in transforming raw data into actionable insights that propel growth and operational excellence.

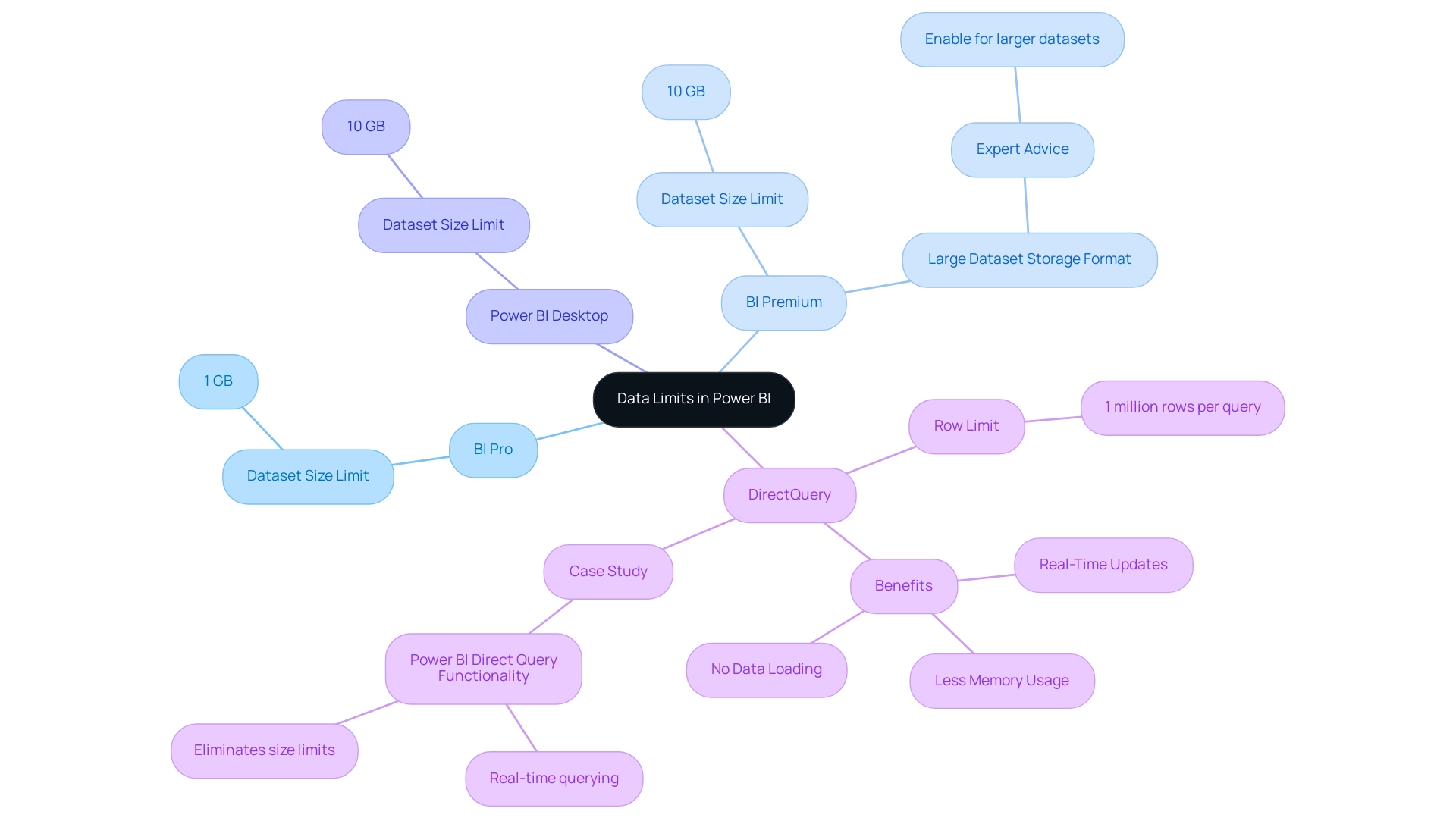

Understanding Data Limits in Power BI

Power BI establishes critical data limits that users must consider to maintain optimal performance and efficiency. In 2025, Power BI Pro enforces a dataset size limit of 1 GB, while Power BI Premium accommodates larger datasets, allowing uploads of up to 10 GB. The model upload size from Power BI Desktop is likewise capped at 10 GB, which clarifies the practical difference between the Pro and Premium tiers.

When using DirectQuery mode, users should note the limit of 1 million rows returned from the source in a single query. DirectQuery uses less memory than Import mode because it does not retain data in memory. A case study titled “Power BI Direct Query Functionality” highlights how DirectQuery enables Power BI to query sources in real time without loading data into memory.
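Because DirectQuery sends queries to the source at report time, one way to stay under the row cap is to aggregate at the source so that a visual requests summarized rows rather than raw detail. The sketch below is a scaled-down illustration using Python's `sqlite3` module; `ROW_CAP`, the `events` table, and `fits_under_cap` are hypothetical, and Power BI generates its own source queries rather than the ones shown here.

```python
import sqlite3

# Scaled-down stand-in for the DirectQuery cap: the real limit is
# 1,000,000 rows; a small value keeps this sketch fast.
ROW_CAP = 1_000

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (day INTEGER, region TEXT, amount REAL)")
conn.executemany(
    "INSERT INTO events VALUES (?, ?, ?)",
    [(i % 30, ("North", "South")[i % 2], 1.0) for i in range(5_000)],
)


def fits_under_cap(sql: str) -> bool:
    """Check whether a query's result set stays within ROW_CAP rows."""
    count = conn.execute(f"SELECT COUNT(*) FROM ({sql})").fetchone()[0]
    return count <= ROW_CAP


detail_sql = "SELECT * FROM events"  # 5,000 raw rows
summary_sql = (
    "SELECT day, region, SUM(amount) AS total FROM events GROUP BY day, region"
)  # at most 30 days x 2 regions = 60 rows

print(fits_under_cap(detail_sql))   # False
print(fits_under_cap(summary_sql))  # True
```

The design choice is to push the GROUP BY into the source, so the number of returned rows scales with the number of categories rather than the number of events.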

This approach removes the dataset-size constraint of imported models, keeps reports current, and minimizes memory usage, making it well suited to large datasets. However, many organizations struggle to leverage insights effectively because report creation is time-consuming and data is inconsistent across reports. For datasets that must be imported, Pratyasha Samal, a Memorable Member, advises: “Enable the Large dataset storage format option to use datasets in BI Premium that are larger than the default limit.”

Understanding these limits is essential for efficient information management and reporting. When data exceeds Power BI’s limits, errors and performance problems follow. By tackling these challenges with Business Intelligence and RPA solutions such as EMMA RPA and Power Automate, Creatum GmbH helps convert raw information into actionable insights, driving growth and operational efficiency.

Common Challenges When Data Exceeds Limits

In 2025, Power BI users encounter significant challenges when their data surpasses the platform’s limits, and these are compounded by poor master data quality. A critical issue is the inability to export large datasets, which can severely hinder reporting and analysis. This limitation often forces users to find alternative ways to share insights, such as generating reports in Power BI and exporting them as PDF or PowerPoint files for offline distribution, which is especially useful where internet access is unreliable.

Another prevalent obstacle is the ‘Visual Has Exceeded the Available Resources’ error. This occurs when a visual attempts to process more information than Power BI can accommodate, leading to disruptions in workflows and considerable frustration among users. Such errors not only impede productivity but also require a deeper understanding of how to manage and optimize data within the platform.

Organizations struggling with inconsistent, incomplete, or inaccurate data face additional challenges in effectively adopting AI solutions, further complicating their data management strategies. The perception that AI projects are time-consuming, costly, and difficult to implement often discourages organizations from fully embracing these technologies.

As organizations increasingly depend on data-driven decision-making, users must navigate these limitations deliberately. Power BI Premium offers a maximum storage capacity of 100 TB, and in data-rich environments even that threshold can be approached quickly. Understanding data management and integration in Power BI is therefore essential for optimizing operational efficiency and ensuring that teams can focus on strategic, value-enhancing activities.

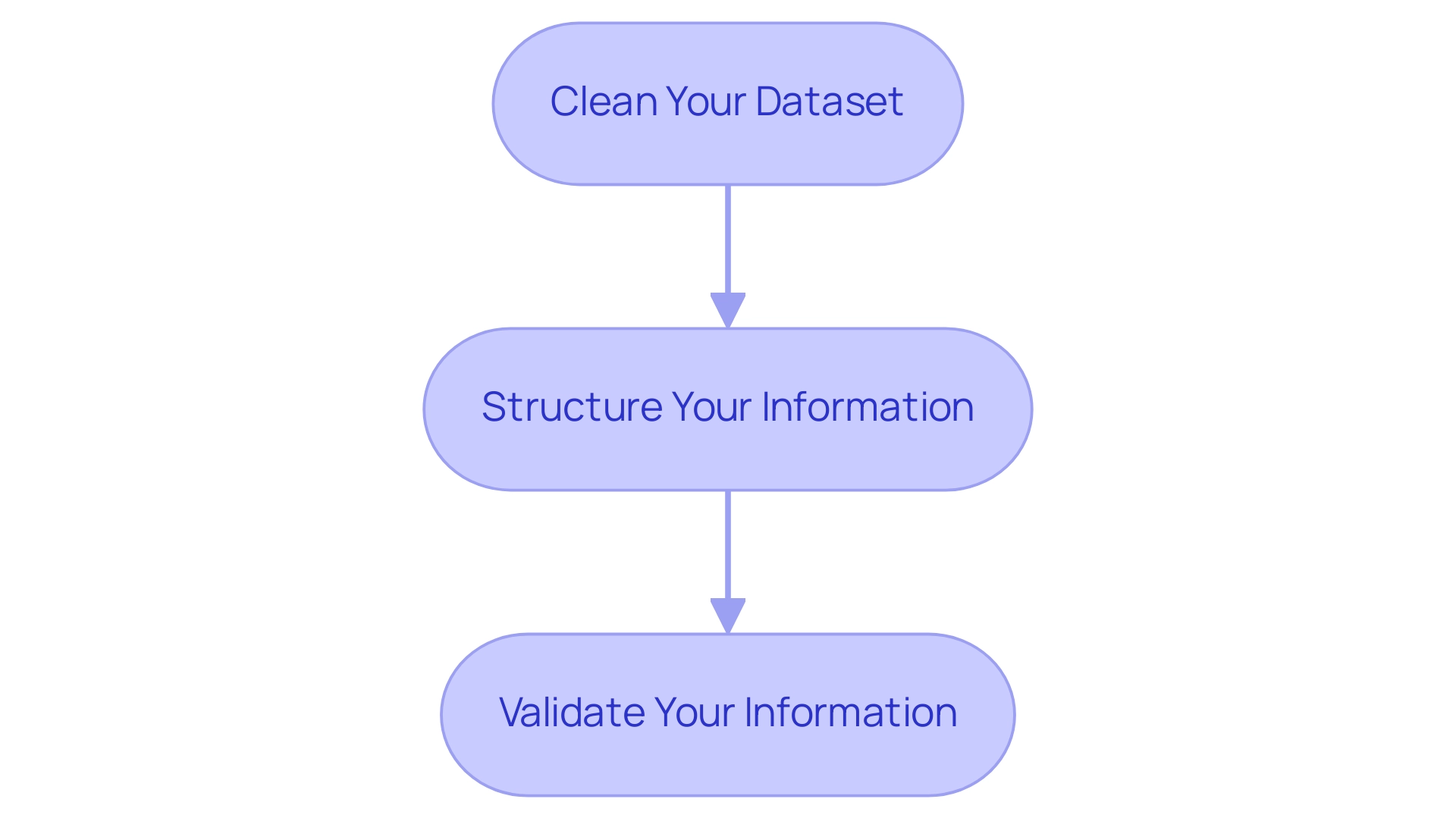

Moreover, Power BI is not a dedicated data-cleansing platform: beyond the transformations available in Power Query, it assumes that the data it retrieves is already of high quality. This assumption can make data integrity hard to maintain, particularly with large datasets. Meanwhile, the platform’s visual options continue to expand; as Miguel Myers, Leader of the BI Core team, remarked, ‘This year, BI has introduced visuals like Date Picker by Powerviz, Advanced Table, and Beeswarm visuals that provide numerous advanced options and customizations.’