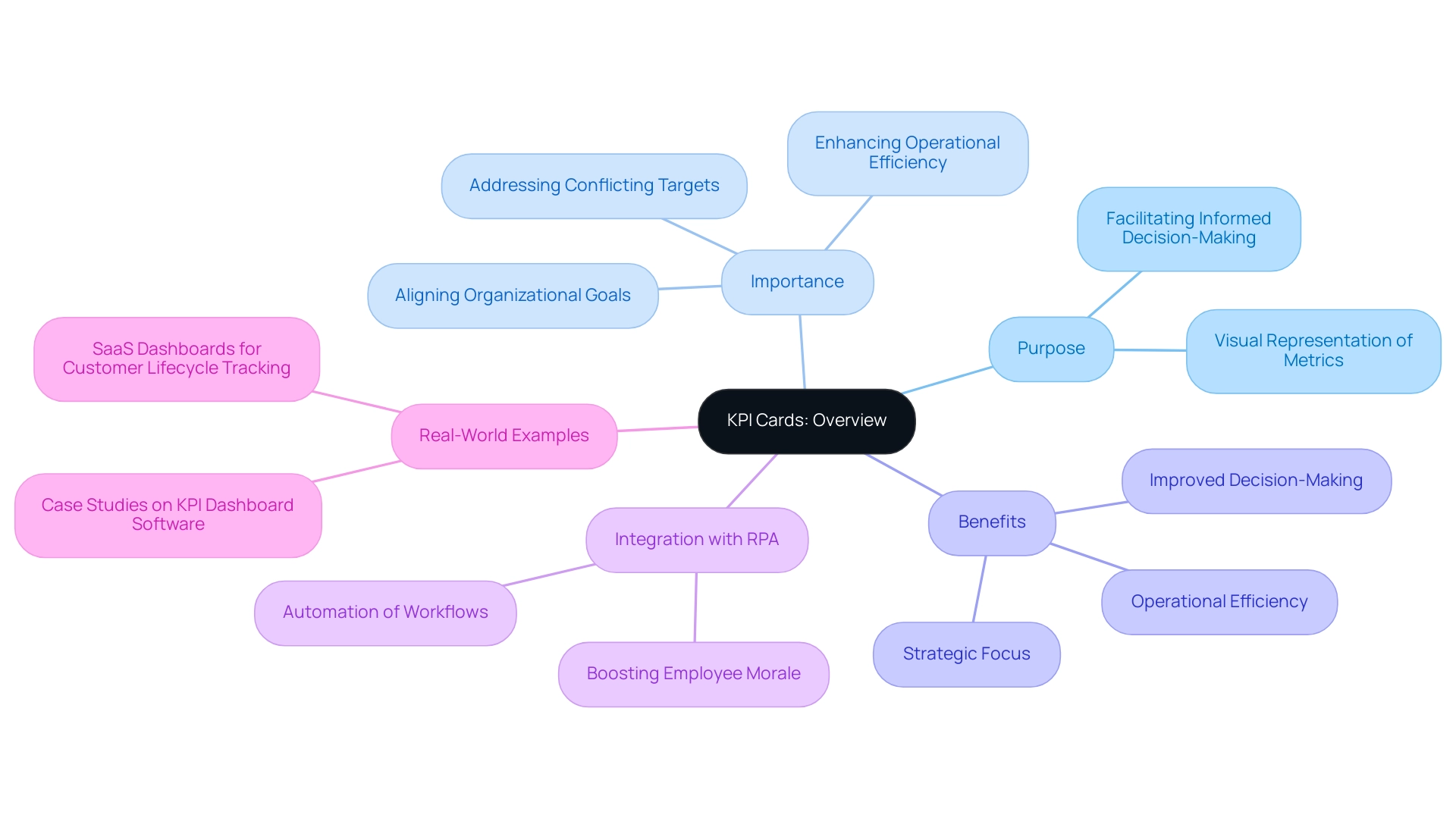

Overview

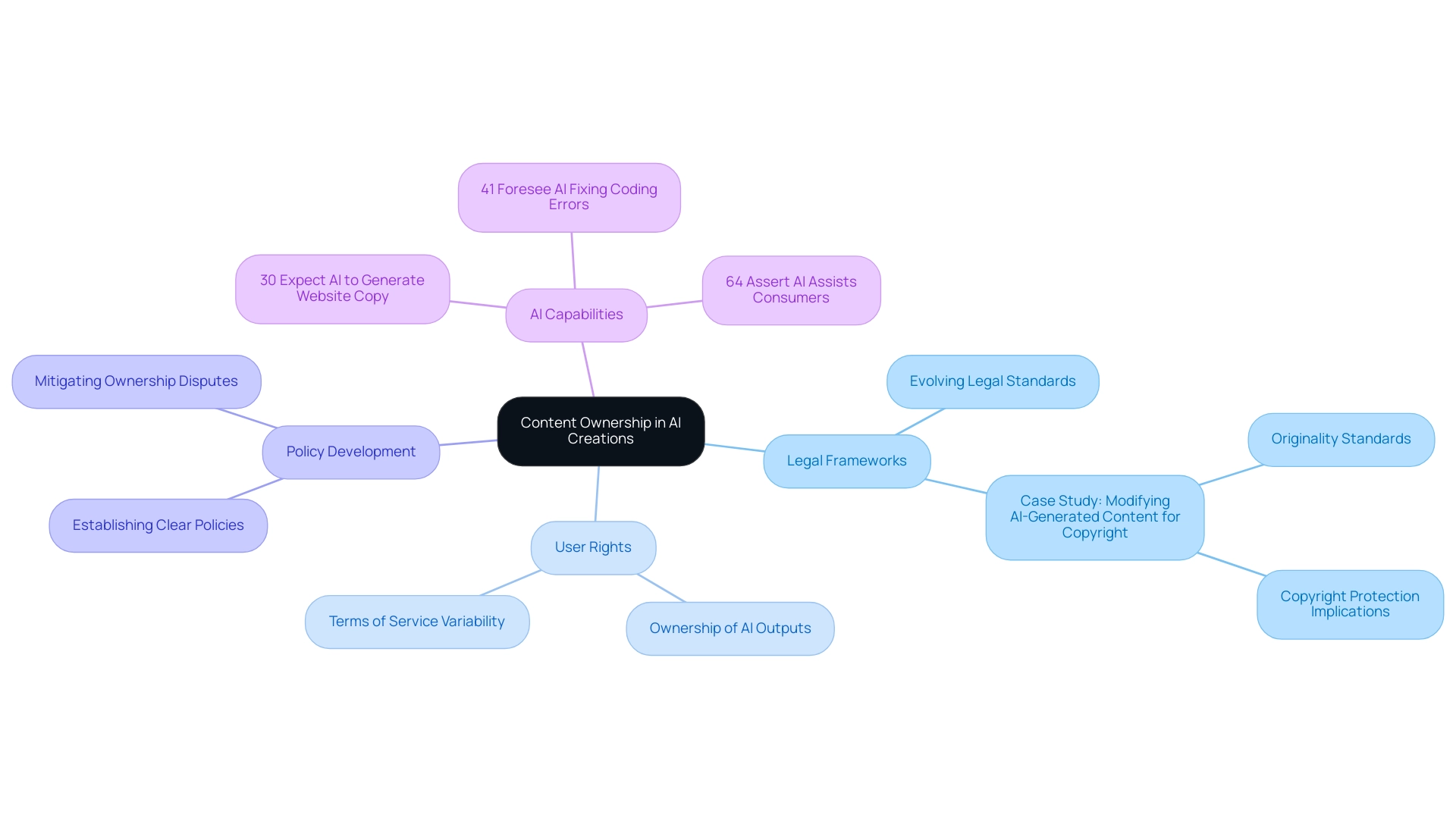

Mastering DAX SUM with filters is crucial for professionals seeking to elevate their data analysis skills. This capability enables precise calculations tailored to specific criteria through the CALCULATE function. Practical applications of DAX SUM with filters include:

- Sales performance analysis

- Year-over-year comparisons

These techniques drive informed decision-making and enhance operational efficiency, underscoring their value in a competitive landscape.

Introduction

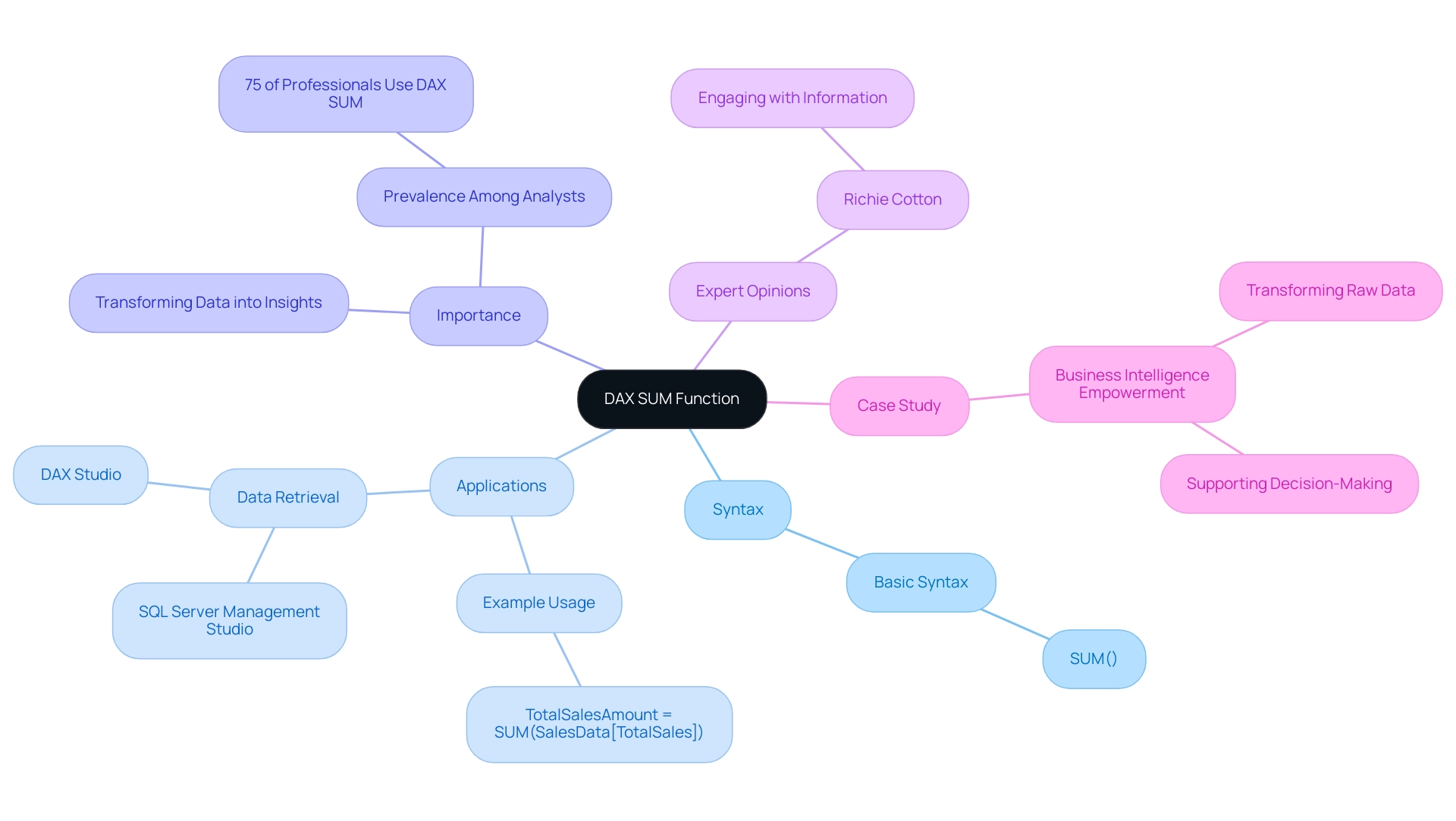

In the realm of data analysis, the DAX SUM function stands as a cornerstone for professionals seeking to derive meaningful insights from their datasets. As organizations increasingly rely on data-driven decision-making, understanding how to effectively utilize this powerful aggregation tool becomes paramount. With its straightforward syntax and crucial role in platforms like Power BI, mastering the DAX SUM function not only streamlines report creation but also enhances the accuracy of business intelligence efforts.

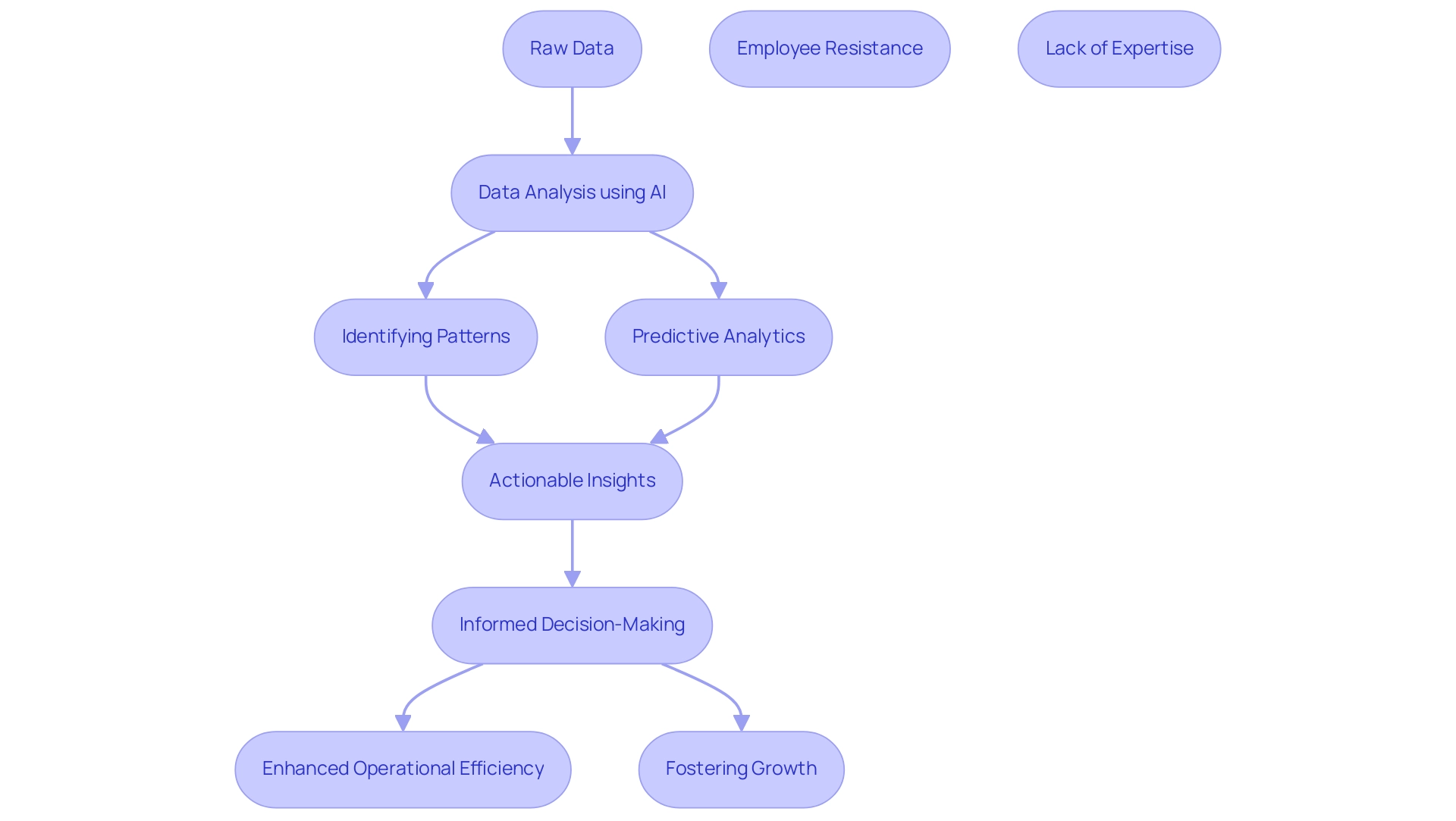

This article delves into the intricacies of DAX SUM, exploring its applications, the importance of filters, and practical strategies for overcoming common challenges. Ultimately, it equips data analysts with the knowledge to transform raw data into actionable insights.

Understanding DAX SUM: The Foundation of Data Analysis

The DAX SUM function is a fundamental tool for aggregating numerical data within a specified column, with a straightforward syntax: SUM(<column>). For example, to calculate total sales from a column named ‘Sales’ in a table named ‘SalesData’, the formula SUM(SalesData[Sales]) is used; DAX column references are written as the table name followed by the column name in square brackets. This function is essential for basic aggregations in Power BI, enabling users to summarize information for insightful reports and dashboards. Addressing challenges such as time-consuming report creation and inconsistencies is critical in today’s data-driven environment.

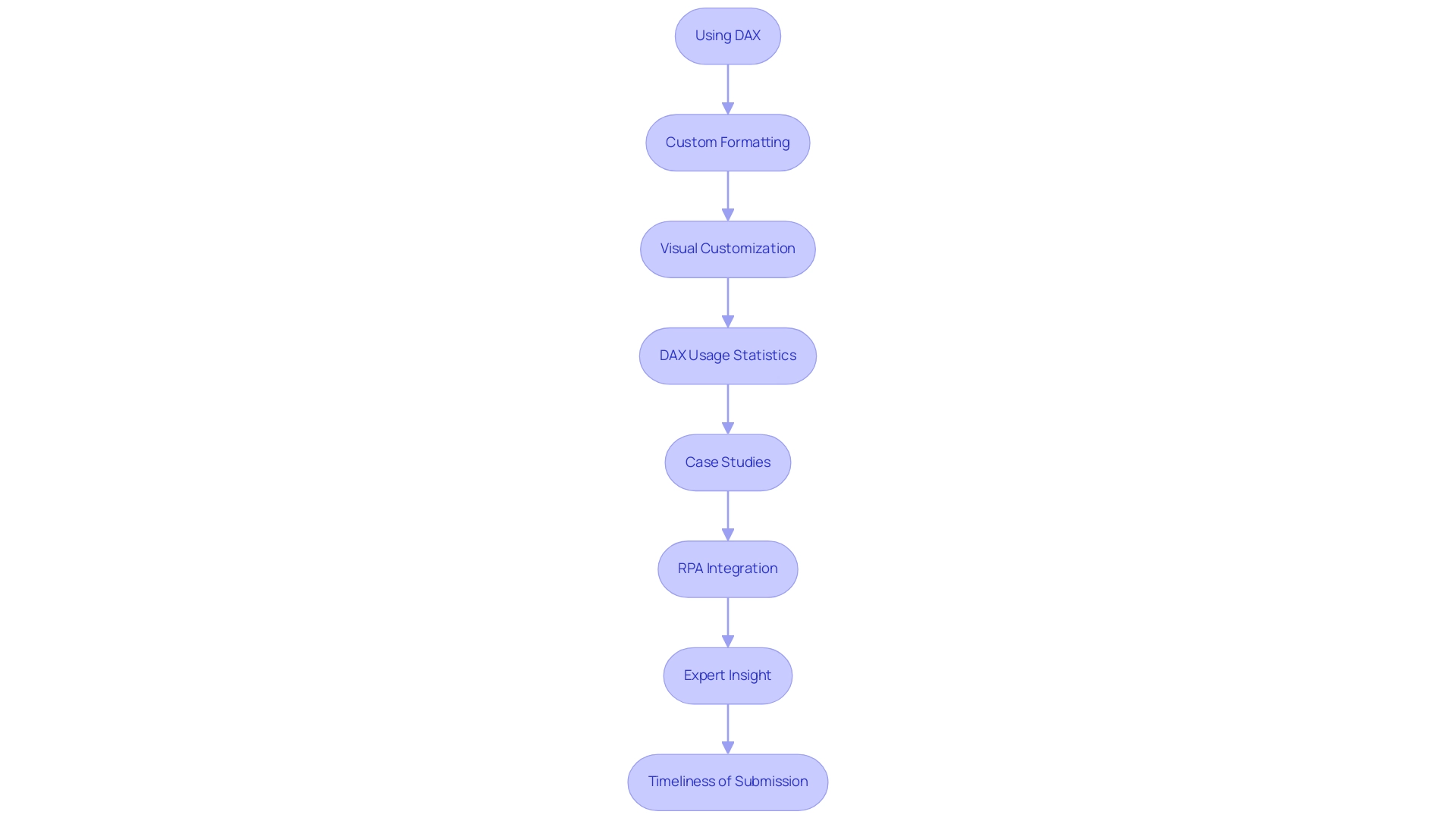

As we look to 2025, the prevalence of the DAX SUM function among analysts remains significant, with approximately 75% of professionals relying on it for aggregation tasks. This underscores its importance in the realm of Business Intelligence, where transforming raw data into actionable insights is vital for informed decision-making and fostering growth. The ability to generate and execute DAX queries in applications like SQL Server Management Studio and DAX Studio further enhances its utility, facilitating effortless data retrieval and manipulation.

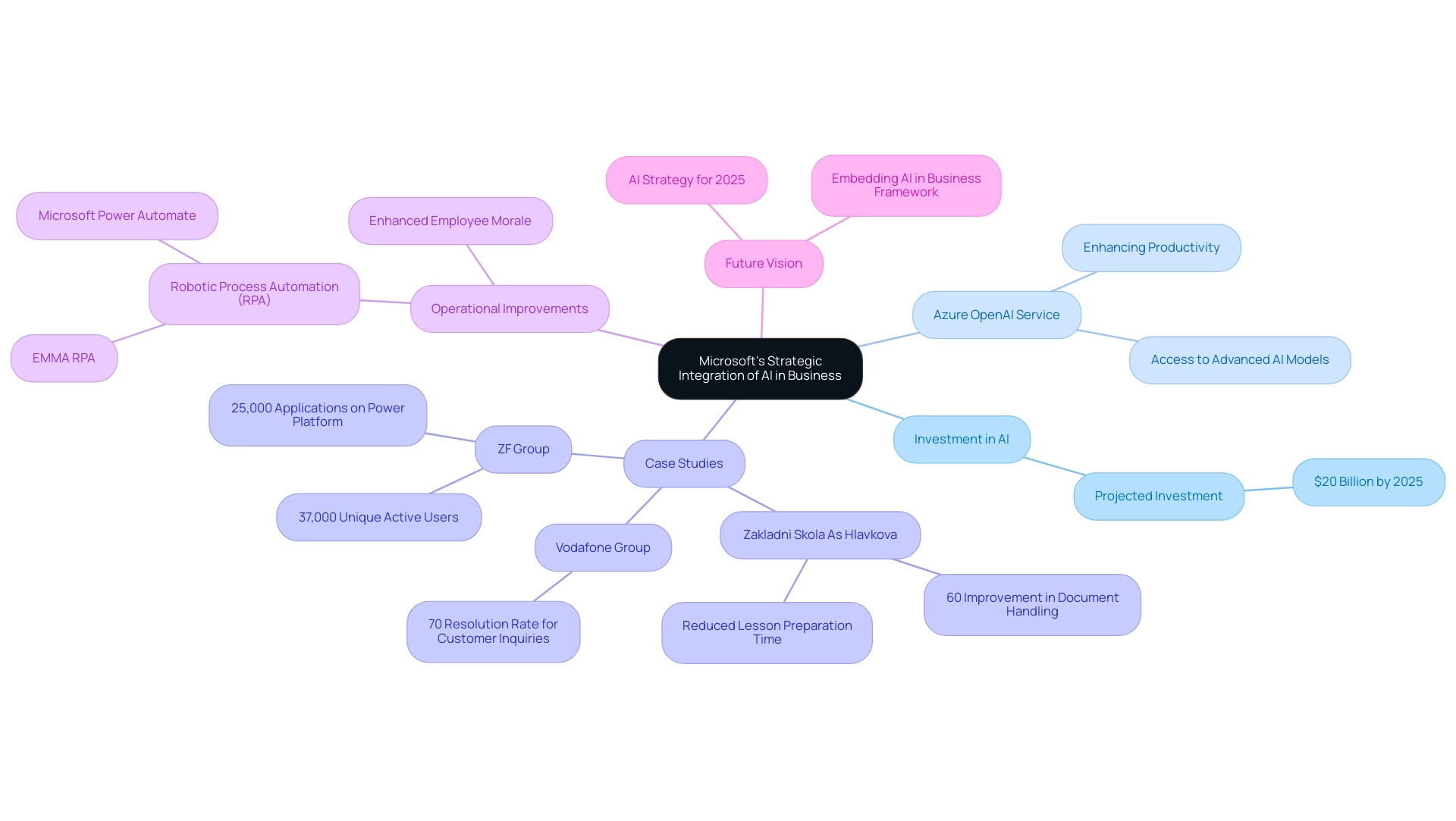

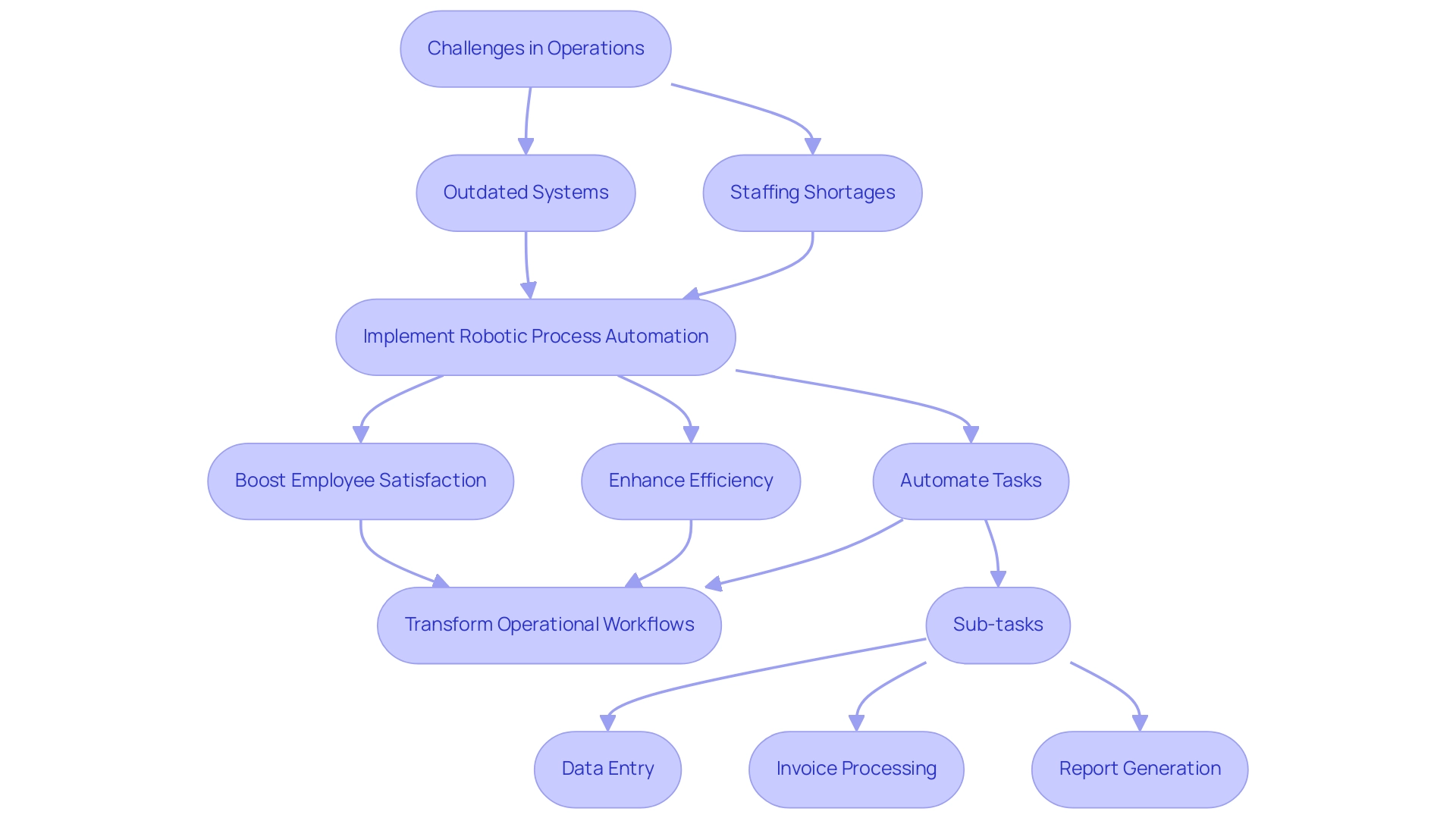

Mastering the effective use of DAX SUM with filters is crucial for those aiming to excel in DAX analysis. A pertinent case study, ‘Business Intelligence Empowerment’, illustrates how organizations leveraged DAX SUM with filters to convert raw sales data into actionable insights, significantly enhancing informed decision-making and supporting growth. This case study exemplifies the direct impact of the DAX SUM operation on business outcomes, aligning with the operational efficiency goals pursued by RPA solutions such as EMMA RPA from Creatum GmbH.

Expert opinions consistently highlight the value of applying filters to DAX SUM in Power BI, especially for data aggregation. Noted analytics expert Richie Cotton asserts, “Engaging with information is crucial for deriving meaningful insights.” This perspective reinforces the role of filtered SUM formulas in streamlining aggregation and enhancing the analytical capabilities of Power BI users.

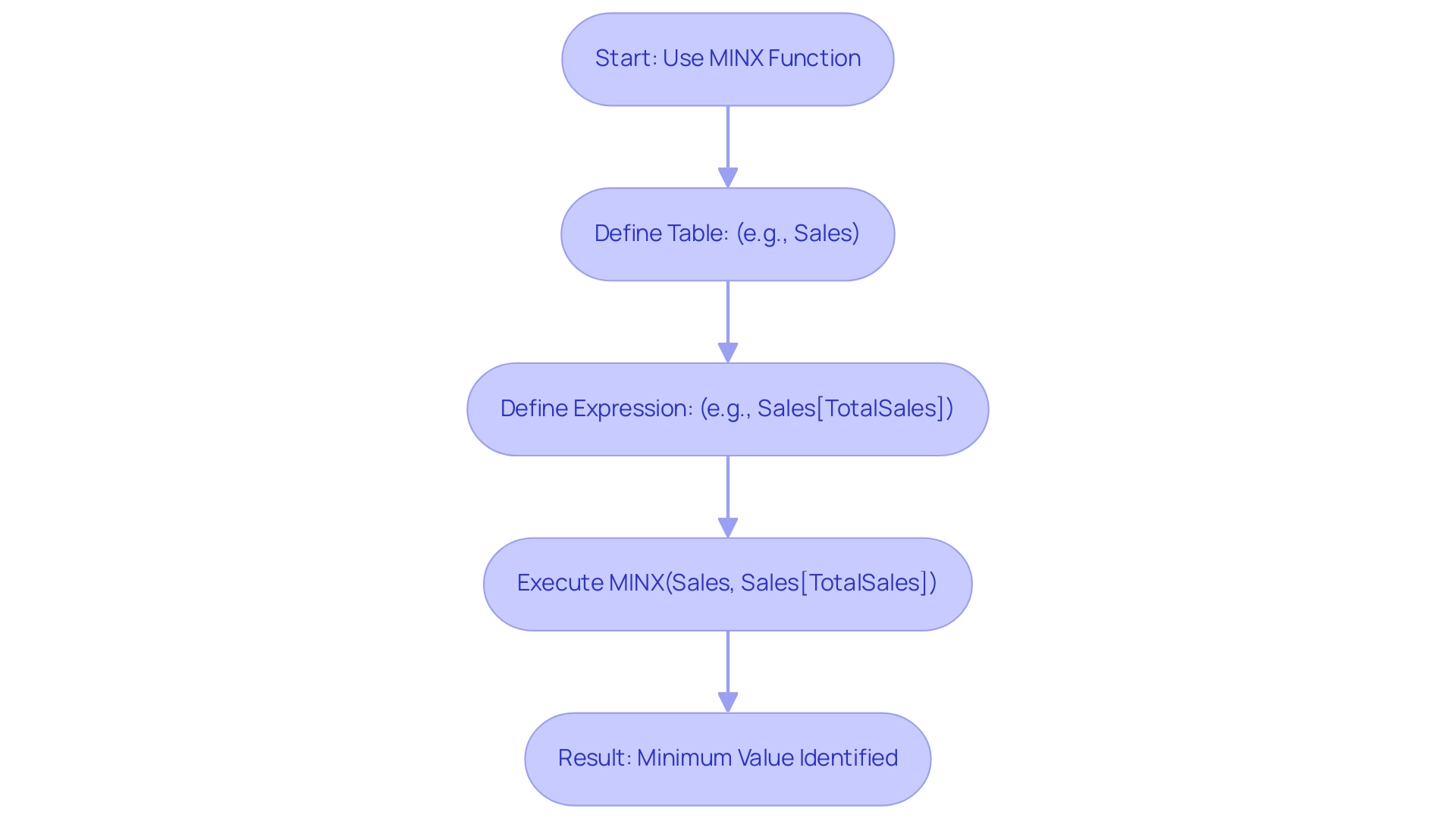

Real-world applications of the DAX SUM capability in Power BI showcase its versatility. For instance, if you have a table named ‘SalesData’ with a column ‘TotalSales’, calculating the total sales amount can be accomplished with:

TotalSalesAmount = SUM(SalesData[TotalSales])

This straightforward implementation exemplifies how the DAX SUM function can be utilized to provide quick and accurate data summaries, solidifying its status as a cornerstone of effective data analysis. Furthermore, mastering DAX is best achieved through software designed for information modeling, ensuring users fully comprehend the nuances of these powerful capabilities. To explore how Creatum GmbH’s solutions can enhance your information analysis capabilities, book a free consultation today.
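One way to check a measure like this before placing it in a report is to run it as a DAX query in a tool such as DAX Studio. The query below is a minimal sketch: it assumes the SalesData table also has a Region column (as in the filter examples in this article) and defines the measure locally so nothing in the model is changed.

```dax
-- Query-scoped definition of the measure from the text
DEFINE
    MEASURE SalesData[TotalSalesAmount] = SUM ( SalesData[TotalSales] )

-- One row per region with its total, for a quick sanity check
EVALUATE
SUMMARIZECOLUMNS (
    SalesData[Region],
    "Total Sales", [TotalSalesAmount]
)
ORDER BY SalesData[Region]
```

Comparing the per-region rows against a known total from the source data is a simple way to confirm the aggregation behaves as expected.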

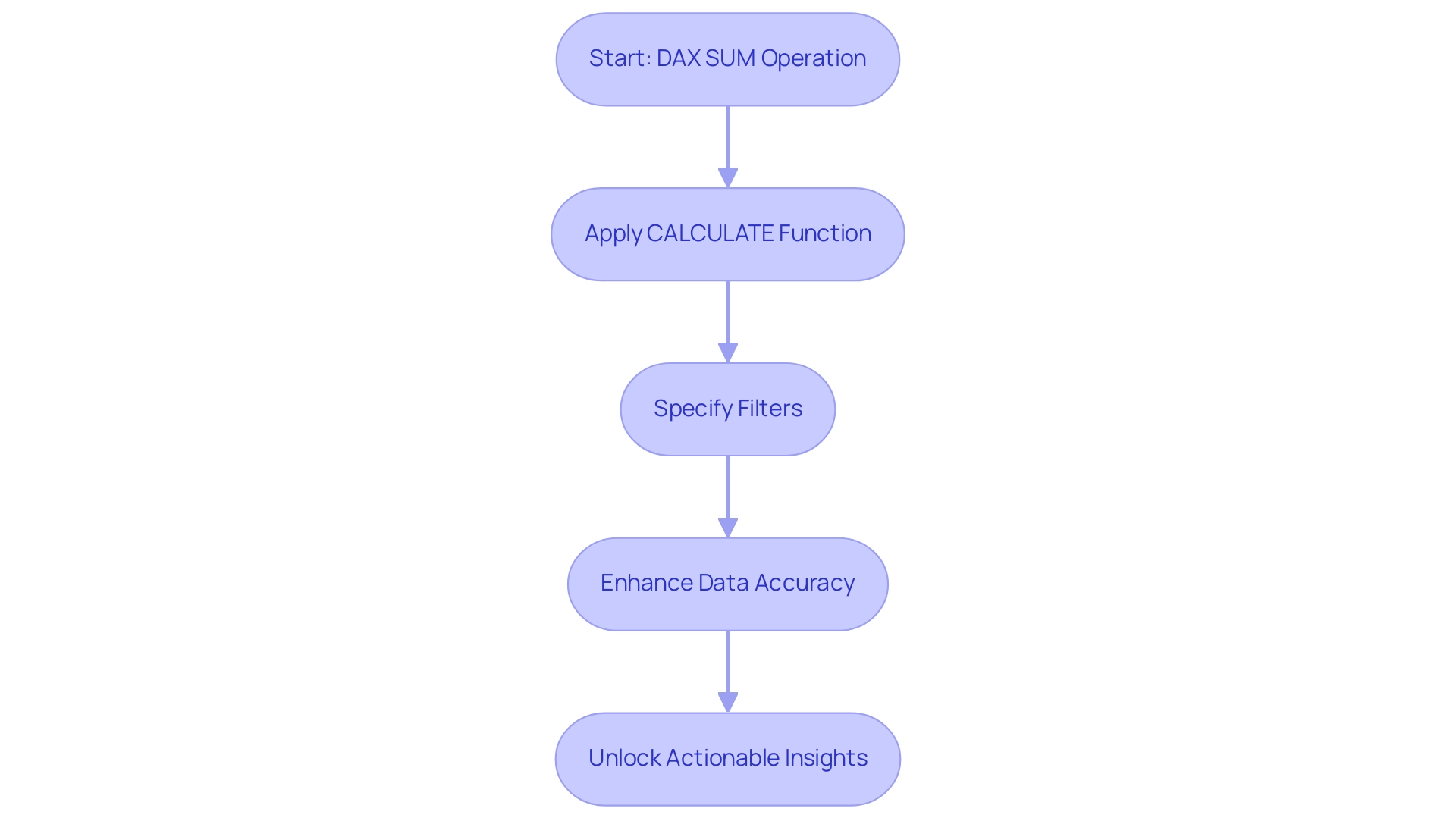

The Role of Filters in DAX SUM: Enhancing Data Accuracy

Filters are essential for refining the data processed by the SUM function, enabling precise calculations tailored to specific criteria. By wrapping SUM in the CALCULATE function, users can apply filters directly within their DAX formulas. For instance:

TotalSalesWest = CALCULATE(SUM(SalesData[TotalSales]), SalesData[Region] = "West")

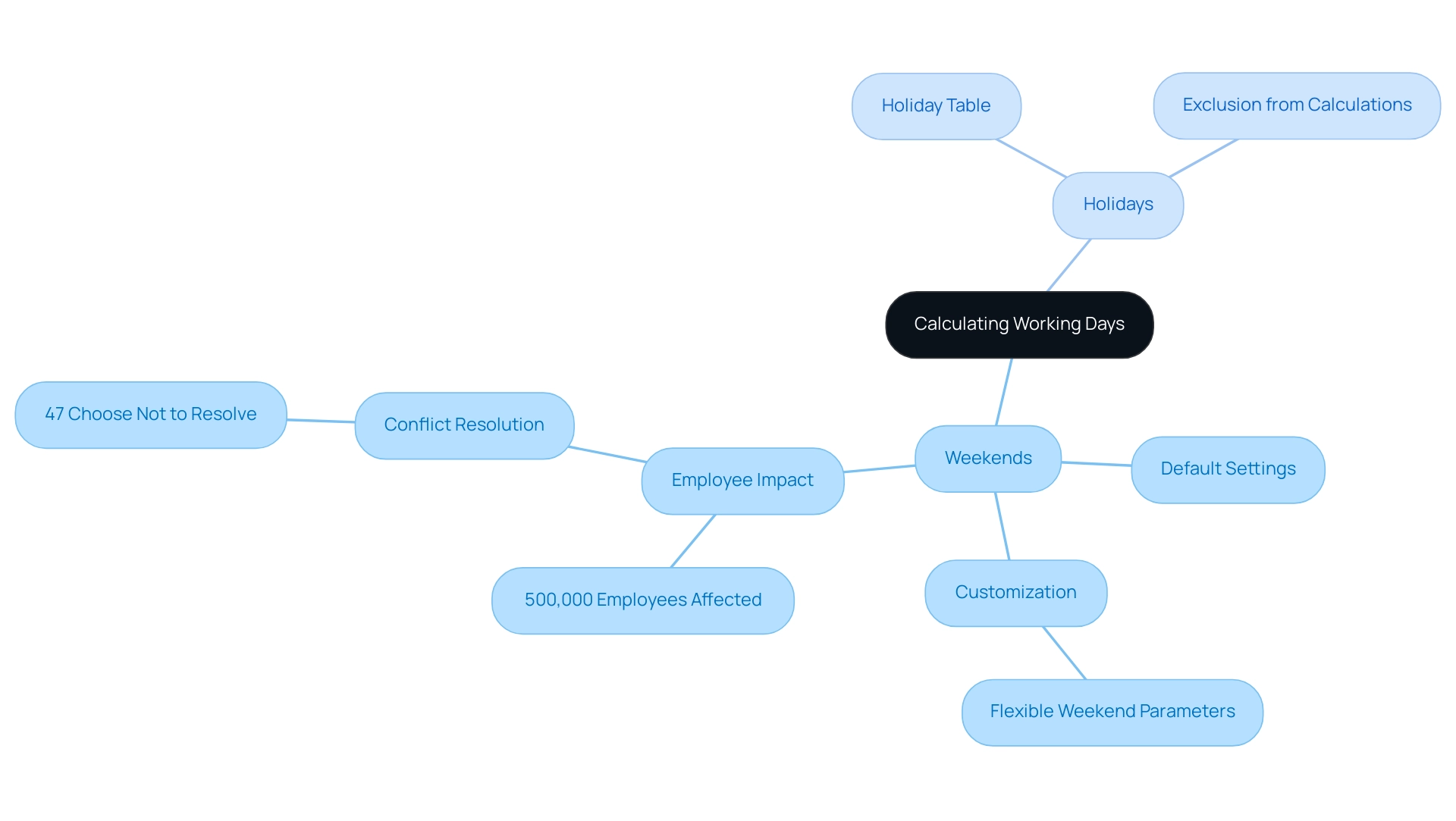

This formula computes the total sales for the ‘West’ region, illustrating how targeted filtering enhances data accuracy. In 2025, the impact of filters on DAX calculations is underscored by the fact that well-structured filters significantly improve the reliability of insights obtained from analysis, which is crucial for driving business growth through informed decision-making.
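To make the mechanics more concrete, the sketch below shows the same West filter written in its expanded form, followed by a measure with two filter arguments. Each definition is a separate measure; the Category column is borrowed from the sales performance example later in this article, and the measure names are illustrative.

```dax
-- Compact form: a Boolean filter argument
TotalSalesWest =
CALCULATE ( SUM ( SalesData[TotalSales] ), SalesData[Region] = "West" )

-- Expanded form of the same filter: a table of Region values
-- restricted to "West", which replaces any existing Region filter
TotalSalesWestExpanded =
CALCULATE (
    SUM ( SalesData[TotalSales] ),
    FILTER ( ALL ( SalesData[Region] ), SalesData[Region] = "West" )
)

-- Multiple filter arguments are combined with AND logic
TotalSalesWestElectronics =
CALCULATE (
    SUM ( SalesData[TotalSales] ),
    SalesData[Region] = "West",
    SalesData[Category] = "Electronics"
)
```

Because the Boolean form replaces the existing filter on Region, a slicer set to another region does not change the result of TotalSalesWest.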

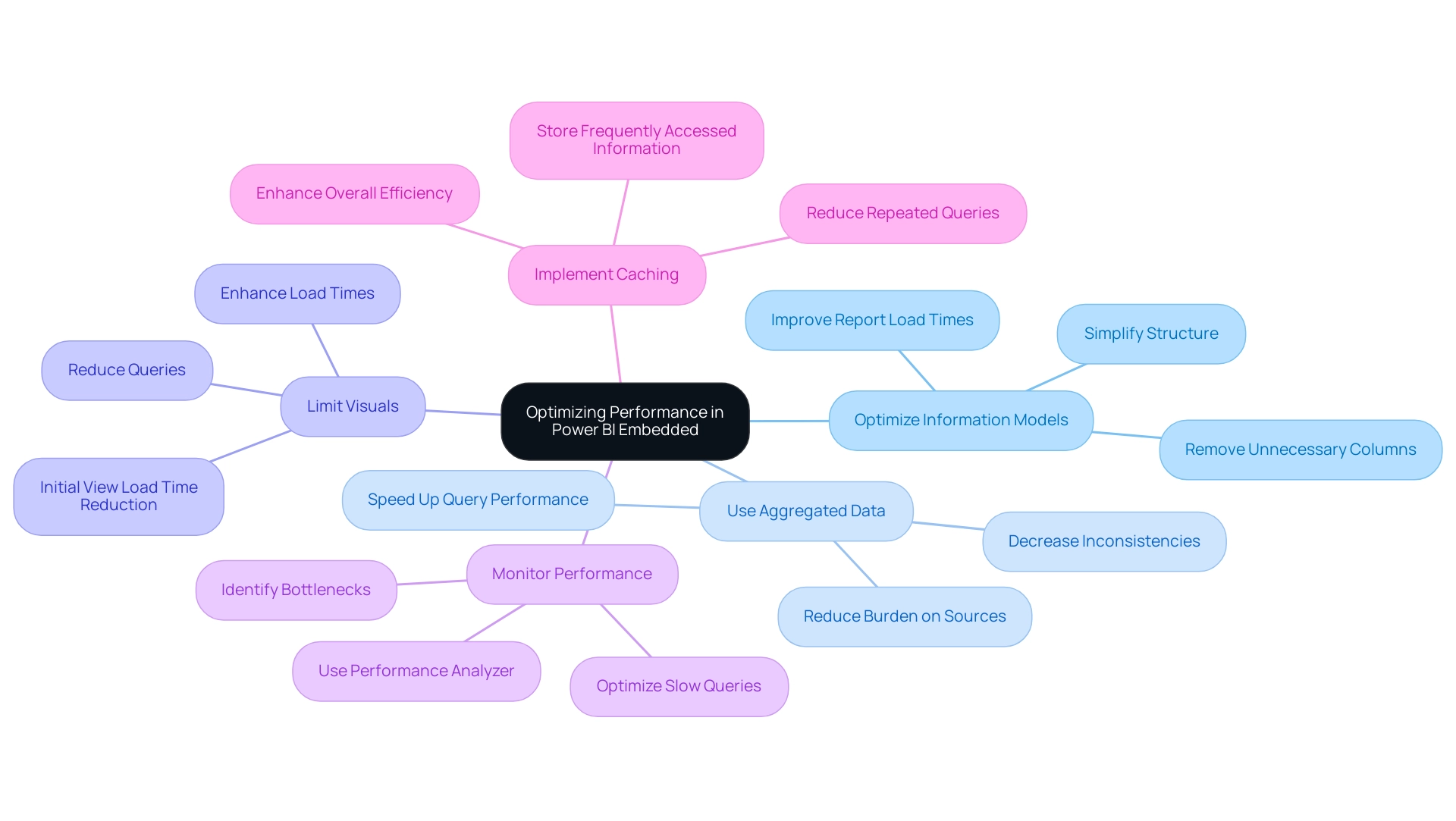

Current trends in data filtering methods emphasize the importance of understanding filter context, as highlighted in a recent case study titled ‘Optimization Techniques for Filter Context in Power BI.’ This study reveals that simplifying DAX calculations and using functions such as ALL, REMOVEFILTERS, and KEEPFILTERS can lead to substantial performance improvements in Power BI reports. By mastering these techniques, users can unlock actionable insights that drive informed decision-making, addressing challenges like time-consuming report creation and data inconsistencies.
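As a rough illustration of how these three functions change filter context, the following sketch defines three separate measures over the SalesData table used in this article. The measure names are hypothetical.

```dax
-- REMOVEFILTERS clears any filter on Region, so the result is the
-- total across all regions regardless of slicer selections on Region
TotalSalesAllRegions =
CALCULATE ( SUM ( SalesData[TotalSales] ), REMOVEFILTERS ( SalesData[Region] ) )

-- ALL used as a CALCULATE modifier has the same effect here
TotalSalesAllRegionsAlt =
CALCULATE ( SUM ( SalesData[TotalSales] ), ALL ( SalesData[Region] ) )

-- KEEPFILTERS intersects the new filter with the existing one
-- instead of replacing it
TotalSalesWestKeep =
CALCULATE (
    SUM ( SalesData[TotalSales] ),
    KEEPFILTERS ( SalesData[Region] = "West" )
)
```

With a slicer set to a region other than West, TotalSalesWestKeep returns blank because the intersection of the two filters is empty, whereas the same measure without KEEPFILTERS would still return the West total.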

Moreover, expert opinions in 2025 suggest that the CALCULATE function remains a cornerstone of effective DAX usage, particularly in scenarios requiring nuanced information analysis. The ability to manipulate filter context not only enhances calculation accuracy but also empowers professionals to derive meaningful insights from complex datasets. As Rutuja Dinde aptly notes, “With the right approach, filter context becomes a powerful tool for unlocking actionable insights.”

Incorporating the DAX sum with filter through slicers in Power BI visuals or directly within DAX formulas allows for focused analysis, ensuring that the information examined is relevant and actionable. This strategic use of filters is essential for optimizing information accuracy and enhancing overall operational efficiency, critical in today’s information-rich environment.

Additionally, Creatum GmbH’s RPA solutions can enhance these DAX functions by automating repetitive tasks, thus improving efficiency and addressing staffing shortages, ultimately supporting better information management and insight generation.

Practical Applications: Using DAX SUM with Filters in Real Scenarios

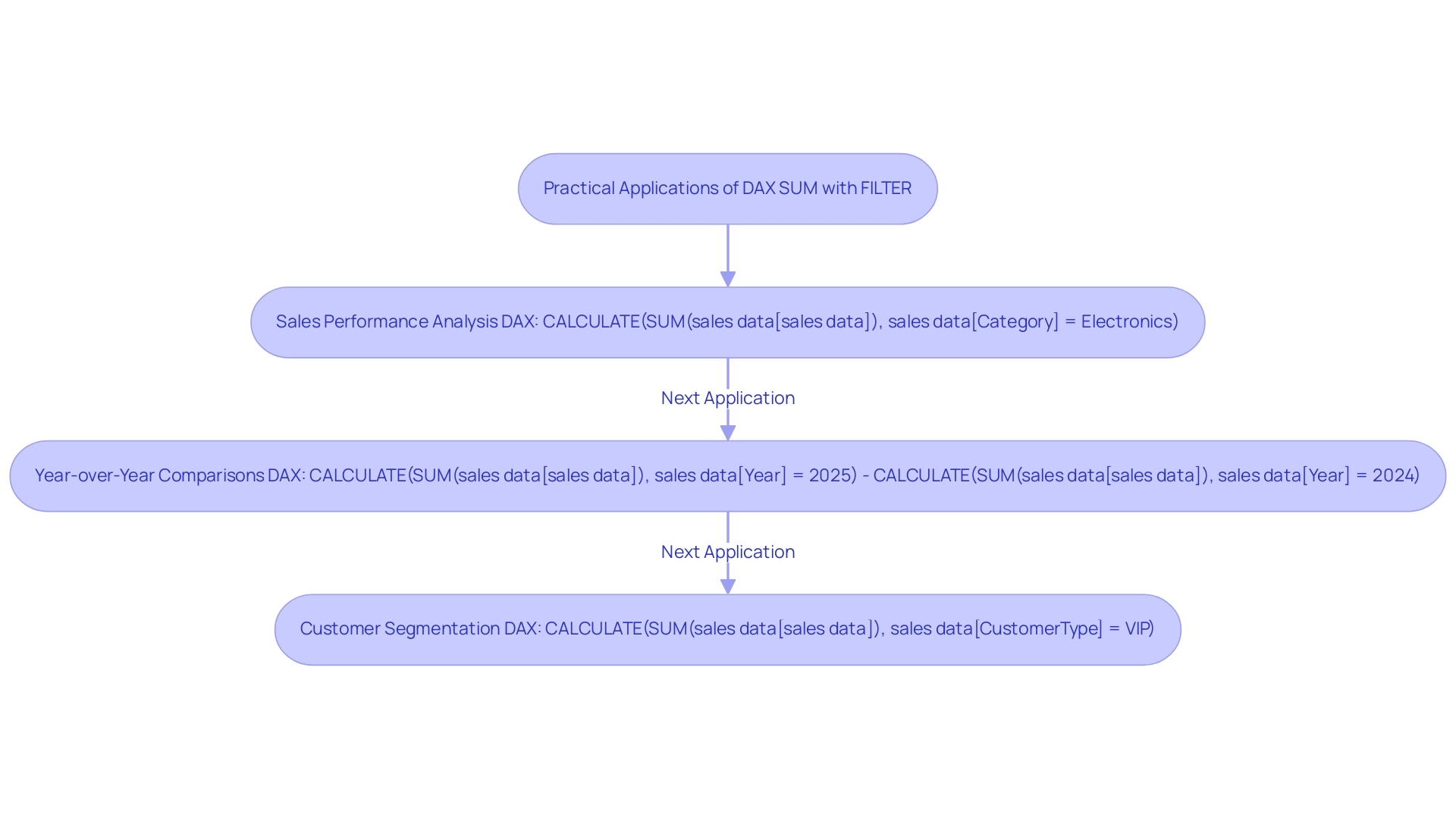

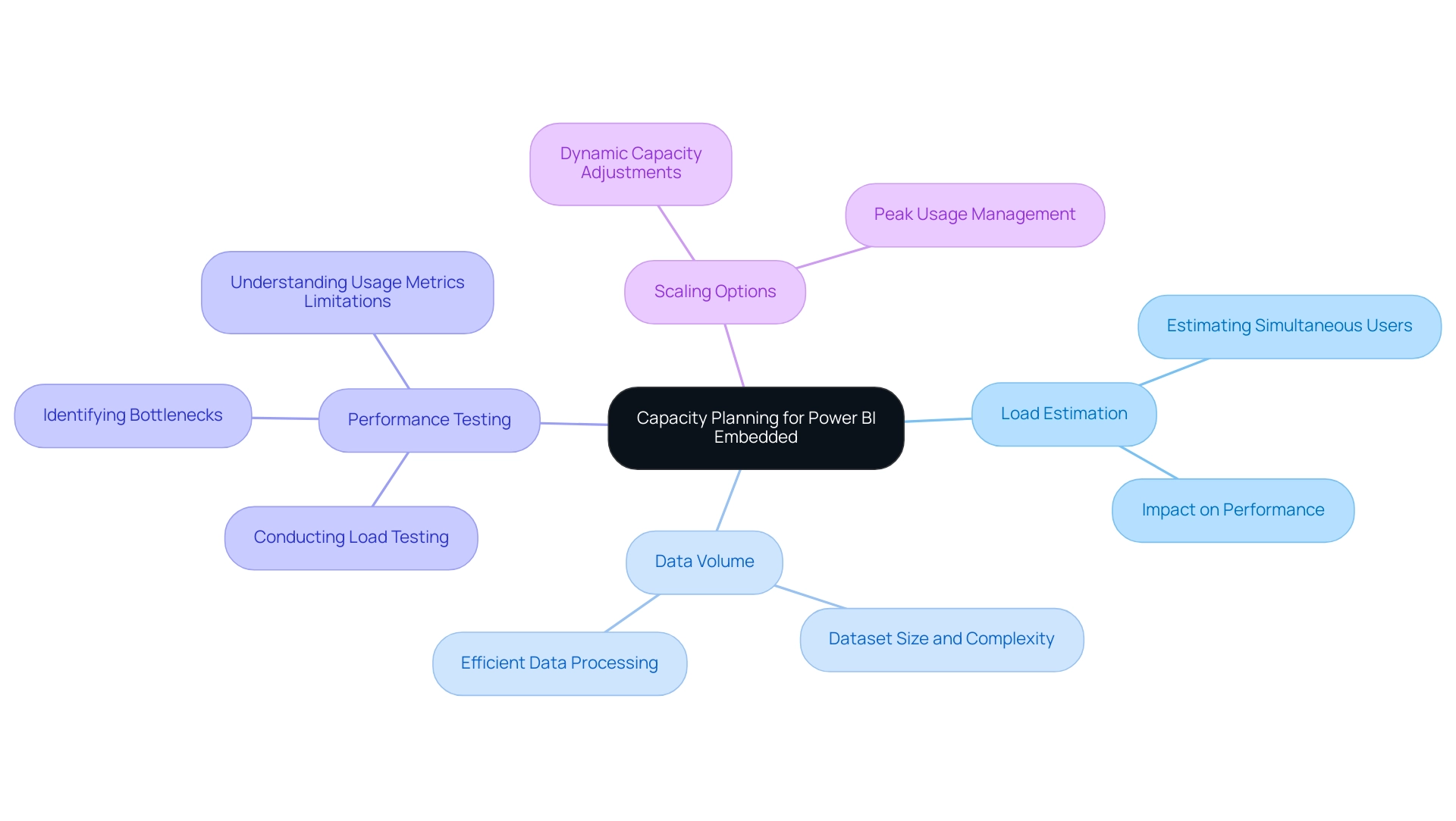

Using DAX SUM with filters significantly enhances analytical capabilities, providing precise insights into sales performance and growth trends. In today’s data-rich environment, where extracting meaningful insights is crucial for maintaining a competitive edge, several practical applications emerge:

- Sales Performance Analysis: Calculating total sales for specific products or categories allows businesses to focus on their most profitable segments. For instance:

TotalElectronicsSales = CALCULATE(SUM(SalesData[TotalSales]), SalesData[Category] = "Electronics")

This formula enables analysts to isolate sales data for electronics, facilitating targeted marketing strategies and effective inventory management. Custom visual files in Power BI prove especially beneficial when prepackaged visuals fall short of business requirements, enabling tailored insights that address inaccuracies and enhance operational efficiency.

- Year-over-Year Comparisons: Understanding sales growth is essential for strategic planning. By comparing current year sales to previous years, organizations can assess their performance trajectory:

SalesGrowth = CALCULATE(SUM(SalesData[TotalSales]), SalesData[Year] = 2025) - CALCULATE(SUM(SalesData[TotalSales]), SalesData[Year] = 2024)

This calculation provides a clear view of year-over-year growth, crucial for evaluating business health and forecasting future sales. Notably, the Y-axis of the graph illustrates the switchable measure, either Profit or Sales, highlighting the versatility of DAX SUM in performance analysis and the importance of actionable guidance in decision-making.

- Customer Segmentation: Analyzing sales performance across different customer segments reveals valuable insights into purchasing behaviors. For example:

TotalSalesVIP = CALCULATE(SUM(SalesData[TotalSales]), SalesData[CustomerType] = "VIP")

This measure aids businesses in understanding the contribution of VIP customers to overall sales, guiding loyalty programs and personalized marketing efforts. A Data Analyst with seven years of experience, who transitioned to an Associate Data Engineer role, found success through a Certification course, underscoring the importance of enhancing analytical skills to overcome challenges in leveraging insights.
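The year-over-year calculation above can also be written with variables, which names each intermediate result and makes the formula easier to read and debug. This is a sketch only; it assumes the SalesData[TotalSales] and SalesData[Year] columns used in this article, and the measure name is illustrative.

```dax
SalesGrowthWithVariables =
-- Total sales restricted to the current year
VAR CurrentYearSales =
    CALCULATE ( SUM ( SalesData[TotalSales] ), SalesData[Year] = 2025 )
-- Total sales restricted to the previous year
VAR PreviousYearSales =
    CALCULATE ( SUM ( SalesData[TotalSales] ), SalesData[Year] = 2024 )
RETURN
    CurrentYearSales - PreviousYearSales
```

Hard-coded years keep the example simple; a production model would more likely derive them from a date table.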

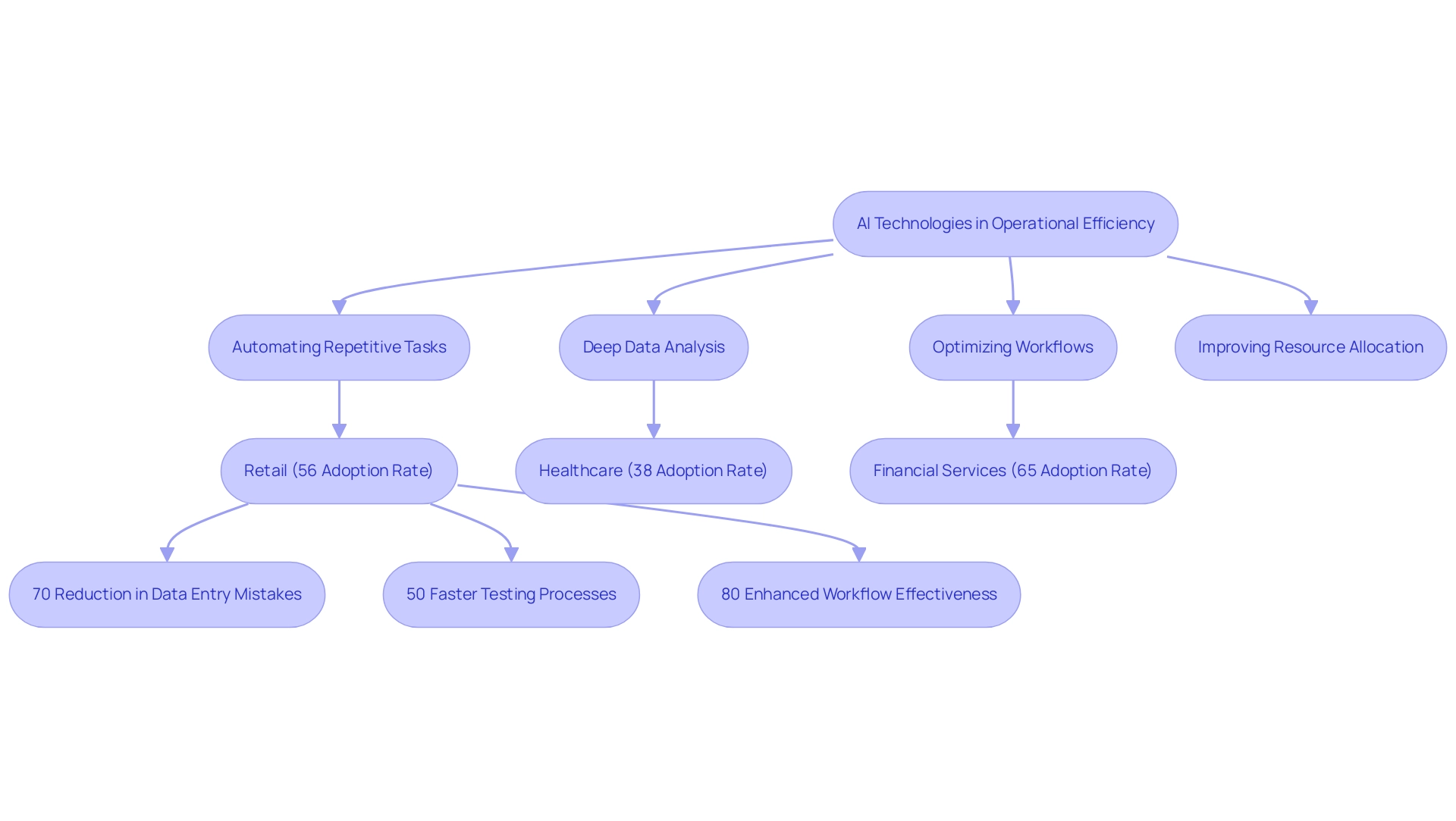

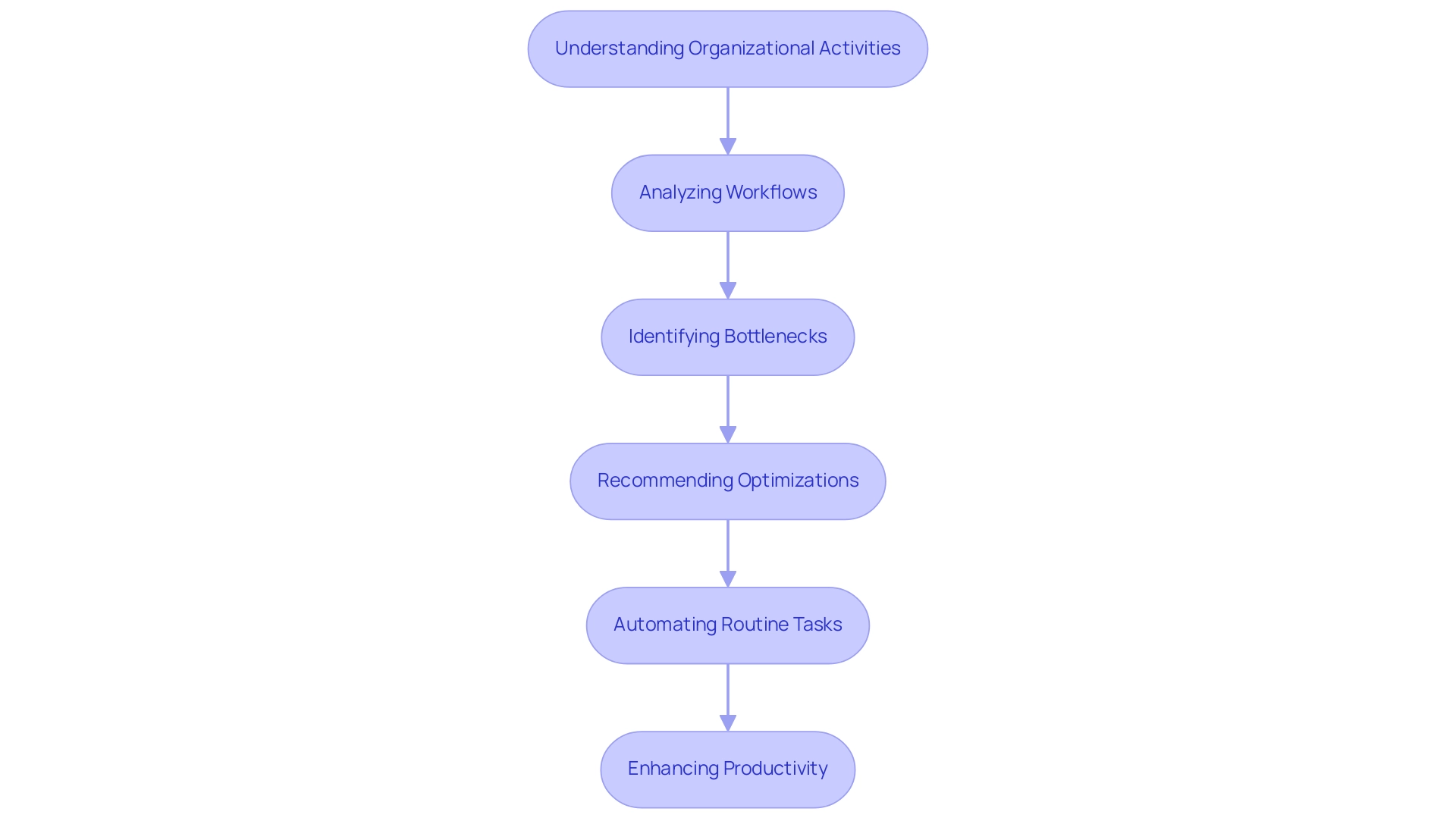

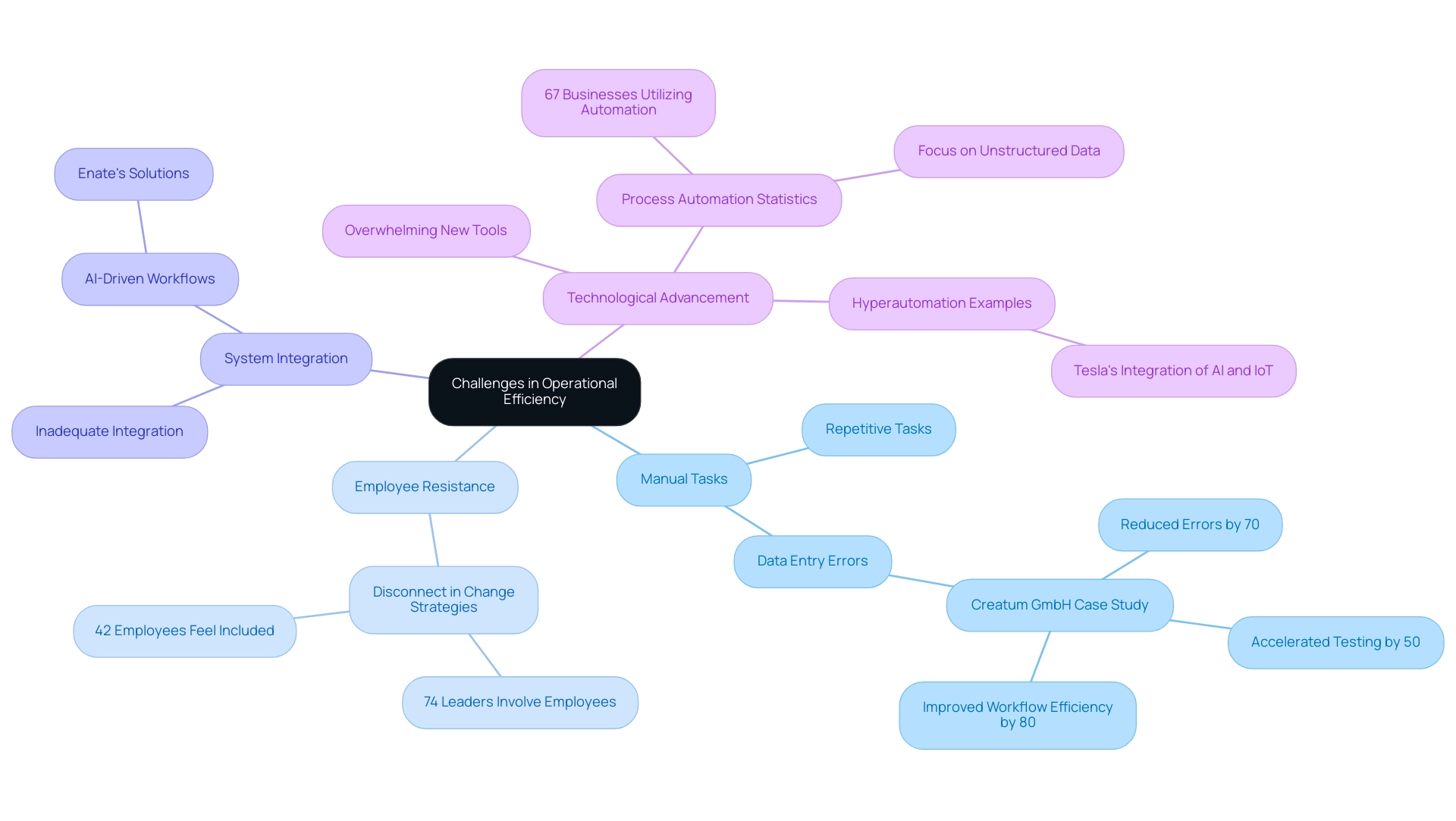

Beyond these DAX applications, integrating RPA solutions like EMMA RPA and Power Automate significantly enhances operational efficiency. By automating repetitive information preparation tasks, organizations can free up valuable time for analysts to concentrate on deriving insights from their findings. These RPA tools optimize workflows and ensure information consistency, ultimately resulting in more precise and prompt decision-making.

These examples illustrate how DAX SUM with filters can effectively extract actionable insights from data, driving informed decision-making and enhancing operational efficiency. In 2025, organizations leveraging these techniques are expected to see significant improvements in year-over-year sales growth, reinforcing the importance of data-driven strategies in today’s competitive landscape. As professionals such as Business Analysts, Business Owners, and Business Developers increasingly utilize Power BI, mastering DAX functions alongside RPA solutions becomes essential for success.

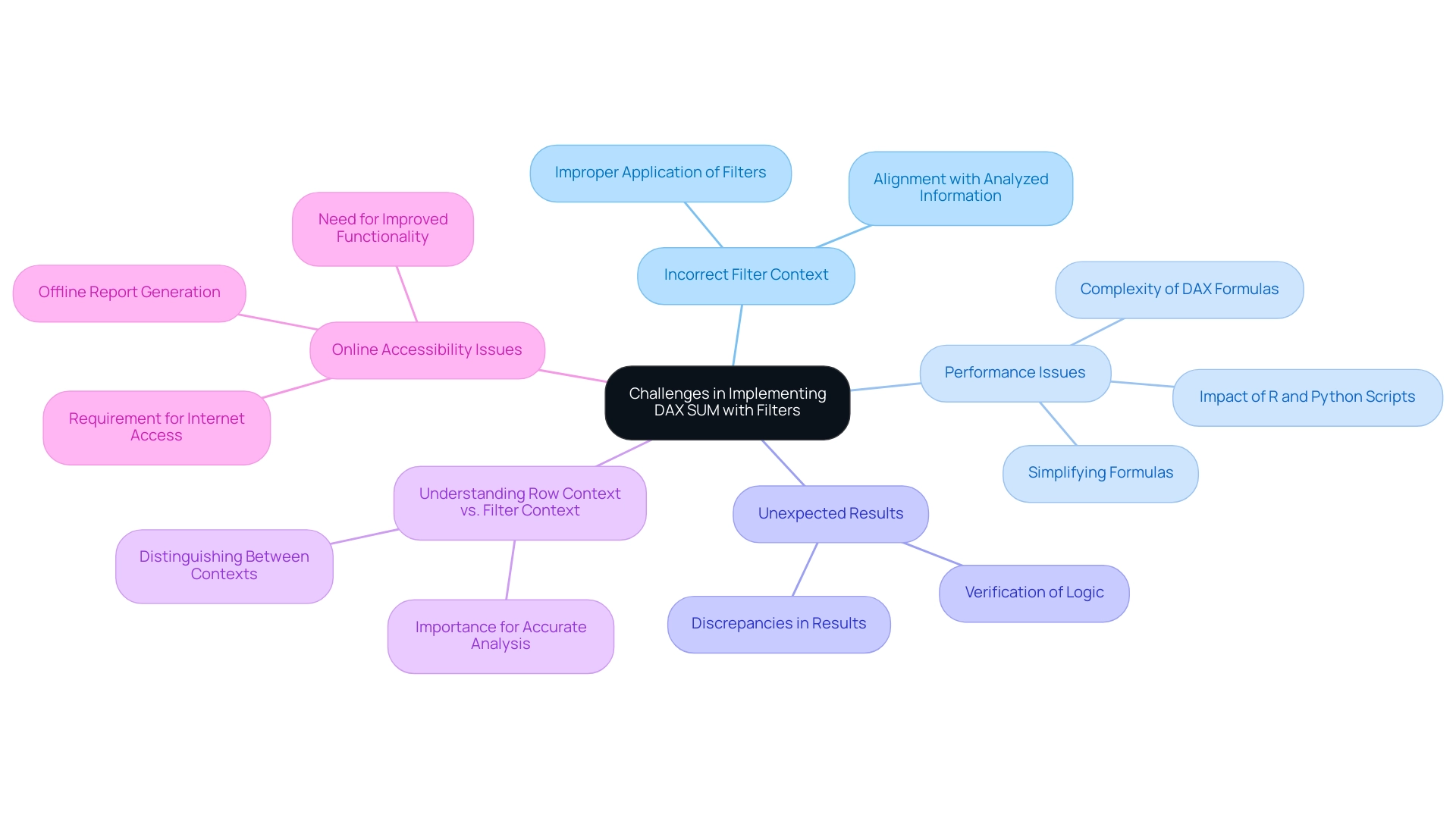

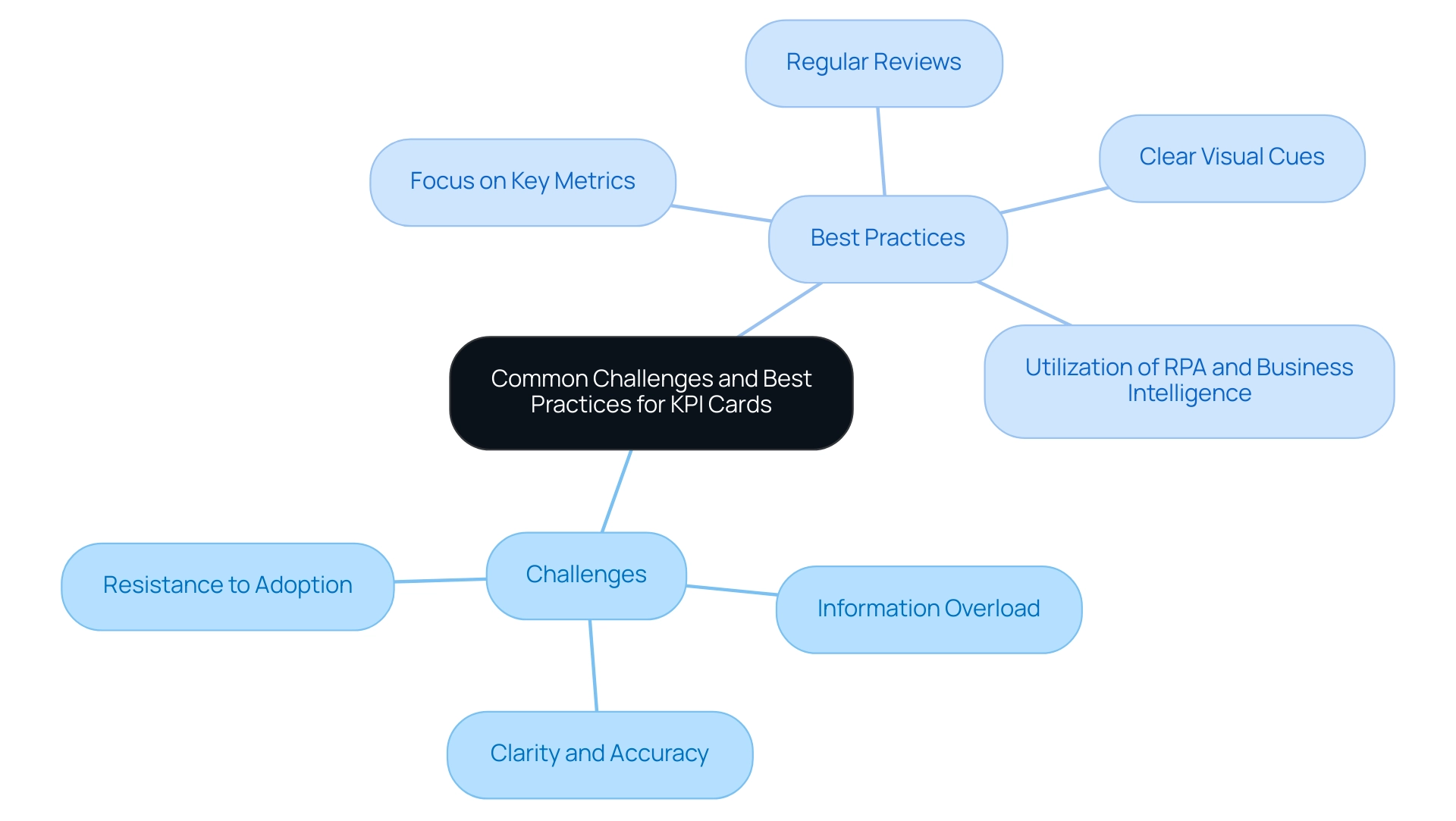

Common Challenges in Implementing DAX SUM with Filters

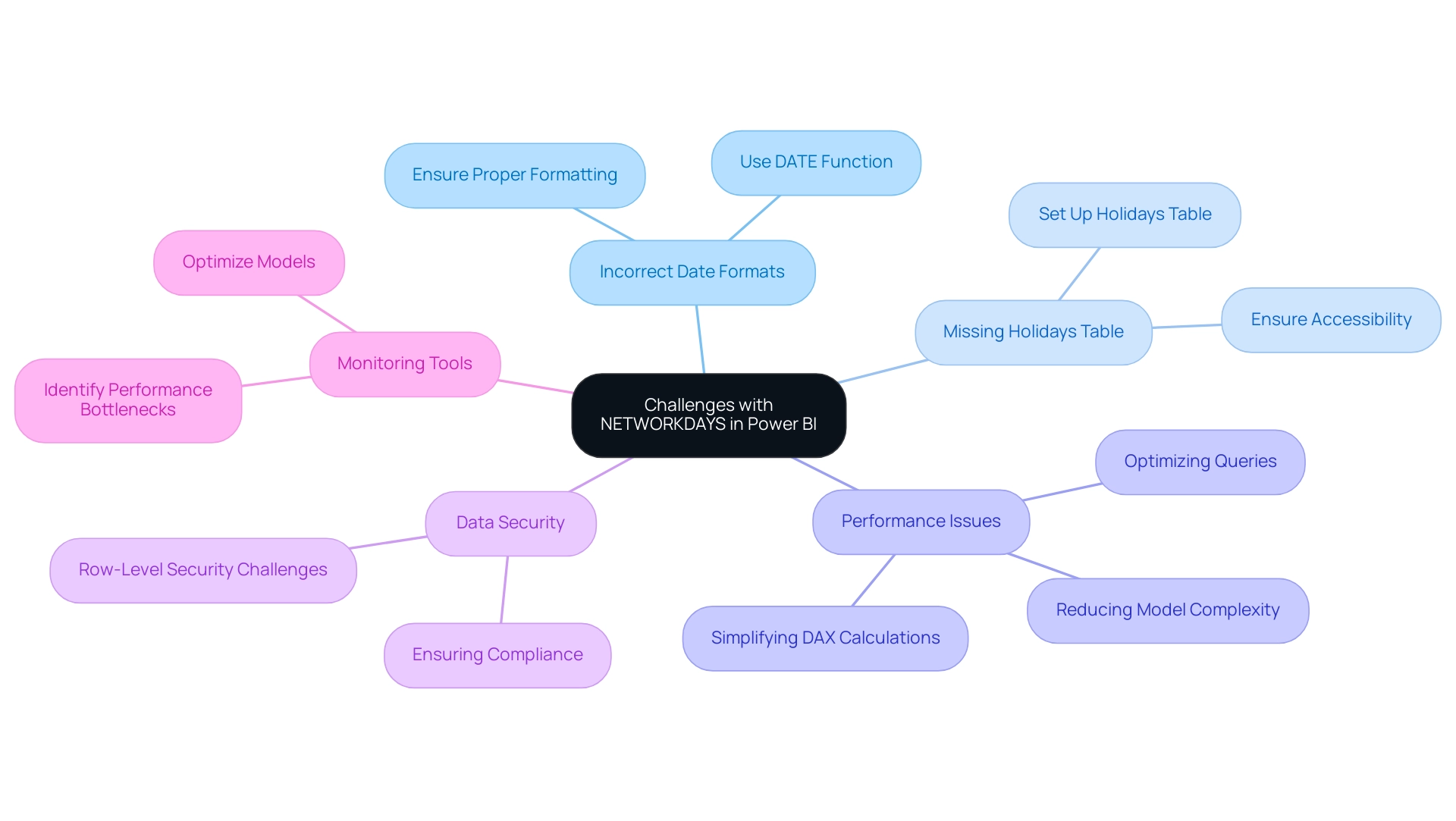

Utilizing DAX SUM in conjunction with filters can significantly enhance your data analysis capabilities. However, it is essential to be aware of several prevalent challenges:

- Incorrect Filter Context: One of the most common pitfalls is the improper application of filters. If the filter context is not accurately established, the calculation may yield unexpected results. To maintain accuracy, ensure that your filters align with the specific data being summed.

- Performance Issues: The complexity of DAX formulas, particularly those incorporating multiple filters, can lead to performance degradation. Statistics indicate that intricate calculations can significantly slow down processing times. Additionally, running R and Python scripts in Power BI can further impact performance due to their resource-intensive nature. To mitigate this, consider simplifying your formulas or utilizing variables to streamline calculations. This approach aligns with the principles of Robotic Process Automation (RPA), which aims to boost operational efficiency by reducing manual, repetitive tasks.

- Unexpected Results: Analysts often encounter discrepancies between expected and actual results, frequently due to overlapping filters or flawed logic within the DAX formula. It is advisable to rigorously verify your logic and conduct tests with sample datasets to ensure that your calculations produce the intended outcomes. This verification process is essential, particularly in the context of utilizing Business Intelligence tools to derive actionable insights from information.

- Understanding Row Context vs. Filter Context: A common source of confusion lies in distinguishing between row context and filter context. Misunderstanding how DAX evaluates expressions in these different contexts can lead to calculation errors. Gaining a solid grasp of these concepts is vital for accurate analysis and avoiding common mistakes, particularly when dealing with poor master data quality that can hinder effective decision-making.

- Online Accessibility Issues: As highlighted in a case study, Power BI requires internet access for its service, which can be problematic for users needing offline access or those with poor connectivity. This limitation can affect the implementation of DAX SUM with filter, leading users to generate reports in PDF or PPT format for offline sharing. Addressing these accessibility challenges is essential for ensuring that your analysis remains robust and reliable.
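The difference between row context and filter context is easiest to see side by side. The sketch below assumes the first two definitions are added as calculated columns on SalesData and the third as a measure; all three names are hypothetical.

```dax
-- Calculated column: a row context exists, but no filter context,
-- so SUM sees the whole table and every row shows the grand total
GrandTotalOnEveryRow = SUM ( SalesData[TotalSales] )

-- Calculated column: CALCULATE turns the current row into a filter
-- (context transition), so the sum covers only rows identical to this one
CurrentRowTotal = CALCULATE ( SUM ( SalesData[TotalSales] ) )

-- Measure: SUMX creates a row context over the rows that are
-- visible in the current filter context and sums the expression
TotalSalesIterated = SUMX ( SalesData, SalesData[TotalSales] )
```

If a calculated column unexpectedly shows the same number on every row, a missing CALCULATE around the aggregation is a likely cause.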

Utpal Kar noted, “This is one of the major Power BI drawbacks as Microsoft has designed Power BI in a very complex manner.” This complexity can worsen the challenges encountered by analysts when utilizing DAX operations. By tackling these challenges proactively, professionals can improve their proficiency in implementing DAX SUM with filter, leading to more reliable and insightful analysis that ultimately supports informed decision-making in their organizations.

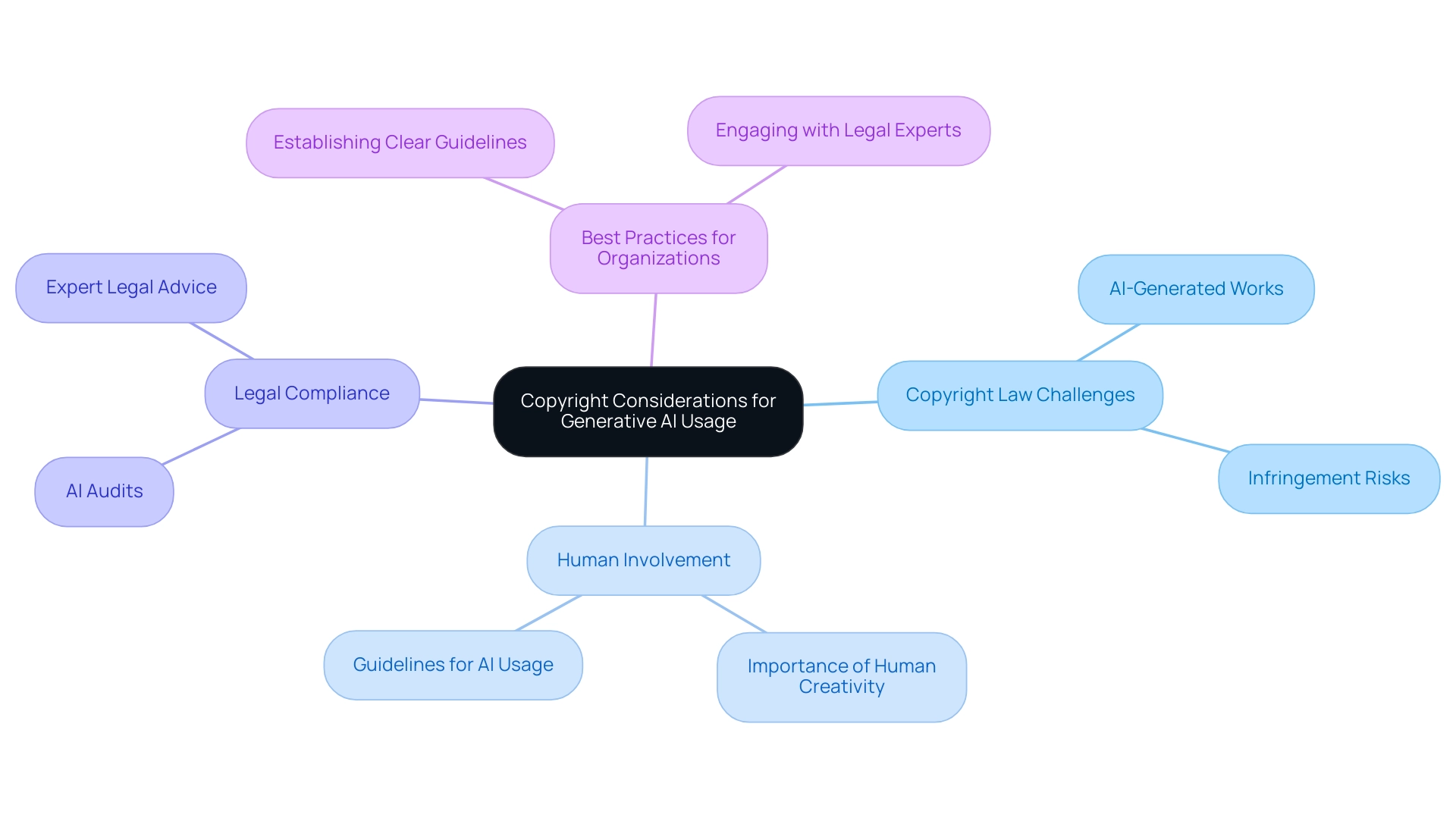

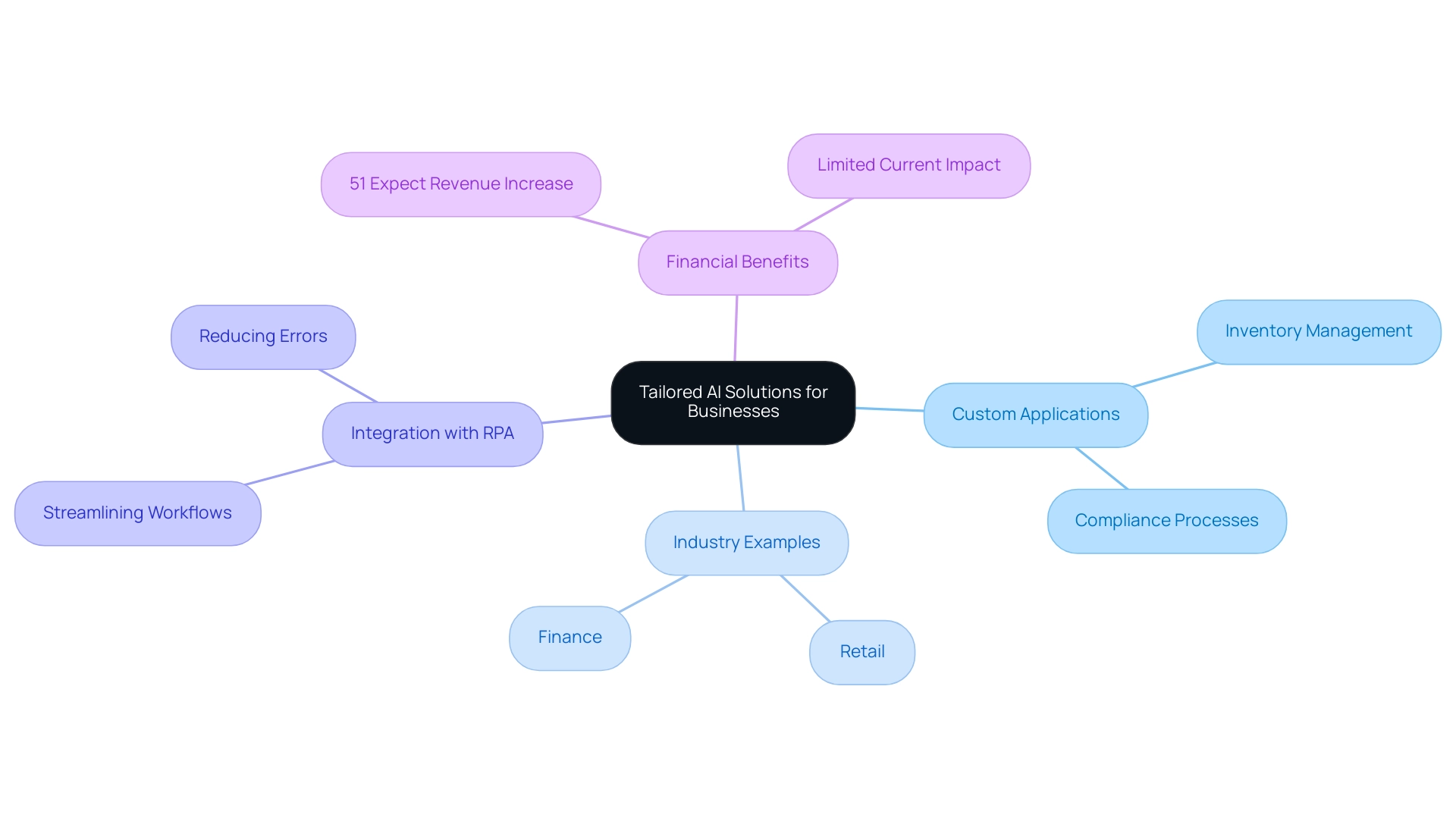

Additionally, tailored AI solutions can play a crucial role in overcoming these challenges by providing targeted technologies that align with specific business goals. Organizations often face hurdles in adopting AI due to concerns about integration and resource allocation. By utilizing AI, companies can optimize their information processes, enhance information quality, and improve the overall efficiency of DAX operations in Power BI.

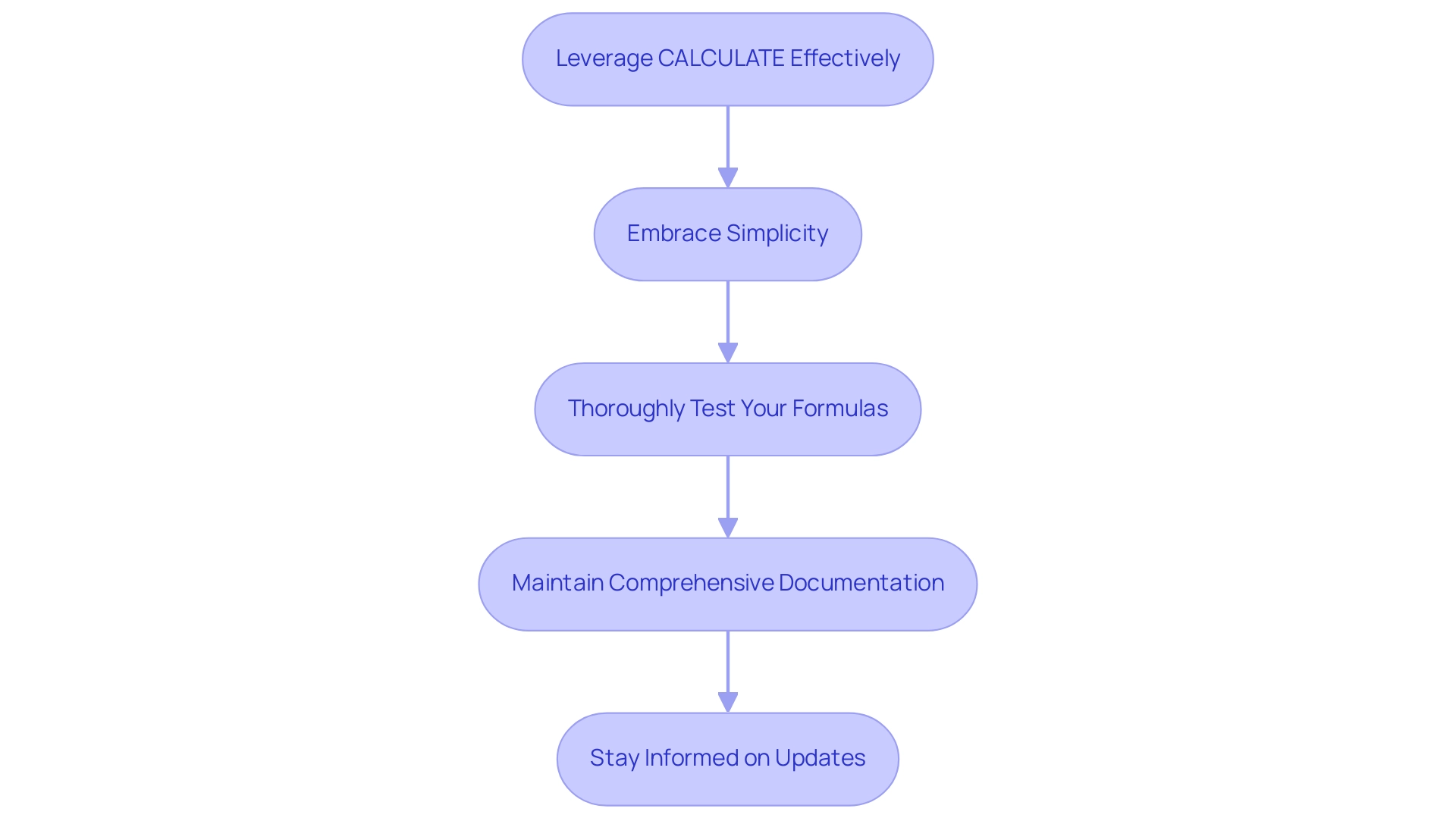

Best Practices for Mastering DAX SUM with Filters

To effectively master DAX sum with filter, consider the following best practices:

- Leverage CALCULATE Effectively: Always encapsulate your SUM operation within CALCULATE when applying filters. This ensures the correct context is applied, allowing for accurate calculations that reflect the intended data scope.

- Embrace Simplicity: Begin with straightforward calculations, progressively introducing complexity as your confidence with DAX grows. This approach fosters a solid foundation and reduces the likelihood of errors.

- Thoroughly Test Your Formulas: Utilize sample datasets to rigorously test your DAX formulas prior to deploying them on larger datasets. This practice helps identify potential errors early. For instance, using the DIVIDE function instead of the division operator avoids division-by-zero errors, because DIVIDE returns BLANK (or an alternate result you specify) when the denominator is zero.

- Maintain Comprehensive Documentation: Keep detailed notes on your DAX formulas and the underlying logic. This documentation serves as a valuable reference for both you and your colleagues, facilitating better understanding and collaboration in future projects.

- Stay Informed on Updates: DAX is an evolving language, with new functions and features regularly introduced. Staying informed about these changes can significantly improve your analysis capabilities, enabling you to leverage the latest advancements in AI-driven insights and analytics. As mentioned in recent conversations, AI capabilities allow real-time insights and improve decision-making by delivering precise and prompt information for strategic outcomes.
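Several of these practices can be combined in a single measure. The following sketch, which assumes the SalesData table and Region column used in this article, wraps SUM in CALCULATE, documents its logic in comments, and uses DIVIDE for a safe ratio. The measure name is illustrative.

```dax
-- Share of total sales contributed by the West region.
-- Numerator: sales restricted to West.
-- Denominator: sales with any Region filter removed.
WestSalesShare =
VAR WestSales =
    CALCULATE ( SUM ( SalesData[TotalSales] ), SalesData[Region] = "West" )
VAR AllRegionSales =
    CALCULATE ( SUM ( SalesData[TotalSales] ), REMOVEFILTERS ( SalesData[Region] ) )
RETURN
    -- DIVIDE returns BLANK instead of an error when the denominator is zero
    DIVIDE ( WestSales, AllRegionSales )
```

Formatting the measure as a percentage in Power BI makes the result easier to read in visuals.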

By following these best practices, you can enhance your DAX skills and ensure that your analysis is both effective and efficient, ultimately driving better decision-making and strategic outcomes. Additionally, leveraging Robotic Process Automation (RPA) from Creatum GmbH can streamline your workflows, reduce errors, and free up your team for more strategic tasks, further enhancing operational efficiency. As Praveen, a Digital Marketing Specialist, emphasizes, mastering these skills is crucial for professionals looking to excel in data-driven environments. Furthermore, addressing challenges in utilizing insights from Power BI dashboards, such as time-consuming report creation and inconsistencies, is essential for maximizing the effectiveness of your analysis efforts.

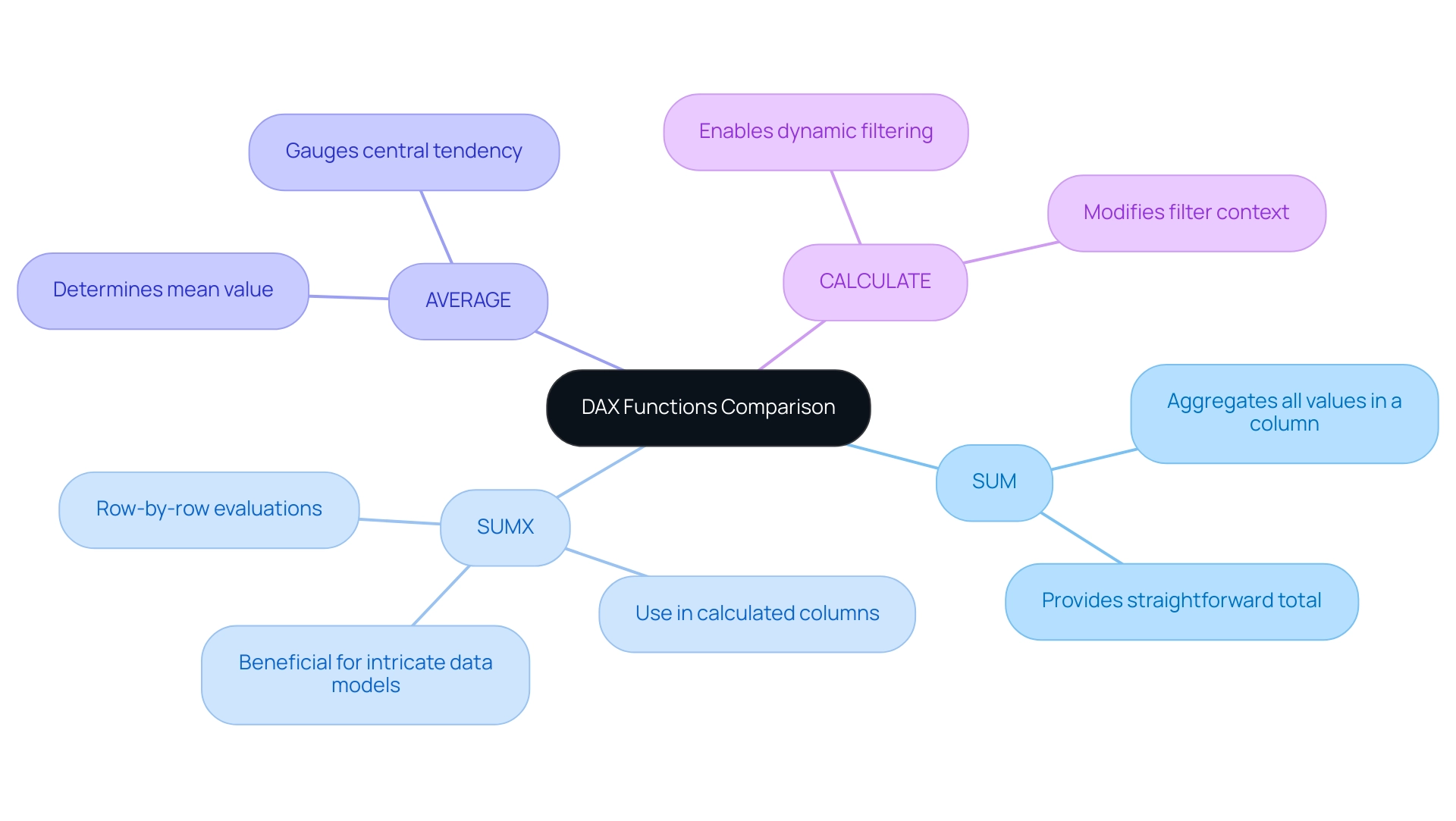

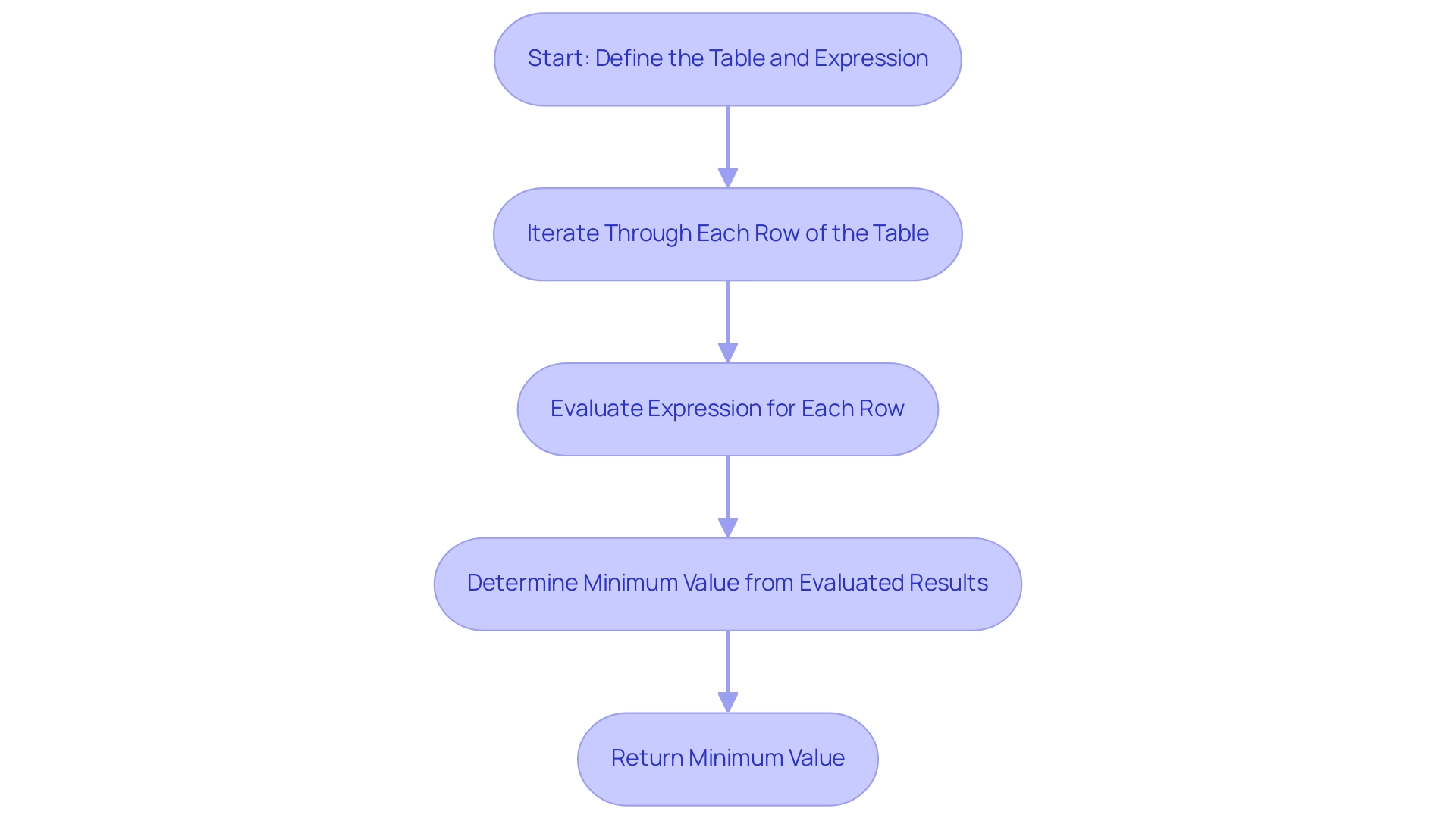

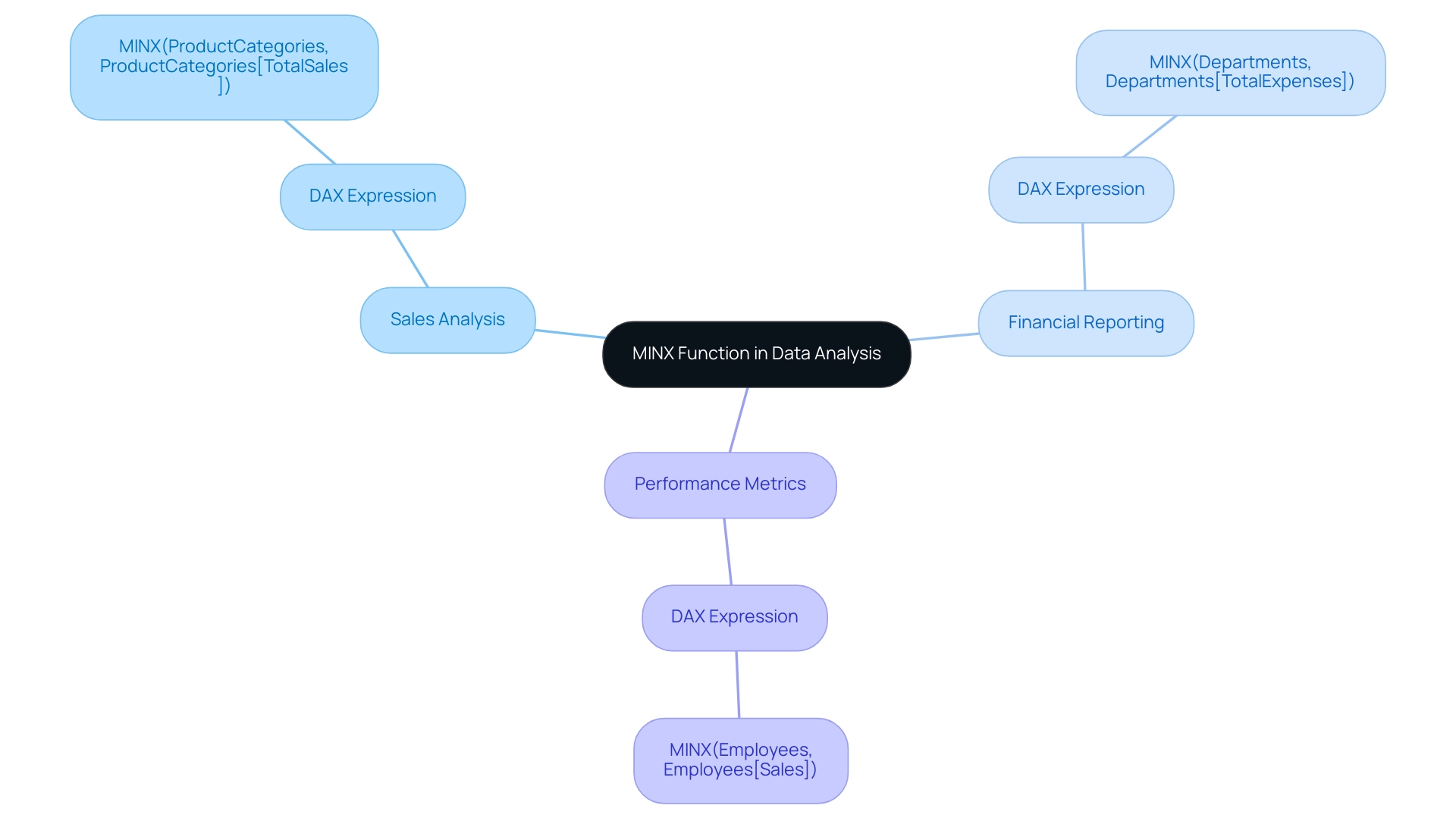

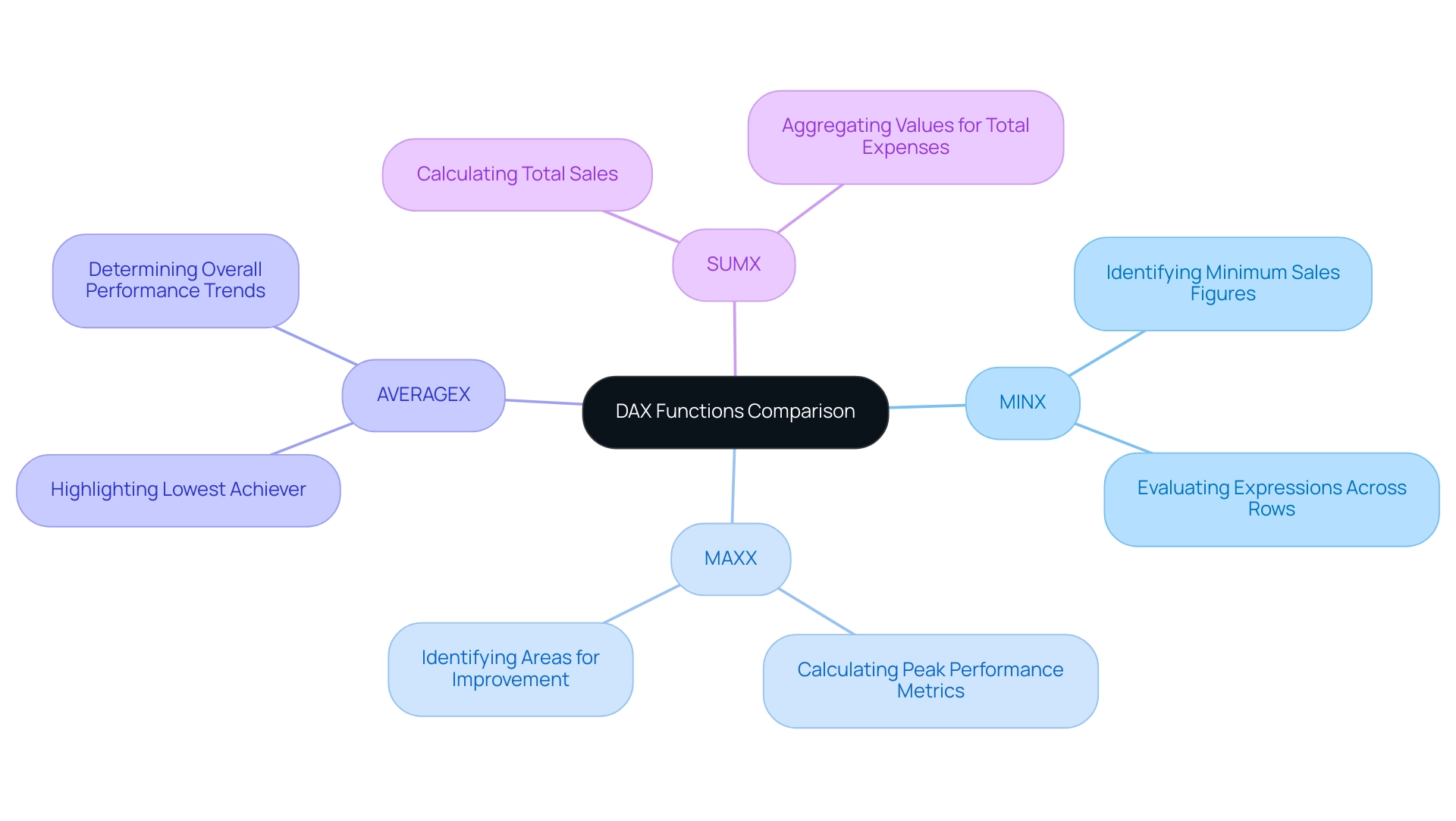

Comparative Analysis: DAX SUM vs. Other DAX Functions

In Power BI, a nuanced comprehension of DAX operations is essential for effective analysis, particularly in a landscape where utilizing Business Intelligence can convert raw information into actionable insights. Here’s a comparative analysis of key DAX operations:

- SUM vs. SUMX: The SUM function aggregates all values in a specified column, providing a straightforward total. In contrast, SUMX evaluates an expression row by row over a table and then sums the results. This makes SUMX especially useful for row-level calculations, such as computing a value per row before totaling it.

- SUM vs. AVERAGE: While SUM provides the total of a column, AVERAGE returns the mean value. AVERAGE is the right choice when you want to gauge the central tendency of your dataset, offering insight into typical values rather than totals.

- SUM vs. CALCULATE: CALCULATE is not an aggregation itself; it modifies the filter context in which an expression such as SUM is evaluated. Wrapping SUM in CALCULATE allows dynamic filtering, enabling users to apply specific conditions to their summation, which can significantly enhance the relevance of the results.
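The comparison above can be sketched as four separate measures. The Quantity and UnitPrice columns are hypothetical and are introduced only to show a row-level expression; the other names follow the SalesData examples in this article.

```dax
-- SUM: total of a single column
SalesTotal = SUM ( SalesData[TotalSales] )

-- AVERAGE: mean of the same column
AverageSale = AVERAGE ( SalesData[TotalSales] )

-- SUMX: evaluates the expression for each row, then sums the results
-- (Quantity and UnitPrice are assumed columns, not from the source)
Revenue = SUMX ( SalesData, SalesData[Quantity] * SalesData[UnitPrice] )

-- CALCULATE: re-evaluates an existing measure under a modified filter context
WestRevenue = CALCULATE ( [Revenue], SalesData[Region] = "West" )
```

SUM accepts only a column reference, so an expression such as quantity times price needs SUMX or a precomputed column.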

Understanding these distinctions is vital for selecting the most appropriate method for your analysis. By leveraging the appropriate DAX functions, you can enhance the accuracy and relevance of your insights, ultimately driving better decision-making in your organization.

Moreover, in today’s information-rich environment, challenges such as time-consuming report creation and inconsistencies can hinder effective analysis. Power BI enables users to tailor their workspace by organizing tools and panels to fit their workflow preferences, which can improve the efficiency of analysis. Best practices in modeling information, such as using the Power Query Editor for preparation and defining relationships between tables, are essential for structuring and organizing content effectively.

A case study on information modeling highlights that effective practices ensure accurate, efficient, and understandable structures, enabling better insights and decision-making.

As Paul Turley, a Microsoft Data Platform MVP, notes, “The term ‘architecture’ is more commonly used in the realm of engineering and warehouse project work, but the concept applies to BI and analytic reporting projects of all sizes.” This highlights the significance of a well-organized method for analysis, particularly when employing DAX capabilities, to address the common challenges of report creation and to offer clear, actionable guidance for stakeholders.

Moreover, incorporating Robotic Process Automation (RPA) solutions, such as EMMA RPA, can further boost operational efficiency by automating repetitive tasks and enabling teams to concentrate on strategic analysis instead of time-consuming report generation. Failing to extract meaningful insights from your information can place your organization at a competitive disadvantage. To learn more about how RPA can complement your BI efforts, consider booking a consultation with Creatum GmbH.

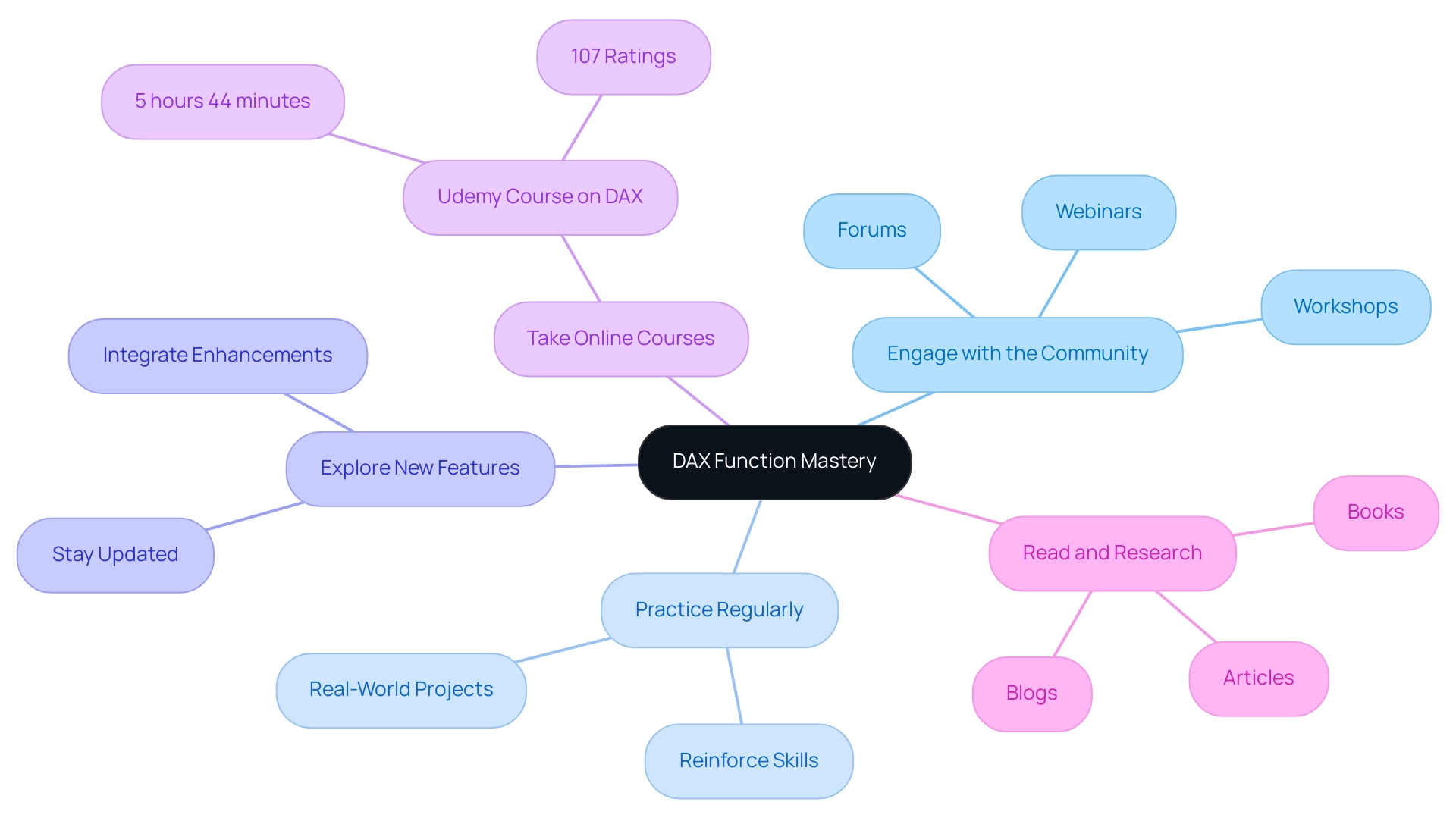

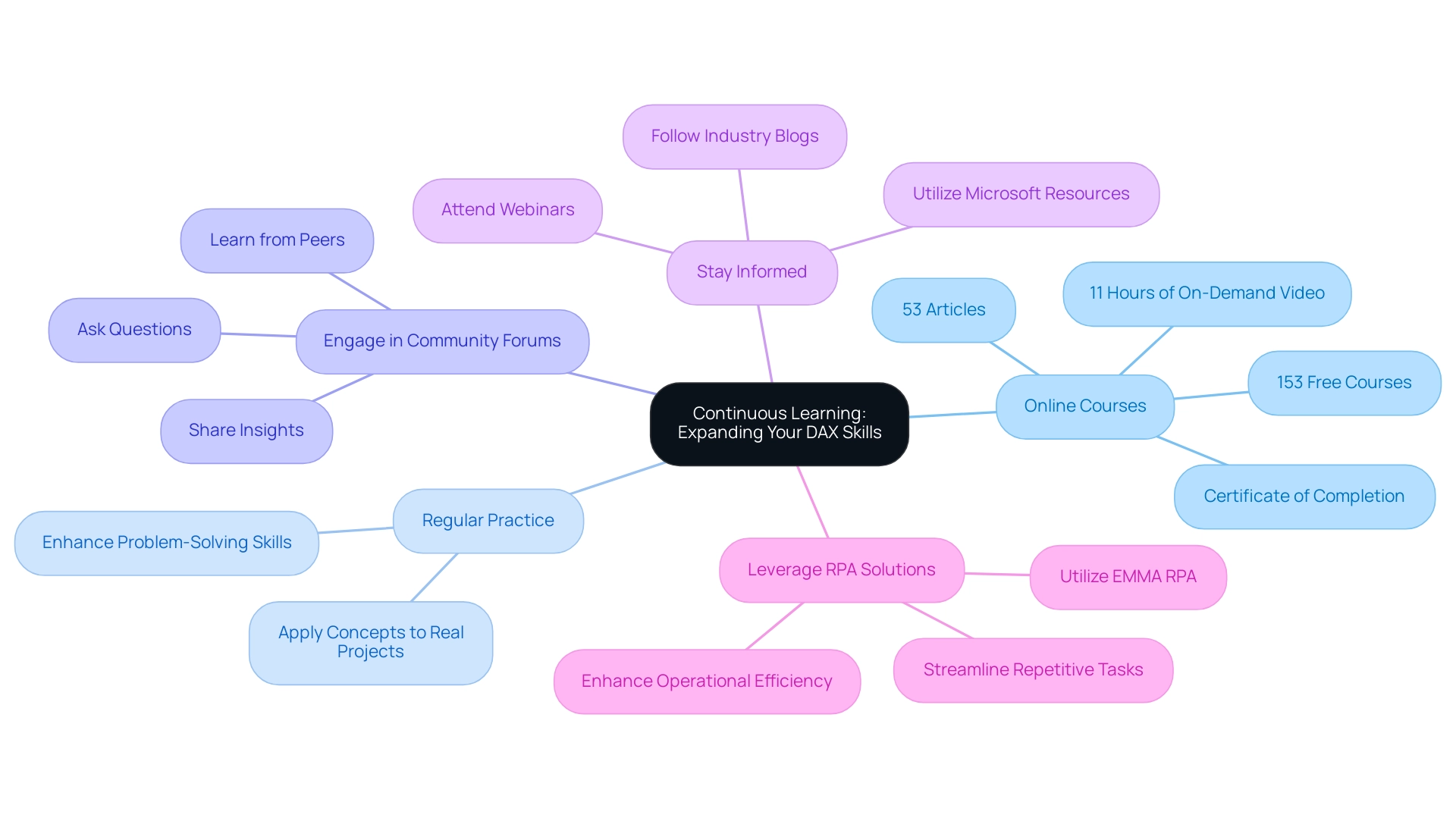

Continuous Learning: Staying Ahead in DAX Function Mastery

To excel in mastering DAX functions and overcoming common challenges in leveraging insights from Power BI dashboards, professionals should adopt the following strategies:

- Engage with the Community: Actively participating in forums, webinars, and workshops fosters a collaborative learning environment. Interacting with colleagues boosts understanding and offers opportunities to exchange insights and effective methods, assisting in addressing issues such as inconsistencies while enhancing the clarity of actionable guidance.

- Practice Regularly: Mastery of DAX is achieved through consistent practice. Tackling real-world projects allows individuals to apply theoretical knowledge, reinforcing their skills and understanding while addressing the time-consuming nature of report creation.

- Explore New Features: Staying updated on the latest enhancements in Power BI and DAX is crucial. New functions can significantly enhance analytical capabilities, making it essential to integrate these advancements into daily workflows to streamline reporting processes and improve governance.

- Take Online Courses: Investing in online courses, such as the comprehensive 5-hour 44-minute course on optimizing DAX code available on platforms like Udemy, can deepen understanding and skills. With over 107 ratings, such courses are designed to enhance performance and maintainability in DAX, making them a valuable resource for professionals seeking to improve their DAX skills and address the lack of actionable insights in their reports.

- Read and Research: Continuous learning is vital. Consistently reading articles, blogs, and books on DAX and analysis helps professionals stay updated on the latest trends and techniques, broadening their knowledge base and allowing them to produce more effective reports.

Furthermore, implementing a robust governance strategy is essential to tackle inconsistencies, ensuring that all reports are accurate and reliable. Statistics indicate that organizations with active community engagement in DAX learning see a marked improvement in their analytical capabilities. For instance, dashboards and reports viewed in the past 90 days reflect the growing interest and utilization of DAX across various sectors.

As Bruno, a data consultant, aptly puts it, “Well there you have it, 5 years later and even more reasons to deep dive into DAX.” Case studies demonstrate that DAX not only enhances reporting and analytics but also empowers professionals, including business analysts and finance experts, to unlock the full potential of Power BI. The case study titled “DAX in Power BI” illustrates how DAX enhances reporting and analytics capabilities, reinforcing the practical benefits of mastering DAX for various professionals.

By embracing these strategies, individuals can ensure they remain at the forefront of DAX mastery, supported by the expertise of Creatum GmbH.

Conclusion

Mastering the DAX SUM function is essential for any data analyst aiming to derive meaningful insights from their datasets. This article has explored the foundational role of the SUM function in Power BI, emphasizing its straightforward syntax and significance in effective data aggregation. The inclusion of filters, particularly through the CALCULATE function, enhances calculation accuracy and allows for nuanced data analysis. By employing practical applications, such as sales performance analysis and year-over-year comparisons, analysts can leverage DAX SUM to drive informed decision-making and foster business growth.

However, challenges such as incorrect filter context, performance issues, and the distinctions between row and filter context can hinder effective implementation. By adhering to best practices—such as leveraging CALCULATE appropriately and maintaining thorough documentation—data professionals can overcome these obstacles and enhance their analytical capabilities. Continuous learning through community engagement and online courses further empowers analysts to stay ahead in the ever-evolving landscape of data analysis.

In conclusion, the DAX SUM function, when utilized effectively alongside filters, serves as a powerful tool for transforming raw data into actionable insights. As businesses increasingly rely on data-driven strategies, mastering DAX functions becomes not just beneficial but essential for achieving operational efficiency and fostering strategic growth. Embracing these techniques will undoubtedly place organizations on a path toward success in today’s competitive environment.

Frequently Asked Questions

What is the DAX SUM formula used for?

The DAX SUM formula is used for aggregating numerical data within a specified column, allowing users to perform basic aggregations in Power BI.

What is the syntax for the DAX SUM formula?

The syntax for the DAX SUM formula is SUM(<column>). For example, to calculate total sales from a column named ‘Sales’ in a table named ‘SalesData’, the formula would be SUM(SalesData[Sales]).

How prevalent is the DAX SUM function among analysts as of 2025?

Approximately 75% of professionals rely on the DAX SUM function for aggregation tasks, highlighting its significance in Business Intelligence.

How can DAX SUM be enhanced with filters?

DAX SUM can be enhanced with filters using the CALCULATE function, which allows for precise calculations tailored to specific criteria. For example, TotalSalesWest = CALCULATE(SUM(SalesData[TotalSales]), SalesData[Region] = "West") calculates total sales for the ‘West’ region.

Why is mastering DAX SUM with filters important?

Mastering DAX SUM with filters is crucial for effective DAX analysis, as it helps refine the information processed and improves the reliability of insights obtained from analysis.

What techniques can improve the performance of DAX calculations?

Techniques such as simplifying DAX calculations and using functions like ALL, REMOVEFILTERS, and KEEPFILTERS can lead to substantial performance improvements in Power BI reports.

What role does the CALCULATE function play in DAX?

The CALCULATE function is a cornerstone of effective DAX usage, particularly for nuanced information analysis, as it allows users to manipulate filter context and enhance calculation accuracy.

How can filters be applied in Power BI visuals?

Filters can be applied through slicers in Power BI visuals or directly within DAX formulas, allowing for focused analysis and ensuring the information examined is relevant and actionable.

What support does Creatum GmbH offer for DAX functions?

Creatum GmbH offers RPA solutions that can enhance DAX functions by automating repetitive tasks, improving efficiency, and supporting better information management and insight generation.

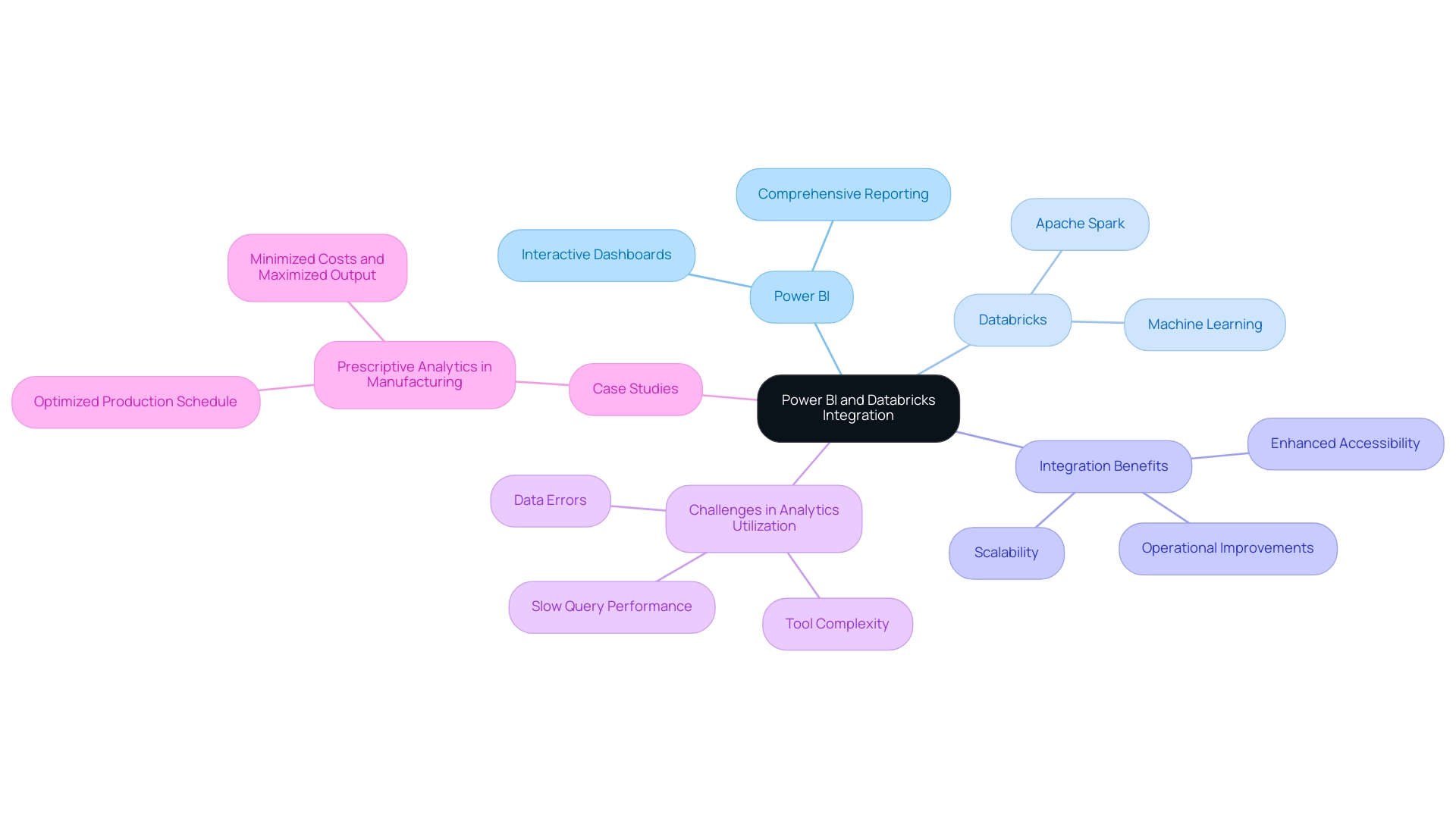

Overview

The article titled “Connecting Power BI Databricks: A Comprehensive Step-by-Step Guide” serves as an essential resource for integrating Power BI with Databricks, aiming to elevate business intelligence capabilities. It begins by outlining the necessary prerequisites, capturing the reader’s attention with the promise of enhanced data processing and visualization.

Following this, the guide presents detailed setup instructions, fostering interest by breaking down complex processes into manageable steps. Best practices for creating visualizations and managing data refreshes are also highlighted, generating desire for effective integration that can significantly improve operational efficiency within organizations.

In conclusion, this comprehensive guide not only equips professionals with actionable insights but also encourages them to reflect on their current practices, prompting immediate action towards optimizing their data strategies.

Introduction

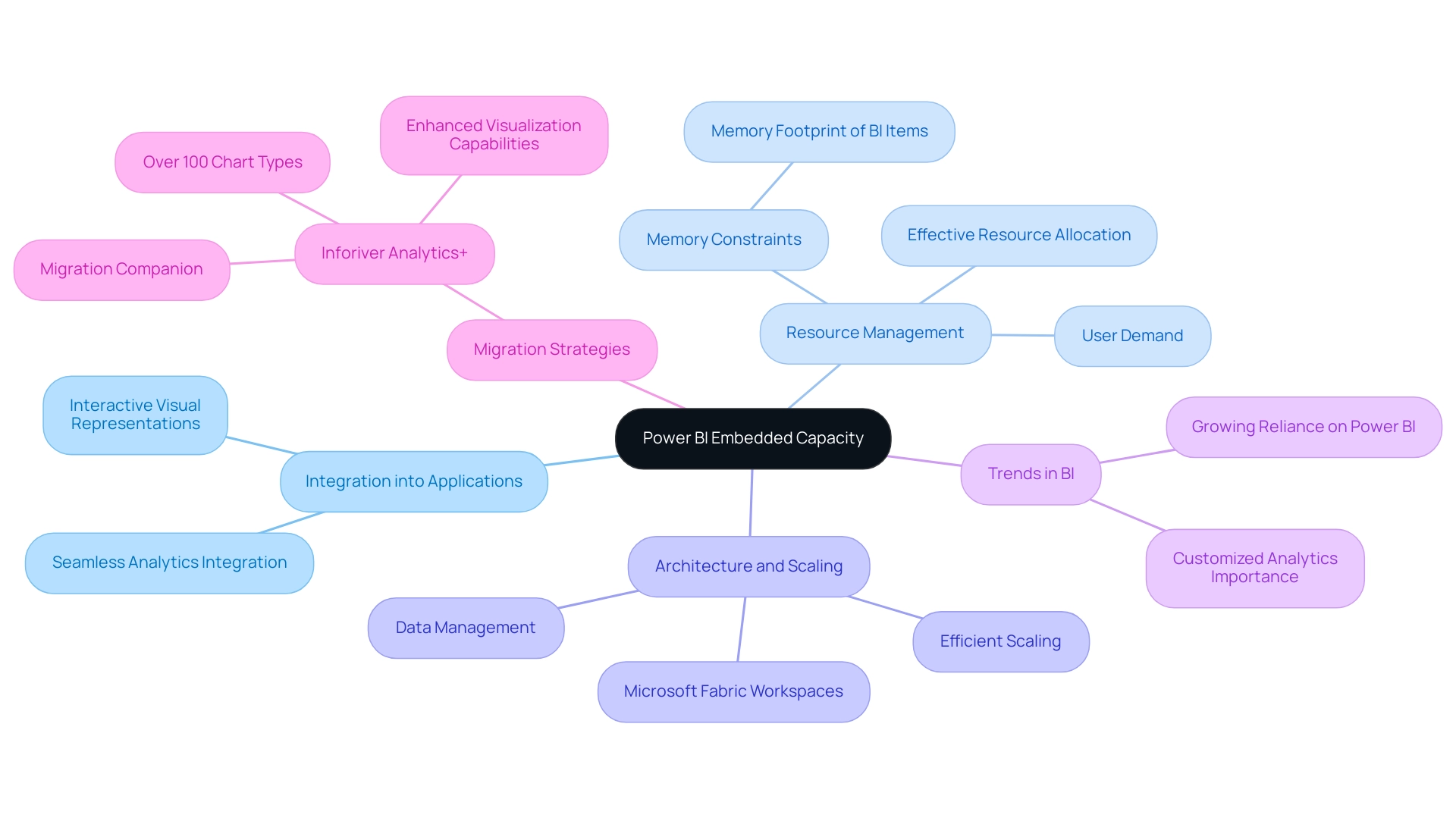

In the dynamic landscape of data analytics, organizations are increasingly turning to powerful tools like Power BI and Databricks to unlock the full potential of their data. Power BI, renowned for its robust visualization capabilities and interactive dashboards, empowers users to derive insights and make informed decisions. Meanwhile, Databricks serves as a cutting-edge analytics platform that harnesses the power of big data and machine learning, enabling organizations to process vast datasets seamlessly. Together, these tools create a formidable synergy, enhancing data accessibility and performance while driving strategic growth in an ever-evolving digital marketplace. As businesses strive to remain competitive, understanding the integration of Power BI with Databricks is essential for transforming raw data into actionable insights that propel innovation and operational efficiency.

Understanding Power BI and Databricks: Key Features and Benefits

Power BI distinguishes itself as a robust business analytics tool, empowering users to visualize information and share insights across their organizations. With interactive dashboards and comprehensive reporting capabilities, Power BI facilitates informed decision-making. In contrast, Databricks serves as an advanced analytics platform tailored for large-scale data and machine learning, leveraging the capabilities of Apache Spark.

The integration of BI tools and analytics platforms allows organizations to effectively manage extensive datasets and create significant visual representations.

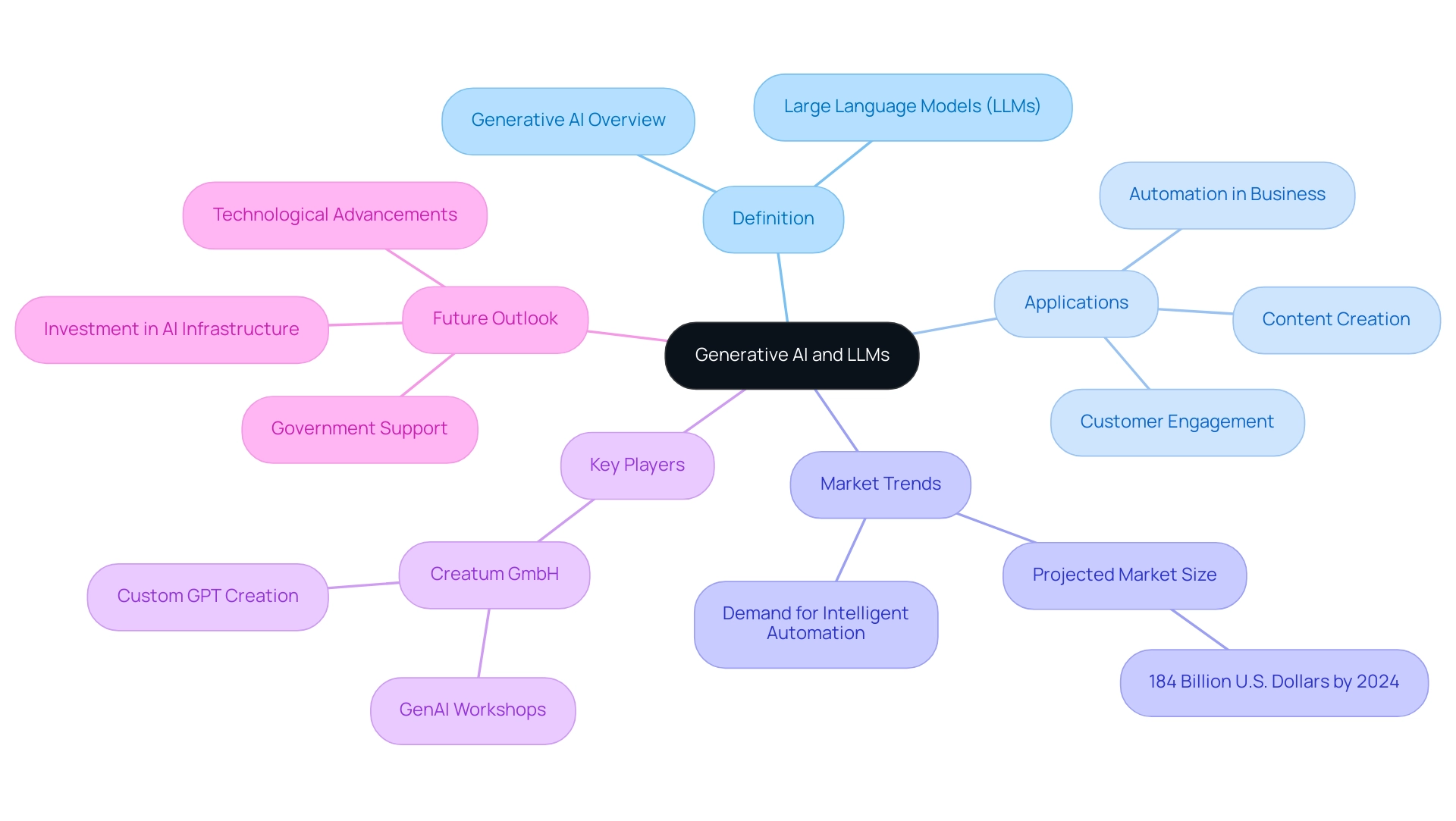

Integrating Power BI with Databricks significantly enhances data accessibility, scalability, and performance. This synergy empowers users to extract deeper insights from their information, driving informed strategies and operational improvements. As the social business intelligence market is projected to reach approximately $25.9 billion by 2025, the adoption of tools like Power BI and Databricks is becoming increasingly essential for entities striving to remain competitive.

However, despite initiatives for self-service tools and modern information platforms, many companies encounter challenges in effectively utilizing analytics due to issues such as tool complexity, slow query performance, and errors. As Tajammul Pangarkar, CMO at Prudour Pvt Ltd, observes, “The effective use of analytics tools is essential for organizations to fully leverage their information assets.” This is where our BI services come into play, offering a 3-Day Sprint for rapid report creation and a General Management App for comprehensive management and smart reviews, ensuring efficient reporting and information consistency.

Recent updates to the Databricks analytics platform further enhance its capabilities, providing features that streamline data processing and analytics workflows. These enhancements, combined with the intuitive interface of Power BI, create a powerful ecosystem for data-driven decision-making. Real-world applications illustrate that entities leveraging this integration can achieve significant operational efficiencies, as evidenced by case studies in sectors like manufacturing, where prescriptive analytics has optimized production schedules and minimized costs.

Ultimately, as companies navigate the complexities of analytics, the partnership between Power BI and Databricks emerges as a strategic advantage, enabling organizations to harness the full potential of their assets and drive growth and innovation. Furthermore, utilizing AI through Small Language Models and GenAI Workshops can enhance information quality and training, while automation provides streamlined workflow management, ensuring a risk-free ROI evaluation and professional execution.

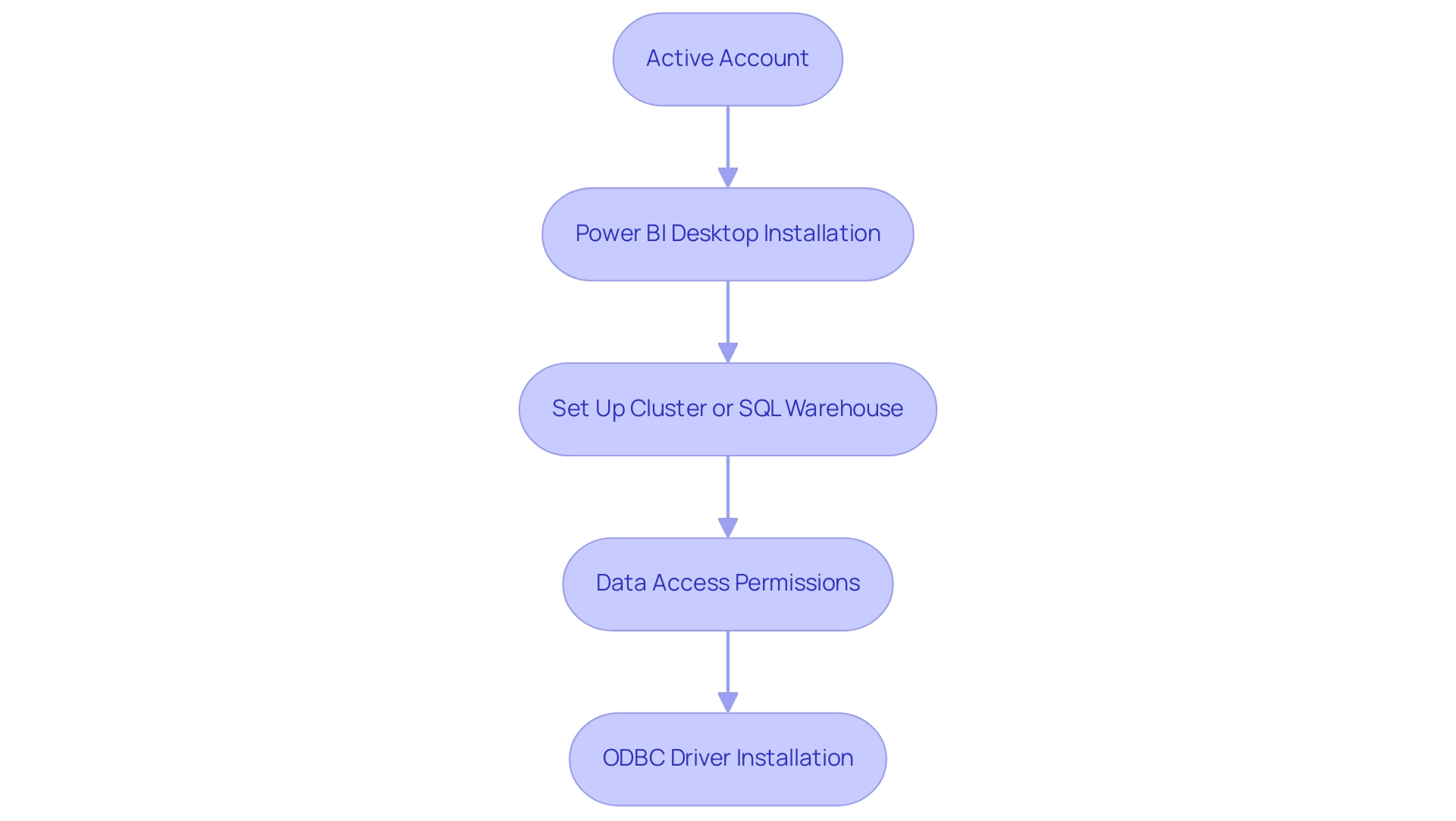

Prerequisites for Connecting Power BI with Databricks

To successfully connect Power BI to Databricks, it is essential to meet the following prerequisites:

- Active Databricks account: Ensure you have a current Databricks account with access to a workspace, as this is required for any data operations.

- Power BI Desktop: Install the latest version of Power BI Desktop on your machine to leverage its full capabilities for visualization and reporting.

- Set Up Cluster or SQL Warehouse: A properly set up cluster or SQL warehouse is essential to enable information processing and querying.

- Data Access Permissions: Confirm that you have the required authorizations to access the information stored in the platform, as this will facilitate smooth integration of the information.

- ODBC Driver: If you intend to use ODBC for the connection, ensure that the latest version of the ODBC driver is installed on your system.

In 2025, many organizations are using Power BI with Databricks, reflecting the growing trend towards integrated data solutions. Successful implementations have demonstrated that aligning the baseline table schema with the monitored table (excluding the timestamp column in time series or inference profiles) can enhance data accuracy and reporting efficiency.

As Pubudu Dewagama observed, “In today’s information-driven environment, businesses seek powerful solutions to harness the full potential of their resources.” By adhering to these prerequisites, organizations can streamline the connection process, thereby overcoming common challenges such as time-consuming report creation and inconsistencies. This enables them to convert raw information into actionable insights, driving informed decision-making and fostering innovation.

Additionally, integrating Robotic Process Automation (RPA) can further streamline the integration process, enhancing operational efficiency by automating repetitive tasks related to handling information. Customized AI solutions can also help in tackling particular challenges associated with information integration and reporting, ensuring that entities can effectively utilize their assets. The case study titled “Business Intelligence Empowerment” illustrates how the organization enables businesses to extract meaningful insights, further linking the connection process to the broader goal of informed decision-making and innovation.

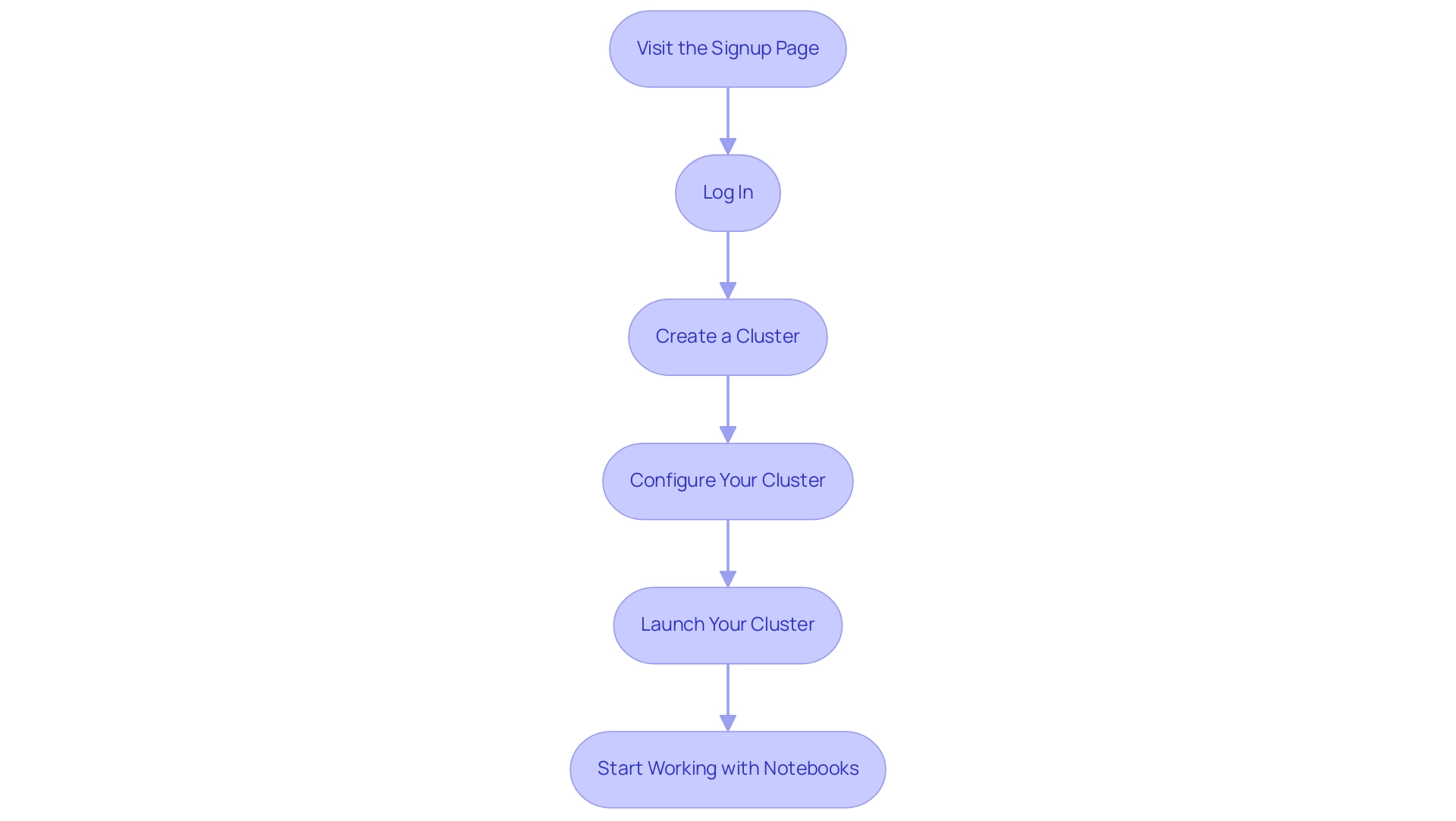

Setting Up Databricks Community Edition: A Step-by-Step Guide

Setting up Databricks Community Edition is a straightforward process that can significantly enhance your data analytics capabilities when working with Power BI and Databricks. Follow these steps to get started:

- Visit the Signup Page: Go to the Community Edition signup page and create your account. This initial step is crucial for accessing the platform’s features.

- Log In: Once registered, log in to your Databricks workspace to begin your journey.

- Create a Cluster: Navigate to the ‘Compute’ section in the sidebar and click on ‘Create Compute’. This is where you will configure the environment for your processing tasks.

- Configure Your Cluster: Name your cluster and select the default settings, typically optimized for Community Edition. This ensures that you are leveraging the best configurations for your needs.

- Launch Your Cluster: Click ‘Create Compute’ to launch your cluster. This step initiates the resources necessary for executing your tasks.

- Start Working with Notebooks: After your cluster is operational, you can begin creating notebooks and importing information. Notebooks are essential for interactive coding and documentation, allowing you to visualize and analyze your information effectively.

As of 2025, the growth rate of Community Edition users has been impressive, reflecting its increasing popularity among analytics professionals. Notably, Delta Cache ensures faster read speeds for processing remote storage data, enhancing the efficiency of your analytics tasks in Power BI Databricks.

Core concepts of the platform, such as clusters for executing code, jobs for automating tasks, and notebooks for interactive coding and documentation, can be effectively utilized with Power BI Databricks. Understanding these elements is crucial for maximizing your use of the platform.

Additionally, Spark metric charts available in the compute metrics UI provide insights into server load distribution, active tasks, and overall cluster performance, which are vital for monitoring your resources effectively.

Real-world examples, such as Airbnb’s implementation of advanced analytics infrastructure using StarRocks, showcase the transformative potential of this technology in driving data-driven decision-making. As Allan Ouko states, “Discover how to utilize this SQL platform for effective analytics, querying, and business intelligence with practical examples and best practices.”

By following this setup guide, you can harness the potential of Community Edition to enhance your analytics capabilities.

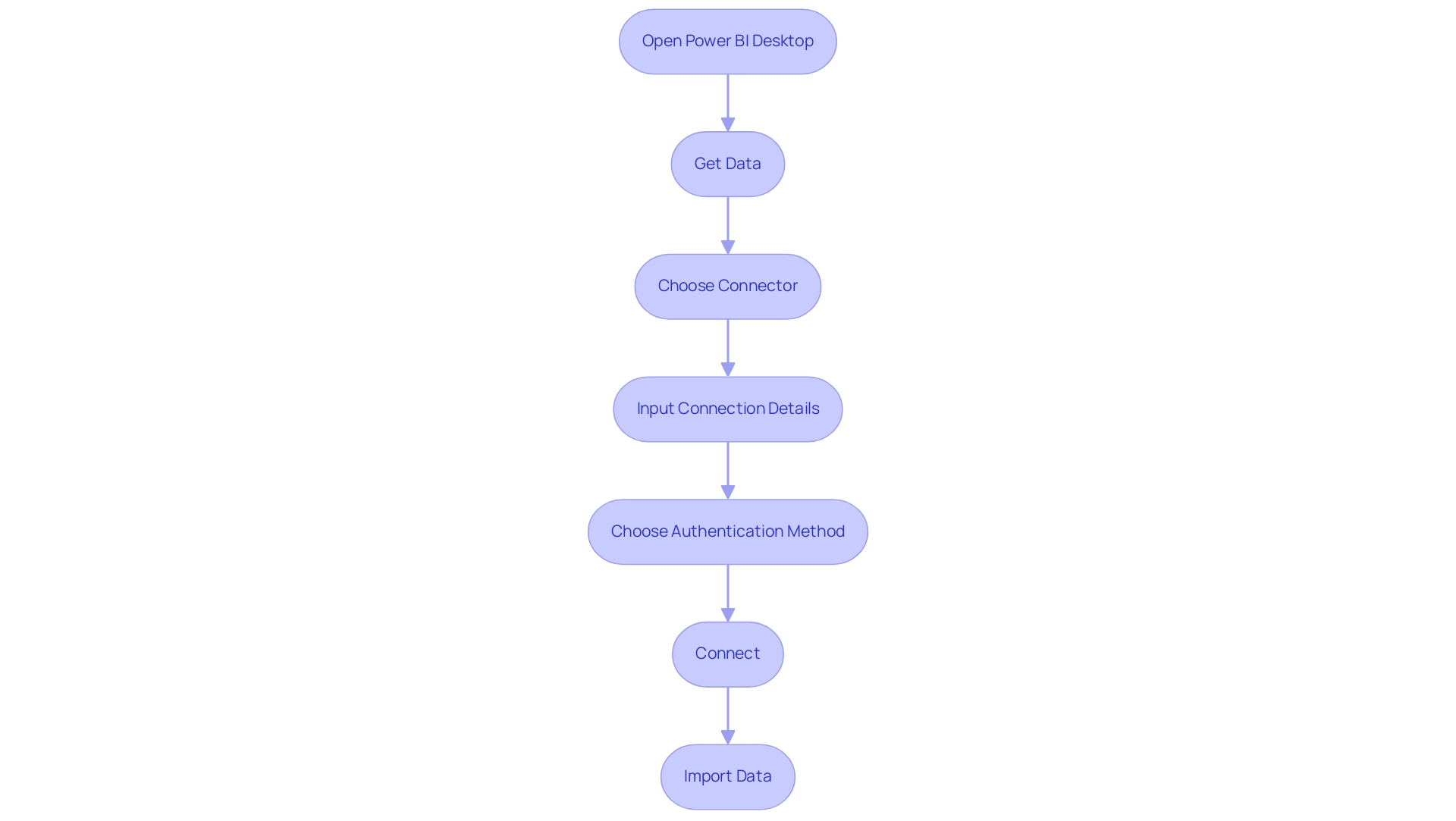

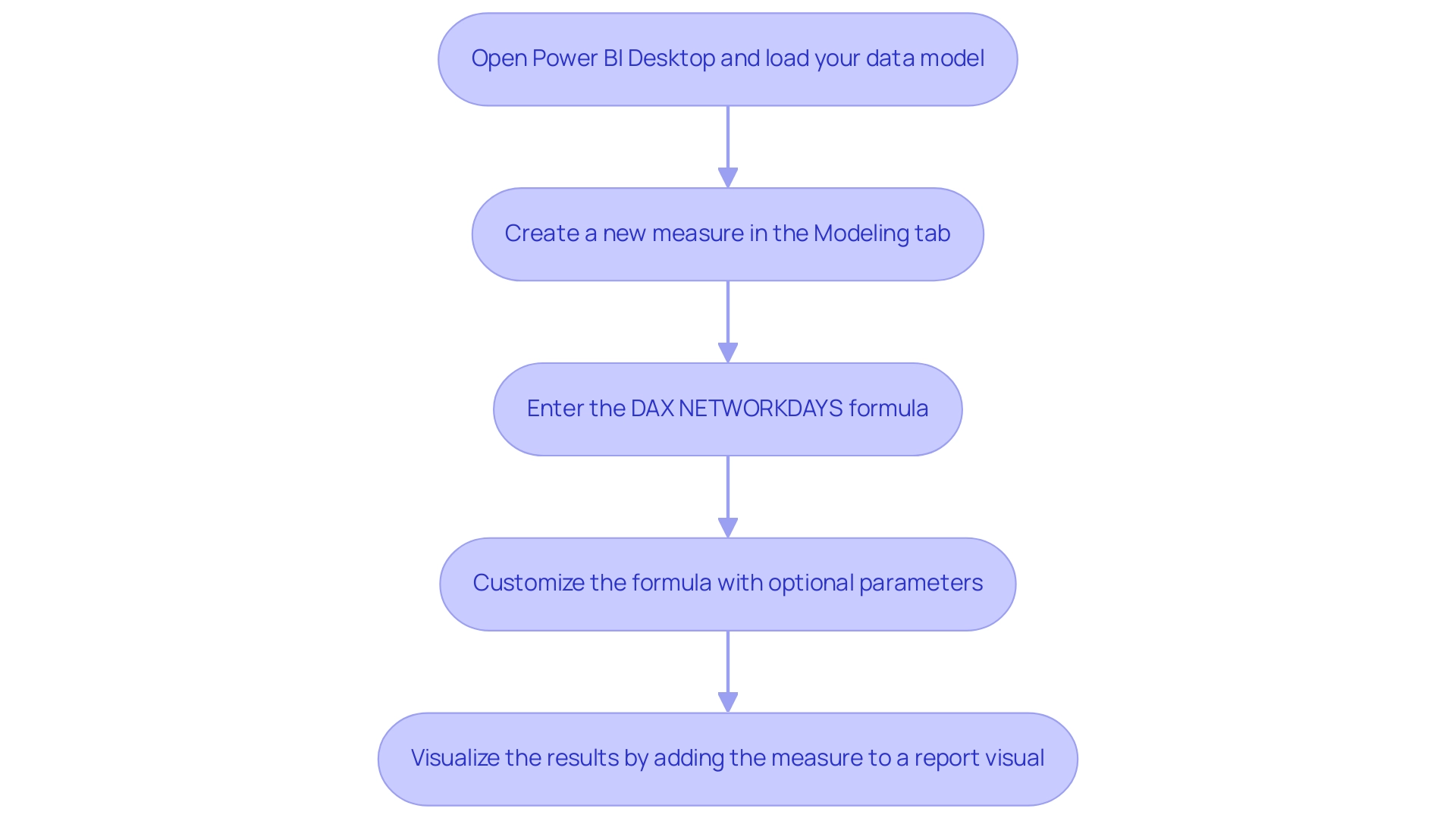

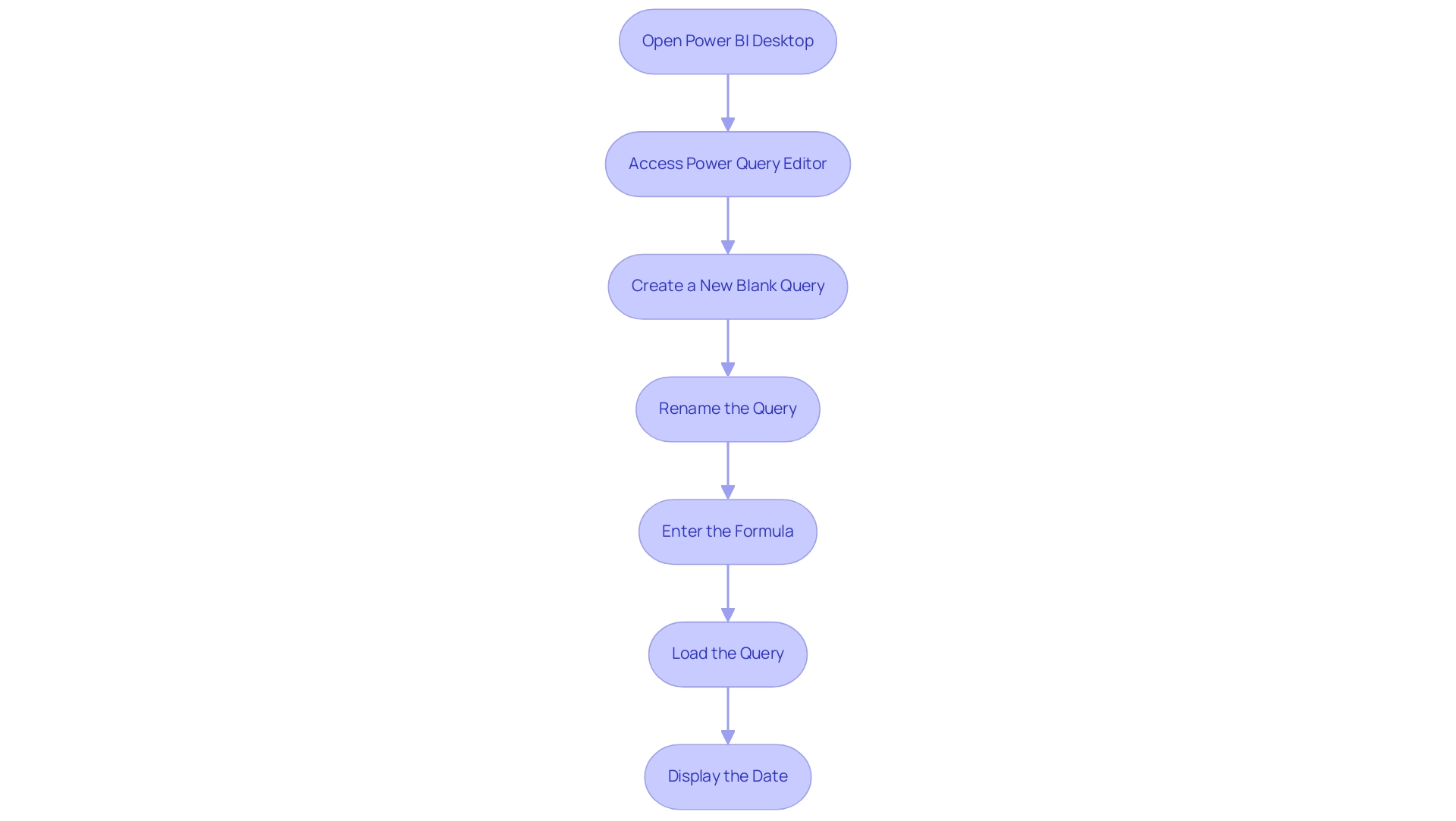

Connecting Databricks to Power BI: Step-by-Step Instructions

Connecting Databricks to Power BI is a straightforward process that can significantly enhance your data visualization capabilities and drive operational efficiency. Follow these detailed steps to establish a successful connection:

- Open Power BI Desktop: Launch the application and navigate to the main interface.

- Get Data: Click on the ‘Get Data’ option located in the Home ribbon.

- Choose Connector: In the ‘Get Data’ window, type ‘Databricks’ in the search bar and choose the connector from the list.

- Input Connection Details: Enter the Server Hostname and HTTP Path from your workspace. These details are crucial for establishing a secure connection.

- Choose Authentication Method: Select your preferred authentication method, such as Azure Active Directory or Personal Access Token, to ensure secure access to your data.

- Connect: Click ‘Connect’ to initiate the connection process.

- Import Data: Once connected, you will be presented with a list of tables and views available in your environment. Select the ones you wish to import into Power BI.
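Many connection failures come down to a mistyped Server Hostname or HTTP Path, so it can help to sanity-check both values before pasting them into the connector dialog. The following is a minimal sketch, not an official validation routine: the function name `check_connection_details` is hypothetical, and the two path patterns are assumptions based on commonly seen SQL warehouse and cluster paths.

```python
import re

# Assumed shapes of typical HTTP Path values; illustrative, not an official specification.
HTTP_PATH_PATTERNS = (
    re.compile(r"^/?sql/1\.0/(warehouses|endpoints)/[\w-]+$"),  # SQL warehouse style path
    re.compile(r"^/?sql/protocolv1/o/\d+/[\w-]+$"),             # cluster style path
)


def check_connection_details(server_hostname: str, http_path: str) -> list[str]:
    """Return a list of likely problems; an empty list means nothing obvious is wrong."""
    problems = []
    host = server_hostname.strip()
    if "://" in host:
        problems.append("Server Hostname should not include a scheme such as https://")
        host = host.split("://", 1)[1]
    if "/" in host:
        problems.append("Server Hostname should not include a path or trailing slash")
    if "." not in host:
        problems.append("Server Hostname does not look like a fully qualified host name")
    if not any(p.match(http_path.strip()) for p in HTTP_PATH_PATTERNS):
        problems.append("HTTP Path does not match a known warehouse or cluster pattern")
    return problems


# Example values are made up for illustration.
ok = check_connection_details(
    "adb-1234567890123456.7.azuredatabricks.net",
    "/sql/1.0/warehouses/abc123def4567890",
)
bad = check_connection_details("https://example.cloud.databricks.com/", "warehouses/abc")
print(ok)
print(bad)
```

A check like this only catches formatting mistakes. It does not confirm that the cluster or SQL warehouse is running or that your credentials are valid.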

Recent updates in 2025 have improved the Power BI Databricks connector, significantly enhancing performance and user experience. For instance, performance metrics indicate that querying a dataset of 2.4 terabytes organized into 200 partitions averages just 2.12 seconds for visual generation, showcasing the efficiency of the lakehouse architecture. However, challenges persist, such as time-consuming report creation and inconsistencies, which can hinder actionable insights.

Real-world examples demonstrate the effectiveness of this integration. A notable case study involved creating a Delta table with sample student information in the platform, illustrating how users can efficiently store and query structured information. This capability not only simplifies information management but also improves analytical processes, tackling the common challenges encountered in utilizing insights from BI dashboards.

To ensure a seamless connection, consider best practices from data integration specialists, such as verifying your connection settings and regularly updating your BI and analytics environments to leverage the latest features and improvements. As Sravan Voona noted, “We strongly believe that the Engine might be encountering issues in parsing the large query (with numerous OR clauses) and generating the execution plan,” an observation worth keeping in mind when troubleshooting slow or failing queries. By following these steps and recommendations, you can effectively connect Databricks to Power BI, unlocking powerful insights and driving data-driven decision-making.

Moreover, utilizing tailored RPA solutions such as EMMA RPA and Automate from Creatum GmbH can improve data quality and streamline the integration process, aligning with our dedication to fostering growth and innovation. Additionally, addressing the lack of data-driven insights through this integration is essential for informed decision-making.

Creating Visualizations in Power BI Using Databricks Data

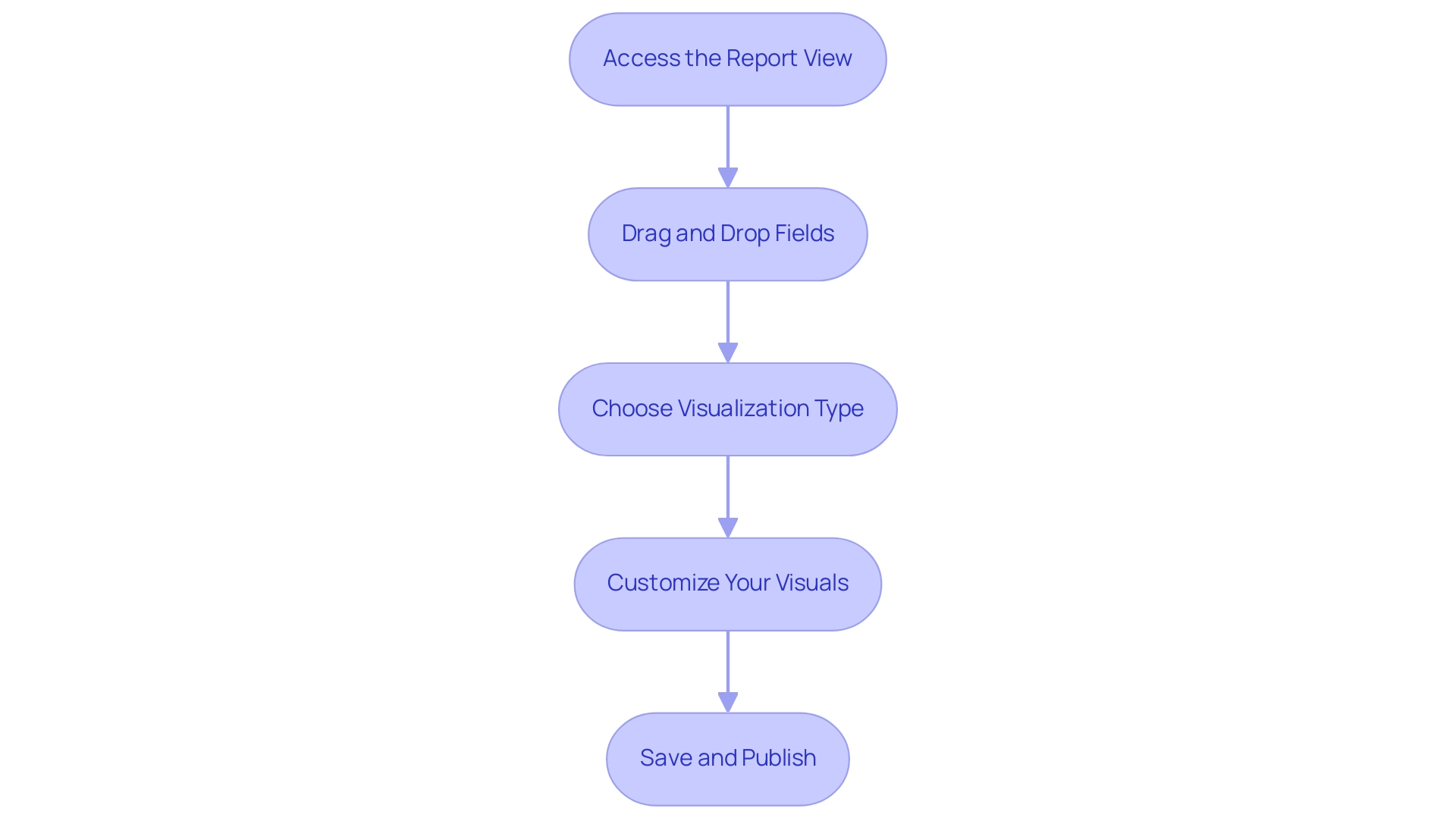

Once you have successfully established a connection between Databricks and Power BI, you can create impactful visualizations by following these streamlined steps:

- Access the Report View: Begin by navigating to the ‘Report’ view within Power BI, where you can design your visualizations effectively.

- Drag and Drop Fields: Select the relevant fields from the data model built on your Databricks tables and drag them onto the report canvas. This intuitive action allows for swift visualization of your data, addressing the common challenge of time-consuming report creation.

- Choose Visualization Type: Utilize the visualization pane to select your desired chart or graph type, such as bar charts, line graphs, or advanced options like heatmaps and 3D maps. These can be enhanced using Mapbox and ArcGIS for customizable spatial analytics. Power BI offers two types of cards: Single numbers and Multi-row cards, providing versatile options for displaying key metrics.

- Customize Your Visuals in Power BI Databricks: Tailor your visualizations by adjusting formatting options in the visualization pane. This includes condition-based formatting, which significantly enhances the interpretability of your data. The Advanced Card visual in Power BI features condition-based formatting, support for prefixes and postfixes, and customizable content alignment, enriching your visualizations and ensuring consistency.

- Save and Publish: After finalizing your report, save your work and publish it to the Power BI service. This crucial step ensures that your insights are easily shareable with stakeholders, fostering improved communication and collaboration across teams.

In 2025, the emphasis on information visualization continues to rise, with statistics indicating that organizations utilizing visual tools experience a substantial enhancement in decision-making efficiency. Real-world examples illustrate that effective visualizations not only improve understanding but also drive business performance by aligning teams and reducing silos. For instance, the case study titled ‘Enhanced Communication through Visualization’ demonstrates how visualizing information fosters communication and collaboration between teams, thereby enhancing overall organizational efficiency.

By bringing Databricks data into Power BI, you can unlock the full potential of your data, transforming it into actionable insights that propel your organization forward. As Satyam Chaturvedi, Digital Marketing Manager, states, “By collaborating with Arka Softwares, you can confidently access the full functionalities of BI, unlocking the inherent value within your data and maximizing its potential.”

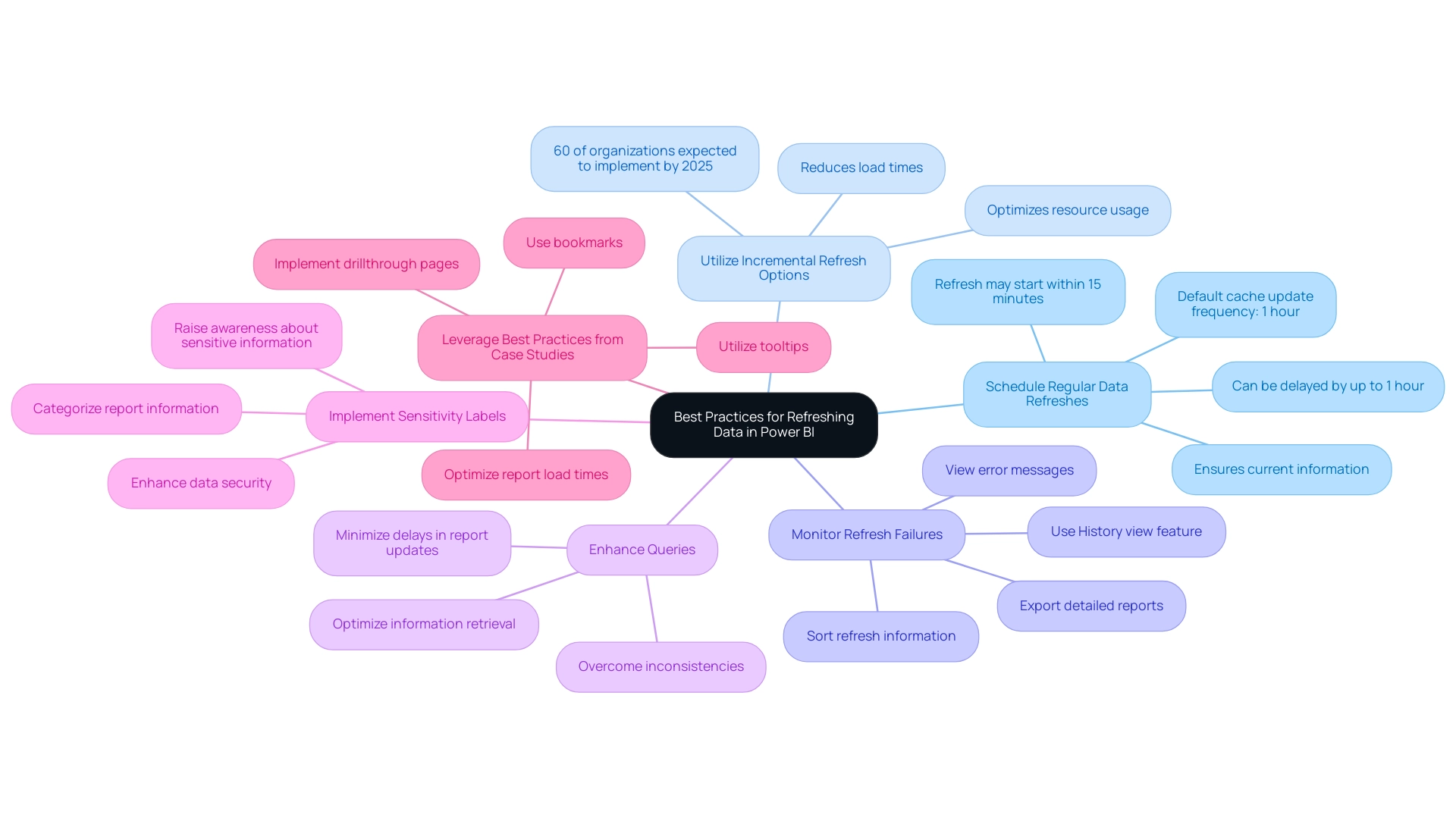

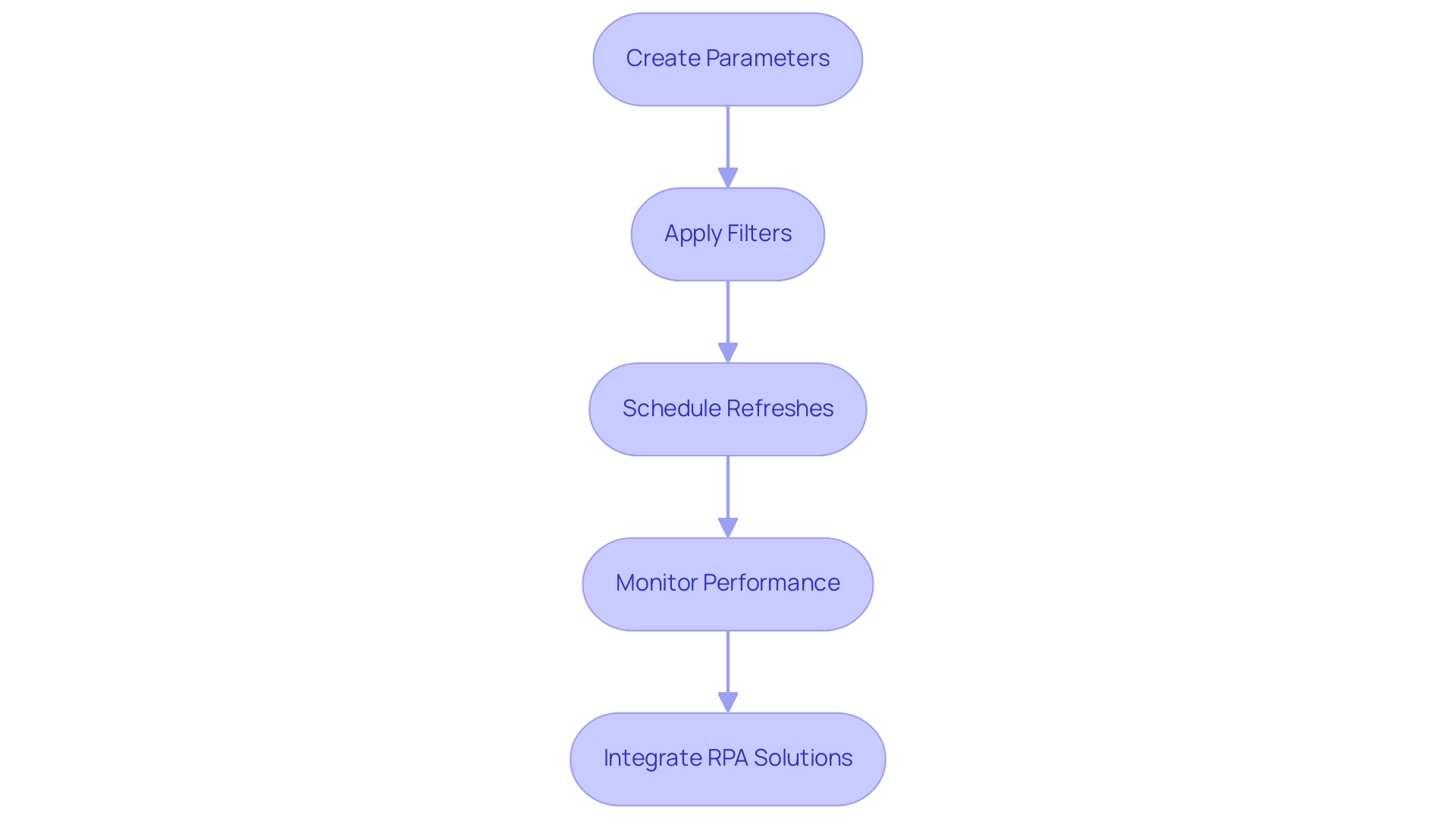

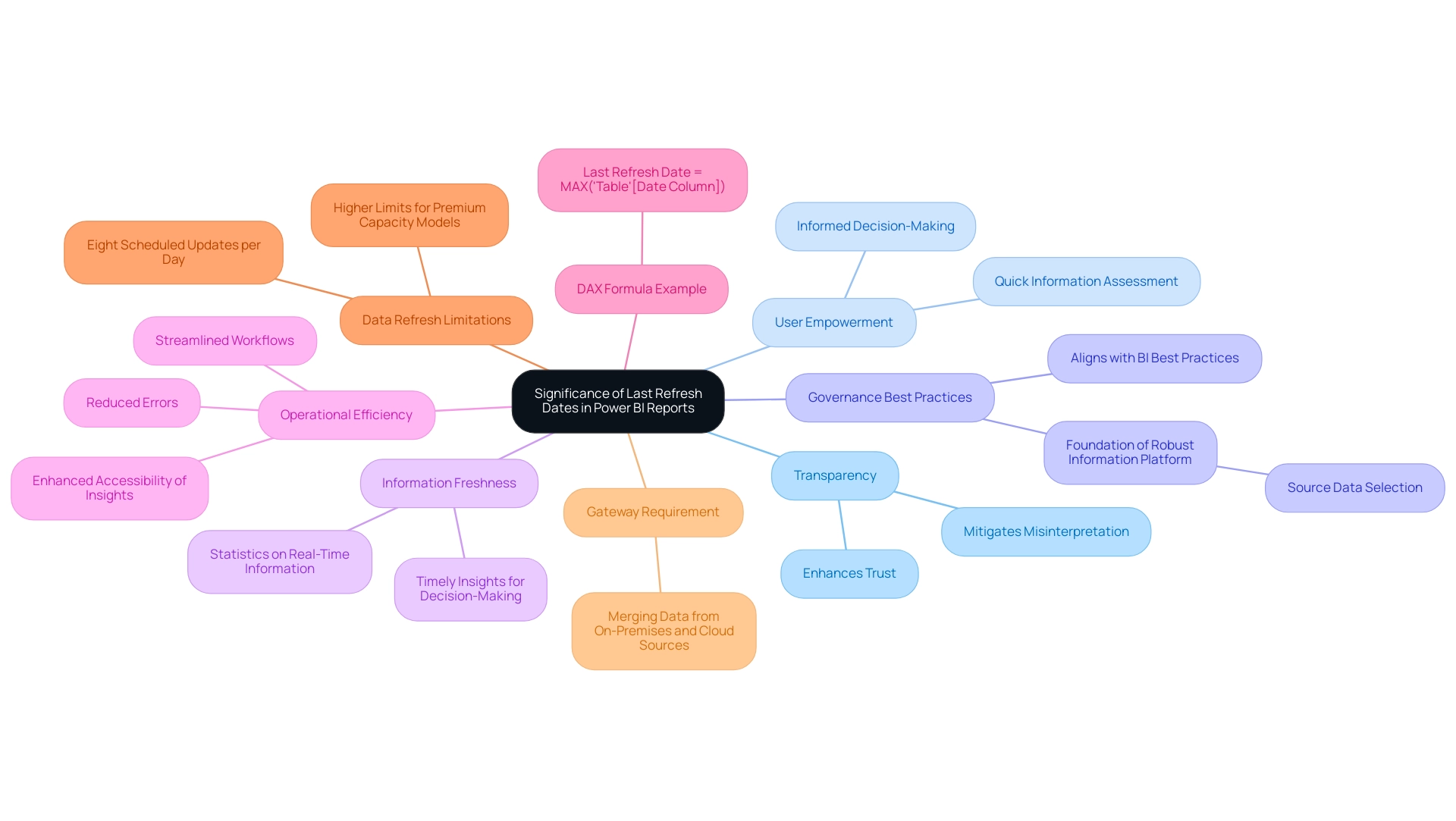

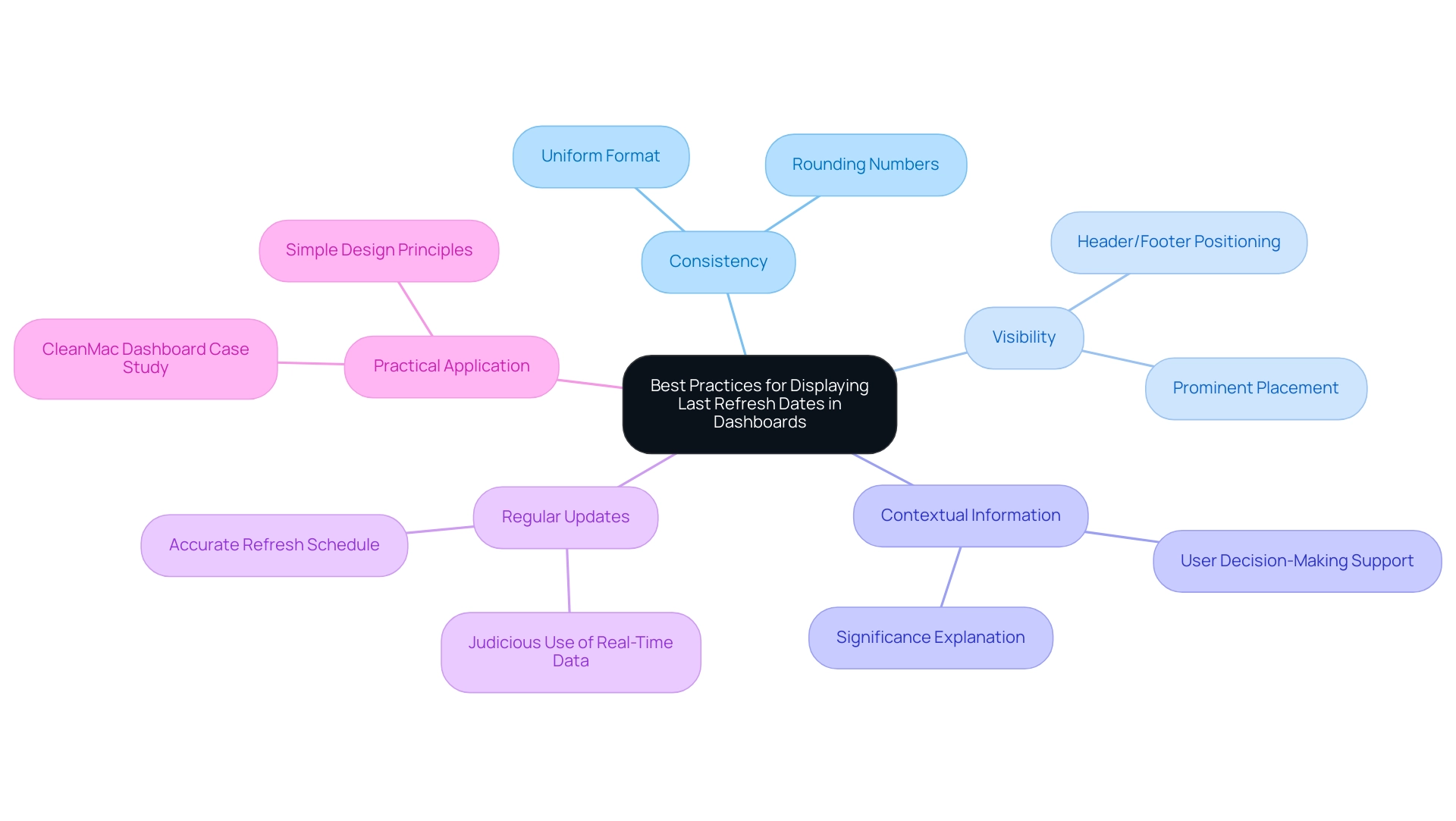

Refreshing Data in Power BI: Best Practices for Databricks Integration

To ensure that Power BI reports built on Databricks data deliver timely and relevant insights, an effective refresh strategy is essential.

- Schedule Regular Data Refreshes: Establish a routine for refreshing data in Power BI. This ensures that your reports consistently reflect the most current information, enhancing decision-making capabilities. Note that the Power BI cache update frequency is set to one hour by default; while the actual refresh might start within 15 minutes of the scheduled time, it can be delayed by up to one hour.

- Utilize Incremental Refresh Options: For organizations managing large datasets, incremental refresh can significantly enhance performance. This approach loads only new or modified data, reducing load times and optimizing resource usage. In 2025, around 60% of organizations are anticipated to implement incremental refresh strategies in Power BI, emphasizing its increasing significance in data management.

- Monitor Refresh Failures: Regularly track the outcomes of scheduled refreshes using the History view feature in Power BI. This tool enables administrators to sort refresh information, view error messages, and export detailed reports, facilitating the prompt resolution of any issues that arise. Addressing these challenges is essential for leveraging insights effectively and avoiding time-consuming report creation.

- Optimize Queries: Efficient data retrieval is crucial during refresh operations. By optimizing the queries that Power BI sends to Databricks, organizations can ensure that data is fetched quickly, minimizing delays in report updates. This optimization is vital for overcoming inconsistencies that can hinder actionable guidance.

- Implement Sensitivity Labels: Categorizing report information by business impact using sensitivity labels not only enhances security but also raises awareness among users about the importance of handling sensitive information appropriately.

- Leverage Best Practices from Case Studies: Strategies such as using bookmarks, drillthrough pages, and tooltips have been shown to optimize report load times, particularly on landing pages where quick access to information is critical. A case study on optimizing report load times demonstrates how these strategies improve page load speeds, user experience, and engagement with reports.
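Incremental refresh in Power BI is driven by two date/time parameters, `RangeStart` and `RangeEnd`, which bound the slice of data loaded for each partition. The filtering idea can be sketched in Python as follows. This is an illustration of the logic only, not Power BI's implementation: the function name, the `modified_at` column, and the sample rows are all hypothetical.

```python
from datetime import datetime


def select_rows_for_refresh(rows, range_start, range_end):
    """Return rows whose timestamp lies in the half-open window [range_start, range_end)."""
    return [row for row in rows if range_start <= row["modified_at"] < range_end]


# Made-up sample data standing in for a large fact table.
rows = [
    {"order_id": 1, "modified_at": datetime(2025, 1, 31, 23, 59)},
    {"order_id": 2, "modified_at": datetime(2025, 2, 1, 0, 0)},
    {"order_id": 3, "modified_at": datetime(2025, 2, 15, 12, 0)},
    {"order_id": 4, "modified_at": datetime(2025, 3, 1, 0, 0)},
]

# Refresh only the February slice instead of reloading every row.
february = select_rows_for_refresh(rows, datetime(2025, 2, 1), datetime(2025, 3, 1))
print([row["order_id"] for row in february])
```

The window is inclusive at the start and exclusive at the end so that a row sitting exactly on a boundary is loaded by one partition only, never by two.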

By adhering to these best practices, organizations can maximize the efficiency of their refresh processes in BI tools, ensuring that insights obtained from cloud-based services are both timely and actionable. This integration of Business Intelligence and RPA, including tools like EMMA RPA and Automate from Creatum GmbH, drives operational efficiency and supports business growth by transforming raw data into meaningful insights.

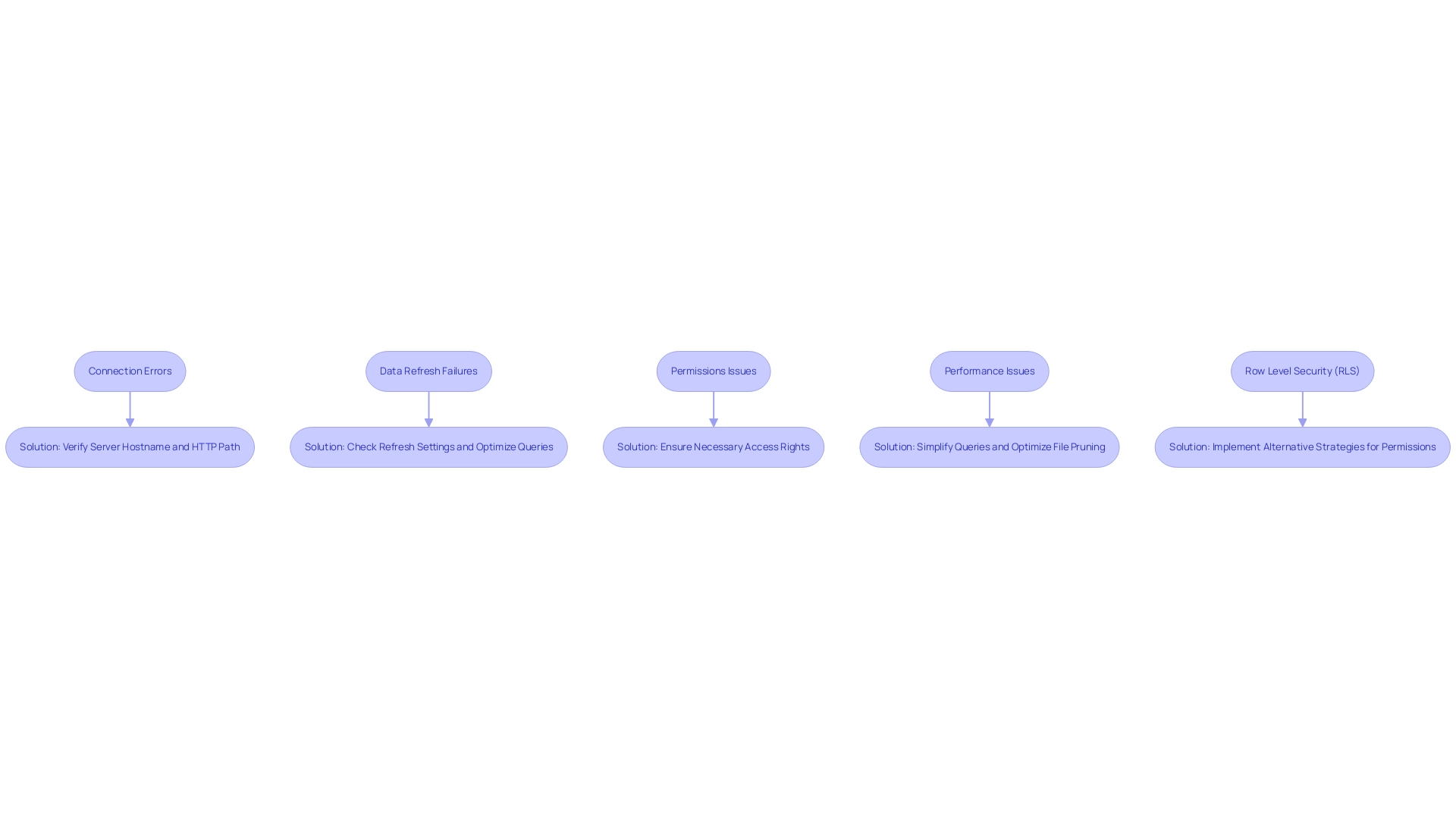

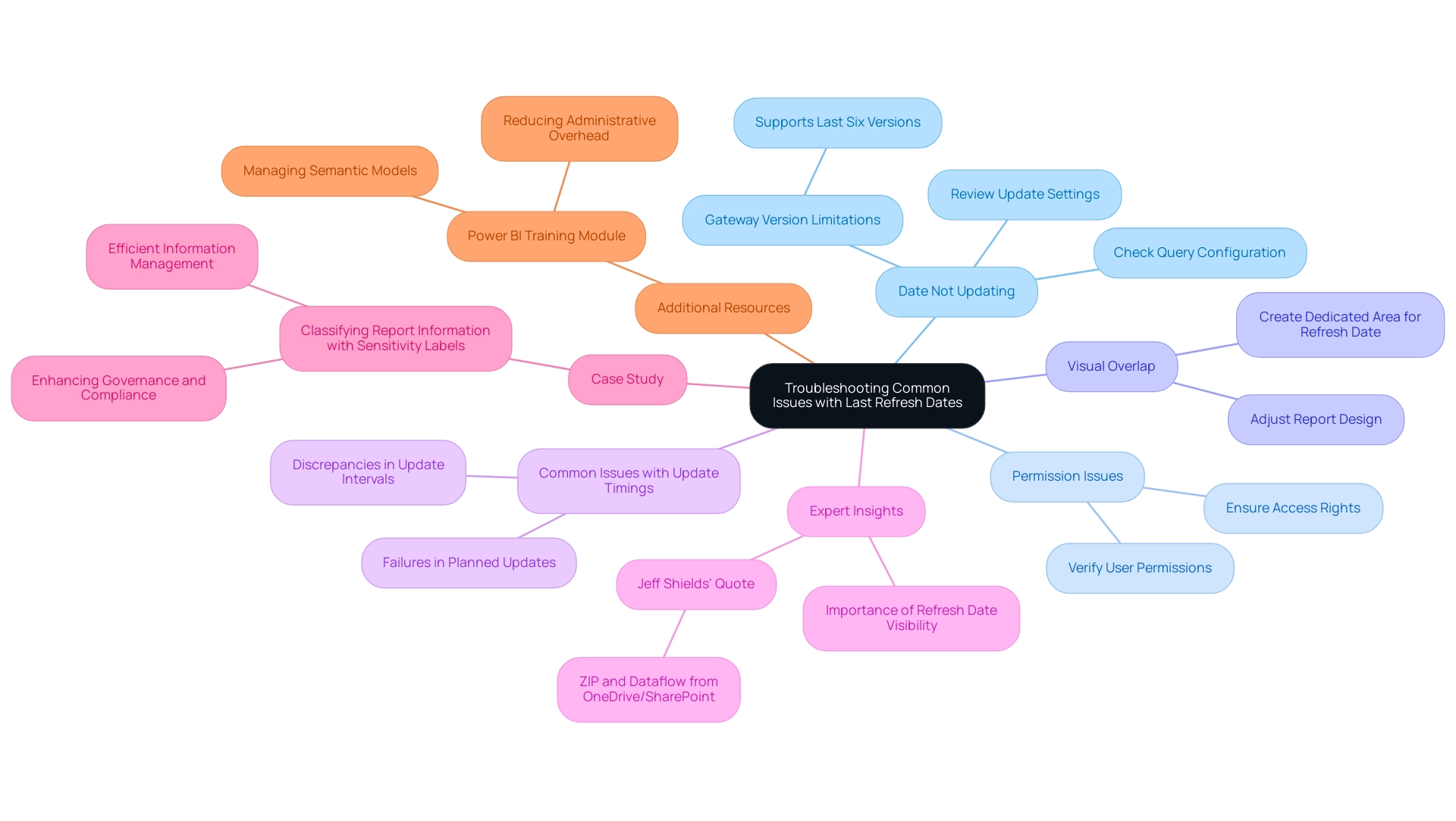

Troubleshooting Common Issues in Power BI and Databricks Integration

Integrating Power BI with Databricks can present several challenges that may hinder operational efficiency. Here are some common issues and their solutions:

- Connection Errors: A frequent issue arises when users encounter connection errors. To resolve this, ensure that your cluster is active and that you have entered the correct Server Hostname and HTTP Path. A significant percentage of users have reported experiencing connection errors, highlighting the importance of verifying these settings.

- Data Refresh Failures: Data refresh failures can disrupt the flow of information. It’s crucial to check your refresh settings and optimize your queries. For large datasets, consider turning off background refresh in the PBIX file settings, as recommended by support teams. Monitoring CPU and memory usage can also help identify bottlenecks during refresh operations. Additionally, checking local file settings in the PBIX file can further enhance refresh performance.

- Permissions Issues: Permissions can often be a roadblock. Ensure that you have the necessary access rights to the data stored in Databricks. It’s crucial to recognize that Row Level Security (RLS) cannot be implemented using a token for Power BI connections to Databricks. Without the correct permissions, even the most optimized queries will fail to execute.

- Performance Issues: Performance can degrade significantly when Power BI generates overly complex queries, particularly those with numerous OR clauses. These queries can exceed 8,000 lines of code, leading to execution delays and cancellations before a plan is generated. As noted by Sravan Voona, the engine might encounter issues in parsing such large queries and generating the execution plan. To mitigate this, consider preprocessing data in the platform, using views, and optimizing file pruning to simplify queries. Users have faced execution times exceeding four minutes due to these complexities, underscoring the need for optimization. The case study titled “Power Bi Performance with Azure” highlights these challenges, illustrating the performance issues stemming from complex queries.

- Row Level Security (RLS): It’s important to note that Row Level Security cannot be applied using a token for connections to Databricks. This limitation can complicate data governance and access control, necessitating alternative strategies for managing user permissions.
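To make the performance point concrete, the sketch below contrasts a long chain of OR predicates with an equivalent, more compact `IN` predicate of the kind you might write by hand when defining a preprocessing view. It is an illustration under stated assumptions: the helper names are hypothetical, the quoting is deliberately simplistic, and whether the shorter form actually runs faster depends on the engine and the data.

```python
def sql_literal(value):
    """Render a Python value as a simple SQL literal (strings are single-quoted)."""
    if isinstance(value, (int, float)) and not isinstance(value, bool):
        return str(value)
    return "'" + str(value).replace("'", "''") + "'"


def or_chain(column, values):
    """Build the verbose form: column = a OR column = b OR ..."""
    return " OR ".join(f"{column} = {sql_literal(v)}" for v in values)


def in_list(column, values):
    """Build the compact form: column IN (a, b, ...)."""
    return f"{column} IN ({', '.join(sql_literal(v) for v in values)})"


regions = ["EU", "US", "APAC"]
print(or_chain("region", regions))
print(in_list("region", regions))
```

With three values the difference is cosmetic, but the OR chain grows by one full predicate per value, which is how generated queries can reach thousands of lines.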

By tackling these frequent challenges with specific remedies, organizations can improve their integration of Power BI with Databricks, ultimately converting raw data into practical insights for informed decision-making. This aligns with our mission at Creatum GmbH to address the challenges of manual, repetitive tasks that hinder operational efficiency, allowing teams to focus on more strategic, value-adding activities. Leveraging Robotic Process Automation (RPA) can further streamline these workflows, enhancing overall operational efficiency in a rapidly evolving AI landscape.

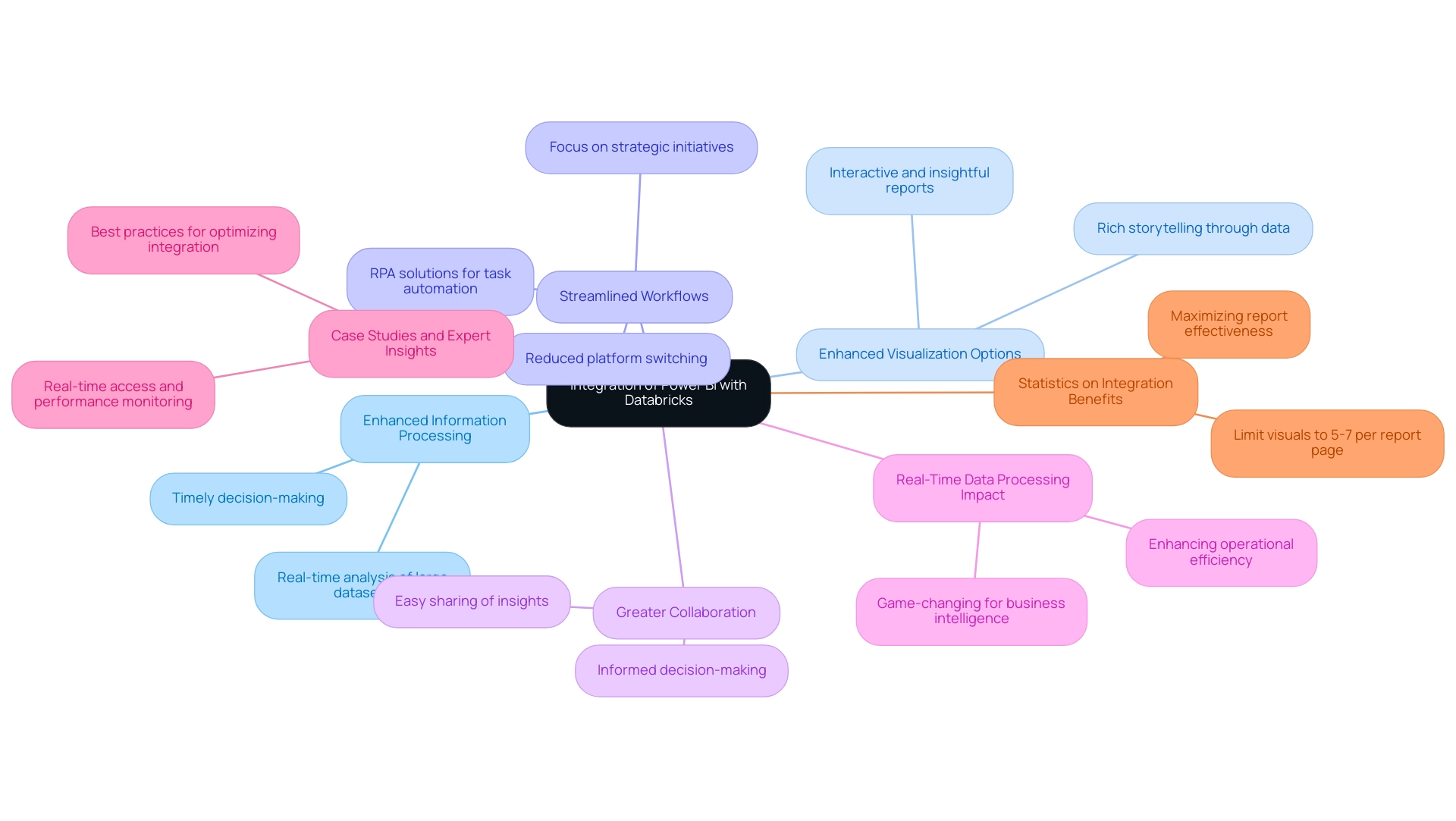

The Benefits of Integrating Power BI with Databricks for Enhanced Business Intelligence

Integrating Power BI with Databricks presents a multitude of advantages that significantly enhance business intelligence capabilities:

- Enhanced Information Processing Capabilities: This integration enables the analysis of large datasets in real-time, allowing organizations to make timely decisions based on the most current information. In today’s fast-paced business environment, timely insights can lead to competitive advantages.

- Enhanced Visualization Options: Users can generate interactive and insightful reports that not only showcase information but also tell a story. Combining Power BI’s strong visualization features with the advanced analytical capabilities of Databricks produces reports that are visually attractive and rich in insights, improving comprehension and communication of findings.

- Streamlined Workflows: Leveraging Databricks’ powerful analytics features directly within Power BI allows users to streamline their workflows. This integration reduces the need for switching between platforms, enhancing productivity and enabling teams to focus on strategic initiatives rather than repetitive tasks. Furthermore, Robotic Process Automation (RPA) solutions from Creatum GmbH can address task repetition fatigue, enhancing operational efficiency and boosting employee morale by automating manual workflows.

- Greater Collaboration Across Teams: Power BI dashboards facilitate easy sharing of insights, fostering collaboration among teams. This collaborative environment ensures that all stakeholders have access to the same information and insights, promoting informed decision-making across the organization.

- Real-Time Data Processing Impact: In 2025, the ability to process data in real-time will be a game-changer for business intelligence. Organizations harnessing this capability will not only improve operational efficiency but also enhance employee morale by reducing task repetition fatigue, enabling teams to focus on more value-adding activities. As Kyle Hale, Azure Solution Architect at the company, notes, ‘This is a really good article.’ It’s encouraging to see the positive impact of such integrations highlighted.

- Connector Best Practices: Case studies and expert insights on optimizing the Power BI Databricks connector emphasize the significance of real-time access and performance monitoring. Implementing these practices can lead to enhanced analytical capabilities, driving growth and innovation. Experts in the field assert that integrating these tools is essential for organizations aiming to stay ahead in a data-driven landscape.

- Report Design Guidance: It is recommended to limit visuals to five to seven per report page to optimize performance, ensuring that reports remain clear and actionable. This guideline underscores the importance of careful information presentation in maximizing the effectiveness of business intelligence tools.

In summary, the integration of Power BI and Databricks not only enhances analysis but also transforms how companies approach business intelligence, making it a vital strategy for success in 2025. Additionally, tailored AI solutions from Creatum GmbH can further support organizations in overcoming technology implementation challenges, ensuring they leverage the full potential of their data.

Conclusion

The integration of Power BI and Databricks signals a transformative shift in how organizations approach data analytics and business intelligence. By leveraging the robust visualization capabilities of Power BI alongside the powerful data processing features of Databricks, businesses can unlock deeper insights and drive strategic decision-making. This synergy enhances data accessibility and operational efficiency while fostering collaboration across teams, ensuring that all stakeholders are equipped with the same information to make informed choices.

Key benefits of this integration include:

- Improved data processing capabilities, enabling real-time analysis of large datasets

- A variety of visualization options that convert complex data into compelling narratives

As organizations strive to remain competitive in a rapidly evolving digital landscape, effectively leveraging these tools becomes crucial. Implementing best practices for data refresh, troubleshooting common issues, and optimizing queries can further enhance the overall experience and output of analytics initiatives.

Ultimately, the collaboration between Power BI and Databricks transcends mere technology; it empowers organizations to transform raw data into actionable insights that drive growth and innovation. As businesses navigate the complexities of data, embracing this integration will be essential for cultivating a data-driven culture that prioritizes informed decision-making and operational excellence.

Frequently Asked Questions

What is the primary function of the BI tool mentioned in the article?

The BI tool serves as a robust business analytics instrument that empowers users to visualize information and share insights across their organizations, facilitating informed decision-making through interactive dashboards and comprehensive reporting capabilities.

How does the platform differ from traditional BI tools?

The platform is an advanced analytics system tailored for large-scale information and machine learning, leveraging the capabilities of Apache Spark, which allows for more extensive data processing compared to traditional BI tools.

What benefits arise from integrating BI tools with analytics platforms?

Integrating BI tools with analytics platforms enhances accessibility, scalability, and performance, enabling organizations to manage extensive datasets and extract deeper insights, which drives informed strategies and operational improvements.

What challenges do companies face when utilizing analytics tools?

Companies often encounter challenges such as tool complexity, slow query performance, and errors, which hinder their ability to effectively utilize analytics.

What services does the article mention to help organizations with reporting and information consistency?

The article mentions BI services that include a 3-Day Sprint for rapid report creation and a General Management App for comprehensive management and smart reviews.

What recent updates have been made to the Databricks analytics platform?

Recent updates to the Databricks analytics platform enhance its capabilities by streamlining data processing and analytics workflows, contributing to a powerful ecosystem for data-driven decision-making.

What is the significance of the projected growth of the social business intelligence market?

The social business intelligence market is projected to reach approximately $25.9 billion by 2025, indicating the increasing importance of tools like Power BI and Databricks for organizations aiming to remain competitive.

What prerequisites are necessary to connect Power BI to Databricks?

The prerequisites include having an active account with access to a workspace, installing the latest version of Power BI Desktop, setting up a cluster or SQL warehouse, confirming data access permissions, and installing the latest ODBC driver if using ODBC for the connection.

How can organizations overcome common challenges in report creation and information integration?

By adhering to the prerequisites for connecting Power BI to Databricks, organizations can streamline the connection process, which helps overcome challenges like time-consuming report creation and inconsistencies.

What additional technologies can enhance the integration process?

Integrating Robotic Process Automation (RPA) can streamline the integration process by automating repetitive tasks, and customized AI solutions can address specific challenges associated with information integration and reporting.

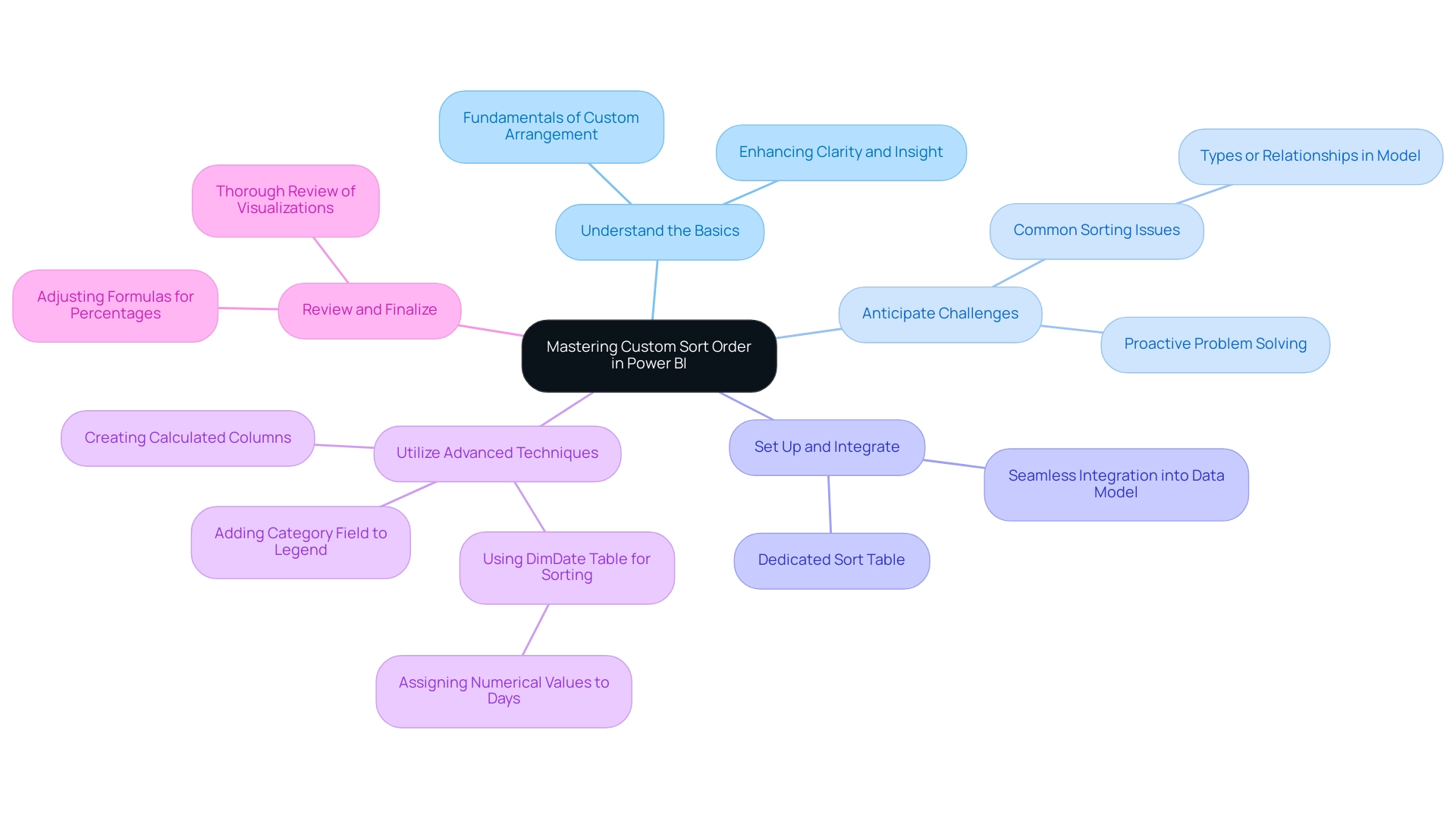

Overview

Mastering custom sort order in Power BI is essential for presenting information in a meaningful sequence. By creating a dedicated sort table and effectively integrating it into your data model, you can significantly enhance data visualization clarity and operational efficiency. This practice not only improves the overall presentation of data but also addresses common challenges users encounter, such as data inconsistencies. Furthermore, it paves the way for advanced sorting techniques, including calculated columns. Are you ready to elevate your data presentation skills?

Introduction

In the dynamic realm of data visualization, Power BI emerges as a formidable tool, empowering users to customize their data presentations to fulfill specific analytical requirements. Custom sorting, a pivotal feature of Power BI, facilitates the organization of data beyond conventional alphabetical or numerical sequences, thereby ensuring that insights are communicated with clarity and impact.

As organizations increasingly depend on data-driven decision-making, mastering the nuances of custom sorting becomes imperative for developing reports that not only inform but also resonate with stakeholders. This article explores the critical role of custom sorting in Power BI, addressing common challenges users encounter, providing practical steps for establishing sort tables, and unveiling advanced techniques that can elevate data analysis to unprecedented levels.

By grasping these concepts, users can refine their data storytelling abilities, fostering enhanced decision-making and operational efficiency in a fiercely competitive landscape.

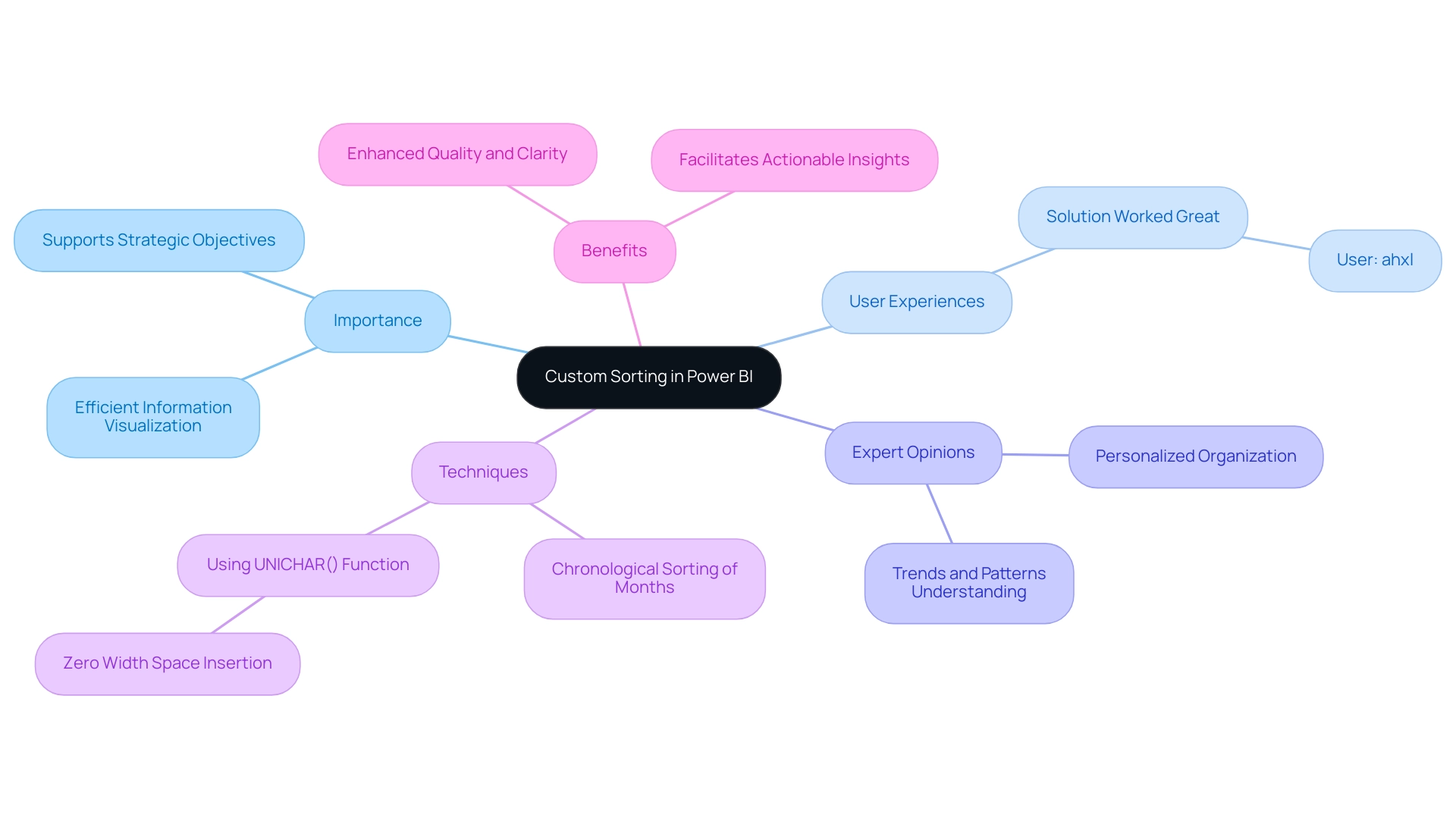

Understanding Custom Sorting in Power BI

The Power BI custom sort order empowers users to dictate the arrangement of information in reports and dashboards, transcending the limitations of default alphabetical or numerical organization. This capability is crucial when the inherent order of information diverges from the desired presentation. For instance, employing Power BI custom sort order ensures that months are arranged chronologically rather than alphabetically. In 2025, the importance of custom arrangement has been underscored by numerous discussions, with one post attracting over 340,426 views as users sought solutions for organizational challenges, particularly with calculated columns.

This statistic highlights the growing recognition of customized organization’s role in efficient information visualization, aligning with the demand for personalized AI solutions that navigate the overwhelming choices present in the market.

Expert opinions affirm that effective personalized organization is essential for information visualization, enabling a more intuitive understanding of trends and patterns. A regular visitor, ahxl, remarked, “This solution worked great for a situation where I had months – August, September, and October not displaying in the correct order in my visual,” illustrating the practical challenges users encounter. A case study further demonstrated how the UNICHAR() function was utilized to establish a custom sort order in a table, successfully grouping ‘Bad’ and ‘Warning’ statuses while preserving a specific sequence.

By inserting a ‘Zero width space’ before text entries, the desired order was achieved without disrupting other visual elements.
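The month example above boils down to sorting by a separate key rather than by the label itself. The following Python sketch illustrates that idea outside Power BI. It is an analogy, not Power BI code, and the names `SORT_ORDER` and `months_in_visual` are hypothetical.

```python
MONTH_NAMES = [
    "January", "February", "March", "April", "May", "June",
    "July", "August", "September", "October", "November", "December",
]

# Sort table: each label is paired with a numeric sort order (January = 1 ... December = 12).
SORT_ORDER = {name: position for position, name in enumerate(MONTH_NAMES, start=1)}

months_in_visual = ["October", "August", "September"]

# Default behaviour: text labels are ordered alphabetically.
alphabetical = sorted(months_in_visual)

# Custom behaviour: labels are ordered by the numeric key from the sort table.
chronological = sorted(months_in_visual, key=SORT_ORDER.__getitem__)

print(alphabetical)
print(chronological)
```

In Power BI the same effect is achieved by sorting the label column by a numeric column, so the label stays readable while the number controls the order.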

The benefits of custom sorting extend to enhancing quality and clarity in visualizations, facilitating easier extraction of actionable insights for stakeholders. This is particularly vital in today’s information-rich environment, where organizations struggle to leverage insights from BI dashboards due to time-consuming report generation and information inconsistencies. Moreover, incorporating the Category field into the Legend can aid in visualizing how segments are categorized, thereby enhancing the clarity of presentations.

As organizations increasingly rely on information-driven decision-making, mastering custom sorting techniques in BI becomes essential for presenting narratives that resonate with audiences and support strategic objectives. This aligns with Creatum GmbH’s unique value in enhancing information quality and streamlining AI implementation, ultimately driving growth and innovation.

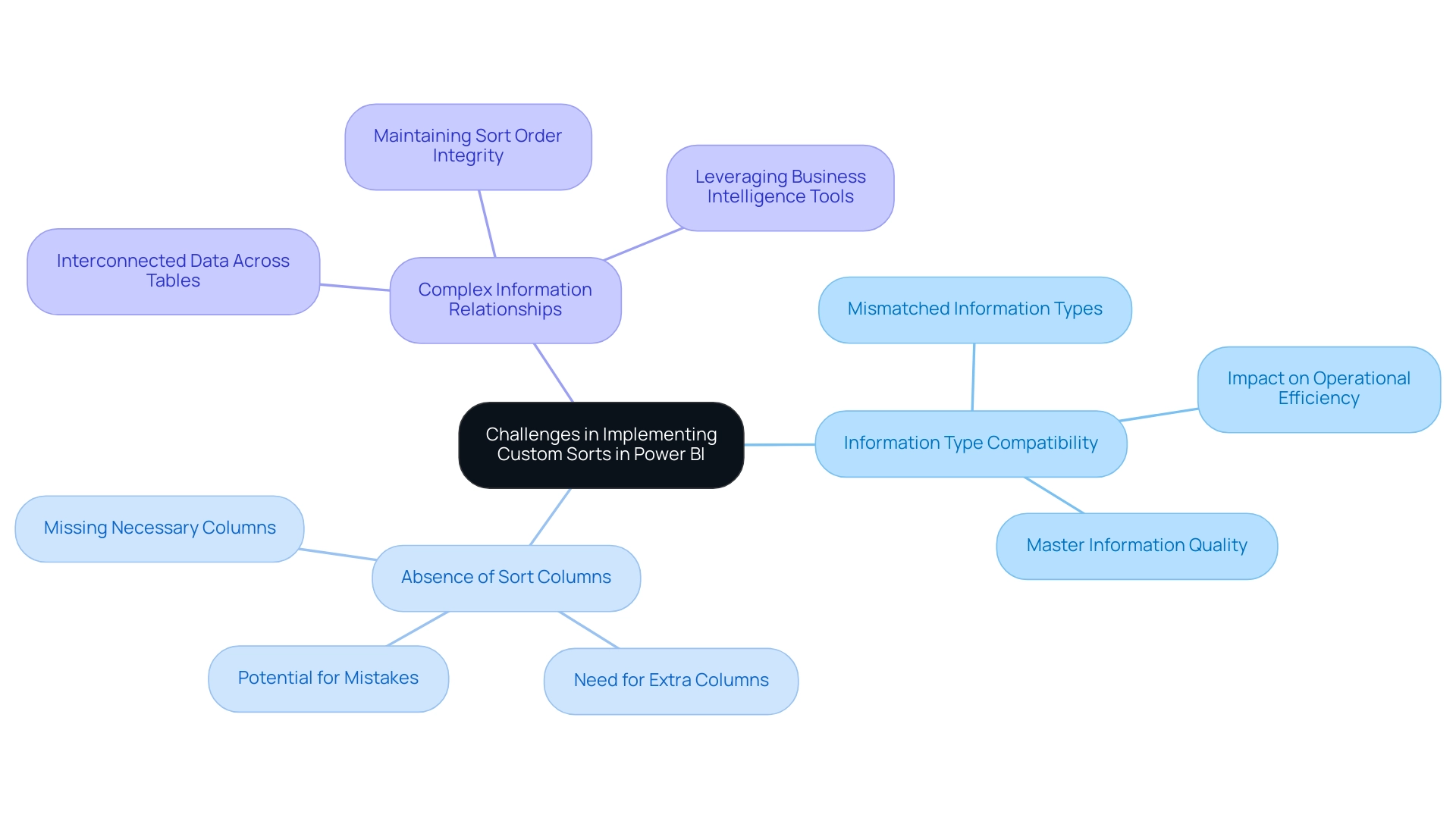

Common Challenges in Implementing Custom Sorts

Implementing a custom sort order in Power BI presents several challenges that users must navigate to achieve effective analysis. Key issues include:

- Data Type Compatibility: A significant hurdle is ensuring that the data types of the columns being sorted are compatible. Sorting text fields requires careful attention to detail; mismatched data types can lead to unexpected ordering. This issue is especially pressing in 2025, as organizations increasingly depend on various data sources that may not always align seamlessly. This reflects a broader challenge of poor master data quality, which can hinder operational efficiency and decision-making.

- Absence of Sort Columns: Users often encounter situations where the necessary columns for sorting are missing from the dataset. This limitation may require the creation of extra columns to enable the desired arrangement, complicating the preparation process and raising the potential for mistakes. Such challenges can be exacerbated by apprehension surrounding AI adoption, as organizations may hesitate to integrate advanced technologies that could streamline these processes.

- Complex Information Relationships: When information is interconnected across multiple tables, maintaining the integrity of sort orders becomes increasingly complex. Users must have a clear understanding of these relationships to ensure that sorting remains consistent and accurate across the entire dataset. This complexity underscores the importance of leveraging business intelligence tools effectively to transform raw data into actionable insights, enabling informed decision-making that drives growth and innovation.
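The data type problem in the first point is easy to reproduce. In this short Python sketch, numbers stored as text sort character by character, while converting them to integers restores the expected order. The sample values are made up, and Power BI's own sorting rules may differ in detail, but the underlying pitfall is the same.

```python
values_as_text = ["10", "2", "1", "21"]

# Text comparison goes character by character, so "10" lands before "2".
text_order = sorted(values_as_text)

# Converting to a numeric type gives the order most readers expect.
numeric_order = sorted(values_as_text, key=int)

print(text_order)
print(numeric_order)
```

Checking the column's data type before building a sort column avoids this class of surprise.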

Addressing these challenges is crucial for utilizing Power BI’s custom sort order to its full potential. Microsoft offers a 60-day trial of Power BI Pro, allowing users facing sorting challenges to explore its features. As Brian, an Enterprise DNA Expert, noted, “I hope you found it useful. I really enjoyed this challenge and learned an enormous amount from the experience, which I wanted to document in detail here for my own purposes as well.” This perspective highlights the value of understanding sorting challenges in Power BI.

Furthermore, a case study contrasting Power BI with Tableau emphasizes Power BI’s strengths in usability, which indirectly informs the discussion of sorting challenges by showcasing the tool’s overall benefits. The Power BI community also shares valuable experiences, tutorials, and tips for visual representation, encouraging users to seek additional resources and assistance. By recognizing and tackling these common issues, users can enhance their data visualization efforts and drive more informed decision-making.

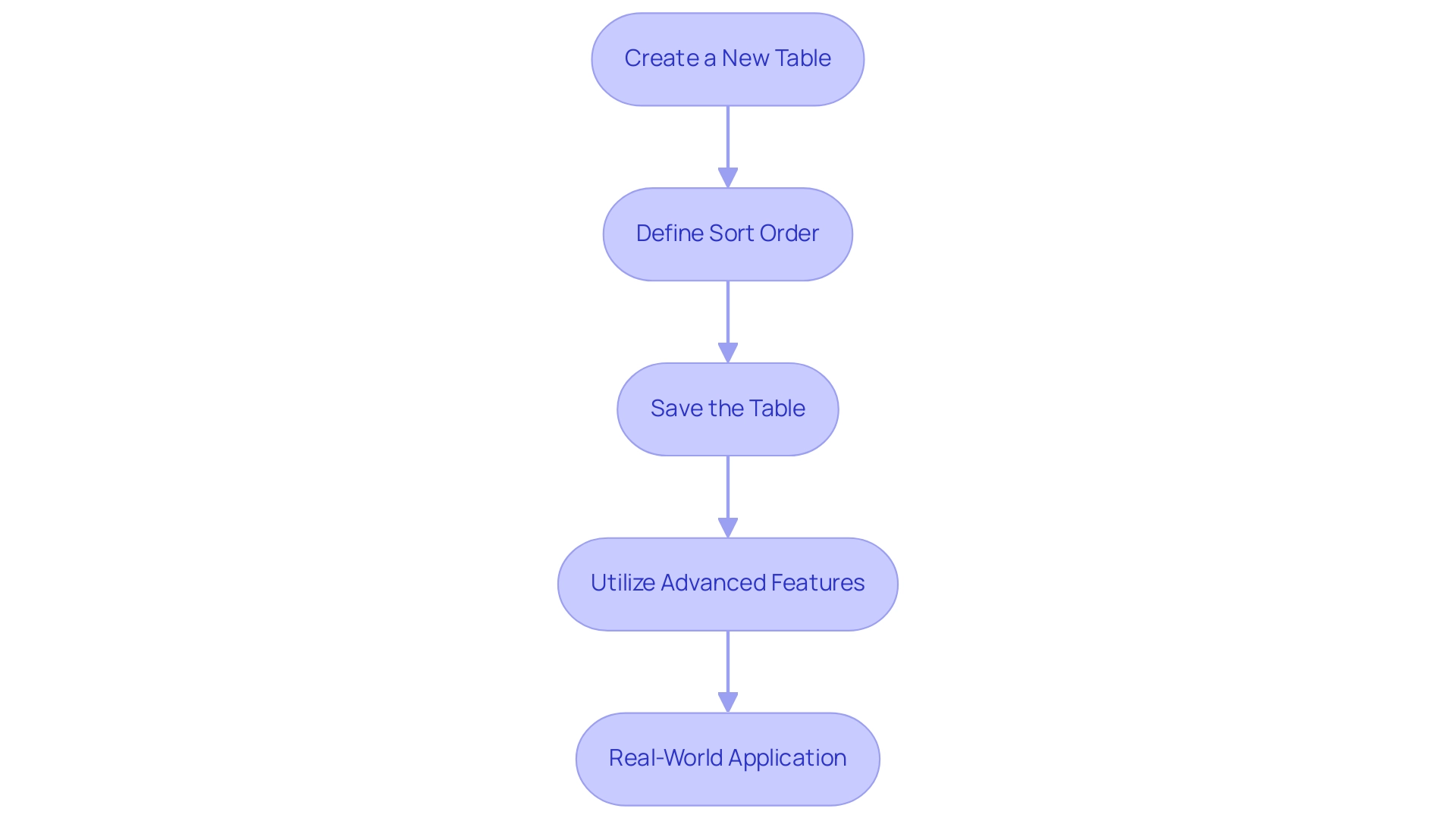

Setting Up a Sort Table for Custom Sorting

To effectively set up a sort table for custom sorting in Power BI, follow these detailed steps while keeping in mind the common challenges faced in leveraging insights from Power BI dashboards:

- Create a New Table: Start by navigating to the ‘Home’ tab in Power BI Desktop and selecting ‘Enter Data’. This lets you create a new table specifically for sorting. Ensure the table contains two essential columns: one for the values you wish to sort and another for the sort order. Setting this up once helps avoid the time-consuming report rework that often detracts from data analysis.

- Define Sort Order: In the sort order column, enter numeric values that reflect the desired sequence. For example, when ordering months, assign January the value 1, February the value 2, and continue this pattern through December. The same logic can place arbitrary categories, such as Rural, Urban, Mix, and Youth, in whatever sequence suits your presentation, which improves clarity and addresses inconsistencies that may arise from a lack of governance strategy.

- Save the Table: After populating your sort table, load it into the model and ensure it is related to your main data table. This relationship is essential for effective sorting and enables seamless analysis. Note that sharing a report with others generally requires a Power BI Pro license or a workspace in Premium capacity, so that stakeholders have access to consistent and reliable information.

- Utilize Advanced Features: Leverage Power BI’s capabilities, such as conditional formatting, to enhance your visualizations. This feature allows you to apply color gradients and icons based on numerical values, making your information more intuitive and engaging. As Keren Aharon observes, “This solution demonstrates how advanced DAX measures and nested sorting can be utilized in BI to enhance visualization and analysis,” offering practical guidance that is frequently absent in conventional reports. These advanced features specifically address the challenges of report creation and inconsistencies by allowing for more dynamic and insightful presentations of information.

- Real-World Application: Consider the case study of creating a new table named ‘Key’ in Power BI, which serves as a foundation for future calculations. This approach not only organizes data effectively but also prepares it for advanced analysis, showing the importance of a well-structured sort table in driving insights and operational efficiency.

By following these steps, you can establish a robust custom sort order in Power BI that enhances data visualization and analysis, ultimately driving better decision-making and addressing the challenges of report creation and data governance. At Creatum GmbH, we stress the significance of these strategies in enhancing your data processes.
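As an alternative to typing the values into ‘Enter Data’, the same two-column sort table can be sketched as a DAX calculated table. This is a minimal illustration rather than the only way to build it; the table name MonthSort and the column names Month and MonthOrder are hypothetical.

```dax
-- Hypothetical calculated table: one row per value, plus its position in the desired order.
MonthSort =
DATATABLE (
    "Month", STRING,
    "MonthOrder", INTEGER,
    {
        { "January", 1 },
        { "February", 2 },
        { "March", 3 },
        { "April", 4 },
        { "May", 5 },
        { "June", 6 },
        { "July", 7 },
        { "August", 8 },
        { "September", 9 },
        { "October", 10 },
        { "November", 11 },
        { "December", 12 }
    }
)
```

Each value appears once and maps to exactly one order number, which is what ‘Sort by Column’ expects.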

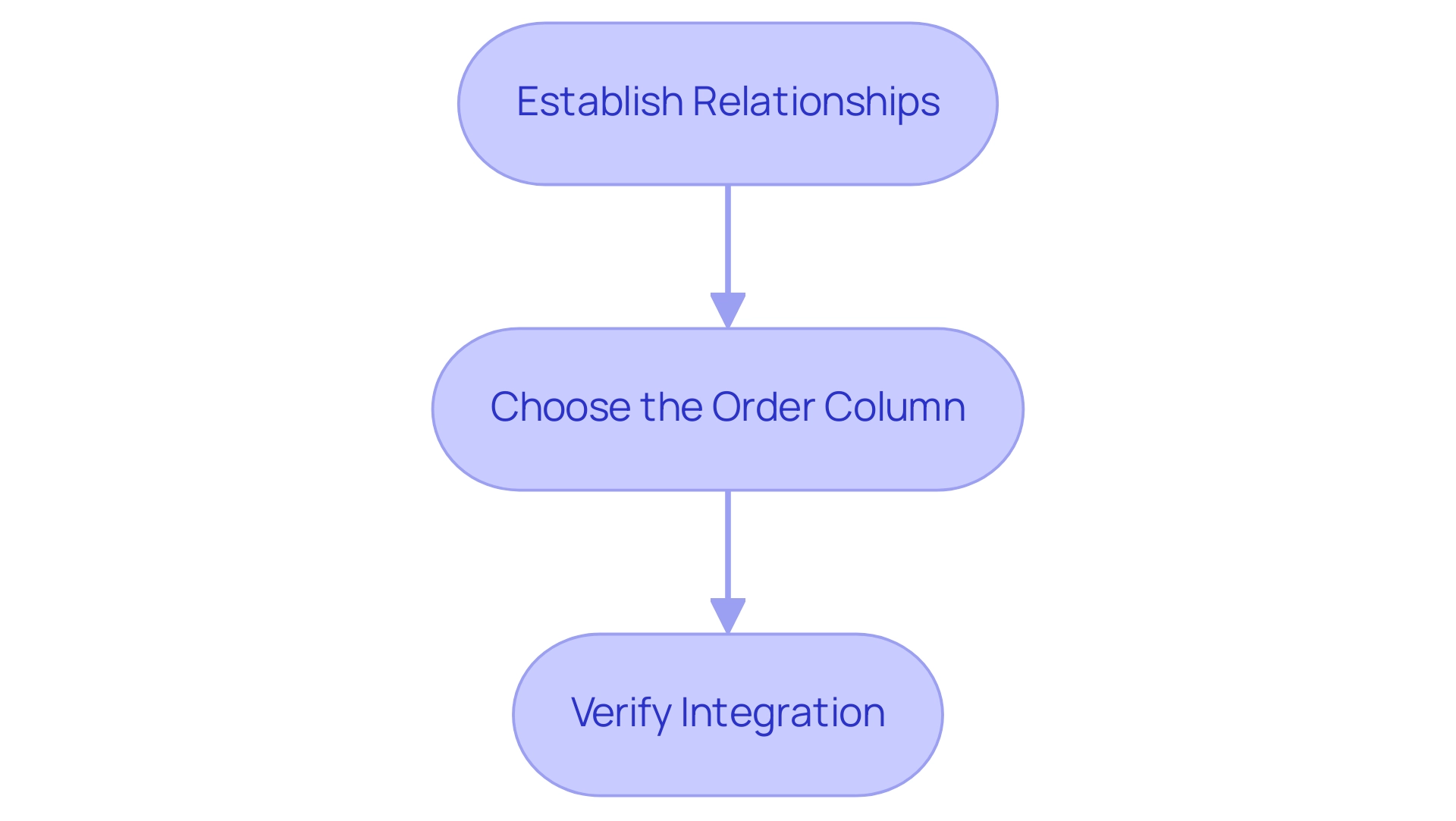

Integrating the Sort Table into Your Data Model

Incorporating a sort table into your Power BI data model is essential for efficient analysis, especially in today’s data-rich environment where leveraging Business Intelligence (BI) and Robotic Process Automation (RPA) is crucial for driving growth and innovation. Follow these steps to ensure seamless integration:

- Establish Relationships: Start by navigating to the ‘Model’ view and creating a relationship between your main data table and the sort table. The relationship should link the value column in your main table to the corresponding value column in the sort table, which is vital for applying the custom sort order accurately. Establishing these relationships not only enhances data integrity but also optimizes report performance. The star schema methodology, which organizes tables for analytical querying, supports this approach. As Hayley Hodges emphasizes, ‘The star schema is a well-established modeling technique commonly used in relational warehouses.’

- Choose the Order Column: Identify the column you wish to sort. Because ‘Sort by Column’ only accepts a sort column from the same table, select the value column in your sort table, navigate to the ‘Column tools’ tab, select ‘Sort by Column’, and choose the sort order column; then use the sort table’s value column in your visuals so that the related data follows that order. Alternatively, merge the sort order into your main table in Power Query and sort the main column by it there. This step is critical for presenting your data in a meaningful sequence that aligns with your analytical goals. Additionally, consider using Power BI’s conditional formatting options, such as color gradients and icons, to visually represent data values in tables.

- Verify Integration: After applying the sort order, confirm that the integration has been successful. Check your visualizations to ensure that the custom sort order is functioning as intended. This verification is crucial, as it affects the overall effectiveness of your analysis and decision-making.
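One way to support the verification step is to look for values in the main table that have no counterpart in the sort table, since those values cannot pick up a custom position. The sketch below assumes a hypothetical main table Sales with a Month column and a hypothetical sort table MonthSort with a matching Month column.

```dax
-- Hypothetical calculated table: values of Sales[Month] that are missing from MonthSort[Month].
-- An empty result means every value in the main table has a matching row in the sort table.
UnmatchedSortValues =
EXCEPT (
    DISTINCT ( Sales[Month] ),
    DISTINCT ( MonthSort[Month] )
)
```

If the table returns rows, adding those values to the sort table (or correcting their spelling in the source) is a reasonable first fix to try.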

In 2025, incorporating sort tables in Power BI models has grown increasingly important, with experts underscoring the need for robust relationships between tables to enable a custom sort order. Organizations that apply these techniques report enhanced clarity and operational efficiency. For instance, Shashanka Shekhar’s post on March 10, 2024, garnered 102 interactions, highlighting the relevance of the topic within the community.

Real-world examples illustrate that effective relationship management in Power BI enhances sorting capabilities, particularly with a custom sort order, driving better insights and more informed business decisions. Furthermore, employing a star schema can optimize analytical querying and improve the performance and maintainability of Power BI reports, addressing common challenges such as time-consuming report creation and data inconsistencies. By integrating RPA solutions like EMMA RPA and Automate, businesses can further streamline their processes, reduce manual effort, and enhance operational efficiency.

Finalizing Your Custom Sort Settings

To effectively finalize your custom sort settings in Power BI, follow these structured steps:

-

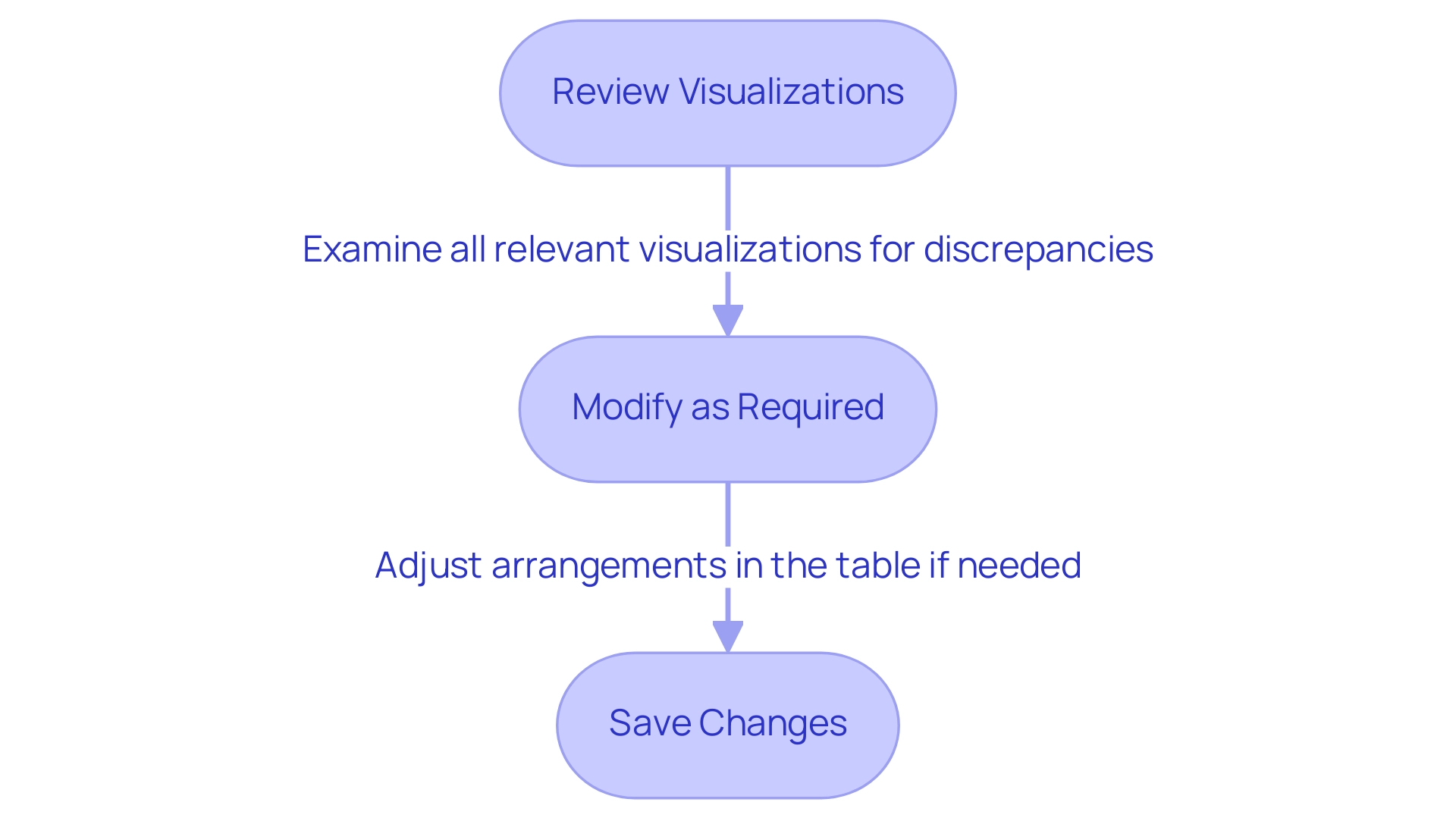

Review Visualizations: Begin by thoroughly examining all relevant visualizations to confirm that the custom arrangement is accurately applied. Pay close attention to any discrepancies in how information is presented, as this can significantly impact analysis outcomes and hinder your ability to leverage actionable insights from your dashboards.

- Modify as Required: If any visuals do not display the desired order, review the sort table and the relationships within your data model. Making these adjustments is crucial for ensuring consistency across your reports. Power BI administrators can enable or disable data model editing in the service for specific security groups, which helps maintain data integrity and address challenges like data inconsistencies.

- Save Changes: After confirming that your custom sort settings meet your expectations, save your Power BI report. This action preserves your adjustments, ensuring that your custom sorting remains intact for future analyses, thus streamlining the report creation process.

In 2025, the significance of examining visualizations cannot be overstated, as it directly affects the effectiveness of analysis. Organizations utilizing advanced analytics tools, such as Inforiver Analytics+, can leverage over 100 chart types to visualize information effectively, supporting use cases with more than 30,000 points. This capability highlights the necessity of meticulous review processes to enhance information clarity and usability, ultimately driving business growth through informed decision-making.

Real-world examples, such as the CleanMac Dashboard developed by Outcrowd, illustrate how thoughtful information architecture can aid users in navigating complex data without feeling overwhelmed. Additionally, adding the Category field to the Legend helps visualize how segments are categorized, further enhancing the clarity of your reports. As Patrick LeBlanc, Principal Program Manager, emphasizes, “We highly value your feedback, so please share your thoughts using the feedback forum.”

By adopting these practices, you can ensure that your Power BI visualizations not only display the correct sort order but also support informed decision-making, drawing on the full potential of Business Intelligence and RPA for operational efficiency.

Advanced Techniques: Using Calculated Columns for Sorting

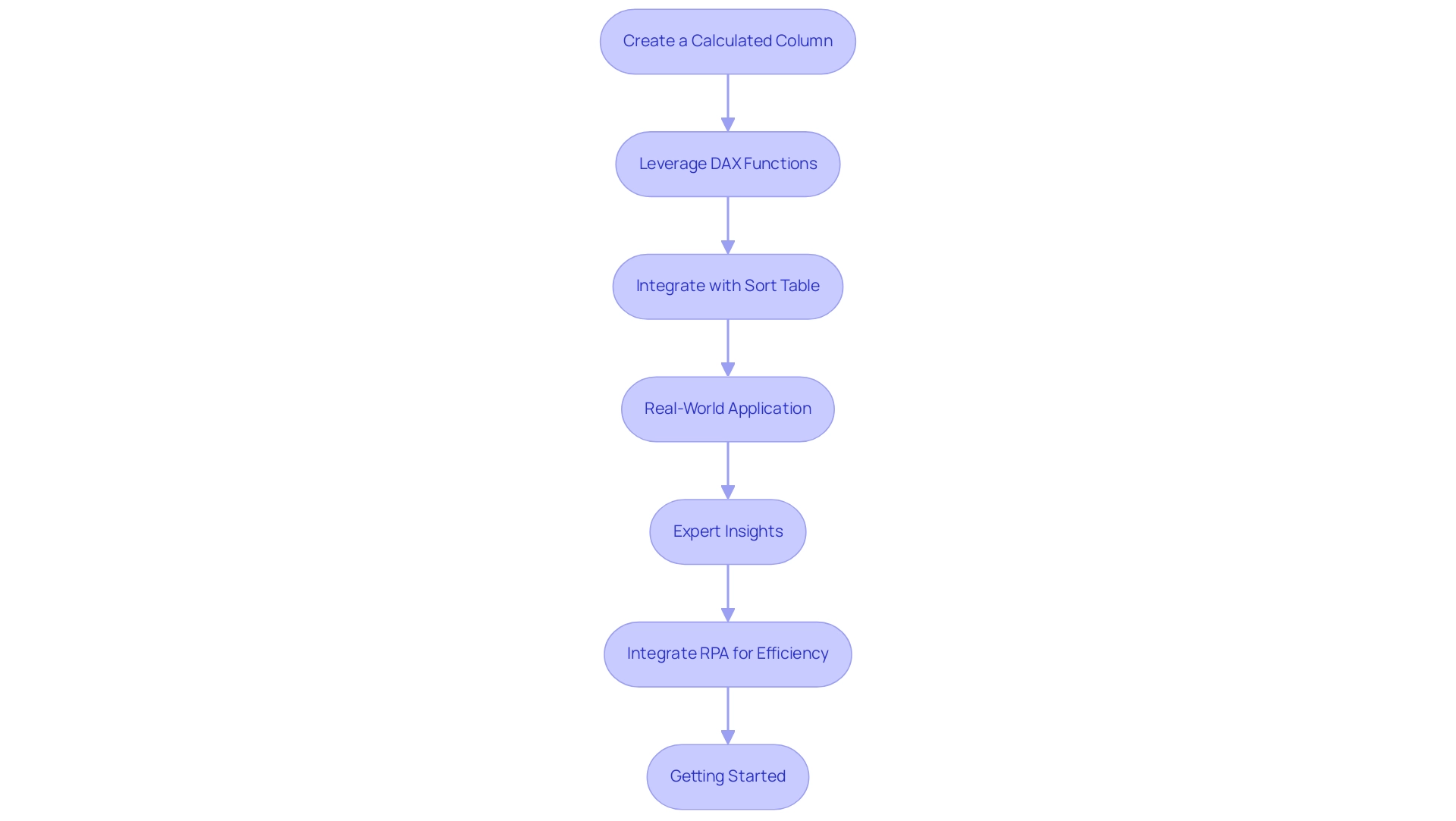

Advanced users can significantly extend custom sort order in Power BI by using calculated columns. Here’s a detailed approach to mastering this technique, while also addressing the challenges of time-consuming report creation and data inconsistencies that often hinder effective data analysis:

- Create a Calculated Column: Begin by adding a calculated column to your main table that defines the sort order based on criteria pertinent to your analysis. For instance, to sort products by sales figures, the calculated column could hold the total sales for each product. Keep in mind that ‘Sort by Column’ requires the sort column to have exactly one value for each distinct value of the column being sorted.

- Leverage DAX Functions: Use DAX (Data Analysis Expressions) functions to establish dynamic ordering logic. This approach enables more intricate sorting scenarios, such as sorting by multiple criteria at once. For example, you could order products first by category and then by sales within each category, enhancing the clarity of your visualizations and addressing the common lack of actionable guidance in Power BI dashboards.

- Integrate with Sort Table: Ensure that your calculated column is consistent with your sort table. This keeps the sort order the same across all visualizations and allows for a cohesive data presentation. It also avoids the discrepancies that can arise from manual ordering, improving operational efficiency.

- Real-World Application: A case study on nested sorting in Power BI bar charts illustrates the effectiveness of calculated fields. The study highlights the limitations of traditional sorting methods, such as the Shift + Click technique, and demonstrates how calculated columns can facilitate hierarchical sorting. By using calculated fields or ranking measures, users can ensure that visualizations reflect the desired order, overcoming the limits of conventional methods and improving data-driven decision-making.

- Expert Insights: Industry specialists, including Devin Knight, highlight the significance of mastering X-axis customization techniques for creating more meaningful visuals in Power BI. Knight states, “Driving adoption of technology through learning is essential for operational efficiency.” By adopting calculated columns for sorting, users can apply a custom sort order that strengthens their data storytelling and ultimately drives better decision-making.

- Integrate RPA for Efficiency: To further streamline the report creation process, consider integrating Robotic Process Automation (RPA) tools like EMMA RPA or Automate. These tools can automate repetitive tasks involved in data preparation and report generation, significantly reducing the time spent on manual processes and minimizing data inconsistencies. This integration not only enhances operational efficiency but also allows users to focus on deriving actionable insights from their BI reports.

- Getting Started: Users can create a Power BI report by opening Power BI Desktop and clicking ‘Get Data’ in the ‘Home’ tab, which provides a practical starting point for their analysis.

By implementing these advanced techniques and integrating RPA, users can transform their Power BI reports into effective tools for data analysis, ensuring that insights are not only accurate but also presented in a visually compelling manner. Mastering X-axis customization techniques is crucial for achieving meaningful visuals in Power BI, reinforcing the significance of BI and RPA in driving business growth.
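The calculated-column approach can be sketched as follows. The example assumes a hypothetical date table DimDate with a Date column; the column names DayName and DayOrder are likewise illustrative.

```dax
-- Calculated column: abbreviated day name shown in visuals ("Mon", "Tue", ...).
DayName = FORMAT ( DimDate[Date], "ddd" )

-- Calculated column: numeric position of the day, Monday = 1 through Sunday = 7.
-- Select DayName, then choose DayOrder under 'Sort by Column'.
DayOrder = WEEKDAY ( DimDate[Date], 2 )
```

Because every row with the same DayName also has the same DayOrder, the one-value-per-item requirement of ‘Sort by Column’ is met, and visuals using DayName should appear in weekday order rather than alphabetically.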

Key Takeaways for Mastering Custom Sort Order

To effectively master Power BI custom sort order, consider the following essential points.

- Understand the Basics: Grasping the fundamentals of custom sorting is crucial for effective data visualization. A custom sort order arranges data more intuitively, enhancing clarity and insight, which is essential in overcoming challenges related to poor master data quality.

- Anticipate Challenges: Recognizing potential sorting difficulties is vital. With 330,215 views on a user-reported sorting issue, such problems are clearly common; they often stem from data types or relationships within your model. Being ready to address these proactively can save time and improve results, especially in an environment where inconsistencies can obstruct effective decision-making.

- Set Up and Integrate: Establish a dedicated sort table and ensure it is seamlessly integrated into your data model. This integration is key to achieving the desired sort order across your visualizations, aligning with the need for tailored solutions that enhance operational efficiency.

- Utilize Advanced Techniques: Leverage advanced sorting methods, such as creating calculated columns. For example, using a DimDate table to assign numerical values to days of the week enables a more structured presentation of time-related data. This approach is demonstrated in case studies where users successfully sorted abbreviated day names. Additionally, adding the Category field to the Legend helps visualize how segments are categorized, providing clarity in your reports.

- Review and Finalize: Conduct thorough reviews of your visualizations. Finalizing your settings is essential to ensure that the data is presented accurately and effectively, which is particularly important in a data-rich environment where clarity is paramount. As noted by theblackknight, when calculating percentages for each category after sorting, you will need to adjust your formula to aggregate based on the new column created in the original table.